Synthetic Data Generation Platform

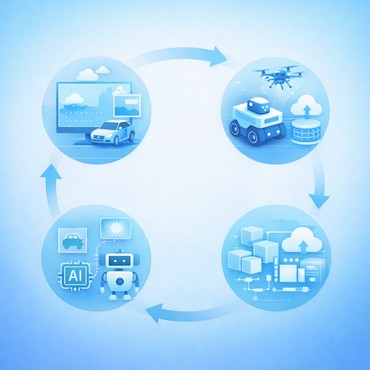

The QuData team built a production-grade synthetic data platform powered by Unreal Engine, JSBSim, and AirSim to generate highly realistic, diverse datasets with automatic annotations. The solution enables training and validating perception models in rare, hazardous, and edge-case conditions that are difficult or impossible to capture safely at scale in the real world.

Business Challenge

Modern computer vision systems depend on large and diverse datasets. Teams building autonomous delivery robots, self-driving vehicles, warehouse platforms, and smart city monitoring systems face the same two persistent obstacles.

The first is the diversity of operating conditions. A perception model trained on sunny streets and clean sidewalks may fail in freezing rain, in heavy twilight shadows, or in dense pedestrian traffic near a station entrance. Real-world deployment demands robustness not just in normal conditions, but in edge cases.

The second is data annotation. Labeling large image datasets manually requires significant time and resources and can introduce inconsistencies or errors. Human annotators make mistakes, especially in low-visibility scenes, crowded environments, or frames with partial occlusion. For safety-critical systems, those mistakes propagate directly into model behavior.

The goal was therefore not simply to build another simulator. The objective was to create a production-grade synthetic data environment capable of:

- generating virtual scenes for robots and autonomous agents;

- simulating weather conditions, lighting, and other external disturbances;

- emulating multiple sensors, not only RGB cameras;

- automatically producing ground-truth labels;

- testing robot control algorithms before field deployment;

- reducing the cost and risk associated with collecting rare real-world scenarios.

Solution Overview

The solution was to build a digital twin pipeline that merges visual realism with physical realism.

Using Unreal Engine for high-quality rendering, AirSim for vehicle and sensor simulation, and JSBSim for simulating the physics of aircraft and drones, the team created an extensible virtual environment where nearly every parameter can be controlled. Engineers can switch from summer noon to winter dusk in seconds, trigger rainfall, inject fog, alter road friction, reposition obstacles, vary pedestrian density, or simulate emergencies without waiting for them to happen in reality. Unreal Engine serves as the real-time 3D creation platform, while AirSim and JSBSim provide the autonomous-vehicle simulation layer for drones, cars, and programmatic control workflows.

At the center of this solution is the QuData Virtual Environment platform (QuVE), the scene-construction and scenario-orchestration system. It assembles virtual worlds from modular assets and behavioral rules. Instead of manually designing every frame, the team defines classes of environments: streets, courtyards, warehouses, logistics hubs, pedestrian crossings, loading zones, and indoor corridors.

For model development, the exported synthetic datasets can be used to train detectors and segmenters such as YOLO. The resulting models can then be fine-tuned on a smaller amount of real-world data to improve transfer into deployment environments.

Technical Details

The QuVE platform integrates several components responsible for environment generation, simulation, orchestration, and data production.

Environmental Engine. Unreal Engine serves as the foundation for creating photorealistic digital environments. It provides advanced rendering capabilities, realistic materials, and flexible lighting systems that allow the modeling of urban, industrial, and indoor spaces. These environments are designed with sufficient visual realism to support the development and evaluation of computer vision models, enabling perception systems to operate in conditions similar to real-world scenarios.

Physics and Vehicle Simulation. AirSim and JSBSim add a simulation layer for autonomous platforms operating within these virtual environments. Together they enable programmatic control of simulated vehicles such as drones and ground robots while generating virtual sensor streams that replicate the behavior of real-world perception systems. This allows engineers to connect the simulation environment directly to robotics algorithms, training pipelines, and testing workflows.

Orchestration Layer. The orchestration of virtual environments and simulation scenarios is managed by the QuVE framework. This system is responsible for procedural scene generation and the automation of dataset creation. Engineers define scenario templates and environment classes, after which the platform assembles scenes dynamically using modular assets and configurable parameters. Environmental conditions such as weather and lighting can be varied automatically, while assets and events can be randomized to generate diverse simulation scenarios. The framework also supports large-scale batch dataset generation together with automated export of annotation data.
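The template-plus-randomization idea can be illustrated with a minimal sketch. Everything named here (`ScenarioTemplate`, `Scenario`, `sample_scenario`, `generate_batch`, and the parameter lists) is hypothetical and chosen for illustration; the actual QuVE template format is not described in this case study. The sketch only shows how a fixed environment class and a seed can yield a reproducible batch of varied scene configurations.

```python
import random
from dataclasses import dataclass

# Hypothetical condition lists; the real platform's options may differ.
WEATHER = ["clear", "rain", "fog", "snow"]
TIME_OF_DAY = ["dawn", "noon", "dusk", "night"]


@dataclass(frozen=True)
class ScenarioTemplate:
    """An environment class plus the ranges its parameters are drawn from."""
    environment: str          # e.g. "warehouse" or "pedestrian_crossing"
    pedestrian_range: tuple   # (min, max) number of pedestrians
    friction_range: tuple     # (min, max) road friction coefficient


@dataclass(frozen=True)
class Scenario:
    """One concrete scene configuration sampled from a template."""
    environment: str
    weather: str
    time_of_day: str
    pedestrians: int
    road_friction: float
    seed: int


def sample_scenario(template: ScenarioTemplate, seed: int) -> Scenario:
    """Draw one scenario; the same seed always gives the same scenario."""
    rng = random.Random(seed)
    min_ped, max_ped = template.pedestrian_range
    min_fr, max_fr = template.friction_range
    return Scenario(
        environment=template.environment,
        weather=rng.choice(WEATHER),
        time_of_day=rng.choice(TIME_OF_DAY),
        pedestrians=rng.randint(min_ped, max_ped),
        road_friction=round(rng.uniform(min_fr, max_fr), 3),
        seed=seed,
    )


def generate_batch(template: ScenarioTemplate, count: int, base_seed: int = 0):
    """Sample `count` scenarios with consecutive seeds for batch generation."""
    return [sample_scenario(template, base_seed + i) for i in range(count)]
```

Storing the seed with each scenario means any frame in the exported dataset can be traced back to, and regenerated from, the configuration that produced it.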

Data Capture Pipeline. During each simulation run, the platform records synchronized sensor streams along with detailed metadata describing the state of the environment. Each generated frame contains information such as timestamps, camera parameters, object identities, and scene configuration. The same record therefore serves both supervised learning and robotics debugging.
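As an illustration of what such a per-frame record might look like, the sketch below defines a small metadata structure and serializes it as one JSON line per frame. The field names, the `FrameRecord` and `CameraParams` classes, and the file paths are assumptions made for this example, not the platform's actual schema.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class CameraParams:
    """Pinhole intrinsics (in pixels) for the camera that rendered the frame."""
    width: int
    height: int
    fx: float
    fy: float
    cx: float
    cy: float


@dataclass
class FrameRecord:
    """Hypothetical per-frame metadata tying sensor files to scene state."""
    frame_id: int
    timestamp_ns: int
    camera: CameraParams
    scene_config: dict                                 # e.g. weather, time of day
    object_ids: list = field(default_factory=list)     # identities visible in frame
    sensor_files: dict = field(default_factory=dict)   # sensor name -> file path


def to_json_line(record: FrameRecord) -> str:
    """Serialize a record as a single JSON line (JSON Lines style)."""
    return json.dumps(asdict(record), sort_keys=True)


record = FrameRecord(
    frame_id=7,
    timestamp_ns=1_000_000,
    camera=CameraParams(640, 480, 320.0, 320.0, 320.0, 240.0),
    scene_config={"weather": "fog", "time_of_day": "dusk"},
    object_ids=["pedestrian_3"],
    sensor_files={"rgb": "rgb/000007.png", "depth": "depth/000007.png"},
)
line = to_json_line(record)
```

One line per frame keeps the metadata easy to append during a run and easy to filter afterwards, for example to pull every frame rendered in fog.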

Automated Annotation. The automated annotation system eliminates the need for slow and error-prone manual labeling. Since every object in the digital environment is procedurally generated and its exact coordinates are known, the system can export ground-truth labels at the same time as it renders each frame. The labels include 2D and 3D bounding boxes, pixel-level semantic segmentation masks, object identities, and precise depth values. Because they are computed from scene geometry rather than judged by eye, they retain a geometric precision in complex scenarios that human annotators cannot match. The resulting datasets are automatically formatted for immediate use in training models such as YOLO.
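To show why known object coordinates make labeling automatic, here is a simplified sketch that projects a 3D box through a pinhole camera model and writes the result as a YOLO-style label line (`class x_center y_center width height`, normalized to the image size). It assumes the box is axis-aligned in camera coordinates (x right, y down, z forward) and ignores occlusion and object rotation, which a real pipeline would handle. The function names are illustrative, not part of the platform.

```python
from itertools import product


def box_corners(center, size):
    """Eight corners of an axis-aligned 3D box given in camera coordinates."""
    cx, cy, cz = center
    sx, sy, sz = size
    return [
        (cx + dx * sx / 2, cy + dy * sy / 2, cz + dz * sz / 2)
        for dx, dy, dz in product((-1, 1), repeat=3)
    ]


def project(point, fx, fy, cx, cy):
    """Pinhole projection of a camera-space point to pixel coordinates."""
    x, y, z = point
    return fx * x / z + cx, fy * y / z + cy


def bbox_2d(center, size, fx, fy, cx, cy, width, height):
    """2D box enclosing the projected 3D box, clipped to the image, or None."""
    corners = box_corners(center, size)
    if any(z <= 0 for _, _, z in corners):
        return None  # box touches or crosses the camera plane; skipped in this sketch
    pixels = [project(p, fx, fy, cx, cy) for p in corners]
    us = [u for u, _ in pixels]
    vs = [v for _, v in pixels]
    x0, x1 = max(min(us), 0.0), min(max(us), float(width))
    y0, y1 = max(min(vs), 0.0), min(max(vs), float(height))
    if x0 >= x1 or y0 >= y1:
        return None  # entirely outside the image
    return x0, y0, x1, y1


def yolo_line(class_id, box, width, height):
    """Format a pixel-space box as a normalized YOLO label line."""
    x0, y0, x1, y1 = box
    xc = (x0 + x1) / 2 / width
    yc = (y0 + y1) / 2 / height
    w = (x1 - x0) / width
    h = (y1 - y0) / height
    return f"{class_id} {xc:.6f} {yc:.6f} {w:.6f} {h:.6f}"


# A 2 m cube centered 10 m in front of a 640x480 camera.
box = bbox_2d((0, 0, 10), (2, 2, 2), 500, 500, 320, 240, 640, 480)
label = yolo_line(0, box, 640, 480)
```

Note that a box derived this way encloses the full projected 3D extent of the object; a label tight to the visible pixels would instead be computed from the rendered segmentation mask.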

Training Pipeline. The datasets support the development of perception systems for tasks including object detection, semantic segmentation, classification, object tracking and anomaly recognition. In addition to dataset generation, the environment allows engineers to test robotic control algorithms within simulated scenarios before deploying them in real-world environments.

Technology Stack

Unreal Engine

JSBSim

AirSim

Python

C++