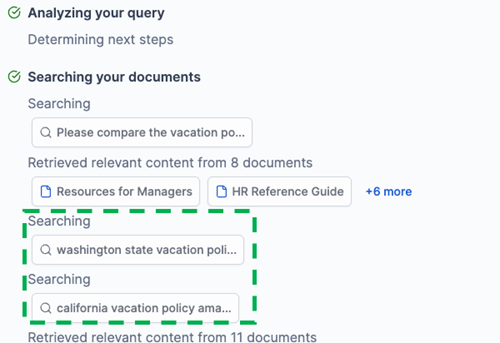

Amazon Q Business utilizes generative AI to assist organizations in unlocking value from their data. The introduction of Agentic RAG brings a new intelligent, agent-based retrieval strategy for more accurate and comprehensive responses to complex enterprise queries.

Atlassian founder pushes for US-style copyright law in Australia to enable AI innovation. Scott Farquhar warns that investment is at risk unless AI developers can legally access content.

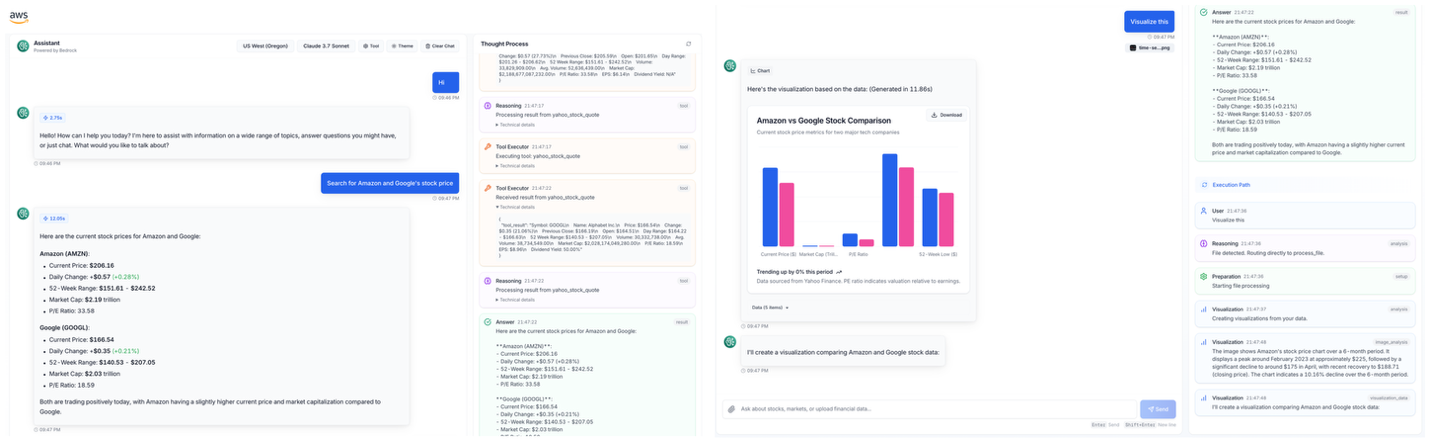

Agentic AI transforms financial services with autonomous decision-making. Combining LangGraph, Strands Agents, and MCP for innovative financial analysis workflows.

Labor debates response to AI technology amid fears of intellectual property theft. Human rights commissioner warns of AI entrenching racism and sexism in Australia.

Black Forest Labs’ FLUX.1 Kontext [dev] image editing model is now available as an NVIDIA NIM microservice, simplifying generative AI workflows for image editing with simple language prompts. NVIDIA and Black Forest Labs collaborated to optimize the model size and performance, making it accessible to a wider audience for coherent, high-quality image edits.

Jobs and Skills Australia dismisses doomsday AI predictions, saying most occupations will be augmented by artificial intelligence. Consider nursing, construction, or hospitality for AI-proof careers, not bookkeeping, marketing, or programming.

MIT's LIDS team develops software to evaluate and improve text classifiers for automated conversations, ensuring accuracy and reliability. Chatbots and online information sites are increasingly using sophisticated algorithms to classify content, raising concerns about accuracy and potential vulnerabilities.

MIT's INM aims to transform manufacturing through technology, talent development, and scaling for higher productivity and resilience. Industry giants like Amgen, GE Vernova, and Siemens are collaborating with MIT to break manufacturing barriers and drive adoption of AI and automation.

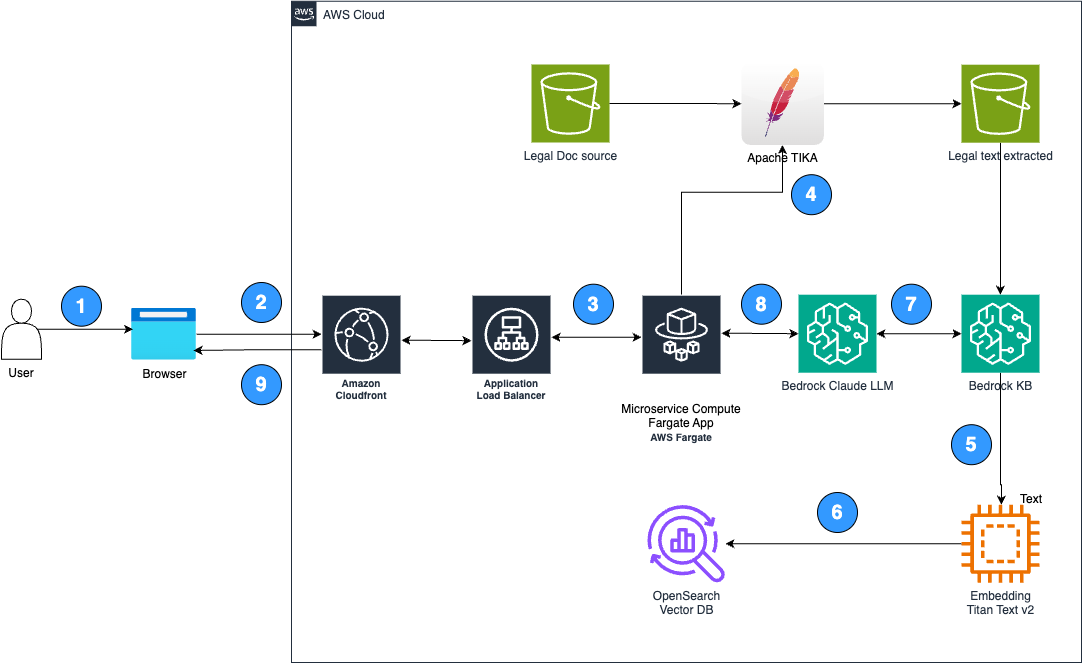

Lexbe leveraged Amazon Bedrock to revolutionize legal document review with AI and machine learning, enhancing efficiency and accuracy. By integrating Amazon Bedrock into Lexbe Pilot, legal teams can quickly extract insights from massive datasets, preventing costly oversights.

US medical journal warns against using ChatGPT for health info after man develops bromism from advice to cut salt. Annals of Internal Medicine reports rare bromide toxicity case linked to AI chatbot.

New series on transforming Australia's economy features Nobel laureate Daron Acemoglu's roadmap for AI adoption. Genuine productivity comes when technology augments human capability.

Elon Musk accuses Apple of antitrust violation over app rankings, sparking feud with OpenAI CEO. xAI threatens legal action.

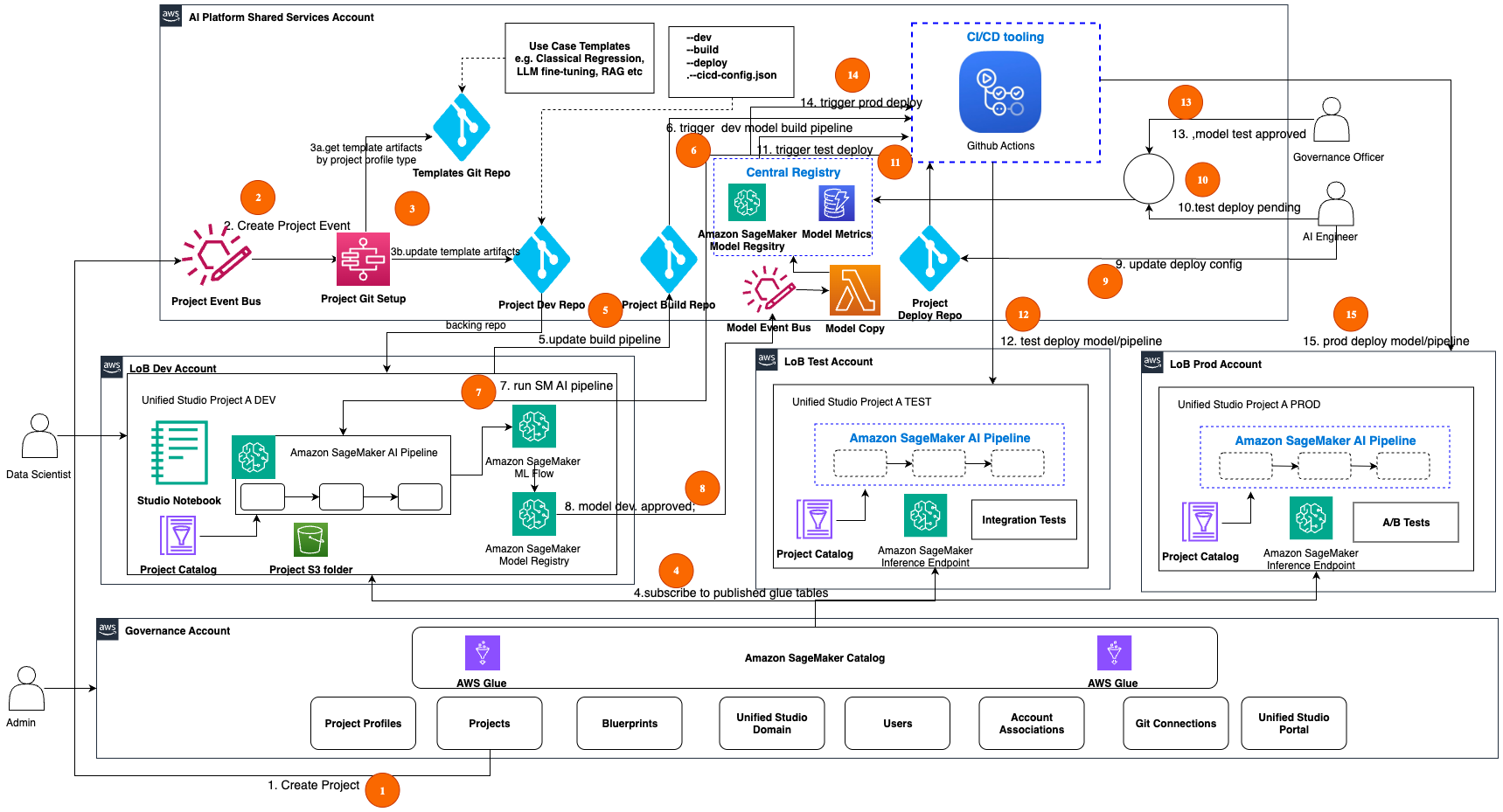

Amazon SageMaker Unified Studio streamlines data, analytics, and AI workflows. Challenges include scaling, automation, and governance controls. Architectural strategies and a scalable framework help manage multi-tenant environments and automate AIOps in SageMaker Unified Studio.

Labor appoints team to review 2025 campaign victory, focusing on cyber misinformation and AI threats. Despite historic win, party aims to enhance future campaign readiness.

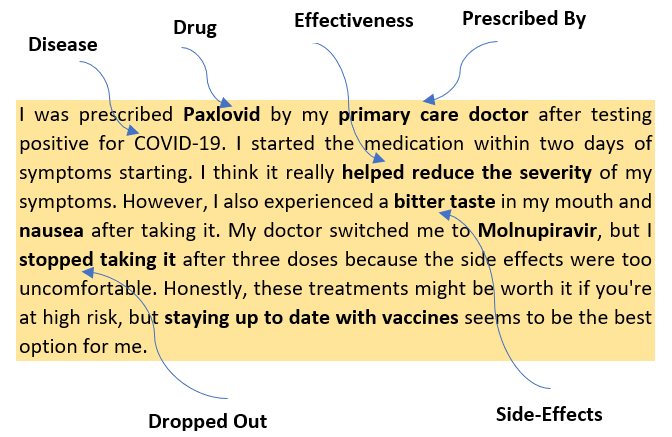

Indegene Limited utilizes Amazon technology to extract valuable insights from digital healthcare conversations, helping pharmaceutical companies engage effectively with patients and healthcare professionals. The company's Social Intelligence Solution uses advanced AI to address the growing preference for digital channels, providing strategic insights for the life sciences industry.