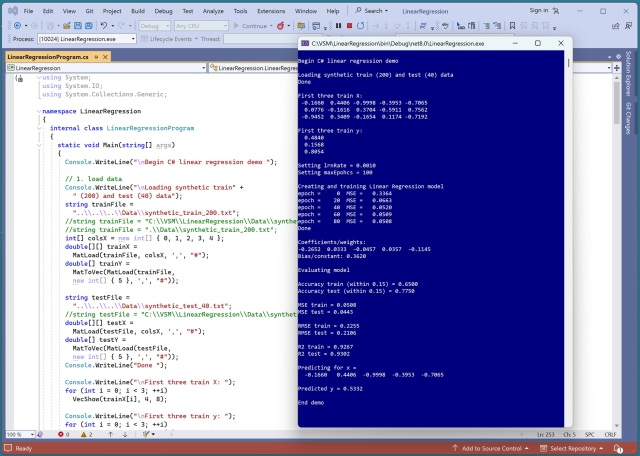

Machine learning regression models aim to predict a numeric value. Key metrics for evaluating their accuracy include mean squared error (MSE), root mean squared error (RMSE), and R² (the coefficient of determination). R² measures how much better a model predicts than simply guessing the mean of the observed y values.
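As a minimal sketch of how these three metrics relate, the function below computes them from plain Python lists. The function name `regression_metrics` and the toy data in the usage line are hypothetical, chosen only for illustration. R² is computed as 1 − SS_res / SS_tot, where SS_tot is the squared error of always predicting the mean, so a model that just guesses the mean scores exactly 0.

```python
import math

def regression_metrics(y_true, y_pred):
    """Return (MSE, RMSE, R^2) for paired lists of actual and predicted values.

    Hypothetical helper for illustration; libraries such as scikit-learn
    provide equivalent functions.
    """
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be non-empty and the same length")
    n = len(y_true)

    # Sum of squared residuals (actual minus predicted)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    # MSE: average squared residual
    mse = ss_res / n
    # RMSE: square root of MSE, expressed in the same units as y
    rmse = math.sqrt(mse)

    # SS_tot: squared error of the baseline that always predicts the mean of y
    mean_y = sum(y_true) / n
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    if ss_tot == 0:
        raise ValueError("R^2 is undefined when all y_true values are identical")
    # R^2: fraction of the baseline's error that the model eliminates
    r2 = 1 - ss_res / ss_tot
    return mse, rmse, r2


mse, rmse, r2 = regression_metrics([3, 5, 7, 9], [2.5, 5.5, 7, 8])
print(mse, round(rmse, 4), round(r2, 3))  # 0.375 0.6124 0.925
```

Passing the mean baseline (`[6, 6, 6, 6]` for the same targets) as the predictions yields R² = 0, which is the "guessing the average" reference point; a negative R² would mean the model does worse than that baseline.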

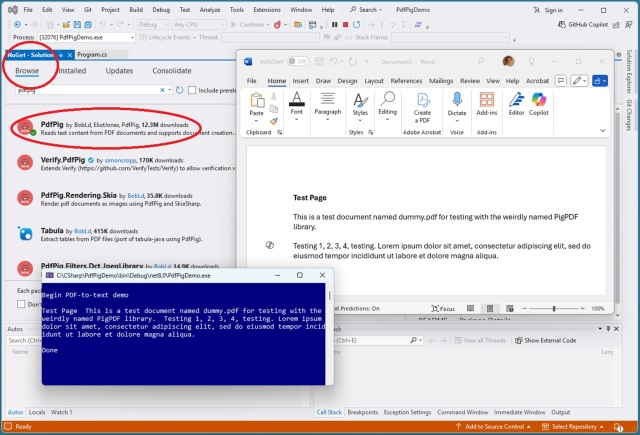

Extracting text from PDFs can be challenging because the format describes how a page looks rather than how its text is logically structured. The leading C# libraries for this task are iText and PdfPig, although Python's PyMuPDF is presented as the superior choice.

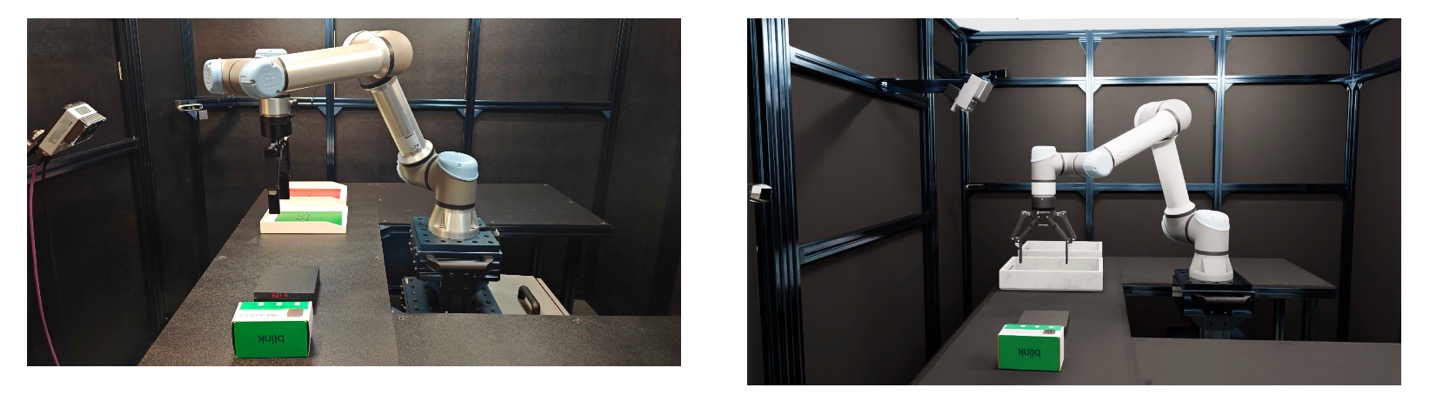

Amazon Devices & Services uses NVIDIA digital twin technology and AI software to enhance manufacturing, training robots for product auditing and integration without physical prototyping. This simulation-first approach enables faster, more efficient inspections and flexible production pipelines for a wide range of products.

Research from the London School of Economics (LSE) reveals gender bias in AI tools used for care decisions, which downplay women's health issues. Google's AI model Gemma used different language for men and women when summarising case notes.

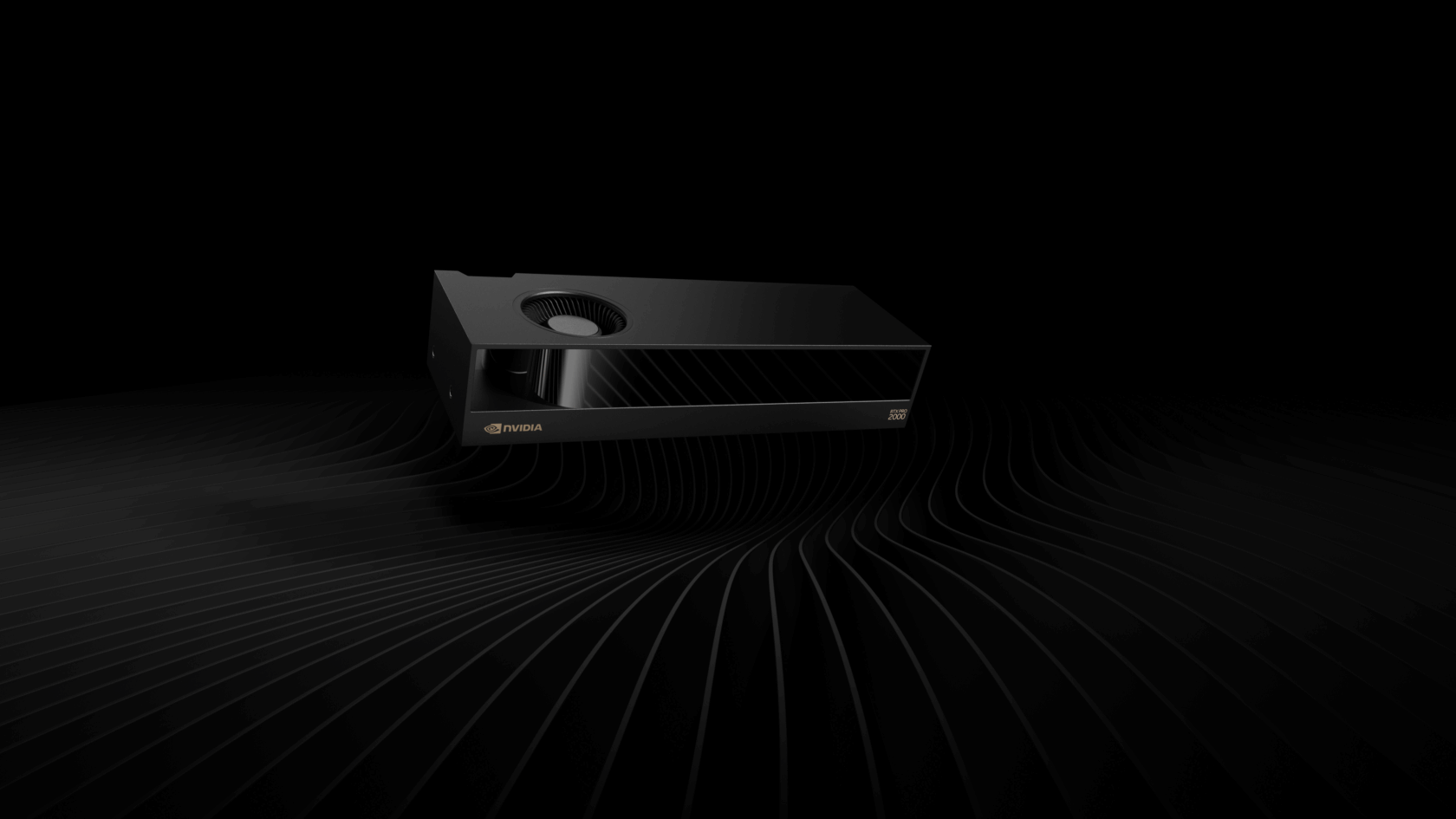

NVIDIA has introduced the RTX PRO 4000 SFF and RTX PRO 2000 GPUs, which bring AI acceleration and higher performance to professional workflows in compact form factors. Organizations such as Mile High Flood District and Studio Tim Fu are using NVIDIA RTX PRO GPUs to speed up tasks like flood simulation and AI-driven design.

Australia's Productivity Commission may recommend an exception for text and data mining under the Copyright Act. Separately, the evolution of technology, from typewriters to the Commodore 64, has shaped the journalism industry.

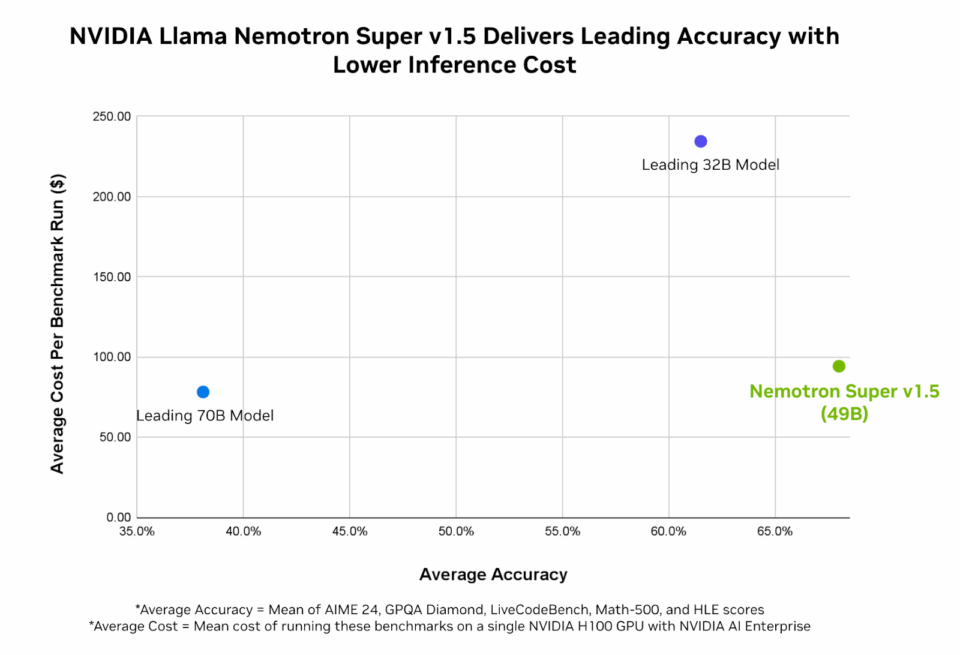

AI agents are projected to drive $450 billion in revenue gains by 2028. NVIDIA's Nemotron and Cosmos models, which can power such agents, are promoted as offering high accuracy and efficiency for enterprise AI, improving productivity and decision-making.

AI-generated videos are taking over YouTube: nearly one in ten of the fastest-growing channels post only AI-generated content. From surreal scenarios to cat soap operas, channels like Super Cat League and Cuentos Facinantes have attracted millions of subscribers.

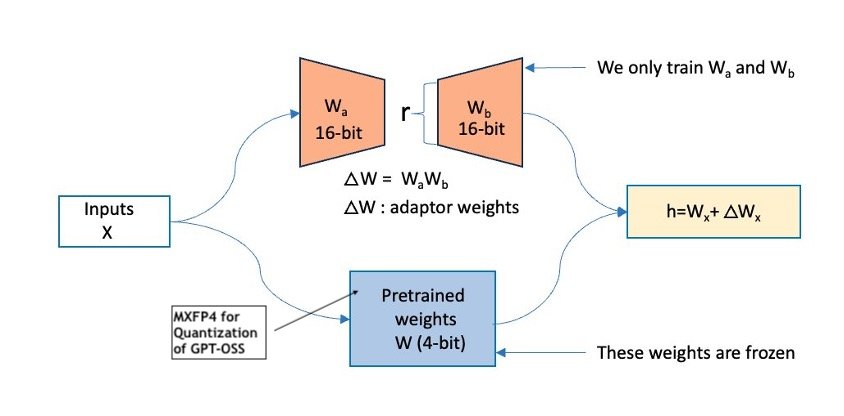

OpenAI's open-weight GPT-OSS models, gpt-oss-20b and gpt-oss-120b, are available on AWS. They use a mixture-of-experts (MoE) architecture, which delivers strong reasoning performance at reduced compute cost. Fine-tuning turns these models into domain-specific experts, improving accuracy and reliability on targeted tasks.
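The compute saving in an MoE model comes from running only a few experts per input. The sketch below shows generic top-k routing under simplifying assumptions: experts are hypothetical scalar functions, the router scores are passed in directly (a real model computes them from the input with a learned router), and `k=2` is an arbitrary choice. It is not the gpt-oss implementation or configuration.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(x, experts, router_logits, k=2):
    """Route x to the top-k experts and return (mixed output, chosen expert indices).

    experts: list of callables standing in for expert feed-forward networks (hypothetical).
    router_logits: one score per expert (assumed given here, normally produced by a router).
    """
    if not 1 <= k <= len(experts) or len(router_logits) != len(experts):
        raise ValueError("need 1 <= k <= number of experts and one logit per expert")
    # Select the k experts with the highest router scores
    top = sorted(range(len(experts)), key=lambda i: router_logits[i], reverse=True)[:k]
    # Renormalise gate weights over the selected experts only
    weights = softmax([router_logits[i] for i in top])
    # Only the selected experts are evaluated; the others are skipped entirely
    out = 0.0
    for w, i in zip(weights, top):
        out += w * experts[i](x)
    return out, top


experts = [lambda x, a=a: a * x for a in range(4)]  # four toy experts
out, chosen = moe_forward(1.0, experts, [0.1, 2.0, 0.5, 2.0], k=2)
print(chosen, out)  # [1, 3] 2.0
```

With four experts and `k=2`, each input pays for two expert evaluations instead of four, while the model as a whole keeps the capacity of all four.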

Staff at the Alan Turing Institute warn that it could collapse because of threats to its government funding. A whistleblowing complaint also highlights concerns about governance and internal culture.

Dr. Karl Kruszelnicki plans to launch a chatbot that answers questions about the climate crisis, drawing on his 40 years of experience in science communication. Despite his already iconic status, the 77-year-old scientist hopes the tool will expand his reach and impact.

Avoiding extreme tanning reduces the risk of skin cancer (Burn notice: Gen Z and the terrifying rise of extreme tanning). The art nouveau treasures of Nancy, France, include works by Émile Gallé and Louis Majorelle (Editorial, 8 August).

OpenAI's new GPT-5 model offers enhanced capabilities, but at a steep energy cost. Asking it for a recipe could consume 20 times as much electricity as the same request to the previous version.

A new study reports that Tesla's self-driving technology outperforms competing systems, reducing accidents by 10%. Elon Musk plans to extend the technology to industries beyond automotive.

OpenAI's latest AI model, GPT-5, made basic errors, such as claiming there are three Bs in 'blueberry' (there are two). Users also found it stating that there are three Rs in 'Northern Territory' (there are five).