Generative AI technologies are reshaping software development, with AI agents taking on tasks like monitoring and optimizing software. The Model Context Protocol (MCP) by Anthropic opens new possibilities for AI agents to access data sources and act autonomously, transforming how applications are built and deliver value.

Microsoft plans to invest $80bn in AI this year and has reported revenue of $70.07bn, exceeding expectations. Earnings per share of $3.46 also surpassed analyst predictions, pointing to the financial payoff of its AI push.

AWS customers in EMEA, like Il Sole 24 Ore and Booking.com, are successfully using generative AI to enhance customer experience and boost operational efficiency. Companies are leveraging AWS services to implement AI solutions that provide personalized recommendations and improve service quality, setting the stage for future growth in their respective industries.

Product Analytics tracks customer engagement, reveals behavioral patterns, and drives adoption, retention, and conversion. Product-Market Fit is key for sustainable growth, with indicators like cohort retention trends and PMF surveys revealing customer satisfaction and advocacy.

The Universal Approximation Theorem reveals the power of a single hidden layer neural network. Hugging Face showcases over one million pretrained models, highlighting the need for diverse network architectures.
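
The idea behind the theorem can be illustrated with a minimal sketch. Rather than training anything, the code below builds a single-hidden-layer ReLU network by hand so that it interpolates a target function at evenly spaced points, then measures how the worst-case error shrinks as hidden units are added. The function names (`build_network`, `predict`, `max_error`) and the choice of `sin` on `[0, pi]` are illustrative assumptions, not taken from the article.

```python
import math
from typing import Callable, List, Tuple

# (knots, output weights, output bias) of a one-hidden-layer ReLU network.
Network = Tuple[List[float], List[float], float]


def relu(z: float) -> float:
    return max(0.0, z)


def build_network(f: Callable[[float], float], lo: float, hi: float,
                  hidden_units: int) -> Network:
    """Hand-construct a network that linearly interpolates f on [lo, hi].

    Hidden unit i computes relu(x - knot_i). Its output weight is the
    change in slope of the interpolant at that knot.
    """
    step = (hi - lo) / hidden_units
    knots = [lo + i * step for i in range(hidden_units)]
    slopes = [(f(k + step) - f(k)) / step for k in knots]
    weights = [slopes[0]] + [slopes[i] - slopes[i - 1]
                             for i in range(1, hidden_units)]
    return knots, weights, f(lo)


def predict(net: Network, x: float) -> float:
    knots, weights, bias = net
    return bias + sum(w * relu(x - k) for k, w in zip(knots, weights))


def max_error(f: Callable[[float], float], net: Network, lo: float,
              hi: float, samples: int = 1000) -> float:
    """Largest absolute error over an evenly spaced sample grid."""
    grid = (lo + (hi - lo) * j / samples for j in range(samples + 1))
    return max(abs(f(x) - predict(net, x)) for x in grid)


# More hidden units give a closer approximation of sin on [0, pi].
for units in (4, 16, 64):
    net = build_network(math.sin, 0.0, math.pi, units)
    print(units, max_error(math.sin, net, 0.0, math.pi))
```

This only shows that one hidden layer is expressive enough in a simple one-dimensional case. It says nothing about how easily such weights are found by training, which is one reason varied architectures remain useful in practice.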

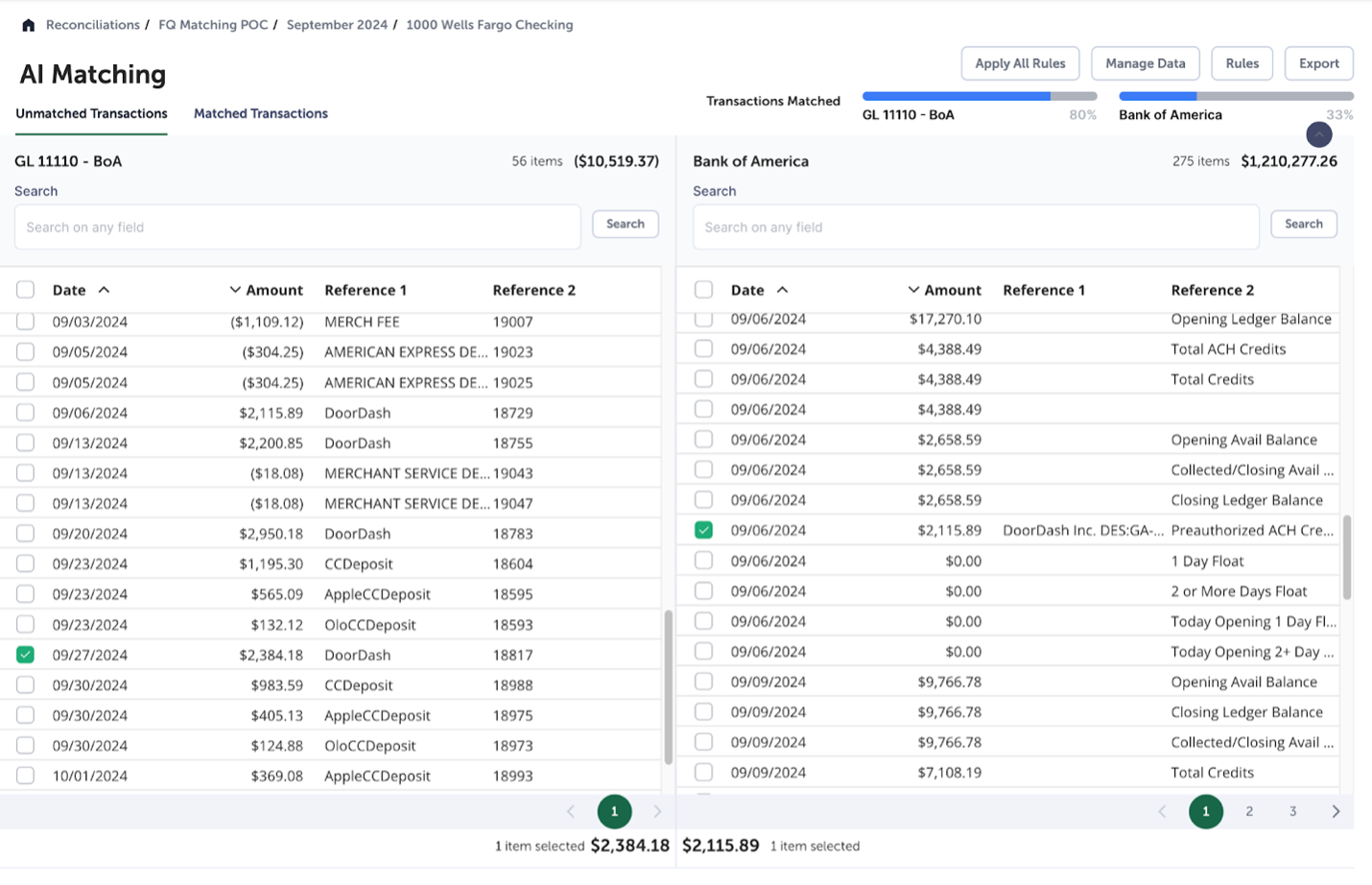

Generative AI solutions like Amazon Bedrock are transforming industries, empowering organizations to leverage foundation models for innovative AI applications. FloQast, with over 2,800 clients, streamlines accounting operations using AI-powered solutions on Amazon Bedrock, tackling complexities at scale.

The government will conduct an economic impact assessment of the copyright changes, addressing artists' concerns before a crucial vote. It has also promised to publish reports on transparency, licensing, and data access for AI developers.

The MIT-Portugal Program (MPP) has signed a new agreement with the Portuguese Science and Technology Foundation (FCT) to support innovative research in fields like AI and climate change until 2030. This longstanding partnership has fostered trust, collaboration, and impactful scientific contributions, with a focus on addressing global challenges and transforming economies.

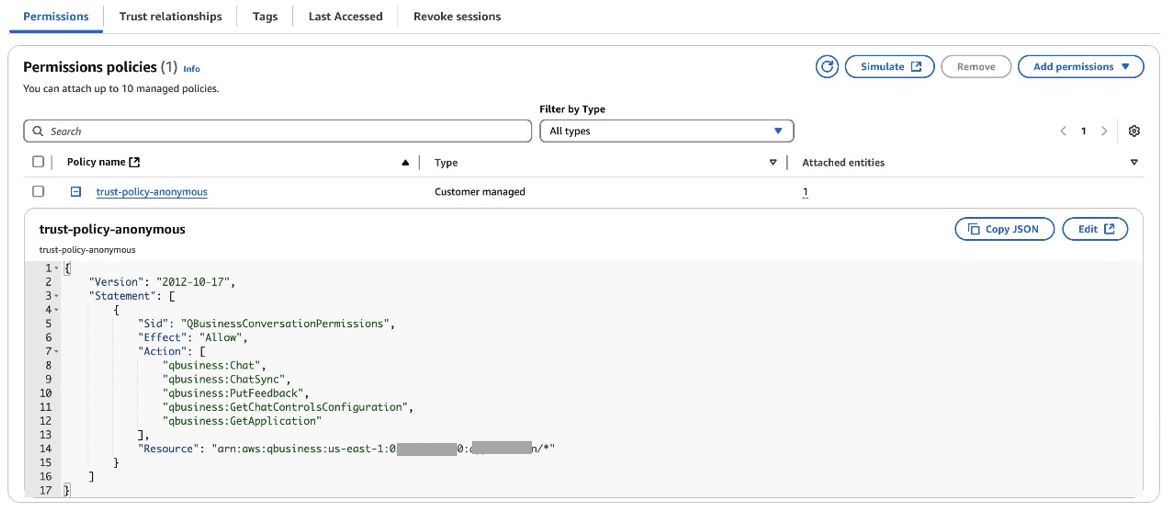

Amazon Q Business is an AI assistant that securely completes tasks based on enterprise data. It now supports anonymous user access for public-facing websites and portals, offering powerful AI-driven assistance.

BBC Maestro offers online video lessons by "Agatha Christie" on crime writing tips, utilizing AI technology and restored audio recordings. Aspiring writers can learn about story structure, plot twists, and suspense directly from the iconic author herself.

Probabilistic Machine Learning changes the way we view machine learning models, emphasizing the importance of understanding probability distributions in predictions. This approach not only provides answers but also reveals the model's confidence level, leading to better decision-making.
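
As a minimal sketch of what "an answer plus a confidence level" can look like, the example below uses a Beta-Bernoulli model, one of the simplest probabilistic models, to estimate a success rate. Two datasets yield the same point estimate, but the posterior standard deviation shows how much more certain the model is after seeing more data. The class and function names, the uniform prior, and the counts are illustrative assumptions, not drawn from the article.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class BetaPosterior:
    """Beta(alpha, beta) distribution over an unknown success probability."""
    alpha: float
    beta: float

    @property
    def mean(self) -> float:
        return self.alpha / (self.alpha + self.beta)

    @property
    def std(self) -> float:
        total = self.alpha + self.beta
        return math.sqrt(self.alpha * self.beta / (total ** 2 * (total + 1)))


def update(prior: BetaPosterior, successes: int, failures: int) -> BetaPosterior:
    """Conjugate update: add observed counts to the prior's parameters."""
    return BetaPosterior(prior.alpha + successes, prior.beta + failures)


prior = BetaPosterior(1.0, 1.0)      # uniform prior (an assumption)
few_obs = update(prior, 3, 1)        # Beta(4, 2)
many_obs = update(prior, 399, 199)   # Beta(400, 200)

# Same point estimate, very different uncertainty.
print(f"few:  mean={few_obs.mean:.3f} std={few_obs.std:.3f}")
print(f"many: mean={many_obs.mean:.3f} std={many_obs.std:.3f}")
```

A decision rule can then act on the spread as well as the mean, for example by deferring to a human or collecting more data when the standard deviation is above a chosen threshold.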

Microsoft and academic researchers introduce 1-shot RLVR, achieving impressive results with just one training example, revolutionizing language model fine-tuning for reasoning tasks. Developers can leverage this technique for math agents, tutors, and copilots without the need for massive datasets or human labels.

Data scientists face challenges in the experimentation phase due to reliance on Jupyter Notebooks and poor coding practices. Implementing structured principles can streamline experimentation, reduce time to value, and improve project delivery efficiency.

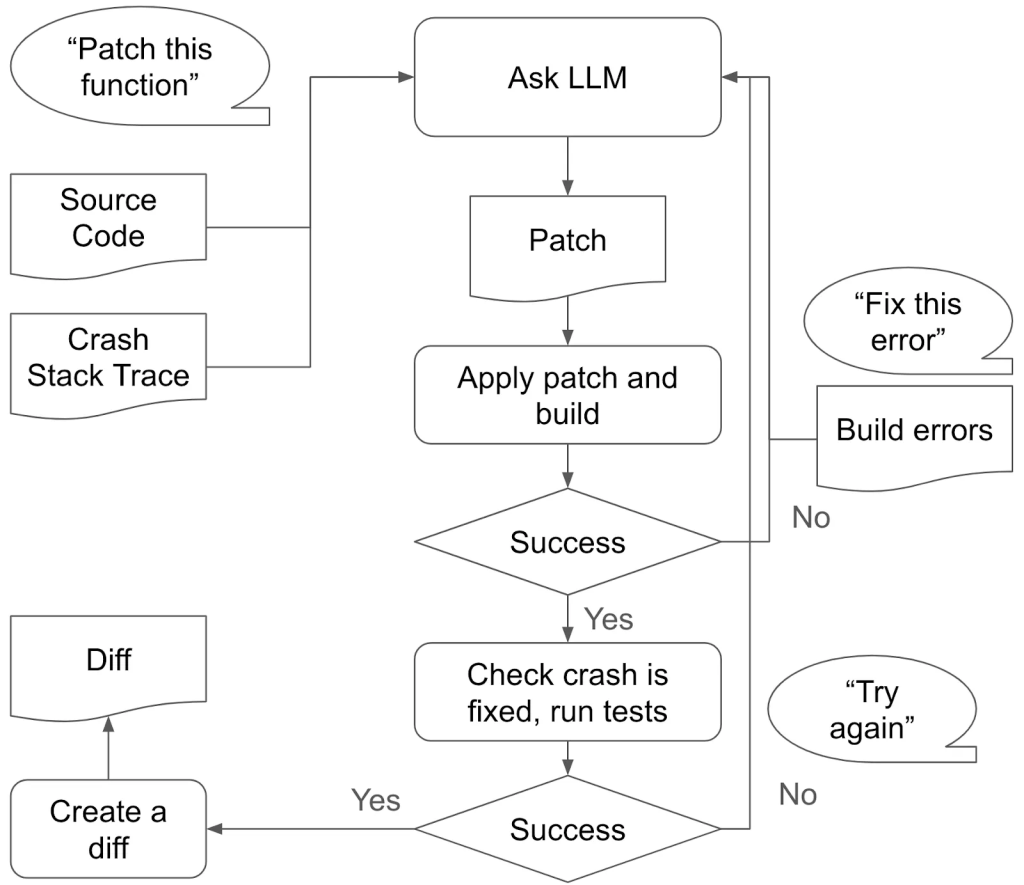

Introducing AutoPatchBench, a benchmark for automated vulnerability repair through AI, now available on GitHub. This initiative aims to enhance security solutions by evaluating and comparing AI program repair systems for fuzzing-identified vulnerabilities.

Building a reliable transcription system for long audio interviews in French using Google's Vertex AI posed unexpected challenges. Despite model limitations, the team navigated through budget evaluations and timestamp drift disasters to create a scalable solution.