Machine learning is reshaping the mobile advertising and gaming industries, where neural networks are now used for click prediction. Top players like AppLovin are investing billions in user acquisition and migrating to deep learning to improve performance.

Leading companies like Microsoft, Oracle, and Snap are utilizing NVIDIA's AI inference platform for high-throughput and cost-effective AI services. NVIDIA's advancements in software optimization and the Hopper platform are revolutionizing AI inference, delivering exceptional user experiences while optimizing total cost of ownership.

Machine learning models have made great strides, but their complexity can hinder interpretation. Human Knowledge Models offer a solution by distilling data into simple, actionable rules, improving trust and ease of use in various domains. This approach is especially valuable for field experts like doctors, enabling clear insights from complex data for better decision-making.

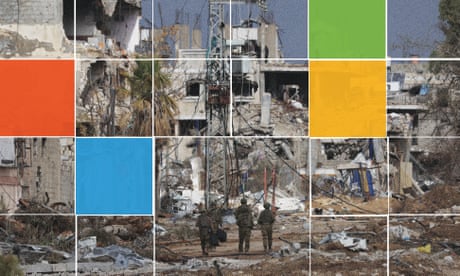

Israel increased its use of Microsoft cloud and AI technology during the bombardment of Gaza, deepening defense ties post-2023 with $10m in deals for support.

Generative AI models like AlphaFold and RFdiffusion are transforming drug discovery by predicting molecular structures. MIT's MDGen offers a new approach, simulating dynamic molecular movements efficiently to aid in designing new molecules for diseases like cancer.

Pope Francis urges Davos leaders to closely monitor AI's impact on humanity's future, warning of a potential truth crisis. Governments and businesses are advised to exercise caution and vigilance in navigating the complexities of artificial intelligence.

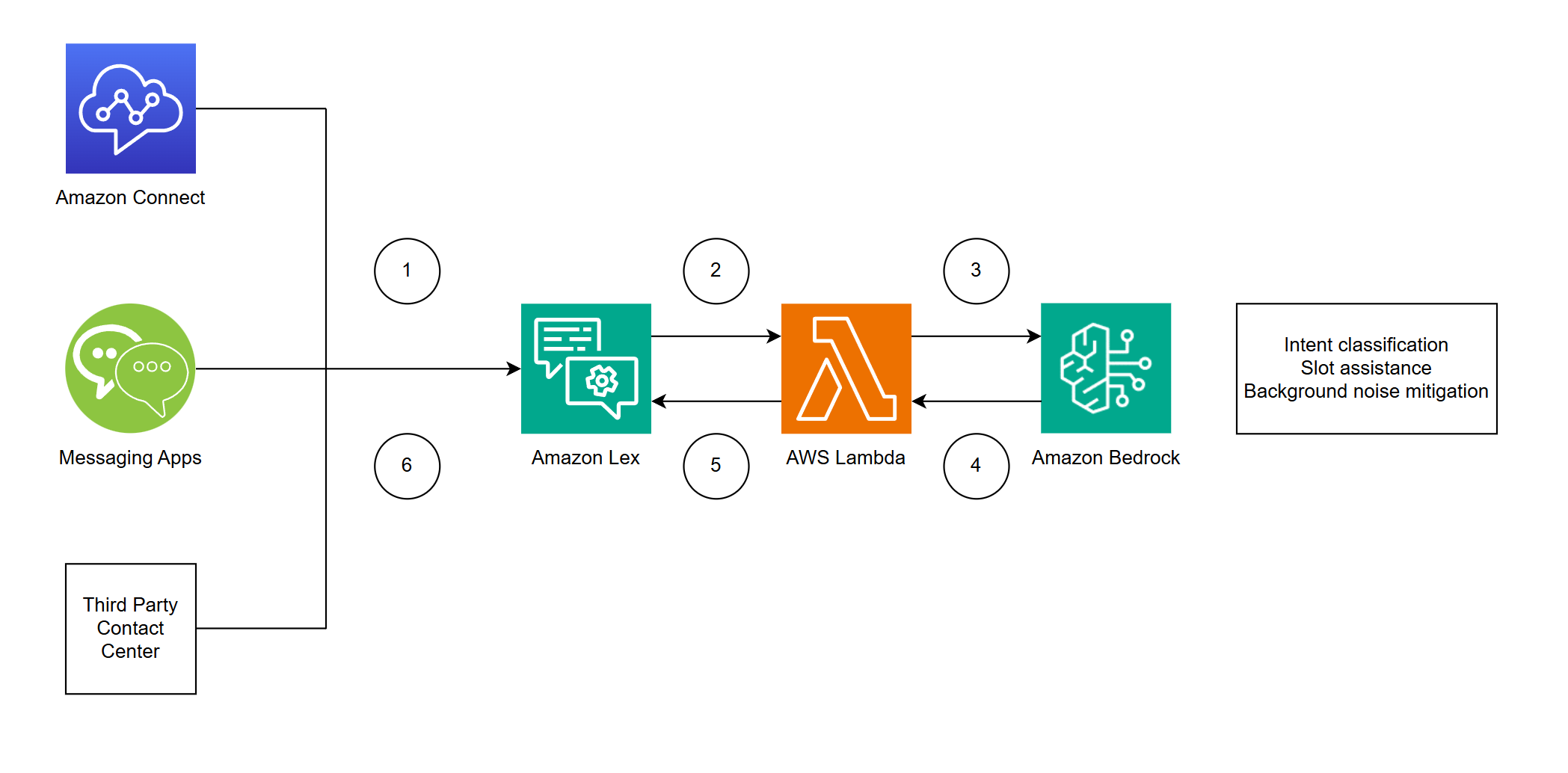

AI technologies like Amazon Lex and Amazon Bedrock are transforming customer experiences, reducing handle times, and enhancing self-service tasks. Integrating LLMs with Amazon Lex and Bedrock improves intent classification and slot resolution, ensuring accurate customer interactions.

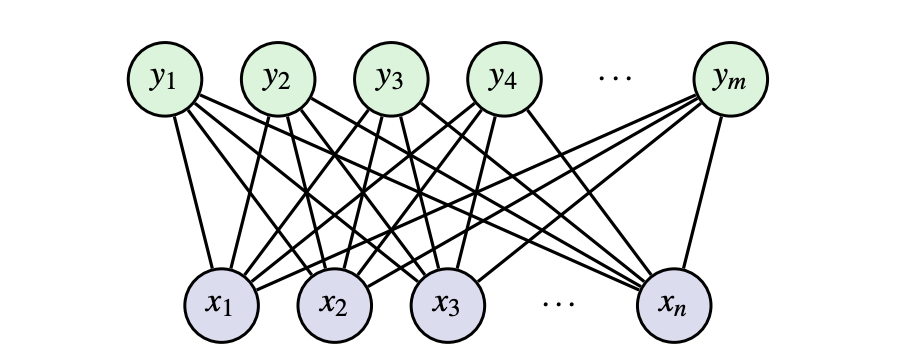

Geoffrey Hinton's Nobel Prize-winning work on Restricted Boltzmann Machines (RBMs) is explained and implemented in PyTorch. RBMs are unsupervised learning models that extract meaningful features without output labels, using energy functions and probability distributions.
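
To make the energy-function idea concrete, here is a minimal sketch in plain Python rather than PyTorch. It assumes the standard binary RBM energy E(v, h) = -a·v - b·h - vᵀWh; the names `energy`, `joint_probability`, `W`, `a`, and `b` are illustrative choices, not taken from the article. The partition function is computed by brute-force enumeration, which is only feasible for toy-sized models.

```python
import math
from itertools import product


def energy(v, h, W, a, b):
    """Energy of a binary RBM configuration: E = -a.v - b.h - v^T W h.

    v, h: lists of 0/1 visible and hidden unit states
    W:    weight matrix as a list of rows, shape len(v) x len(h)
    a, b: visible and hidden bias lists
    """
    visible_term = sum(ai * vi for ai, vi in zip(a, v))
    hidden_term = sum(bj * hj for bj, hj in zip(b, h))
    interaction = sum(
        v[i] * W[i][j] * h[j] for i in range(len(v)) for j in range(len(h))
    )
    return -(visible_term + hidden_term + interaction)


def joint_probability(v, h, W, a, b):
    """p(v, h) = exp(-E(v, h)) / Z, with Z summed over every configuration."""
    n_visible, n_hidden = len(a), len(b)
    # Brute-force partition function: 2 ** (n_visible + n_hidden) terms.
    Z = sum(
        math.exp(-energy(list(vv), list(hh), W, a, b))
        for vv in product((0, 1), repeat=n_visible)
        for hh in product((0, 1), repeat=n_hidden)
    )
    return math.exp(-energy(v, h, W, a, b)) / Z
```

Lower-energy configurations receive higher probability. Real implementations avoid enumerating Z and instead train with sampling-based approximations such as contrastive divergence.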

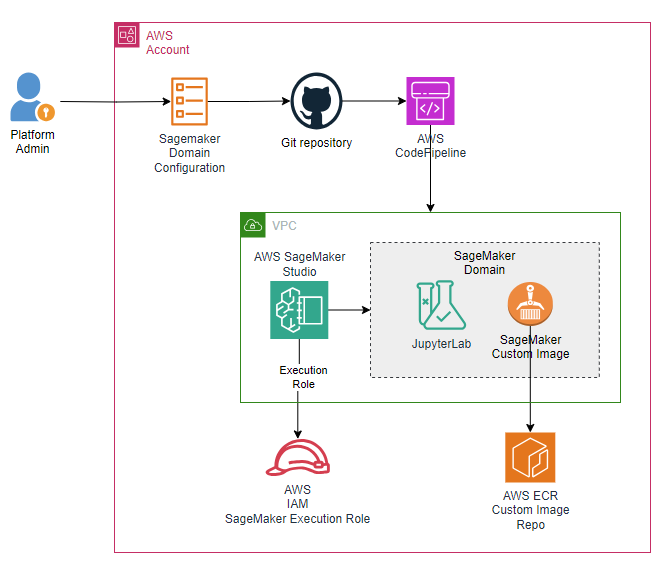

Automate attaching custom Docker images to Amazon SageMaker Studio domains for increased productivity and security. Deploy a pipeline using AWS CodePipeline to streamline the image creation and attachment process.

Hands-on machine learning projects reveal challenges in transitioning to production. Optimize model performance by aligning loss functions and metrics with business priorities.
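
One way to align a loss function and a metric with business priorities is to weight errors by what they cost. The sketch below is a hypothetical illustration, not the article's method: it assumes a binary classifier where a missed positive costs five times as much as a false alarm, and the function names and cost values (`fn_cost`, `fp_cost`) are made up for the example.

```python
import math


def weighted_log_loss(y_true, y_prob, fn_cost=5.0, fp_cost=1.0, eps=1e-12):
    """Binary cross-entropy with each class weighted by its assumed error cost."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        if y == 1:
            total += -fn_cost * math.log(p)        # penalize missed positives
        else:
            total += -fp_cost * math.log(1.0 - p)  # penalize false alarms
    return total / len(y_true)


def business_cost(y_true, y_pred, fn_cost=5.0, fp_cost=1.0):
    """Evaluation metric: total assumed cost of the hard predictions."""
    cost = 0.0
    for y, pred in zip(y_true, y_pred):
        if y == 1 and pred == 0:
            cost += fn_cost  # false negative
        elif y == 0 and pred == 1:
            cost += fp_cost  # false positive
    return cost
```

Using the same cost ratio in both the training loss and the evaluation metric keeps the model optimizing for what is actually reported to the business.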

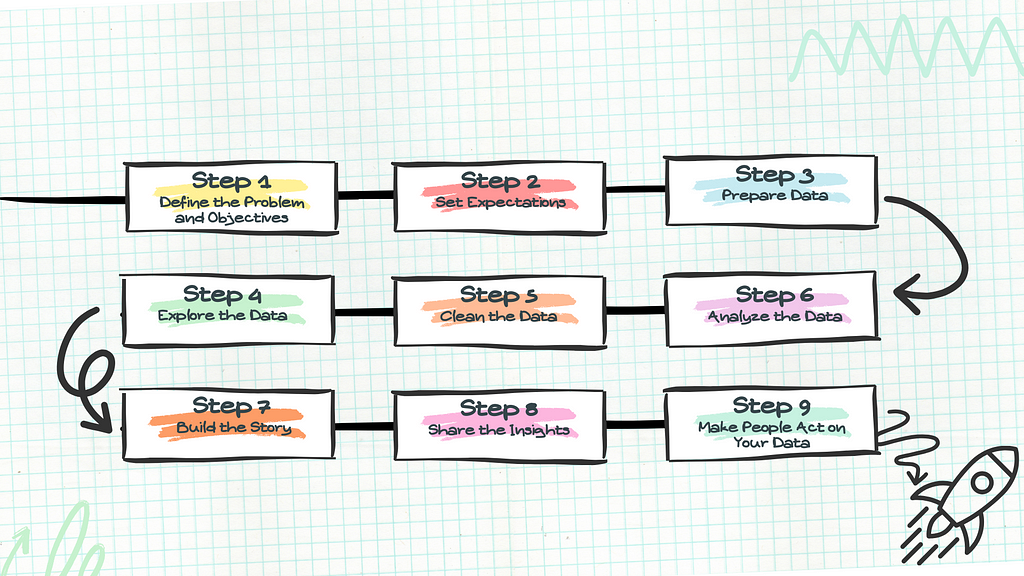

Learn how to approach data analytics projects like a pro: define the problem, set expectations, and prepare effectively for impactful insights. Clear stakeholder objectives and proper planning are key to successful data analysis projects.

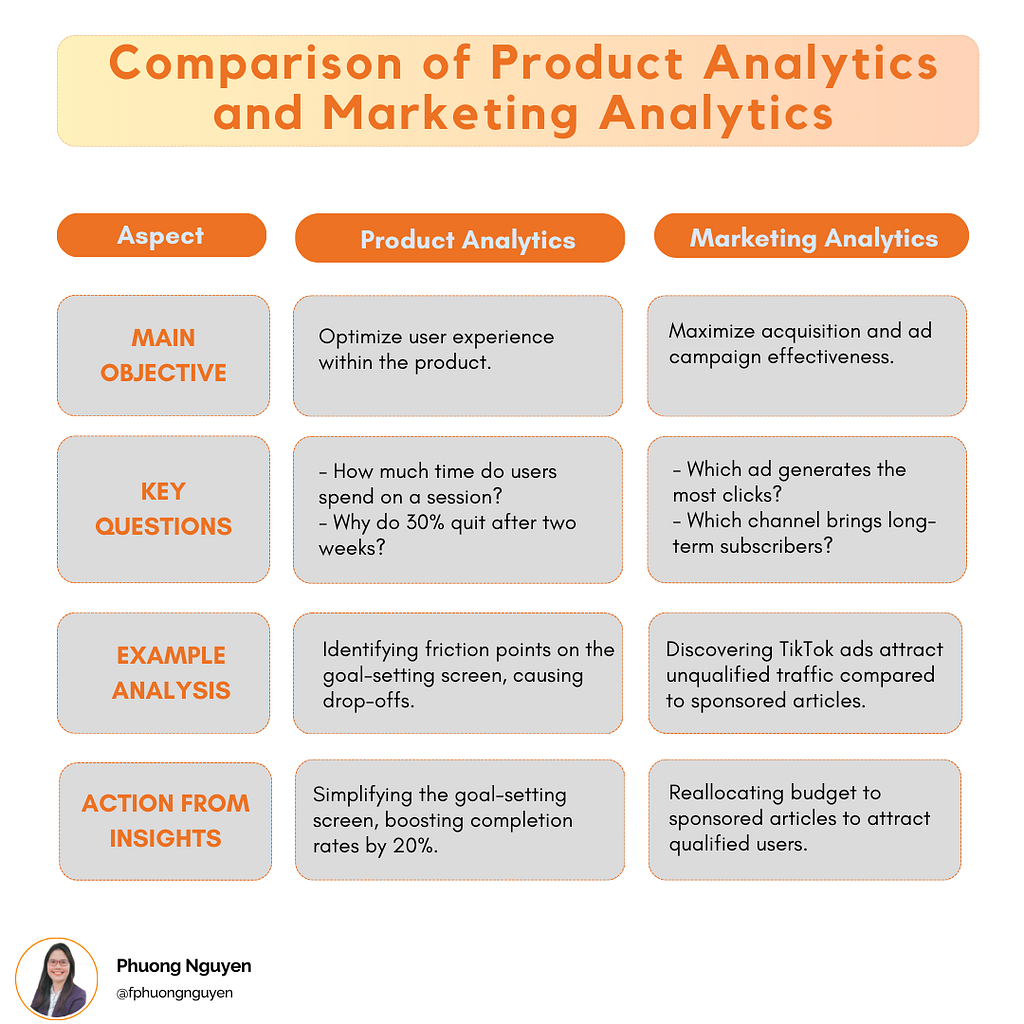

Data Analysts face confusion over the differences between Product Analytics and Marketing Analytics. Product Analytics improves user experience, while Marketing Analytics focuses on acquiring new users.

Independents press for urgent action on deepfakes as the AEC warns of foreign interference in the Australian election. Pocock and Chaney call for truth-in-political-advertising reform.

AI tools are transforming daily lives, helping with organization and efficiency at work. Share your experiences with AI adoption in work or personal life.

Keir Starmer aims to boost AI use in the public sector to drive significant change, with plans for AI growth zones such as Culham, Oxfordshire. The environmental impact of expanding AI use is a key concern raised by the Guardian's Helena Horton.