Amazon Comprehend offers pre-trained and custom APIs for natural-language processing. AWS has developed a pre-labeling tool that automatically annotates documents using existing tabular entity data, reducing the manual work needed to train accurate custom entity recognition models.

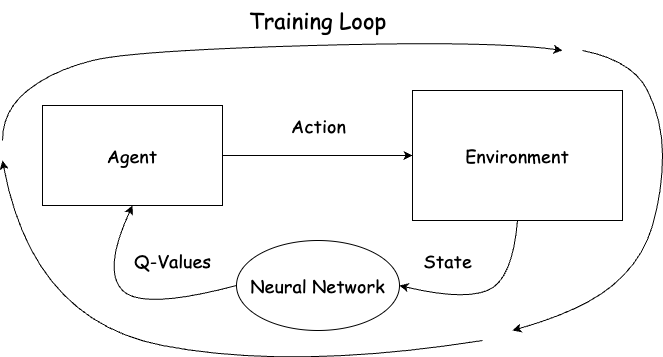

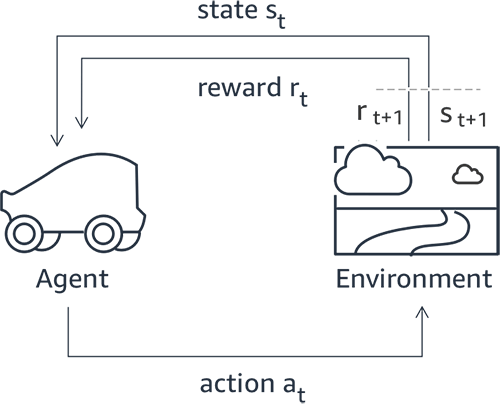

Dive into the world of artificial intelligence: build a deep reinforcement learning gym from scratch. Gain hands-on experience and develop your own gym to train an agent to solve a simple problem, setting the foundation for more complex environments and systems.
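To make the idea of a "gym" concrete, here is a minimal sketch of what such an environment might look like. It is not taken from the article: the class name `CorridorEnv`, the reward values, and the helper `run_episode` are all hypothetical, and the interface is only loosely modeled on the familiar `reset`/`step` convention.

```python
class CorridorEnv:
    """Hypothetical toy environment: walk right along a corridor to reach the goal."""

    def __init__(self, length=5):
        self.length = length
        self.position = 0

    def reset(self):
        # Start every episode at the left end of the corridor.
        self.position = 0
        return self.position

    def step(self, action):
        # Assumed action encoding: 0 = move left, 1 = move right.
        if action not in (0, 1):
            raise ValueError("action must be 0 (left) or 1 (right)")
        move = 1 if action == 1 else -1
        # Clamp the position so the agent cannot leave the corridor.
        self.position = min(max(self.position + move, 0), self.length - 1)
        done = self.position == self.length - 1
        # Assumed reward scheme: small step penalty, bonus on reaching the goal.
        reward = 1.0 if done else -0.1
        return self.position, reward, done


def run_episode(env, policy, max_steps=100):
    """Run one episode with a policy (a function from observation to action)."""
    observation = env.reset()
    total_reward = 0.0
    for _ in range(max_steps):
        observation, reward, done = env.step(policy(observation))
        total_reward += reward
        if done:
            break
    return total_reward
```

A trained agent would replace the hand-written policy; for example, `run_episode(CorridorEnv(), lambda obs: 1)` runs a fixed "always move right" policy.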

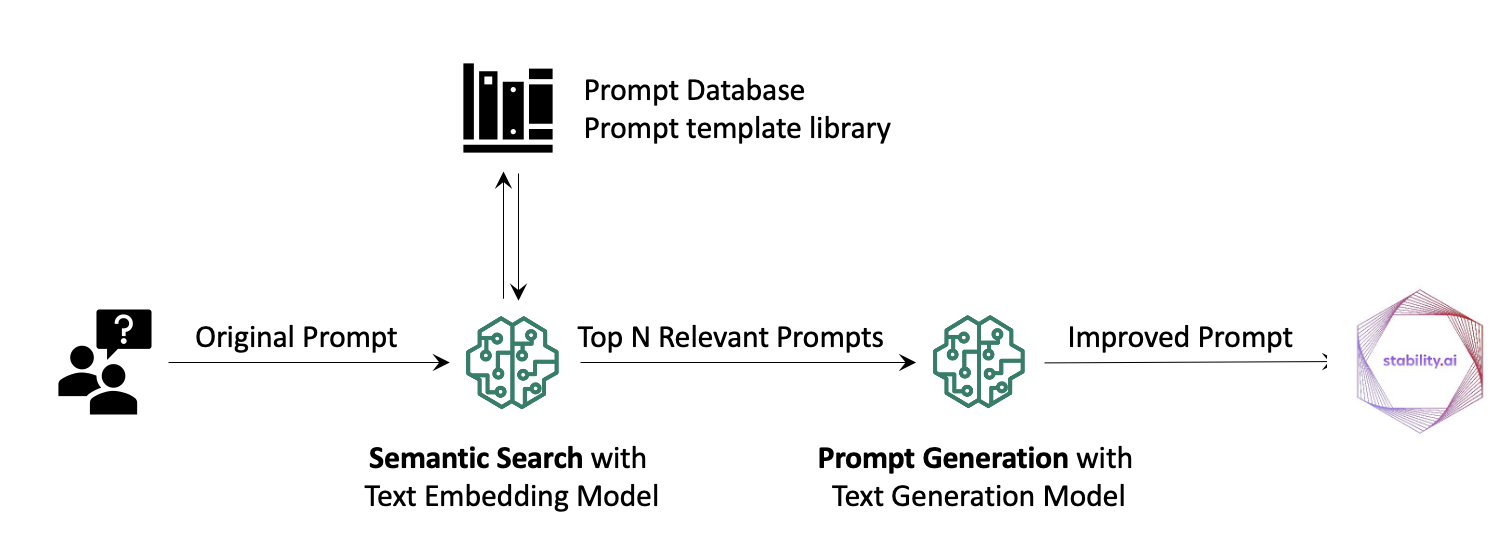

Text-to-image generation is a rapidly growing field of AI, with Stable Diffusion allowing users to create high-quality images in seconds. The use of Retrieval Augmented Generation (RAG) enhances prompts for Stable Diffusion models, enabling users to create their own AI assistant for prompt generation.

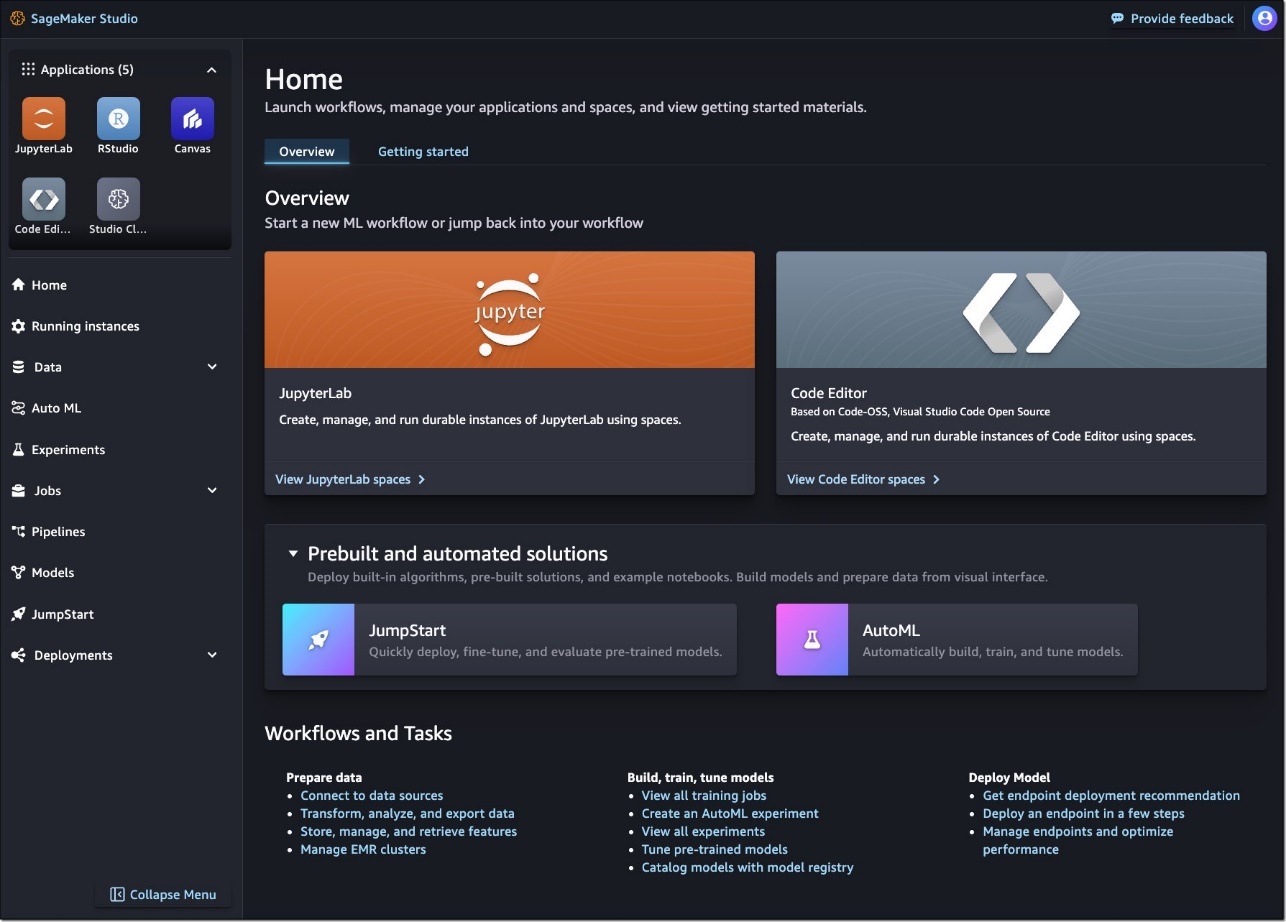

Amazon SageMaker Studio now offers a fully managed Code Editor based on Code-OSS, along with JupyterLab and RStudio, allowing ML developers to customize and scale their IDEs using flexible workspaces called Spaces. These Spaces provide persistent storage and runtime configurations, improving workflow efficiency and allowing for seamless integration of generative AI tools.

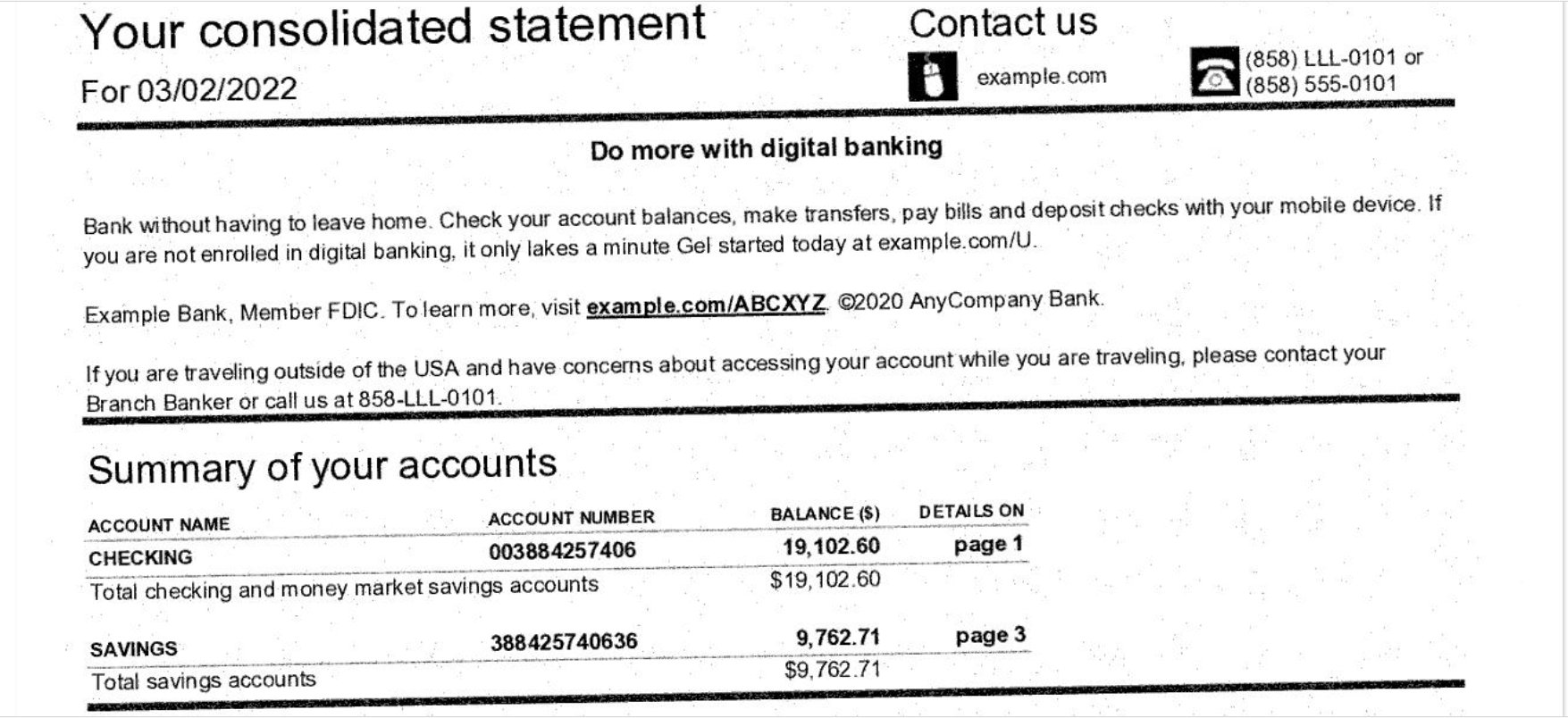

The US Federal Trade Commission warns against QR code scams that can take control of smartphones, make fraudulent charges, or obtain personal information. Scammers are targeting QR codes on parking lot kiosks, leading to look-alike sites that funnel funds to fraudulent accounts.

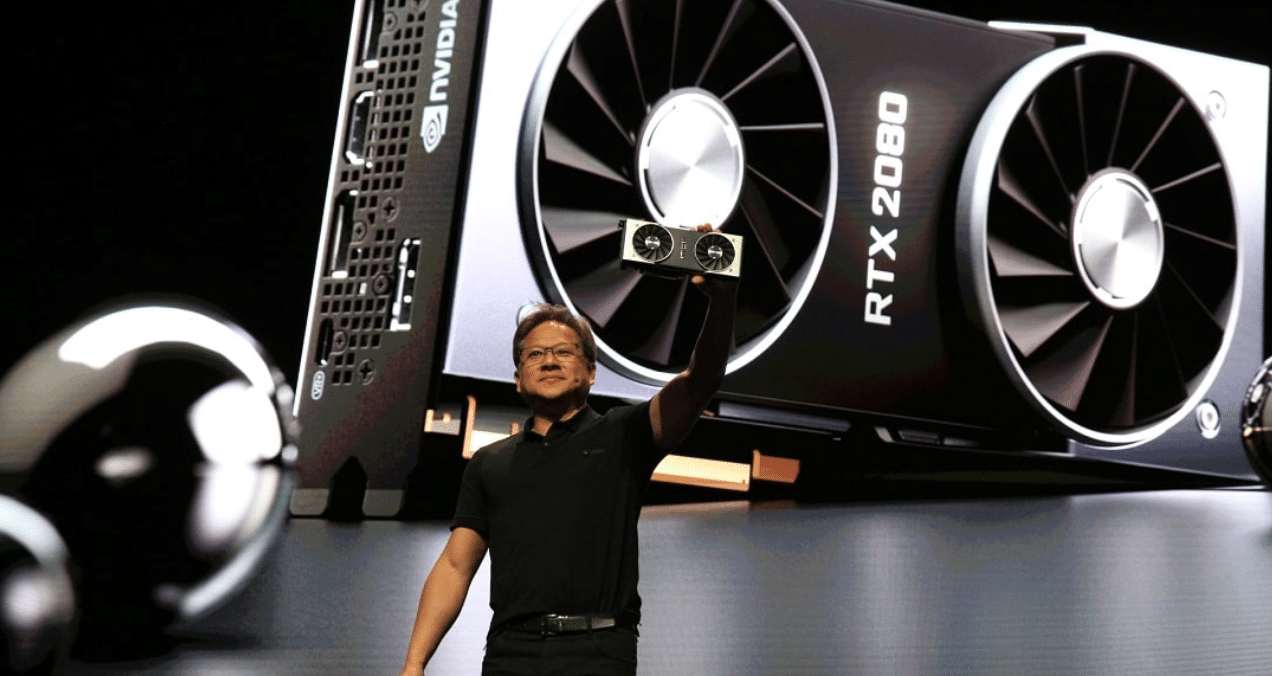

NVIDIA celebrates milestone with 500 RTX games and applications, revolutionizing gaming graphics and performance. Ray tracing and DLSS technologies have transformed visual fidelity and boosted performance in titles like Cyberpunk 2077 and Minecraft RTX.

GeForce NOW adds 17 new games, including The Day Before and Avatar: Frontiers of Pandora, with over 500 games now supporting RTX ON. Ultimate members can experience cinematic ray tracing and stream at up to 4K resolution, while Priority members can build and survive at 1080p and 60fps.

Generative AI and large language models dominated enterprise trends this year, with companies like Amdocs, Dropbox, and SAP building customized applications using RAG and LLMs. Open-source pretrained models are set to revolutionize businesses' operational strategies, while off-the-shelf AI and microservices make it easier for developers to create complex applications.

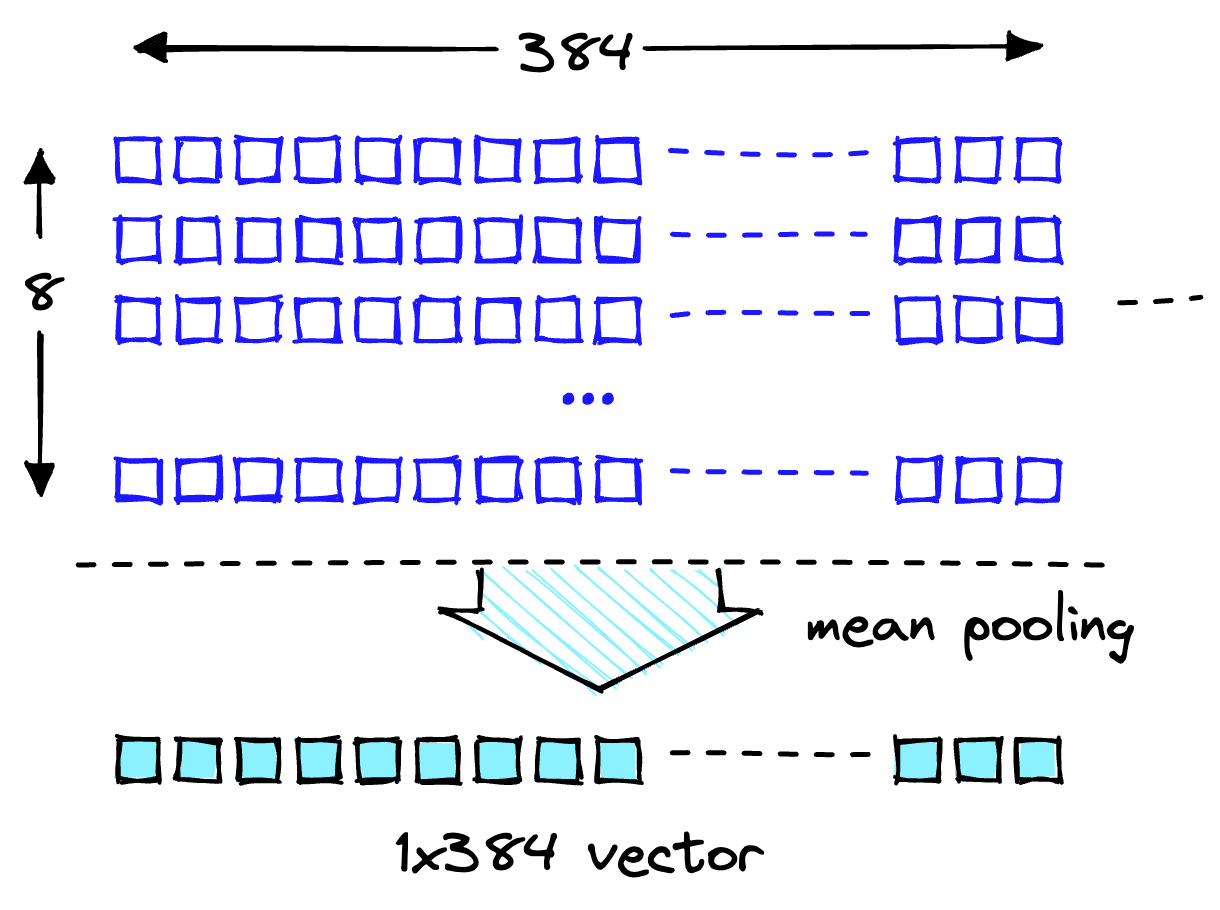

LLMs like Llama 2, Flan T5, and Bloom are essential for conversational AI use cases, but updating their knowledge requires retraining, which is time-consuming and expensive. However, with Retrieval Augmented Generation (RAG) using Amazon SageMaker JumpStart and the Pinecone vector database, LLMs can be deployed and kept up to date with relevant information, which helps prevent AI hallucination.

Vodafone is transforming into a TechCo by 2025, with plans to have 50% of its workforce involved in software development and deliver 60% of digital services in-house. To support this transition, Vodafone has partnered with Accenture and AWS to build a cloud platform and engaged in an AWS DeepRacer challenge to enhance their machine learning skills.

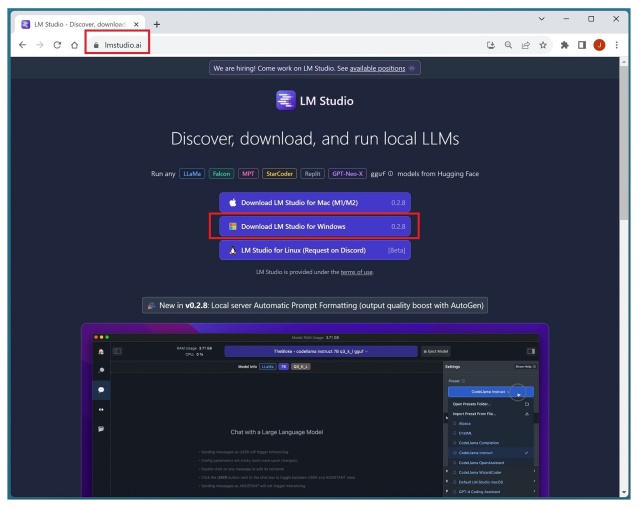

LM Studio is a tool for running large language models like GPT-x, LLaMA-x, and Orca-x on a local machine, offering a clean and intuitive UI for exploring models and conducting reasoning tasks. However, its creator and potential connections with other companies remain unclear.

This article explores the importance of classical computation in the context of artificial intelligence, highlighting its provable correctness, strong generalization, and interpretability compared to the limitations of deep neural networks. It argues that developing AI systems with these classical computation skills is crucial for building generally-intelligent agents.

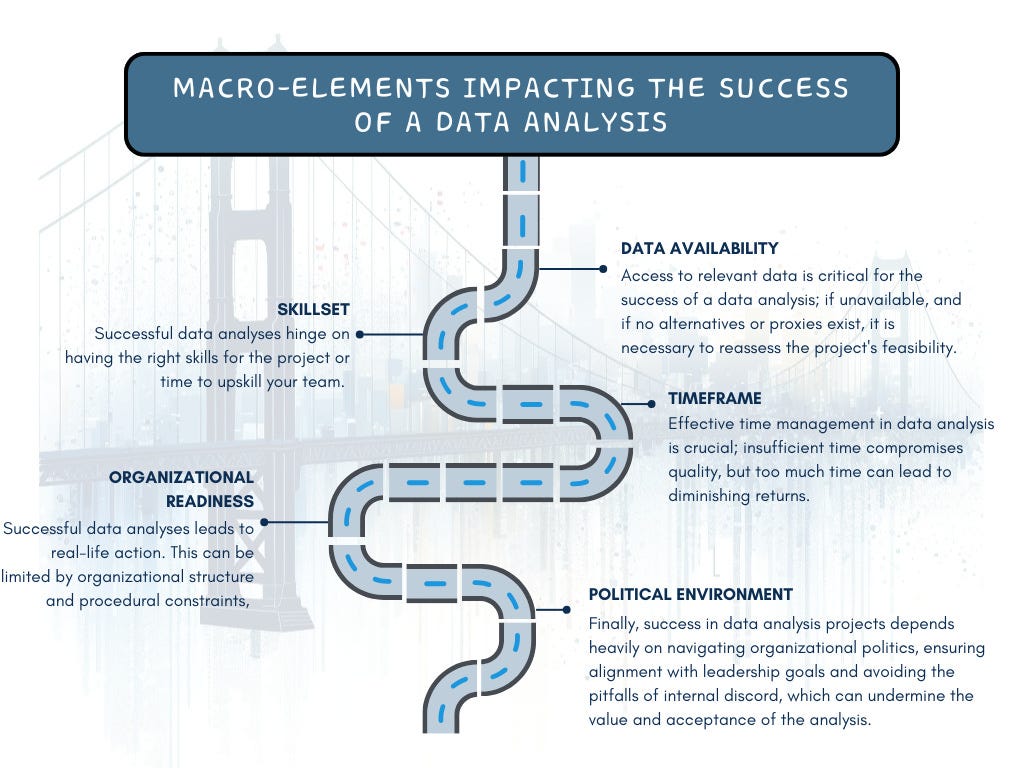

Data projects often fail to deliver real-life impact due to macro-elements such as data availability, skillset, timeframe, organizational readiness, and political environment. The availability and accessibility of relevant data are fundamental, and if data is unattainable, the feasibility of the project should be reconsidered.

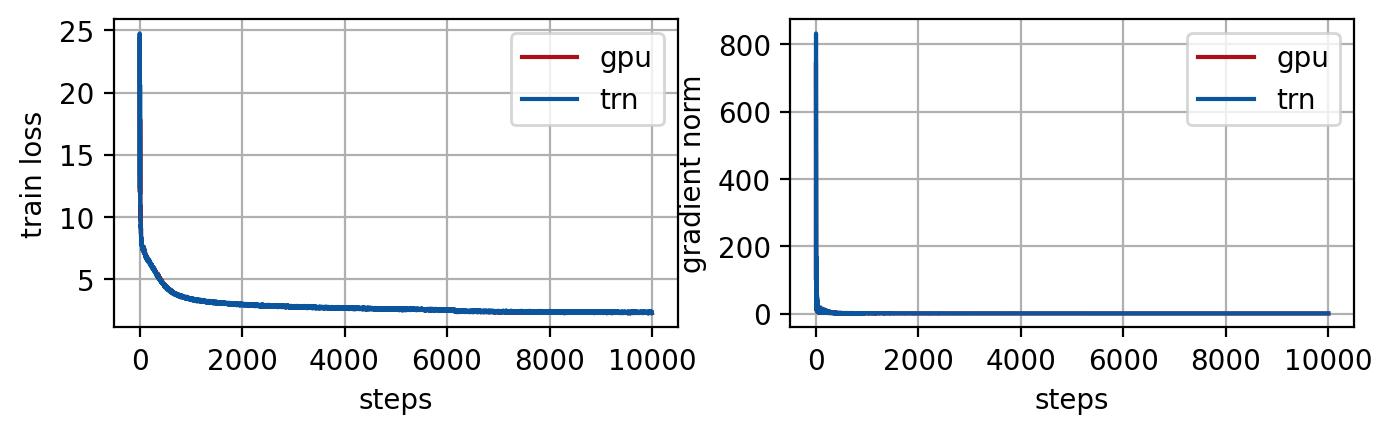

Large language models (LLMs) like GPT NeoX and Pythia are gaining popularity, with billions of parameters and impressive performance. Training these models on AWS Trainium is cost-effective and efficient, thanks to optimizations like rotary positional embedding (RoPE) and partial rotation techniques.
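As a rough illustration of what partial rotation means, the NumPy sketch below applies a rotary embedding to only the first `rotary_dim` features of each vector and passes the rest through unchanged. It is not the Trainium implementation: the function name `partial_rope`, the half-split pairing of features, and the default `base` of 10000 are assumptions made for this example.

```python
import numpy as np


def partial_rope(x, rotary_dim, base=10000.0):
    """Rotate the first `rotary_dim` features of x; leave the rest untouched.

    x has shape (seq_len, head_dim). Feature i is paired with feature
    i + rotary_dim // 2 (an assumed half-split pairing convention).
    """
    seq_len, head_dim = x.shape
    if rotary_dim % 2 != 0 or rotary_dim > head_dim:
        raise ValueError("rotary_dim must be even and at most head_dim")
    half = rotary_dim // 2
    # One rotation frequency per feature pair, decaying geometrically.
    inv_freq = base ** (-np.arange(half) / half)
    # Rotation angle = token position * frequency, shape (seq_len, half).
    angles = np.arange(seq_len)[:, None] * inv_freq[None, :]
    cos, sin = np.cos(angles), np.sin(angles)
    x1, x2 = x[:, :half], x[:, half:rotary_dim]
    # Standard 2D rotation applied to each (x1, x2) pair.
    rotated = np.concatenate([x1 * cos - x2 * sin, x1 * sin + x2 * cos], axis=1)
    return np.concatenate([rotated, x[:, rotary_dim:]], axis=1)
```

Because each pair is rotated rather than scaled, vector norms are preserved, and the token at position 0 is left unchanged.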

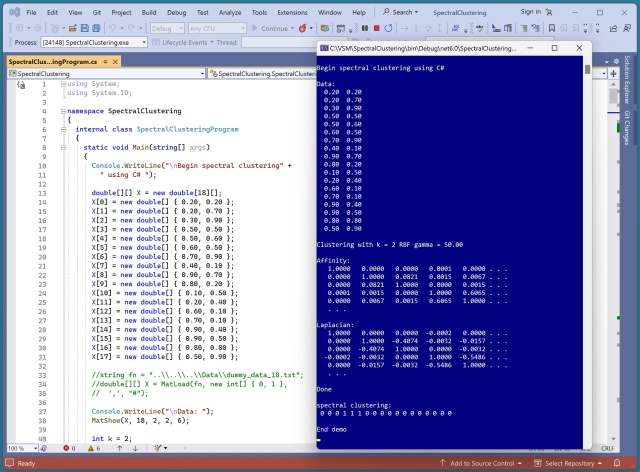

Spectral clustering is a complex machine learning technique that uncovers patterns in data. Implementing it involves computing affinity and Laplacian matrices, eigenvector embeddings, and performing k-means clustering.
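The steps listed above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the article's implementation: it assumes a Gaussian (RBF) affinity with a hypothetical `sigma` parameter, the symmetric normalized Laplacian, and a simple k-means with farthest-point initialization. The function names `kmeans` and `spectral_clustering` are chosen for this example.

```python
import numpy as np


def kmeans(points, k, n_iter=50):
    """Plain Lloyd's k-means with deterministic farthest-point initialization."""
    centers = [points[0]]
    for _ in range(1, k):
        # Pick the point farthest from all centers chosen so far.
        dist = np.min([np.sum((points - c) ** 2, axis=1) for c in centers], axis=0)
        centers.append(points[np.argmax(dist)])
    centers = np.array(centers, dtype=float)
    labels = np.zeros(len(points), dtype=int)
    for _ in range(n_iter):
        sq_dist = ((points[:, None, :] - centers[None, :, :]) ** 2).sum(axis=-1)
        labels = np.argmin(sq_dist, axis=1)
        for j in range(k):
            if np.any(labels == j):
                centers[j] = points[labels == j].mean(axis=0)
    return labels


def spectral_clustering(X, k, sigma=1.0):
    # 1. Affinity matrix: Gaussian kernel on pairwise squared distances.
    sq_dist = np.sum((X[:, None, :] - X[None, :, :]) ** 2, axis=-1)
    W = np.exp(-sq_dist / (2.0 * sigma ** 2))
    np.fill_diagonal(W, 0.0)
    # 2. Symmetric normalized Laplacian: L = I - D^{-1/2} W D^{-1/2}.
    d_inv_sqrt = 1.0 / np.sqrt(W.sum(axis=1))
    L = np.eye(len(X)) - d_inv_sqrt[:, None] * W * d_inv_sqrt[None, :]
    # 3. Embedding: eigenvectors of the k smallest eigenvalues
    #    (eigh returns eigenvalues in ascending order).
    _, eigvecs = np.linalg.eigh(L)
    U = eigvecs[:, :k]
    U = U / np.linalg.norm(U, axis=1, keepdims=True)
    # 4. Cluster the rows of the embedding with k-means.
    return kmeans(U, k)
```

For example, calling `spectral_clustering(X, k=2)` on two well-separated groups of points should assign each group its own label. In practice, the choice of affinity and of `sigma` strongly affects the result.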