Mastering Sensor Fusion: analyzing obstacle detection on KITTI data using color images, with a deep dive into the YOLO-World and YOLOv8 object detectors for KITTI dataset analysis.
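Analyzing a detector on KITTI usually means comparing its boxes against the dataset's ground-truth labels. The following is a minimal sketch under stated assumptions, not the article's code. It assumes the standard KITTI object label format (object type in field 0, 2D box as left, top, right, bottom in fields 4 to 7), parses one label line, and scores a detection by intersection-over-union (IoU). `Box2D`, `parse_kitti_label_line`, and `iou` are hypothetical helpers, and the detection is a hard-coded box rather than a real YOLO-World or YOLOv8 call.

```python
from dataclasses import dataclass


@dataclass
class Box2D:
    """Axis-aligned 2D box in pixel coordinates."""
    label: str
    left: float
    top: float
    right: float
    bottom: float


def parse_kitti_label_line(line: str) -> Box2D:
    """Parse one line of a KITTI object label file.

    Field 0 is the object type and fields 4-7 are the 2D box
    (left, top, right, bottom) in pixels; truncation, occlusion,
    and the 3D fields are ignored in this sketch.
    """
    fields = line.split()
    left, top, right, bottom = map(float, fields[4:8])
    return Box2D(fields[0], left, top, right, bottom)


def iou(a: Box2D, b: Box2D) -> float:
    """Intersection-over-union of two axis-aligned boxes (0.0 if disjoint)."""
    inter_w = max(0.0, min(a.right, b.right) - max(a.left, b.left))
    inter_h = max(0.0, min(a.bottom, b.bottom) - max(a.top, b.top))
    inter = inter_w * inter_h
    area_a = (a.right - a.left) * (a.bottom - a.top)
    area_b = (b.right - b.left) * (b.bottom - b.top)
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


if __name__ == "__main__":
    # Illustrative label line in KITTI's format.
    gt = parse_kitti_label_line(
        "Pedestrian 0.00 0 -0.20 712.40 143.00 810.73 307.92 1.89 0.48 1.20 1.84 1.47 8.41 0.01"
    )
    # Hypothetical detector output; in practice this would come from YOLO-World or YOLOv8.
    detection = Box2D("person", 715.0, 150.0, 805.0, 300.0)
    print(f"IoU = {iou(gt, detection):.3f}")
```

Two practical details: COCO-trained detectors emit class names such as "person" and "car", which must be mapped to KITTI's "Pedestrian" and "Car", and KITTI's 2D benchmark requires an overlap of 0.7 for cars and 0.5 for pedestrians and cyclists before a detection counts as correct.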

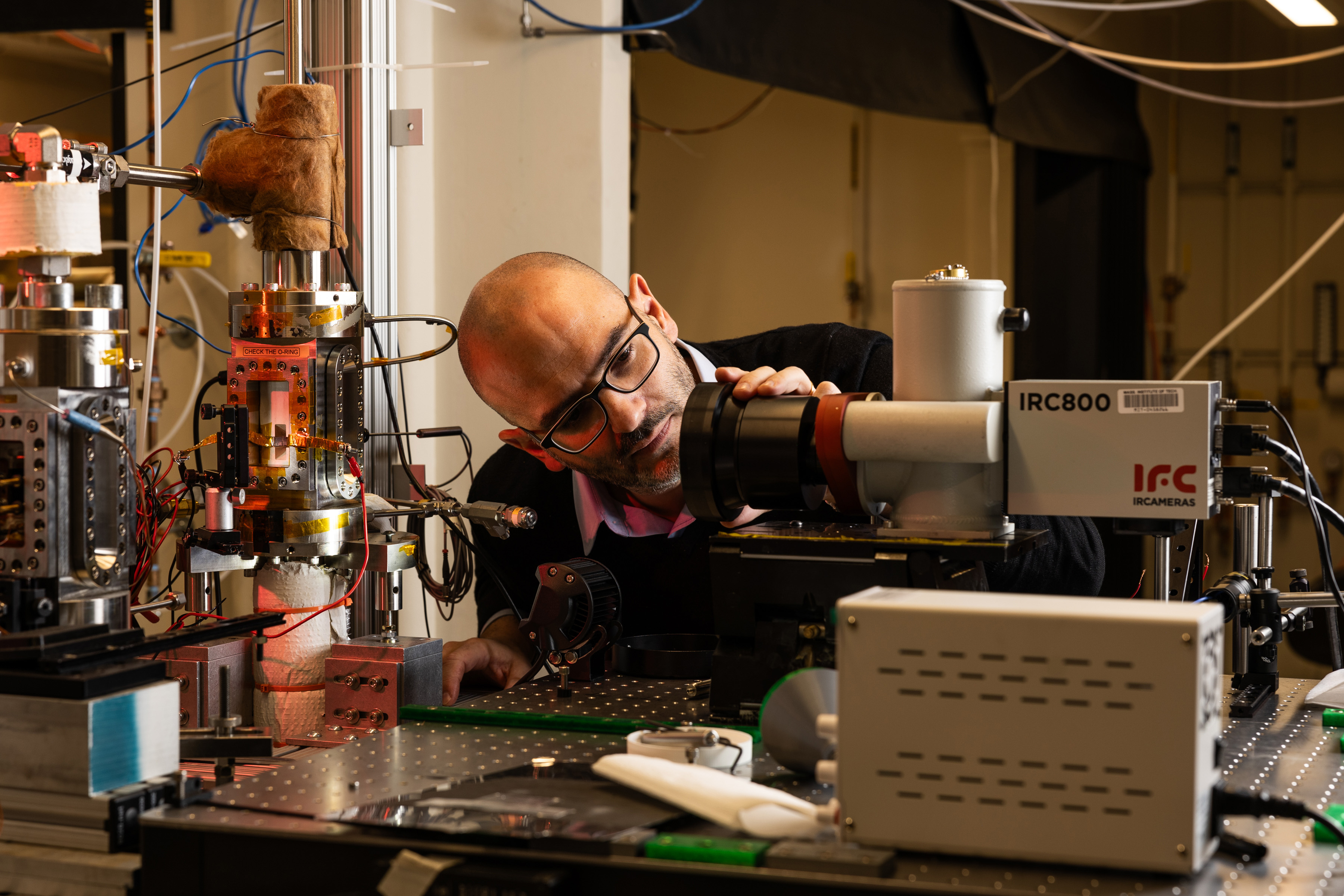

Matteo Bucci's research on boiling, a process crucial to power plants, electronics cooling, and more, could lead to breakthroughs in energy production and help prevent nuclear accidents. His innovative approach to studying boiling phenomena has the potential to transform multiple industries.

MIT researchers have developed a technique that uses large language models to accurately predict antibody structures, helping identify potential treatments for infectious diseases such as SARS-CoV-2. This breakthrough could save drug companies money by ensuring the right antibodies are chosen for clinical trials, and it may also help in studying super responders to diseases like HIV.

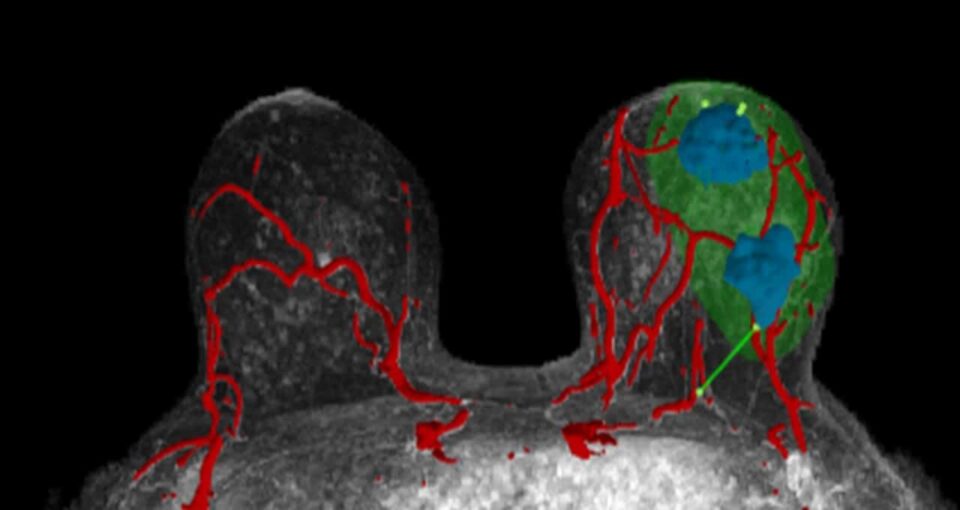

NVIDIA's AI and accelerated computing are transforming industries worldwide, from aiding surgeons with 3D models to cleaning up oceans with AI-driven boats. These innovations are reshaping healthcare, energy efficiency, and environmental conservation, and are driving technological advancement in Africa.

Prof Geoffrey Hinton warns that AI could surpass human intelligence, raising fears for humanity's future. Why pursue something "very scary"?

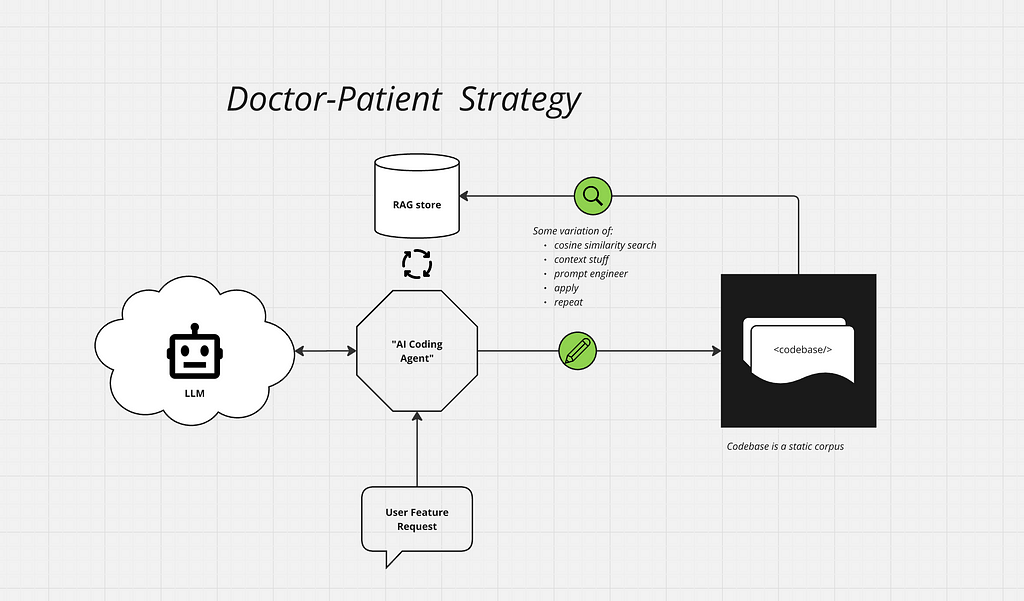

Reflective generative AI tools such as GitHub Copilot and Devin.ai automate software development, with the aim of building autonomous development platforms. The Doctor-Patient strategy in GenAI tools treats codebases as patients, an approach presented as transforming how software automation is done.

Jeff Koons, the world's most expensive living artist, rejects using AI in his work despite its growing popularity in the art world. His iconic pieces, such as balloon dogs and stainless steel rabbits, made with his characteristically hands-off approach, are on display at the Alhambra in Granada, and he sees his art as intertwined with biology.

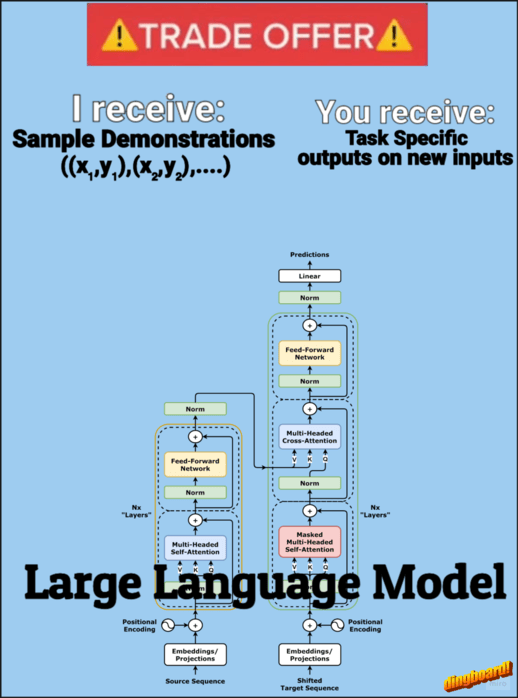

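Transformers can learn from examples supplied in the prompt, a capability known as in-context learning (ICL) that underlies few-shot prompting. Softmax attention with an inverse temperature parameter can behave like a nearest-neighbor method: as the inverse temperature grows, the attention weight concentrates on the example most similar to the query.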
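A toy illustration of the nearest-neighbor claim, not the article's model. It assumes a single query, in-context examples stored as keys (inputs) and values (labels), and scores given by negative squared distance; for keys of equal norm this ranks keys the same way dot-product attention does. `softmax_attention` and the inverse temperature name `beta` are hypothetical. At `beta = 0` every example gets equal weight, and as `beta` grows the output approaches the label of the closest example.

```python
import numpy as np


def softmax_attention(query, keys, values, beta):
    """Single-query softmax attention with inverse temperature beta.

    Scores are negative squared Euclidean distances between the query and
    each key, so larger beta pushes the weights toward the nearest key.
    """
    scores = -beta * np.sum((keys - query) ** 2, axis=1)
    scores = scores - scores.max()  # subtract max for numerical stability
    weights = np.exp(scores)
    weights /= weights.sum()
    return weights @ values, weights


if __name__ == "__main__":
    keys = np.array([[0.0], [1.0], [5.0]])    # in-context example inputs
    values = np.array([10.0, 20.0, 60.0])     # their labels
    query = np.array([0.9])                   # new input to label
    for beta in (0.0, 1.0, 10.0, 100.0):
        output, weights = softmax_attention(query, keys, values, beta)
        print(beta, round(float(output), 3), np.round(weights, 3))
```

Small `beta` yields an average over all examples, while large `beta` effectively copies the label of the nearest example, which is the nearest-neighbor behavior described above.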

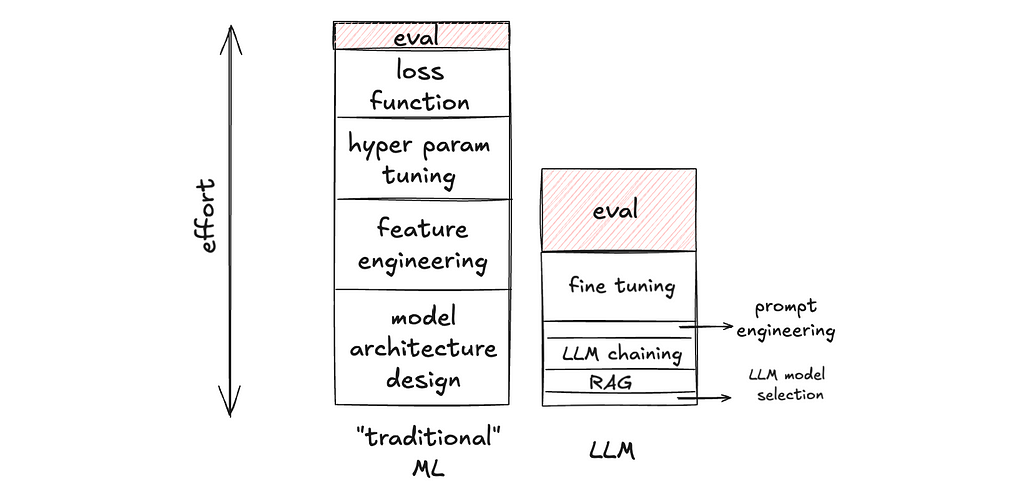

LLMs require a new approach to evaluation, built on three key paradigm shifts: evaluation is now the cake, benchmark the difference, and embrace human triage. Evaluation is crucial in LLM development because developers have fewer degrees of freedom to work with and generative AI outputs are complex.

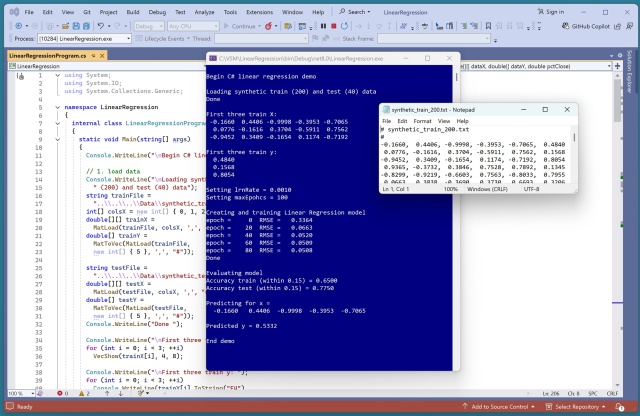

A tech company employee builds a linear regression demo on synthetic data, trained with stochastic gradient descent and with an API design resembling that of the scikit-learn library. The model's predictions reach 77.5% accuracy on the test data, showing a practical application of stochastic gradient descent.
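The summary includes no code, so the following is a minimal sketch of the same idea and not the demo's implementation: a linear regressor trained with plain SGD that follows scikit-learn's estimator conventions (hyperparameters in `__init__`, `fit` returning `self`, learned attributes ending in an underscore). The class name `SGDLinearRegressor`, the learning rate and epoch count, and the 10% tolerance used to define "accuracy" for a regression model are all assumptions, since the source does not say how its 77.5% figure was computed.

```python
import numpy as np


class SGDLinearRegressor:
    """Linear regression trained with stochastic gradient descent (sketch).

    Mimics scikit-learn's estimator style: hyperparameters in __init__,
    fit() returns self, and learned parameters end with an underscore.
    """

    def __init__(self, learning_rate=0.01, n_epochs=100, seed=0):
        self.learning_rate = learning_rate
        self.n_epochs = n_epochs
        self.seed = seed

    def fit(self, X, y):
        rng = np.random.default_rng(self.seed)
        n_samples, n_features = X.shape
        self.coef_ = np.zeros(n_features)
        self.intercept_ = 0.0
        for _ in range(self.n_epochs):
            for i in rng.permutation(n_samples):  # visit samples in random order
                error = X[i] @ self.coef_ + self.intercept_ - y[i]
                # Gradient step on 0.5 * error**2 for one sample
                self.coef_ -= self.learning_rate * error * X[i]
                self.intercept_ -= self.learning_rate * error
        return self

    def predict(self, X):
        return X @ self.coef_ + self.intercept_


def accuracy_within(y_true, y_pred, tolerance=0.10):
    """Fraction of predictions within `tolerance` (relative) of the target.

    The tolerance-based definition is an assumption for illustration.
    """
    return float(np.mean(np.abs(y_pred - y_true) <= tolerance * np.abs(y_true)))


if __name__ == "__main__":
    # Synthetic data: y = X @ w + b + small noise
    rng = np.random.default_rng(1)
    X = rng.uniform(-1, 1, size=(200, 3))
    y = X @ np.array([2.0, -1.0, 0.5]) + 3.0 + rng.normal(0, 0.05, size=200)
    model = SGDLinearRegressor(learning_rate=0.05, n_epochs=50).fit(X[:160], y[:160])
    print("coef:", model.coef_, "intercept:", model.intercept_)
    print("test accuracy:", accuracy_within(y[160:], model.predict(X[160:])))
```

scikit-learn itself ships a production version of this idea as `SGDRegressor` in `sklearn.linear_model`; the hand-rolled class only shows the update rule and the API shape.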

How to implement a resume optimization tool with Python and OpenAI's API to tailor applications to specific jobs, streamlining the process with a four-step workflow and example code.

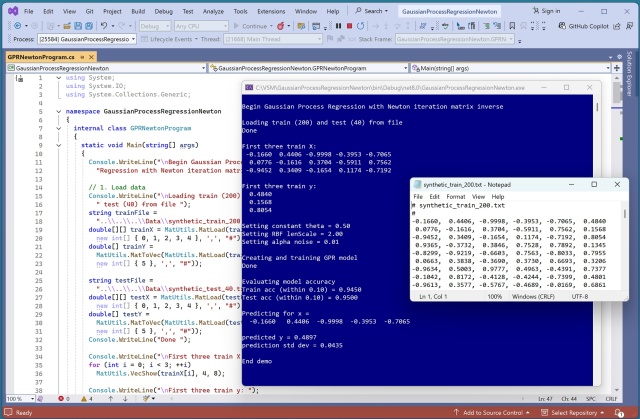

Newton iteration for computing a matrix inverse was successfully used in Gaussian process regression to improve efficiency, accuracy, and robustness. The demo achieved high accuracy in predicting target values for synthetic data with a complex underlying structure.
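The source does not say which Newton variant or kernel the demo used, so this is a sketch under assumptions: the classic Newton-Schulz iteration X ← X(2I − AX) with the scaled-transpose starting guess Aᵀ / (‖A‖₁‖A‖∞), which converges for any nonsingular A, plugged into a zero-mean GP with an RBF kernel. The function names, noise level, and length scale are hypothetical. The GP posterior mean is K*ᵀ(K + σ²I)⁻¹y, and the inverse is where the Newton iteration enters.

```python
import numpy as np


def newton_inverse(A, tol=1e-10, max_iter=100):
    """Approximate A^{-1} with the Newton-Schulz iteration X <- X (2I - A X)."""
    n = A.shape[0]
    identity = np.eye(n)
    # This start makes the eigenvalues of A @ X lie in (0, 1], so the
    # residual I - A X is squared each step and converges to zero.
    X = A.T / (np.linalg.norm(A, 1) * np.linalg.norm(A, np.inf))
    for _ in range(max_iter):
        X = X @ (2 * identity - A @ X)
        if np.linalg.norm(identity - A @ X, np.inf) < tol:
            break
    return X


def rbf_kernel(X1, X2, length_scale=1.0):
    """Squared-exponential kernel between the rows of X1 and X2."""
    sq_dists = (
        np.sum(X1**2, axis=1)[:, None]
        + np.sum(X2**2, axis=1)[None, :]
        - 2 * X1 @ X2.T
    )
    return np.exp(-0.5 * sq_dists / length_scale**2)


def gp_predict(X_train, y_train, X_test, noise=1e-2, length_scale=1.0):
    """Posterior mean of a zero-mean GP: K_*^T (K + noise * I)^{-1} y."""
    K = rbf_kernel(X_train, X_train, length_scale) + noise * np.eye(len(X_train))
    K_inv = newton_inverse(K)
    K_star = rbf_kernel(X_train, X_test, length_scale)
    return K_star.T @ K_inv @ y_train


if __name__ == "__main__":
    X_train = np.linspace(0, 5, 30)[:, None]
    y_train = np.sin(X_train).ravel()
    X_test = np.array([[1.3], [2.7]])
    print(gp_predict(X_train, y_train, X_test), np.sin(X_test).ravel())
```

The iteration converges quadratically once the residual is small, but badly conditioned kernel matrices need more initial iterations; in most libraries a Cholesky solve is the default, and the explicit inverse here only shows where the Newton step fits.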

Thresholding is a key technique for managing model uncertainty in machine learning, allowing for human intervention in complex cases. In the context of fraud detection, thresholding helps balance precision and efficiency by deferring uncertain predictions for human review, fostering trust in the system.
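A minimal sketch of threshold-based deferral for fraud scores, assuming the model outputs a fraud probability per transaction. The two cut-offs (0.2 and 0.8), the `Decision` record, and the `route` and `deferral_stats` helpers are hypothetical. Scores at or above the high threshold are blocked automatically, scores at or below the low threshold are approved, and everything in between goes to a human reviewer.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    transaction_id: str
    fraud_probability: float
    action: str  # "approve", "block", or "human_review"


def route(transaction_id, fraud_probability, low=0.2, high=0.8):
    """Auto-decide confident predictions; defer the uncertain band to a human."""
    if fraud_probability >= high:
        action = "block"
    elif fraud_probability <= low:
        action = "approve"
    else:
        action = "human_review"
    return Decision(transaction_id, fraud_probability, action)


def deferral_stats(probabilities, labels, low=0.2, high=0.8):
    """Precision of automatic blocks and the fraction deferred to humans.

    `labels` are 1 for confirmed fraud and 0 otherwise.
    """
    blocked = [y for p, y in zip(probabilities, labels) if p >= high]
    deferred = sum(1 for p in probabilities if low < p < high)
    precision = sum(blocked) / len(blocked) if blocked else float("nan")
    return precision, deferred / len(probabilities)


if __name__ == "__main__":
    for tx_id, p in [("tx-1", 0.95), ("tx-2", 0.05), ("tx-3", 0.55)]:
        print(route(tx_id, p))
```

Widening the band between the thresholds raises the precision of automatic decisions at the cost of sending more cases to reviewers; `deferral_stats` makes that trade-off measurable on labeled data.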

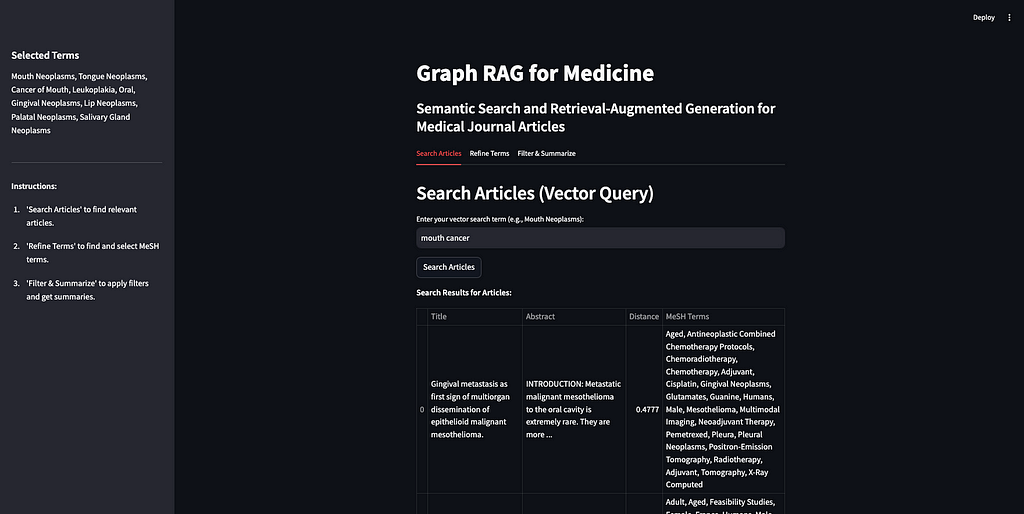

Knowledge graphs and AI combine in a Graph RAG (retrieval-augmented generation) app that enriches LLM responses with contextual data drawn from the graph. Graph RAG is gaining popularity, with Microsoft and Samsung making significant moves in knowledge graph technology.
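A toy sketch of the retrieval half of Graph RAG, not the app described in the article. It assumes a tiny, hypothetical knowledge graph stored as (subject, relation, object) triples, links question text to entities by naive substring matching, expands a fixed number of hops, and formats the retrieved facts into a prompt. The triples and the `build_index`, `retrieve`, and `build_prompt` helpers are invented for illustration, and the actual LLM call is omitted.

```python
from collections import defaultdict

# Hypothetical toy knowledge graph as (subject, relation, object) triples.
TRIPLES = [
    ("Alice", "manages", "Bob"),
    ("Bob", "works_on", "Project X"),
    ("Project X", "uses", "Neo4j"),
    ("Carol", "manages", "Project X"),
]


def build_index(triples):
    """Adjacency list: entity -> facts in which it is the subject or object."""
    index = defaultdict(list)
    for s, r, o in triples:
        index[s].append((s, r, o))
        index[o].append((s, r, o))
    return index


def retrieve(question, index, hops=1):
    """Match entities named in the question, then expand `hops` steps outward."""
    frontier = {e for e in index if e.lower() in question.lower()}
    seen_entities = set(frontier)
    facts = []
    for _ in range(hops):
        next_frontier = set()
        for entity in sorted(frontier):  # sorted for deterministic output
            for fact in index[entity]:
                if fact not in facts:
                    facts.append(fact)
                for neighbor in (fact[0], fact[2]):
                    if neighbor not in seen_entities:
                        seen_entities.add(neighbor)
                        next_frontier.add(neighbor)
        frontier = next_frontier
    return facts


def build_prompt(question, facts):
    """Format retrieved facts as grounding context for an LLM."""
    context = "\n".join(f"- {s} {r.replace('_', ' ')} {o}" for s, r, o in facts)
    return f"Answer using only these facts:\n{context}\n\nQuestion: {question}"


if __name__ == "__main__":
    question = "Who manages Bob?"
    facts = retrieve(question, build_index(TRIPLES), hops=1)
    print(build_prompt(question, facts))
```

Substring matching is the weakest assumption here (it would match "Bob" inside "Bobby"); practical systems typically use entity extraction and a graph database, but the flow of link, expand, and ground the prompt is the same.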

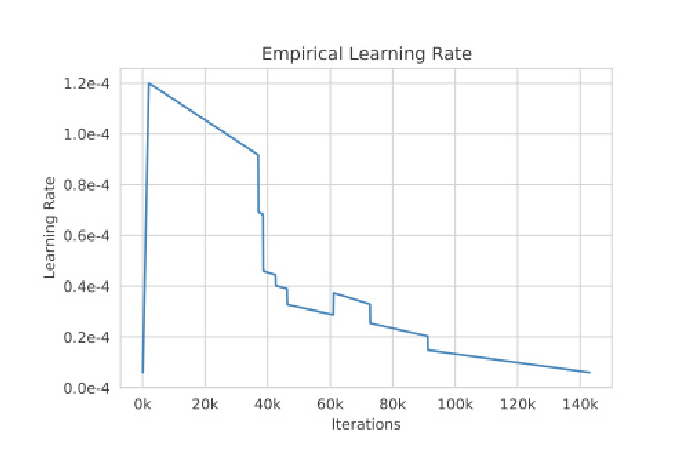

Training large language models (LLMs) from scratch typically involves starting with smaller models and scaling up, addressing the issues that emerge as model size increases. GPT-NeoX-20B and OPT-175B made architectural adjustments to improve training efficiency and performance, illustrating the importance of experimentation and hyperparameter optimization in LLM pre-training.