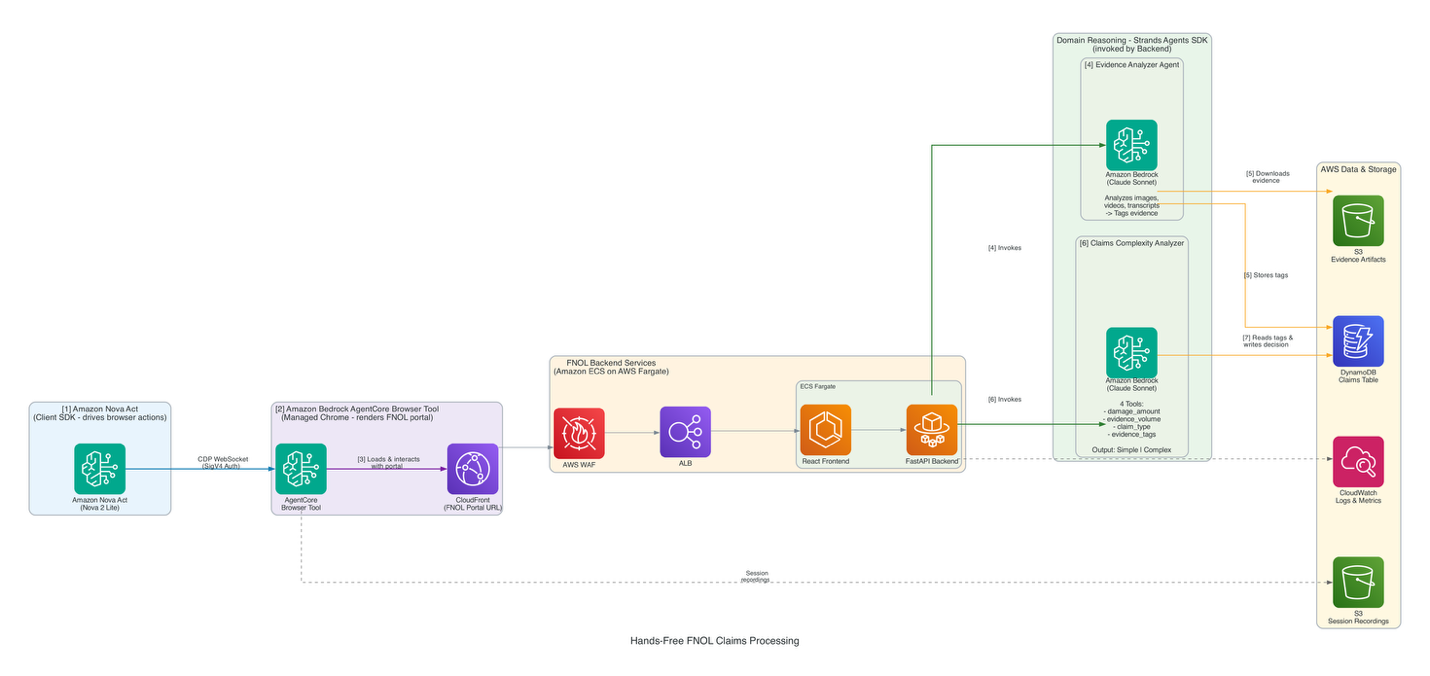

A new hands-free system streamlines first notice of loss (FNOL) intake for insurance adjusters, reducing repetitive tasks and improving efficiency. The system combines the Strands Agents SDK and Amazon Bedrock for faster, more accurate claims processing.

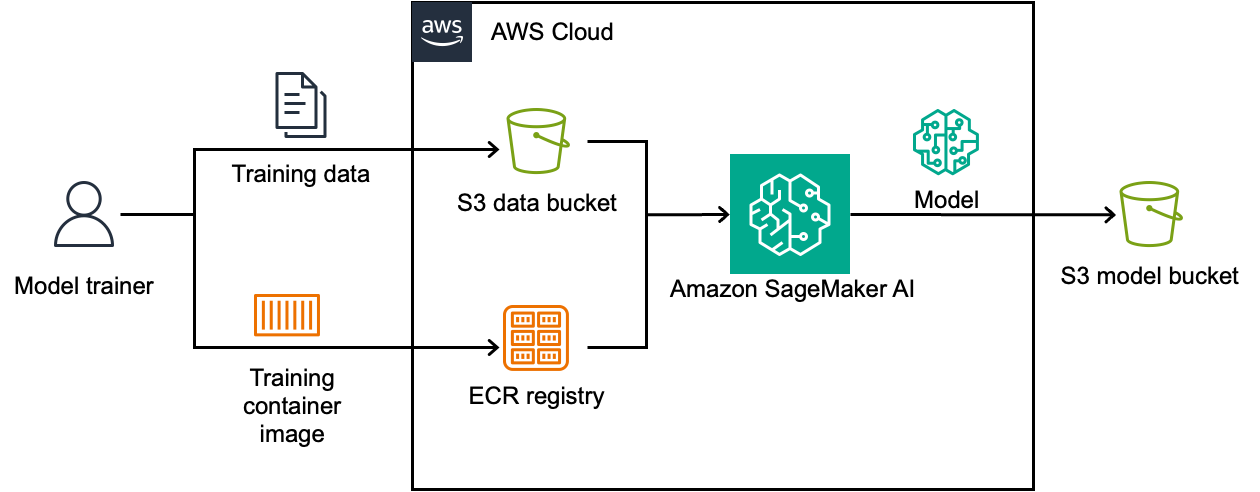

Physical AI is transitioning from research to production, with robots trained in high-fidelity simulations before deployment. Amazon SageMaker AI streamlines compute infrastructure for robot policy reinforcement learning, offering resiliency with SageMaker HyperPod.

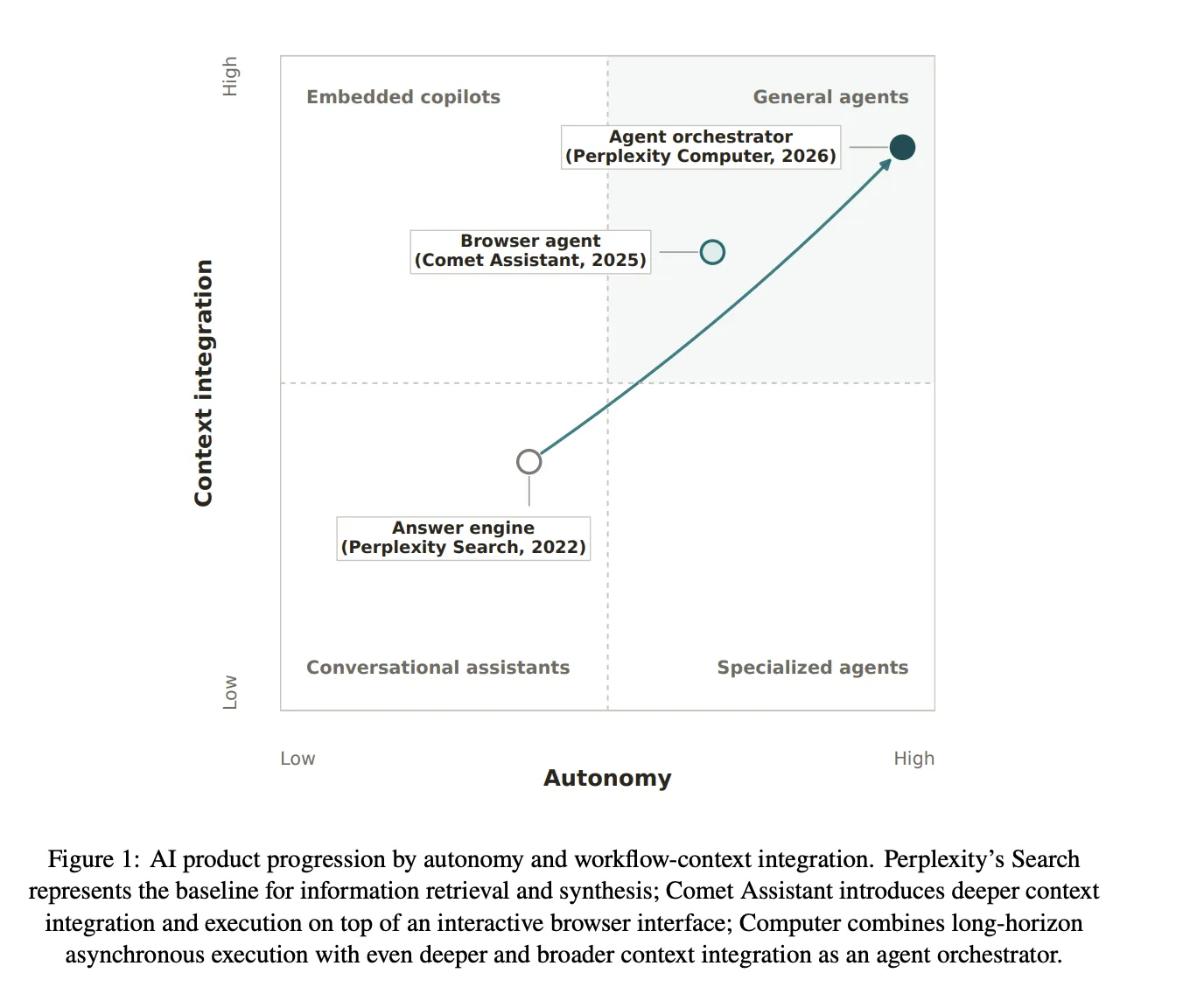

Perplexity and Harvard research shows AI agents reshape knowledge work, increasing efficiency and adoption rates. The study found Computer's autonomous work saves time and enhances user satisfaction compared to Search.

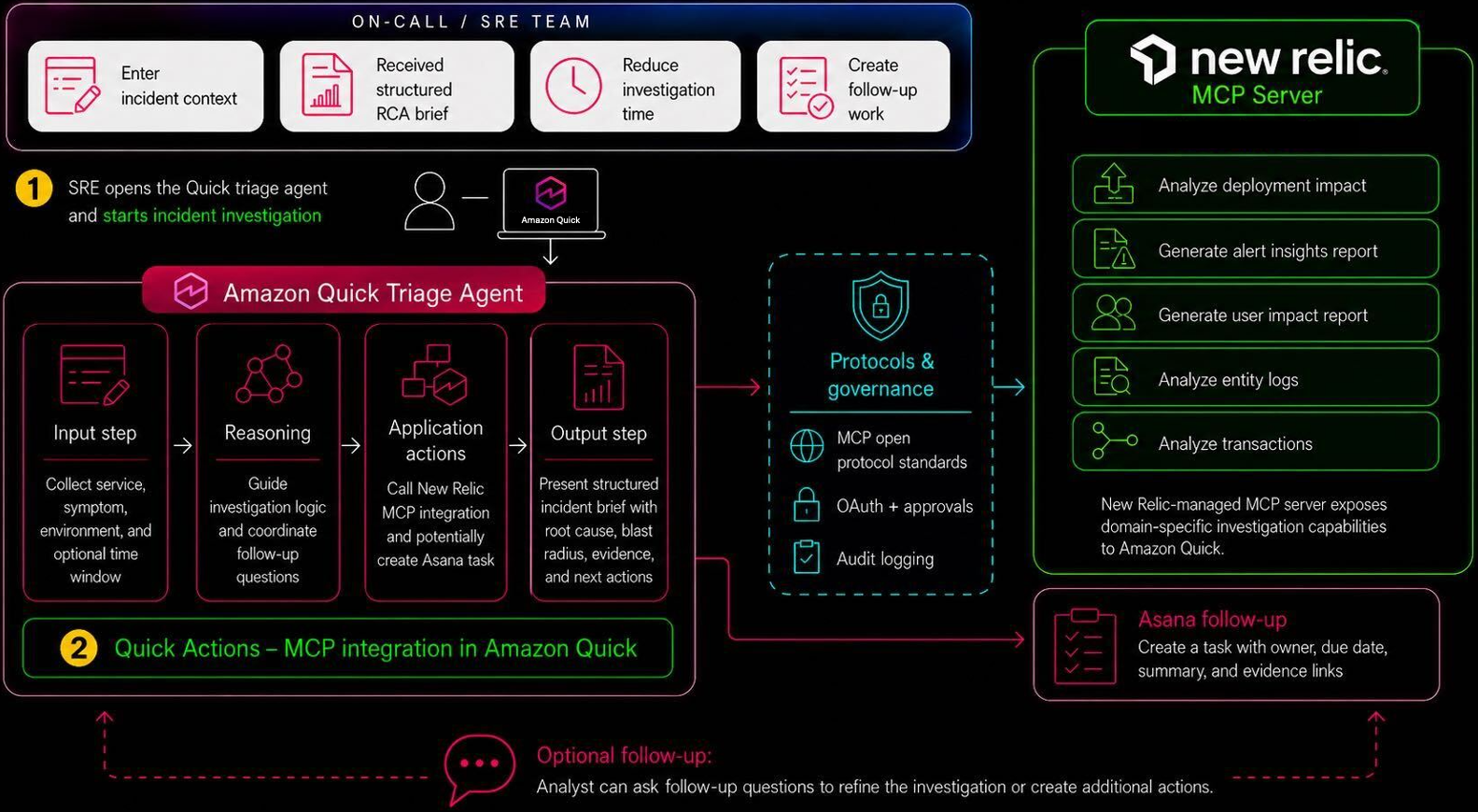

Amazon Quick and New Relic streamline incident triage by creating a custom assistant agent for faster resolution and reduced risk. The agent orchestrates investigation, root cause analysis, and task creation in a single prompt, improving mean time to resolution.

Large language models like ChatGPT are increasingly used for news consumption. An MIT study shows an AI dependency paradox: users become worse at detecting misinformation without AI assistance.

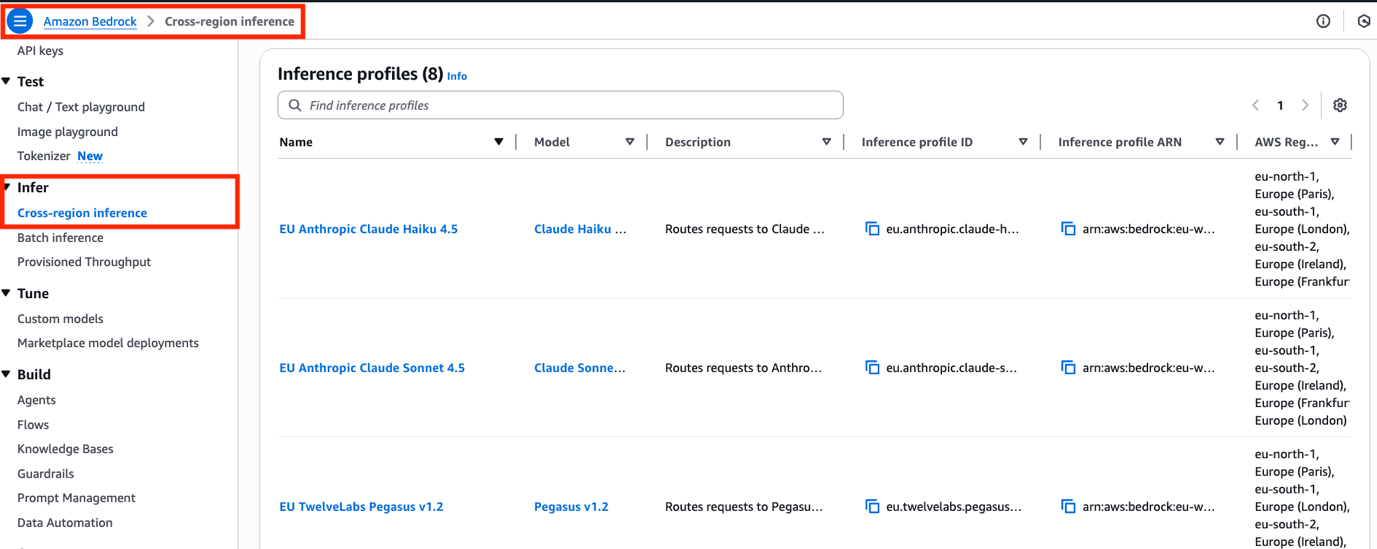

AWS introduces Cross-Region Inference (CRIS) on Amazon Bedrock, allowing customers to route generative AI requests across multiple AWS Regions, ensuring capacity and security. CRIS profiles optimize model throughput, offering global and EU geographic scopes to meet regulatory requirements and enhance application resilience.

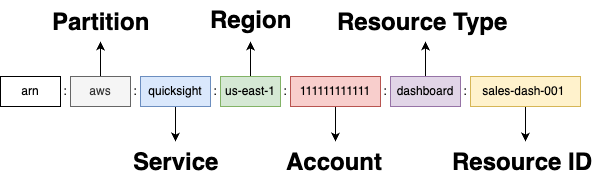

Amazon Quick administrators tackle permission issues involving Amazon Resource Names (ARNs). Understanding ARN structure is crucial for scaling deployments across AWS accounts.
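
As a rough illustration of the ARN structure mentioned above, the sketch below splits an ARN into the general fields AWS documents (`arn:partition:service:region:account-id:resource`). The `parse_arn` helper and the sample ARN are hypothetical, made up for this example; they are not taken from the article.

```python
from typing import NamedTuple


class Arn(NamedTuple):
    partition: str   # e.g. "aws", "aws-cn", "aws-us-gov"
    service: str     # service namespace, e.g. "s3"
    region: str      # may be empty for global resources
    account_id: str  # may be empty for some resource types
    resource: str    # resource type and ID; may itself contain ":" or "/"


def parse_arn(arn: str) -> Arn:
    """Split an ARN into its six colon-separated fields (hypothetical helper)."""
    # Limit the split to 5 so colons inside the resource part are preserved.
    parts = arn.split(":", 5)
    if len(parts) != 6 or parts[0] != "arn":
        raise ValueError(f"not a valid ARN: {arn!r}")
    _, partition, service, region, account_id, resource = parts
    return Arn(partition, service, region, account_id, resource)


# Sample ARN invented for illustration only.
sample = parse_arn("arn:aws:quicksight:us-east-1:123456789012:dashboard/example-id")
print(sample.account_id, sample.resource)
```

Comparing the `account_id` and `region` fields of the ARN in a permission policy against those of the resource actually being accessed is often a quick first check when a cross-account permission fails.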

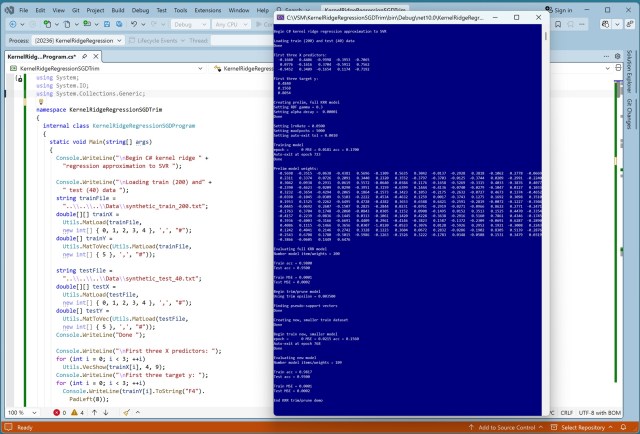

Machine learning regression techniques like Kernel Ridge Regression (KRR) and Support Vector Regression (SVR) are compared for predicting numeric values. A novel approach combining KRR and SVR results in a trimmed model with advantages of both techniques, demonstrated in a C# implementation.

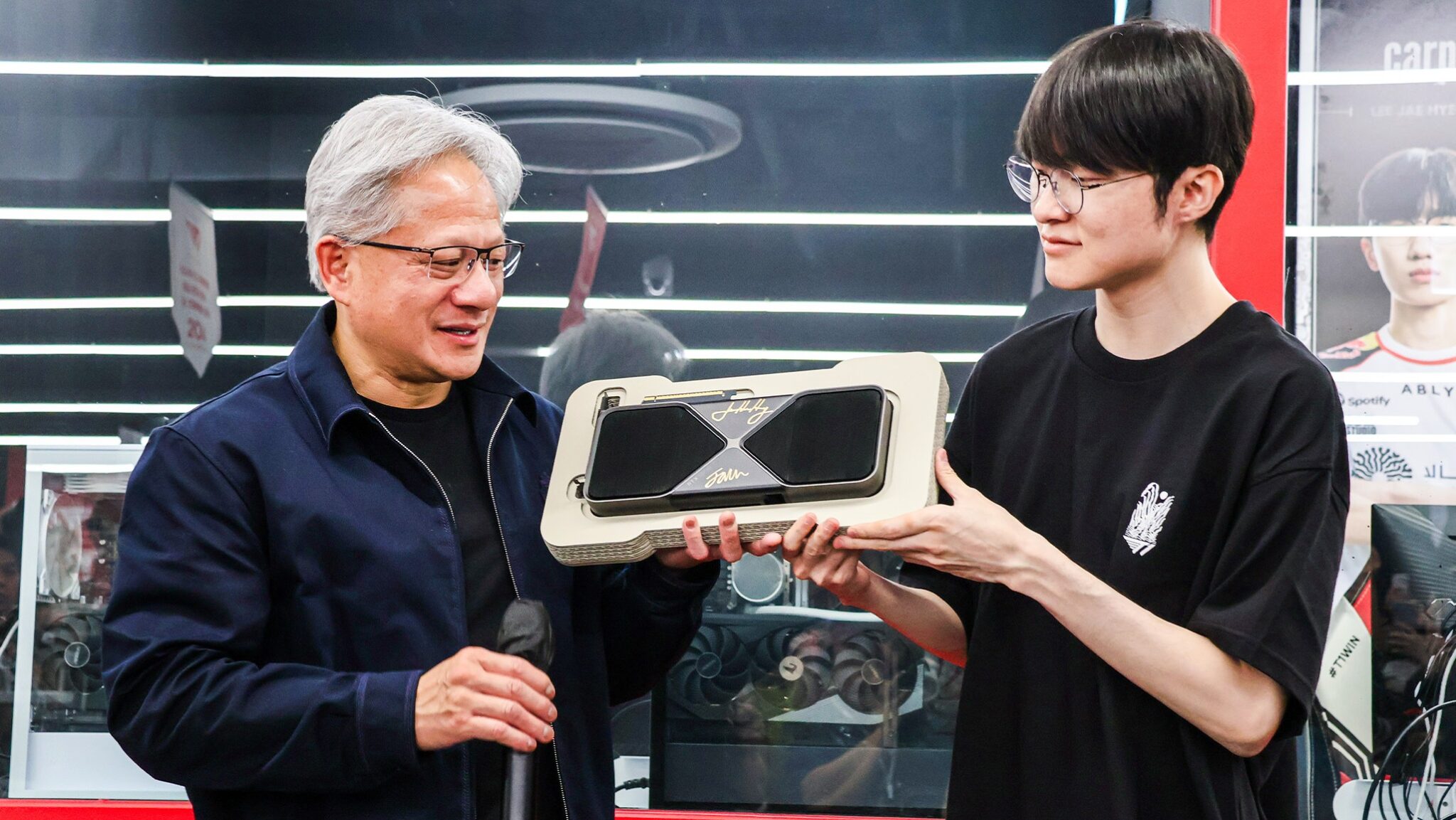

NVIDIA CEO Jensen Huang visits South Korea, praising the nation's AI leadership and gaming community. Partnerships with LG, SK, Hyundai, Naver, and Doosan aim to advance AI infrastructure.

Developers are ditching laptops for Amazon Bedrock AgentCore Runtime, which offers isolated environments where coding agents can run efficiently. Say goodbye to security risks and collisions with a dedicated workspace, a real shell, and seamless integration with tools like GitHub and Jira.

NVIDIA and partners showcase the U.K.'s AI progress at London Tech Week, with increased AI cloud deployments and Isambard-AI powering ambitious research and startups. The U.K. government's Sovereign AI Fund supports homegrown companies like Ineffable Intelligence and NVIDIA Inception startups pushing AI boundaries.

Amazon SageMaker AI now enables ML inference with fully homomorphic encryption (FHE), keeping data encrypted throughout the process. This approach allows for secure cloud-based ML applications in sensitive industries like healthcare, energy, and telecommunications.

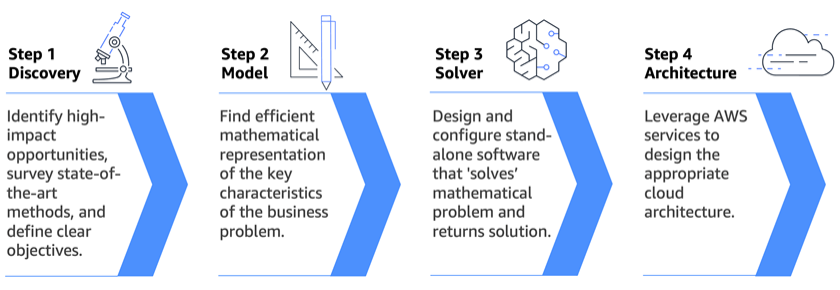

Leading organizations are turning to mathematical optimization to make optimal decisions in complex scenarios. AWS Generative AI Innovation Center offers scientific expertise to solve high-impact problems using AI and optimization, delivering measurable business outcomes.

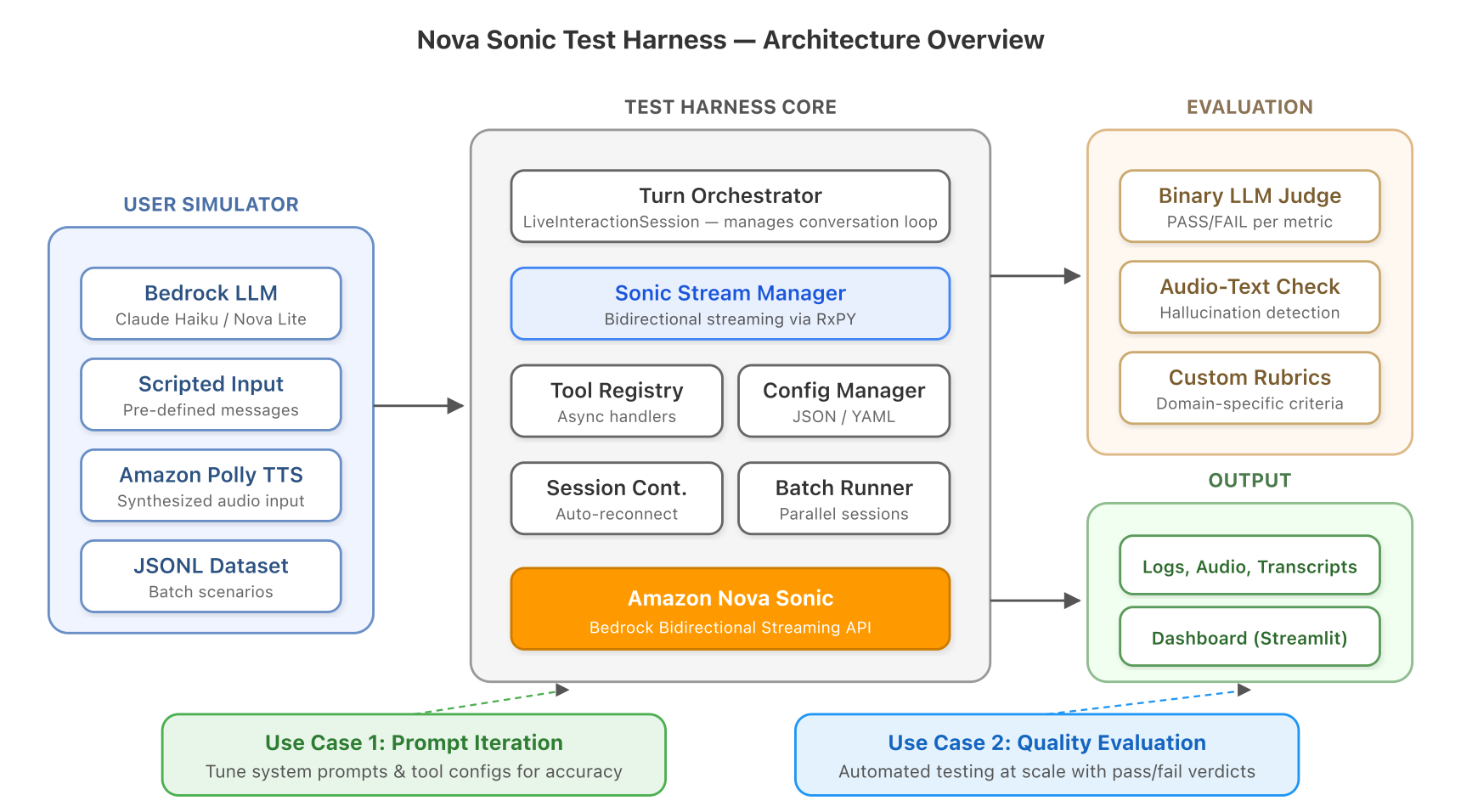

Voice agents are transforming customer interactions, but testing them poses challenges. Nova Sonic Test Harness offers a solution for rapid iteration and comprehensive evaluation of voice agent quality, without the need for manual testing. It addresses issues like bidirectional streaming, non-deterministic responses, and multi-turn context that make speech-to-speech testing fundamentally differ...

NVIDIA introduces RTX Spark, a superchip for Windows PCs that offers an enhanced gaming experience with AI and ray tracing technologies. A collaboration with top game developers in Korea, including KRAFTON and NC, will bring popular titles to RTX Spark-powered systems, igniting excitement in the gaming community.