US judge rules Anthropic's use of books to train AI was fair use, but storing pirated books in a library infringed copyright. Anthropic trained its Claude LLM on authors' works without permission.

Meta's CTO, Andrew Bosworth, joins a US Army corps alongside executives from Palantir and OpenAI, fusing tech and military expertise. Bosworth, known as "Boz," says he is honored to be part of Detachment 201, which integrates top tech figures into military innovation.

MIT Sea Grant's LOBSTgER project uses AI and underwater photography to document and share vulnerable ocean life in the Gulf of Maine. The initiative merges art, science, and technology to deepen public connection to the natural world through innovative visual storytelling.

University graduates in the UK are encountering the toughest job market since 2018, with roles for recent graduates at a seven-year low, down 33% from last year. Employers are using AI to reduce costs, impacting job opportunities for graduates.

Former chief of staff to Rishi Sunak, Liam Booth-Smith, lands role at Anthropic, a company collaborating with the government on AI. Booth-Smith encountered Anthropic while working at No 10 and now joins as "external affairs" chief.

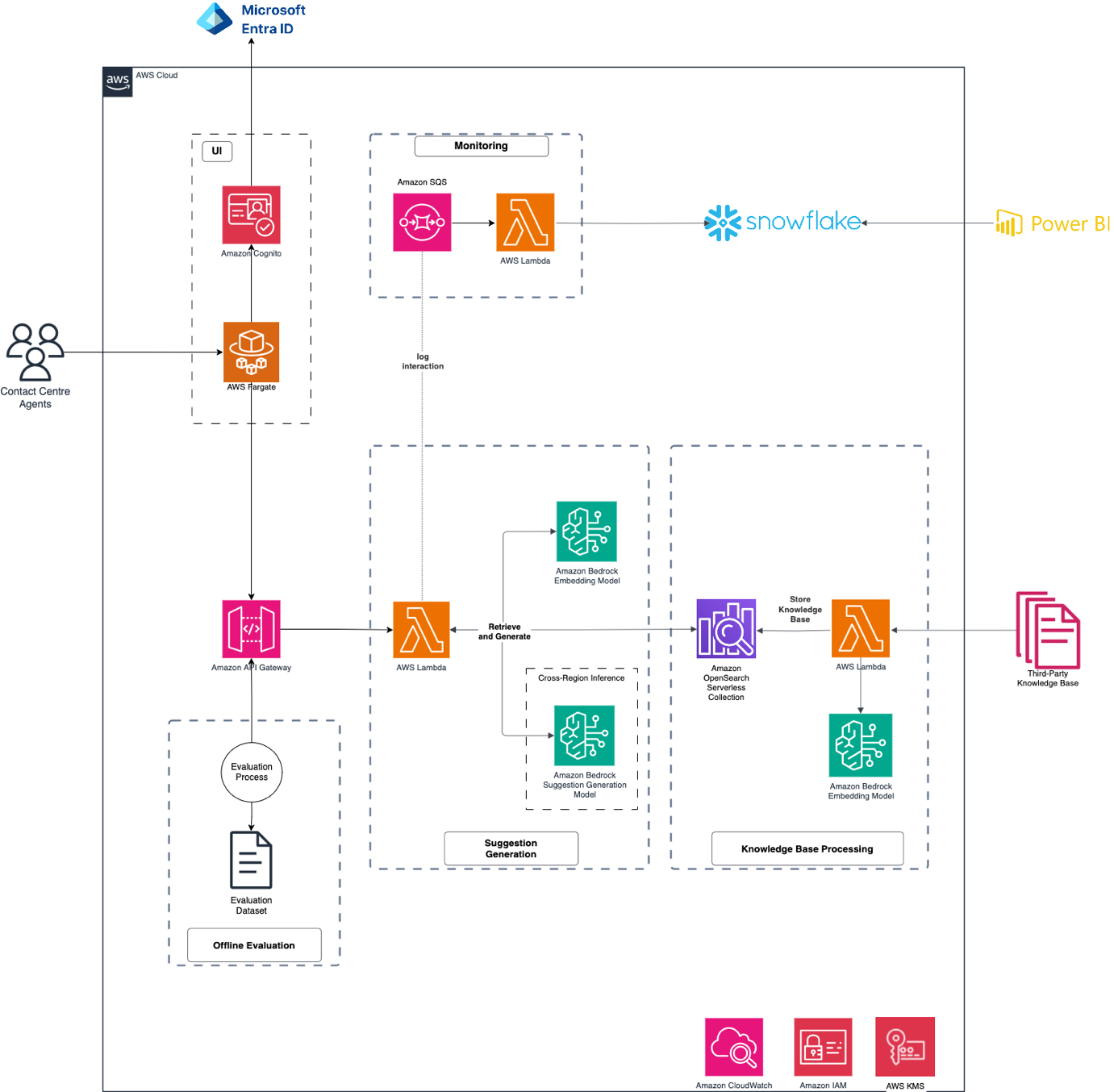

NewDay uses generative AI to enhance customer service, building the NewAssist chatbot for rapid call resolution. Despite challenges, NewDay's agile approach led to a successful implementation with 80% accuracy.

Matt Clifford, author of the UK government's AI action plan, to resign for personal reasons. Keir Starmer's AI tsar is stepping down after six months in the role.

MIT Generative AI Impact Consortium (MGAIC) receives 180 proposals, funds innovative AI projects across MIT. Presentation highlights include AI-driven tutors and real-time human-AI musical collaboration.

BBC threatens legal action against Perplexity AI for scraping content to train its AI model. Perplexity AI is accused of using BBC content without permission.

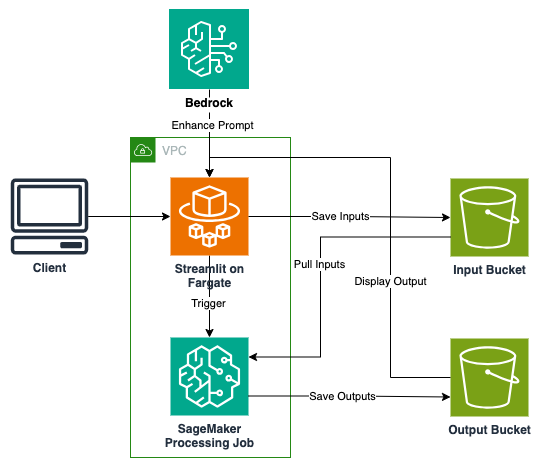

Advances in AI/ML bring video generation capabilities to digital content creation. An AWS-based solution using the CogVideoX model offers businesses scalable, secure video generation.

Journalists at Rupert Murdoch's Australian mastheads express concern over in-house AI tool NewsGPT. Tool allows writers to adopt different styles, enhancing workplace creativity.

Reinforcement learning from human feedback fine-tunes AI systems to combat hallucinations. McCaffrey warns of bias risks in human-in-the-loop systems.

The AWS DeepRacer Student Portal will transition to an AWS Solution by September 15, 2025, as AWS's AI & ML education offerings evolve. The enhanced AWS AI & ML Scholars program now focuses on generative AI, preparing students for AI careers.

Generative AI models require massive computational scale for pre-training. Amazon SageMaker HyperPod offers a resilient solution for large-scale ML training, automating instance repairs for minimal disruption.

Meta's AI assistant fails a user seeking a rail firm's helpline, providing a wrong number and leading to an awkward situation. Despite CEO Mark Zuckerberg's praise, the chatbot's mishap highlights the limits of its intelligence.