WeTransfer has revised its terms of service to clarify that user content will not be used to train AI, following public backlash over the potential use of uploaded files for machine learning models. The online file-transfer service is popular among creative professionals.

Meta has urged Australia not to restrict the use of personal data from Facebook and Instagram for AI training. The parent company of both platforms argues that Australia's privacy rules should align with global policy.

AI's growing influence on health policy is raising concerns: ChatGPT offers tailored advice, but it has drawn skepticism from parents. Edna Bonhomme explores the implications.

AI agents are transforming industries such as healthcare, finance, and agriculture. AWS says it is leading the way in building secure, reliable AI agent systems at scale, with new capabilities such as Amazon Bedrock AgentCore.

Meta CEO Mark Zuckerberg plans to invest billions of dollars in AI, aiming to build "super-intelligence" that can outthink humans. The parent company of Facebook, Instagram, and WhatsApp is striking high-profile deals and offering multimillion-dollar pay packages to AI researchers.

MIT researchers outline the challenges facing AI for software engineering, arguing that automation must go beyond code generation. Real-world tasks such as refactoring, code migrations, and testing, they argue, require more capable AI tools if human engineers are to be freed up for higher-level design.

Meta's open-source AI tool optimizes concrete mixes for strength, sustainability, and speed, reducing their environmental impact. Developed with Amrize and U of I, it accelerates the discovery of low-carbon concrete for construction, supporting the goal of reaching net-zero emissions by 2030.

OpenAI's self-serving policy raises concerns about the company's influence on the future agenda, recalling the satire of society's direction in the film Idiocracy. In the film, a wrestler is president, a sports-drink company dominates, and humanity faces collapse: a cautionary tale for an AI-powered future.

Accenture Spotlight offers a scalable video highlight solution using Amazon Nova and Amazon Bedrock Agents, automating the creation of personalized short clips and sports highlights. Real-world use cases include personalized video generation, sports editing, content matching, and real-time retail offer generation.

The AWS Generative AI Innovation Center, which helps customers such as Formula 1 and FOX drive AI innovation, has announced a $100 million investment for its next wave. The center delivers results through collaborative innovation, helping customers pioneer AI solutions in as little as 45 days.

Trump has angered climate groups by linking AI expansion to oil and gas at a summit in Pittsburgh. Experts warn that the mega-bill threatens AI growth by sidelining renewable energy.

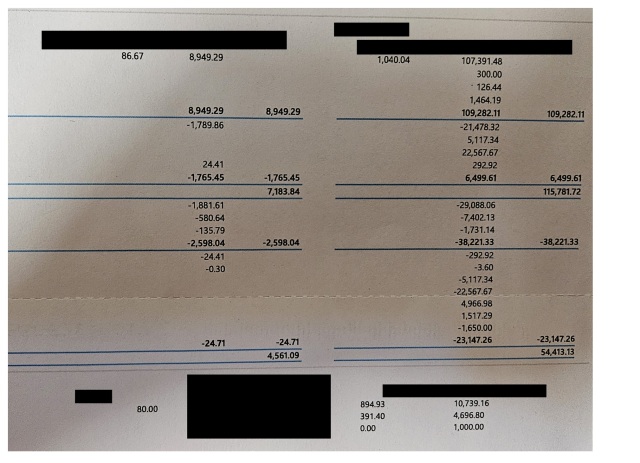

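Benford's Law predicts the distribution of leading digits in many real-world datasets, including financial data: a leading digit d appears with probability log10(1 + 1/d), so 1 leads about 30% of the time and 9 less than 5%. Real-life data often match the theory closely. The Ahlstrom Conjecture proposes spotting fraud in financial data by identifying repeated consecutive digits, an approach that shows promise for detecting fabricated data.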
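
The Benford expectation is straightforward to check in code. The sketch below computes the expected share of each leading digit, log10(1 + 1/d), and a Pearson chi-square statistic comparing a dataset against it. The function names are hypothetical, and the chi-square test is one common choice for a goodness-of-fit check; it is not the Ahlstrom Conjecture's repeated-digit method, which this sketch does not implement.

```python
import math
from collections import Counter
from typing import Iterable, Optional


def benford_expected() -> dict:
    """Expected share of each leading digit d = 1..9 under Benford's Law: log10(1 + 1/d)."""
    return {d: math.log10(1 + 1 / d) for d in range(1, 10)}


def leading_digit(x: float) -> Optional[int]:
    """First significant digit of |x|, read from scientific notation; None for zero."""
    if x == 0:
        return None
    return int(f"{abs(x):e}"[0])


def benford_chi_square(values: Iterable[float]) -> float:
    """Pearson chi-square of observed leading digits vs. Benford's Law (8 degrees of freedom)."""
    digits = [d for d in (leading_digit(v) for v in values) if d is not None]
    n = len(digits)
    if n == 0:
        raise ValueError("no non-zero values to test")
    counts = Counter(digits)  # missing digits count as 0
    return sum(
        (counts[d] - n * p) ** 2 / (n * p)
        for d, p in benford_expected().items()
    )


if __name__ == "__main__":
    powers_of_two = [2**k for k in range(1000)]  # a classic Benford-like sequence
    uniform_digits = list(range(1, 1000))         # every leading digit equally common
    print(f"powers of two: chi2 = {benford_chi_square(powers_of_two):.2f}")
    print(f"1..999:        chi2 = {benford_chi_square(uniform_digits):.2f}")
```

With 8 degrees of freedom, the 5% critical value is about 15.51, so a statistic well above that suggests the leading digits deviate from Benford's Law. Such a deviation is a reason to look more closely at the data, not proof of fraud.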

MIT researchers have developed a new framework for studying how combined treatments interact, enabling efficient estimation of their effects on cells. The method could lead to a better understanding of disease mechanisms and to new medicines for cancer and genetic disorders.

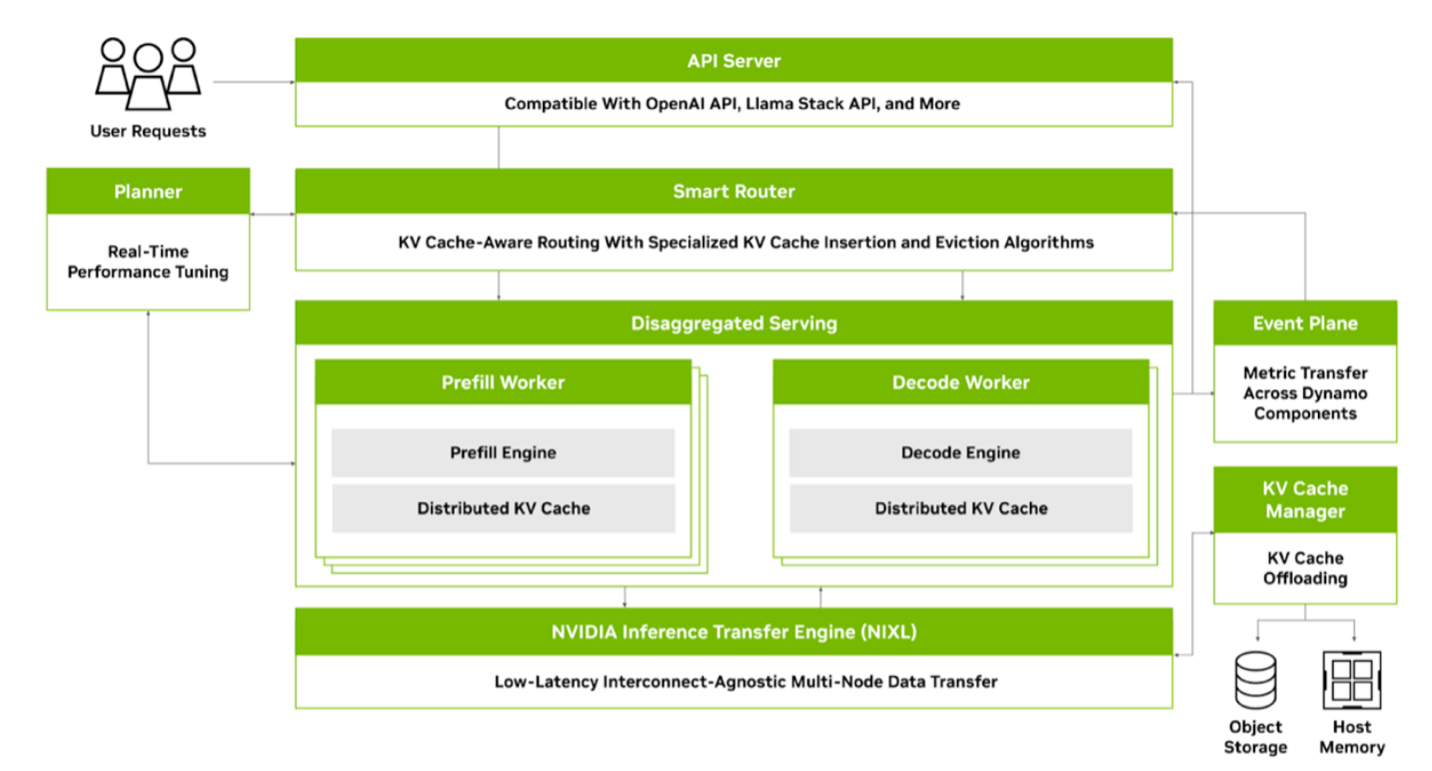

NVIDIA Dynamo is an open-source framework for efficient, scalable, low-latency inference, and it supports AWS services such as Amazon S3 and Amazon EKS. It improves LLM serving performance with features such as dynamic GPU resource scheduling and KV cache offloading, which raise overall system throughput.
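
KV cache offloading keeps recently used attention key/value blocks in fast GPU memory and moves older ones to a larger, slower tier (such as CPU memory) instead of discarding them, so a later request that reuses the same prefix can reload them rather than recompute them. The toy sketch below models that idea with two LRU tiers. `TieredKVCache` is a hypothetical class, the string values stand in for tensors, and nothing here reflects Dynamo's actual API or eviction policy.

```python
from collections import OrderedDict


class TieredKVCache:
    """Toy two-tier cache: a small 'GPU' tier backed by a larger 'host' tier, both LRU."""

    def __init__(self, gpu_capacity: int, host_capacity: int):
        self.gpu = OrderedDict()   # fast tier; most recently used entries at the end
        self.host = OrderedDict()  # slow offload tier; same ordering convention
        self.gpu_capacity = gpu_capacity
        self.host_capacity = host_capacity

    def _insert_gpu(self, key, value):
        self.gpu[key] = value
        self.gpu.move_to_end(key)
        if len(self.gpu) > self.gpu_capacity:
            # Offload the least recently used GPU entry instead of dropping it.
            old_key, old_value = self.gpu.popitem(last=False)
            self.host[old_key] = old_value
            self.host.move_to_end(old_key)
            if len(self.host) > self.host_capacity:
                # The host tier is full too: this entry is gone and must be recomputed later.
                self.host.popitem(last=False)

    def lookup(self, key, compute):
        """Return (value, source) where source is 'gpu', 'host', or 'recomputed'."""
        if key in self.gpu:
            self.gpu.move_to_end(key)
            return self.gpu[key], "gpu"
        if key in self.host:
            value = self.host.pop(key)  # reload from the slow tier into the fast tier
            self._insert_gpu(key, value)
            return value, "host"
        value = compute(key)  # cache miss: pay the full recomputation cost
        self._insert_gpu(key, value)
        return value, "recomputed"


if __name__ == "__main__":
    cache = TieredKVCache(gpu_capacity=2, host_capacity=2)
    for prefix in ["a", "b", "c", "a", "c"]:
        _, source = cache.lookup(prefix, lambda k: f"kv({k})")
        print(prefix, source)
```

LRU is used here only because it is the simplest policy to illustrate; a production serving stack would move tensor blocks between memory tiers asynchronously and may evict by other criteria.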

Organizations face growing security risks as their systems become more interconnected. Rapid7 uses machine learning to predict CVSS (Common Vulnerability Scoring System) vectors, supporting more effective vulnerability management.
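
A CVSS v3.1 vector string encodes one categorical label per base metric, so predicting a vector amounts to predicting several labels at once, from which the base score follows deterministically. The sketch below, which does not reflect how Rapid7's model works, parses a vector into those labels and computes the base score using the metric weights and formulas of the FIRST CVSS v3.1 specification. `parse_vector` and `base_score` are illustrative names.

```python
import math

# CVSS v3.1 base-metric weights, per the FIRST CVSS v3.1 specification.
WEIGHTS = {
    "AV": {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.20},
    "AC": {"L": 0.77, "H": 0.44},
    "UI": {"N": 0.85, "R": 0.62},
    "C": {"H": 0.56, "L": 0.22, "N": 0.0},
    "I": {"H": 0.56, "L": 0.22, "N": 0.0},
    "A": {"H": 0.56, "L": 0.22, "N": 0.0},
}
# Privileges Required weights depend on Scope (U = unchanged, C = changed).
PR_WEIGHTS = {"U": {"N": 0.85, "L": 0.62, "H": 0.27}, "C": {"N": 0.85, "L": 0.68, "H": 0.50}}
REQUIRED = ("AV", "AC", "PR", "UI", "S", "C", "I", "A")


def parse_vector(vector: str) -> dict:
    """Split 'CVSS:3.1/AV:N/...' into {'AV': 'N', ...}: the labels a model would predict."""
    prefix, *parts = vector.split("/")
    if prefix != "CVSS:3.1":
        raise ValueError(f"unsupported prefix: {prefix}")
    metrics = dict(part.split(":", 1) for part in parts)
    missing = [m for m in REQUIRED if m not in metrics]
    if missing:
        raise ValueError(f"missing base metrics: {missing}")
    return metrics


def roundup(x: float) -> float:
    """CVSS v3.1 Roundup: smallest one-decimal value >= x, guarding against float error."""
    int_input = int(round(x * 100_000))
    if int_input % 10_000 == 0:
        return int_input / 100_000
    return (math.floor(int_input / 10_000) + 1) / 10


def base_score(m: dict) -> float:
    scope = m["S"]
    iss = 1 - ((1 - WEIGHTS["C"][m["C"]]) * (1 - WEIGHTS["I"][m["I"]]) * (1 - WEIGHTS["A"][m["A"]]))
    if scope == "U":
        impact = 6.42 * iss
    else:
        impact = 7.52 * (iss - 0.029) - 3.25 * (iss - 0.02) ** 15
    exploitability = (8.22 * WEIGHTS["AV"][m["AV"]] * WEIGHTS["AC"][m["AC"]]
                      * PR_WEIGHTS[scope][m["PR"]] * WEIGHTS["UI"][m["UI"]])
    if impact <= 0:
        return 0.0
    if scope == "U":
        return roundup(min(impact + exploitability, 10))
    return roundup(min(1.08 * (impact + exploitability), 10))


if __name__ == "__main__":
    vec = "CVSS:3.1/AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H"
    print(parse_vector(vec), base_score(parse_vector(vec)))
```

Treating the vector rather than the score as the prediction target keeps each output interpretable: an analyst can see, for example, whether a predicted high score comes from network exploitability or from high confidentiality impact.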