DeepSeek's R1 LLM outperforms competitors like OpenAI's o1 at a fraction of the cost. Model distillation is key to R1's success and may signal a shift towards LLM commoditization.

Researchers have developed ProtGPS, a model that predicts protein localization to specific compartments in cells. This AI tool can also design novel proteins and help understand disease mechanisms.

Amazon Bedrock offers a serverless experience for using language embeddings in applications, such as an RSS aggregator. The solution uses Amazon services like API Gateway, Bedrock, and CloudFront for zero-shot classification and semantic search features.

Data engineering is crucial for businesses, with a focus on building a Data Engineering Center of Excellence. The evolving role of Data Engineers ensures an accurate, high-quality data flow for data-driven decisions.

US vice-president criticizes European regulation at AI Action Summit in Paris, warning against cooperation with China. Emmanuel Macron acknowledges AI's disruptive potential with deepfake montage, highlighting global tensions.

AI chatbots like ChatGPT excel in verbose dream analysis, offering a captivating and potentially insightful exploration of the subconscious. Despite initial apprehension, the promise of safely decoding dreams with a preternaturally intelligent assistant proves enticing.

Elon Musk says he will withdraw his $97.4bn offer for OpenAI if it halts its conversion to a for-profit and preserves the charity's mission. Musk's lawyers demand that the assets stay with the non-profit or that the charity be compensated at market value.

Eric Schmidt warns AI could be used by North Korea, Iran, Russia for weapons. Concerns raised about potential biological attacks.

Scarlett Johansson cautions about AI dangers due to deepfake video featuring her and other Jewish celebrities opposing Kanye West's remarks. Video includes AI-generated versions of prominent figures like David Schwimmer, Jerry Seinfeld, Drake, and Mila Kunis.

Large Language Models (LLMs) predict words in sequences, performing tasks like text summarization and code generation. Hallucinations in LLM outputs can be reduced using Retrieval-Augmented Generation (RAG) methods, but assessing the trustworthiness of outputs remains crucial.

Statistical inference helps predict call center needs by modeling call counts with a Poisson distribution with mean value λ = 5. The Poisson model simplifies estimation because it has only one parameter to estimate.
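
As a rough illustration of the one-parameter idea, here is a minimal sketch in Python. It assumes λ is measured in calls per time interval (the summary does not state the unit), and the function names and sample counts are hypothetical. For a Poisson model, the maximum-likelihood estimate of λ is the sample mean of the observed counts.

```python
import math


def poisson_pmf(k, lam):
    """P(X = k) for a Poisson distribution with mean lam."""
    return math.exp(-lam) * lam**k / math.factorial(k)


def poisson_cdf(k, lam):
    """P(X <= k), summed directly from the pmf."""
    return sum(poisson_pmf(i, lam) for i in range(k + 1))


def estimate_rate(counts):
    """Maximum-likelihood estimate of lam: the sample mean of the counts."""
    return sum(counts) / len(counts)


def min_capacity(lam, coverage=0.95):
    """Smallest k such that P(X <= k) >= coverage.

    The 95% coverage target is an assumption made for illustration.
    """
    k = 0
    while poisson_cdf(k, lam) < coverage:
        k += 1
    return k


# Hypothetical call counts per interval; not data from the article.
observed_counts = [4, 6, 5, 3, 7, 5]
lam_hat = estimate_rate(observed_counts)
```

With these made-up counts, `lam_hat` equals 5.0, matching the λ in the summary, and `min_capacity(lam_hat)` gives the number of calls per interval that staffing would need to handle to cover about 95% of intervals under this model.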

Virtualization enables running multiple VMs on one physical machine, crucial for cloud services. From mainframes to serverless, cloud computing has evolved significantly, impacting our daily digital interactions.

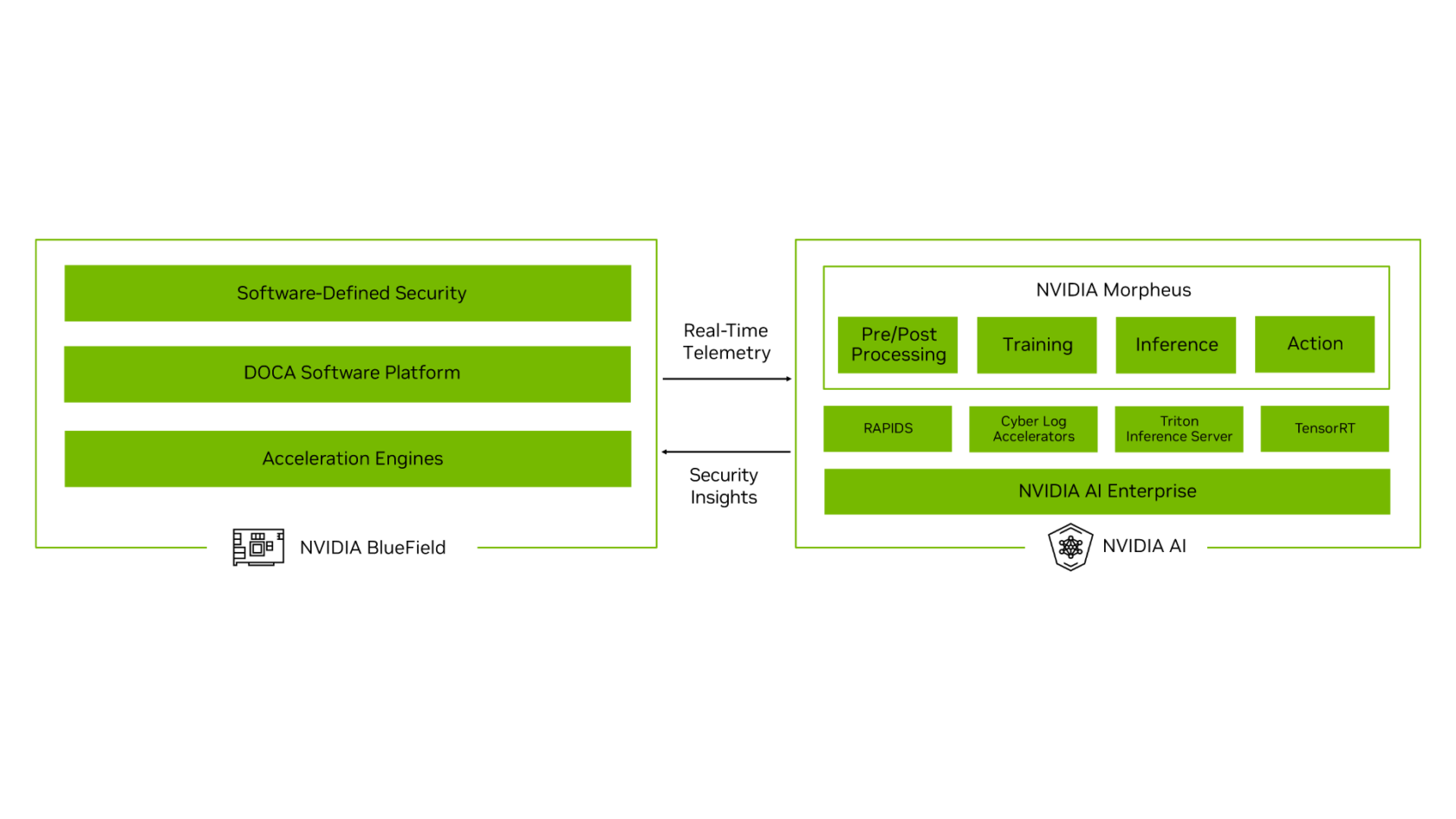

Generative AI advances lead to new cybersecurity threats. Armis, Check Point, CrowdStrike, Deloitte, and WWT integrate NVIDIA AI for critical infrastructure protection at S4 conference.

Developers use Pydantic to securely handle environment variables, storing them in a .env file and loading them with python-dotenv. This method ensures sensitive data remains private and simplifies project setup for other developers.

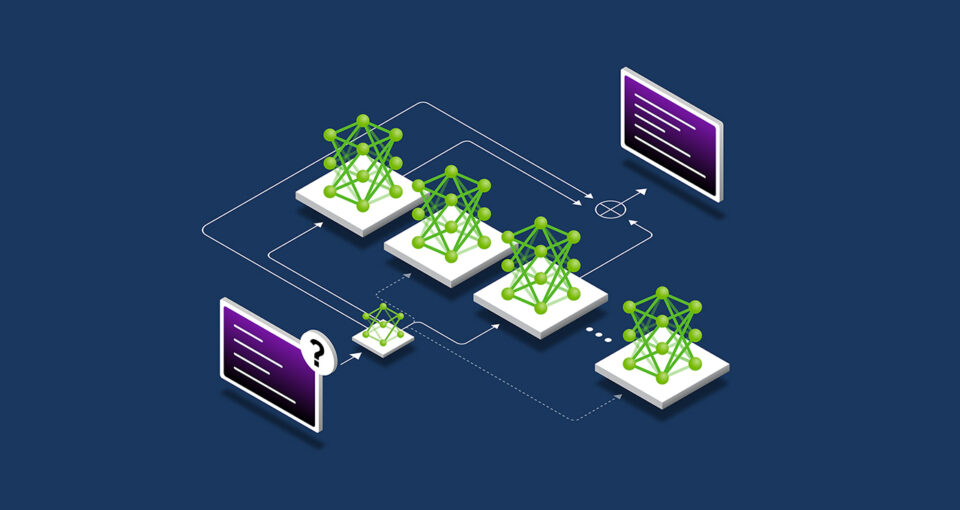

AI scaling laws describe how different ways of applying compute impact model performance, leading to advancements in AI reasoning models and accelerated computing demand. Pretraining scaling shows that increasing data, model size, and compute improves model performance, spurring innovations in model architecture and the training of powerful future AI models.