Marks & Spencer is using AI to personalize online shopping, advising customers on outfit choices based on their body shape and style preferences, with the aim of boosting online sales. The 140-year-old retailer is using the technology to improve the shopping experience and recommend items to customers.

Analyzing performance patterns in the heptathlon and decathlon yields insights into which events matter most and how the scoring systems work. The data shows significant differences in the points athletes receive across events, shedding light on how performances in individual events affect results at elite level.

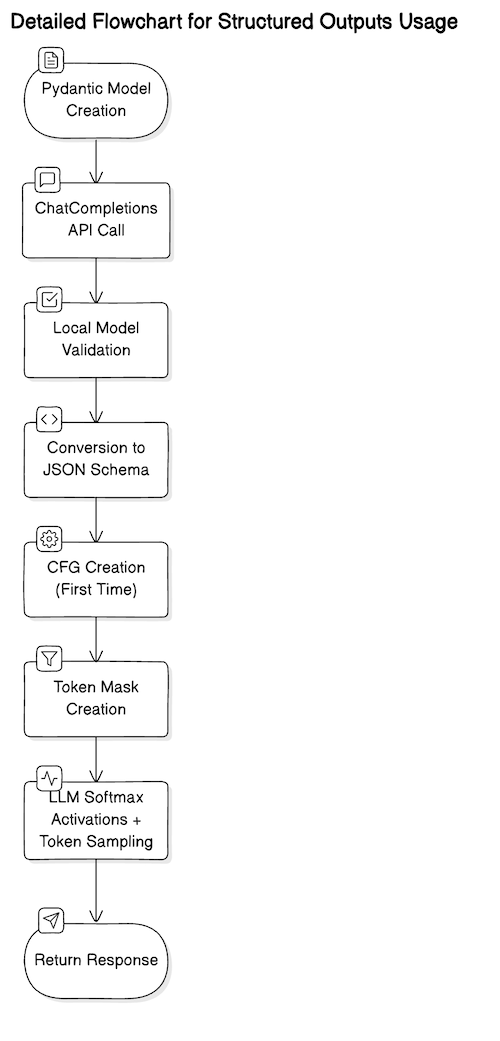
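
Combined-event scoring follows a published power-law formula, which helps explain why points vary so much between events: points = floor(A·(B − T)^C) for track events (time T in seconds) and floor(A·(M − B)^C) for field events (mark M). The sketch below assumes the men's decathlon coefficients from the World Athletics scoring tables, with jumps scored in centimetres and throws in metres; the heptathlon uses the same form with its own constants. The event keys and the `decathlon_points` helper are illustrative names, not taken from the analysis.

```python
import math

# Assumed World Athletics decathlon coefficients: (A, B, C, is_track_event).
# Track performances are times in seconds; field performances are passed in metres.
DECATHLON = {
    "100m":         (25.4347, 18.0, 1.81, True),
    "long_jump":    (0.14354, 220.0, 1.40, False),
    "shot_put":     (51.39, 1.5, 1.05, False),
    "high_jump":    (0.8465, 75.0, 1.42, False),
    "400m":         (1.53775, 82.0, 1.81, True),
    "110m_hurdles": (5.74352, 28.5, 1.92, True),
    "discus":       (12.91, 4.0, 1.10, False),
    "pole_vault":   (0.2797, 100.0, 1.35, False),
    "javelin":      (10.14, 7.0, 1.08, False),
    "1500m":        (0.03768, 480.0, 1.85, True),
}
# The jump formulas are defined in centimetres.
JUMPS_IN_CM = {"long_jump", "high_jump", "pole_vault"}


def decathlon_points(event, performance):
    """Points for one event; performance in seconds (track) or metres (field)."""
    a, b, c, is_track = DECATHLON[event]
    if event in JUMPS_IN_CM:
        performance *= 100  # convert metres to centimetres
    # Track: faster than baseline B scores; field: farther/higher than B scores.
    margin = (b - performance) if is_track else (performance - b)
    if margin <= 0:
        return 0  # at or beyond the baseline, the event scores nothing
    return math.floor(a * margin ** c)


if __name__ == "__main__":
    for event, mark in {"100m": 10.40, "long_jump": 7.76, "shot_put": 18.40}.items():
        print(event, mark, decathlon_points(event, mark))
```

Because C > 1 in every event, each extra centimetre or hundredth of a second is worth slightly more points the closer a performance gets to elite marks.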

Since OpenAI released its Chat Completions API, LLMs have been used to produce structured outputs in a wide range of scenarios. OpenAI recommends Pydantic for defining the JSON schema, which makes code more readable and maintainable.

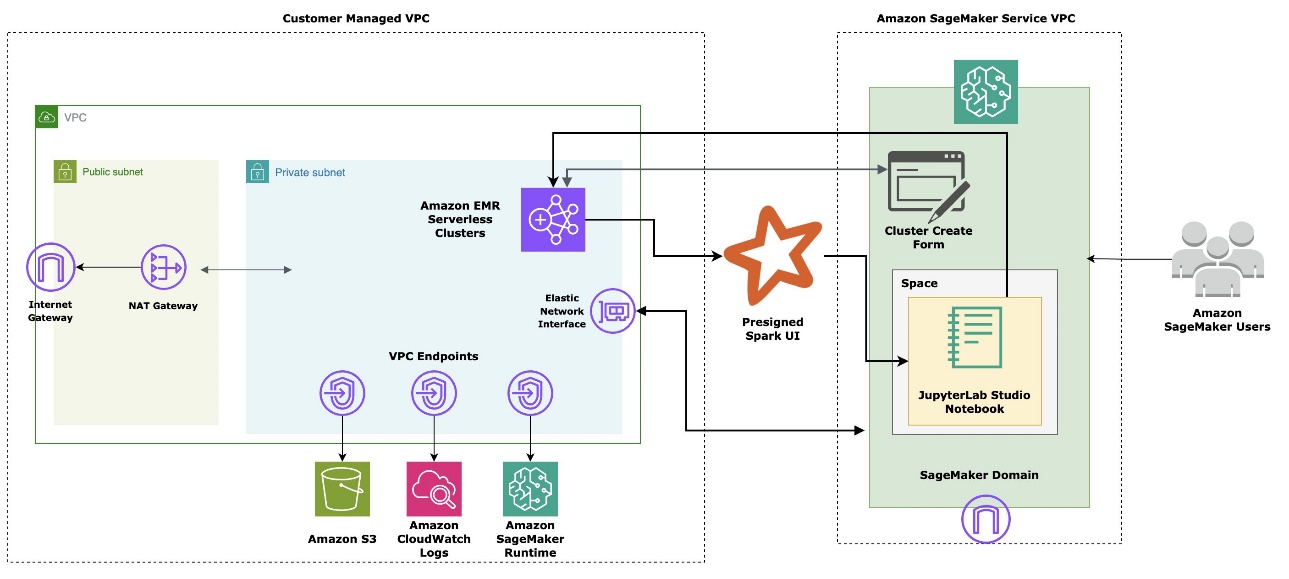
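
As an illustration of the Pydantic recommendation, here is a minimal sketch assuming Pydantic v2 is installed. The `Recipe` model, its fields, and `parse_reply` are hypothetical; the class serves both as the source of the JSON schema you would supply for structured output and as the validator for the model's reply. The sketch does not call OpenAI's API itself.

```python
from pydantic import BaseModel, Field, ValidationError


class Recipe(BaseModel):
    """Hypothetical output schema for an LLM that extracts recipes from text."""
    title: str
    servings: int = Field(gt=0)  # must be a positive integer
    ingredients: list[str]


# JSON schema derived from the class: this is what a structured-output request would carry.
RECIPE_SCHEMA = Recipe.model_json_schema()


def parse_reply(raw_json: str) -> Recipe:
    """Validate a model's JSON reply; raises ValidationError if it breaks the schema."""
    return Recipe.model_validate_json(raw_json)


if __name__ == "__main__":
    recipe = parse_reply('{"title": "Pancakes", "servings": 4, "ingredients": ["flour", "milk"]}')
    print(recipe.title, recipe.servings)  # Pancakes 4
    try:
        parse_reply('{"title": "Soup", "servings": 0, "ingredients": []}')
    except ValidationError as err:
        print("rejected with", err.error_count(), "error(s)")
```

Keeping the schema and the validator in one class is the readability gain: a change to a field updates both the request and the checking of the response.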

Amazon introduces EMR Serverless integration in SageMaker Studio, simplifying big data processing and ML workflows. Benefits include simplified infrastructure management, seamless integration, cost optimization, scalability, and performance improvements.

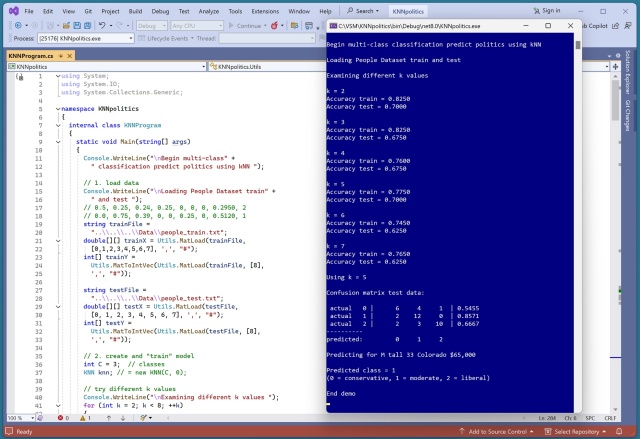

This walkthrough implements multi-class k-nearest neighbors classification from scratch on a synthetic dataset. The raw data is encoded and normalized so that predictions are accurate, and k=5 yields the best results.
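
The steps in that walkthrough can be sketched as follows. This is a minimal illustration, not the article's code: the features, colour values, labels, and helper names are hypothetical, and one-hot encoding plus min-max normalization are assumed as the encoding and normalization choices.

```python
import math
from collections import Counter

COLOURS = ["red", "green", "blue"]  # hypothetical categorical predictor values


def encode(row):
    """(x1, x2, colour) -> numeric vector: numbers kept, colour one-hot encoded."""
    x1, x2, colour = row
    return [float(x1), float(x2)] + [1.0 if colour == c else 0.0 for c in COLOURS]


def fit_min_max(vectors):
    """Per-column minimum and maximum, computed from the training data only."""
    columns = list(zip(*vectors))
    return [min(c) for c in columns], [max(c) for c in columns]


def normalize(vec, mins, maxs):
    """Min-max scale each column to [0, 1]; constant columns map to 0."""
    return [(v - lo) / (hi - lo) if hi > lo else 0.0
            for v, lo, hi in zip(vec, mins, maxs)]


def knn_predict(train_x, train_y, query, k=5):
    """Majority vote among the k training points nearest to query (Euclidean)."""
    nearest = sorted(zip(train_x, train_y),
                     key=lambda pair: math.dist(pair[0], query))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]  # ties go to the label seen first (the nearer one)


# Hypothetical synthetic data: three classes; x2 is on a much larger scale than x1.
raw_train = [
    (0.10, 120, "red"), (0.12, 90, "red"), (0.08, 110, "red"), (0.15, 100, "red"), (0.11, 95, "red"),
    (0.50, 520, "green"), (0.48, 480, "green"), (0.55, 500, "green"), (0.52, 510, "green"), (0.47, 490, "green"),
    (0.90, 880, "blue"), (0.88, 910, "blue"), (0.92, 900, "blue"), (0.85, 890, "blue"), (0.95, 920, "blue"),
]
labels = ["A"] * 5 + ["B"] * 5 + ["C"] * 5

encoded = [encode(r) for r in raw_train]
mins, maxs = fit_min_max(encoded)
train_x = [normalize(v, mins, maxs) for v in encoded]


def classify(row, k=5):
    """Encode and normalize a raw row with the training statistics, then run k-NN."""
    return knn_predict(train_x, labels, normalize(encode(row), mins, maxs), k)


print(classify((0.50, 505, "green")))  # B
```

Without normalization, x2's much larger range would dominate the Euclidean distance, which is why the scaling statistics are fitted once on the training rows and reused for every query.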

ABC has announced "AI and the Future of Us: An Oprah Winfrey Special", featuring tech industry figures such as OpenAI CEO Sam Altman. Critics have questioned the guest list and the show's framing, calling it an extended sales pitch for the generative AI industry.

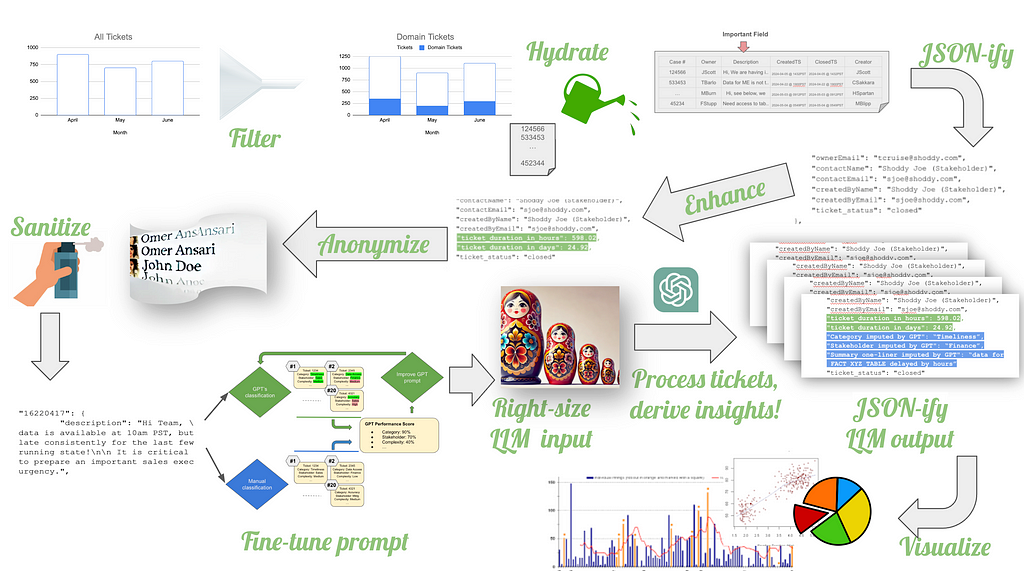

Large language models can help make sense of messy data without cleaning it at the source. This piece covers best practices for using generative AI such as GPT to streamline data analysis and visualization, even when the metadata is unreliable.

Six encoding methods for categorical data in machine learning bridge the gap between descriptive labels and the numerical inputs that algorithms require. Proper encoding is crucial for preserving data integrity and optimizing model performance.
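
The summary does not name the six methods, so the sketch below shows four widely used categorical encodings in plain Python purely as an illustration, not as the article's selection. Function names and sample data are hypothetical.

```python
from collections import Counter, defaultdict


def one_hot_encode(values):
    """One binary column per distinct category (columns in sorted order)."""
    categories = sorted(set(values))
    return categories, [[1 if v == c else 0 for c in categories] for v in values]


def ordinal_encode(values, order):
    """Map categories that have a natural order to integer ranks 0, 1, 2, ..."""
    rank = {category: i for i, category in enumerate(order)}
    return [rank[v] for v in values]


def frequency_encode(values):
    """Replace each category by the fraction of rows in which it appears."""
    counts = Counter(values)
    return [counts[v] / len(values) for v in values]


def target_mean_encode(values, targets):
    """Replace each category by the mean target observed for it.

    In practice, fit this on training data only (ideally out-of-fold)
    so the target does not leak into the features.
    """
    sums, counts = defaultdict(float), Counter(values)
    for v, t in zip(values, targets):
        sums[v] += t
    return [sums[v] / counts[v] for v in values]


colours = ["red", "blue", "red", "green"]
print(one_hot_encode(colours))  # (['blue', 'green', 'red'], [[0, 0, 1], [1, 0, 0], [0, 0, 1], [0, 1, 0]])
print(ordinal_encode(["small", "large", "medium"], ["small", "medium", "large"]))  # [0, 2, 1]
print(frequency_encode(colours))  # [0.5, 0.25, 0.5, 0.25]
print(target_mean_encode(colours, [1, 0, 0, 1]))  # [0.5, 0.0, 0.5, 1.0]
```

One-hot encoding avoids implying an order, ordinal encoding preserves a real one, and frequency or target encoding keep a single column even when there are many categories.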

OpenAI's ChatGPT now has 200M weekly users, double the figure from November 2023, and 92% of Fortune 500 companies use OpenAI's generative AI tools, despite skepticism.

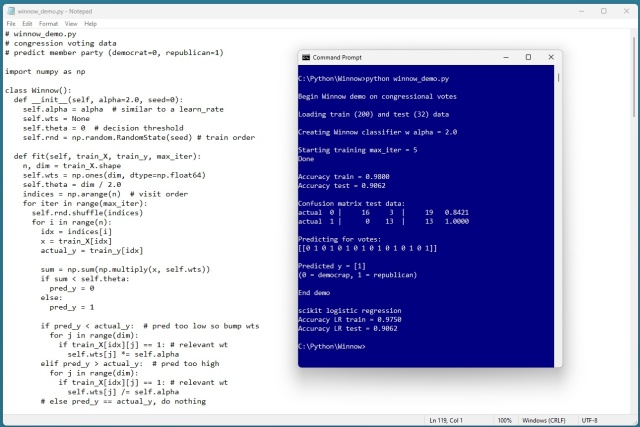

Winnow classification is a binary classification technique designed for data in which every predictor variable is binary. A demo on the Congressional Voting Records dataset shows that its simple approach can be effective.
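
To show how Winnow works, here is a minimal sketch of Littlestone's Winnow2 variant. The demo's exact settings are not given, so the promotion factor alpha = 2 and the threshold equal to the number of features are assumptions, as are the helper names. A toy dataset stands in for the Congressional Voting Records data (where yes/no votes would be encoded as 1/0).

```python
from itertools import product


def winnow_predict(w, theta, x):
    """Predict 1 when the summed weights of active (x_i = 1) features reach theta."""
    return 1 if sum(wi for wi, xi in zip(w, x) if xi == 1) >= theta else 0


def winnow_train(xs, ys, alpha=2.0, max_epochs=50):
    """Winnow2: multiplicative updates applied only to active features' weights.

    Weights start at 1 and the threshold is the number of features n.
    False negative -> multiply active weights by alpha (promotion);
    false positive -> divide active weights by alpha (demotion).
    """
    n = len(xs[0])
    theta = float(n)
    w = [1.0] * n
    for _ in range(max_epochs):
        mistakes = 0
        for x, y in zip(xs, ys):
            if winnow_predict(w, theta, x) == y:
                continue
            mistakes += 1
            factor = alpha if y == 1 else 1.0 / alpha
            w = [wi * factor if xi == 1 else wi for wi, xi in zip(w, x)]
        if mistakes == 0:  # a full pass with no mistakes: stop early
            break
    return w, theta


# Toy data: all 16 binary vectors of length 4; the target is x0 OR x2.
xs = [list(bits) for bits in product([0, 1], repeat=4)]
ys = [1 if x[0] or x[2] else 0 for x in xs]
w, theta = winnow_train(xs, ys)
print(w)  # [4.0, 1.0, 4.0, 1.0]
print(all(winnow_predict(w, theta, x) == y for x, y in zip(xs, ys)))  # True
```

The relevant features end up with weights at the threshold while the irrelevant ones are demoted, which is why Winnow suits problems with many binary predictors of which only a few matter.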

A sinister Alexa upgrade terrorizes a family in AfrAId, a hokey AI horror film that amounts to a rushed jumble of half-ideas. Sony skipped press screenings for fear of a critical drubbing, and the film is tracking to make $5-7m over a lackluster Labor Day weekend.

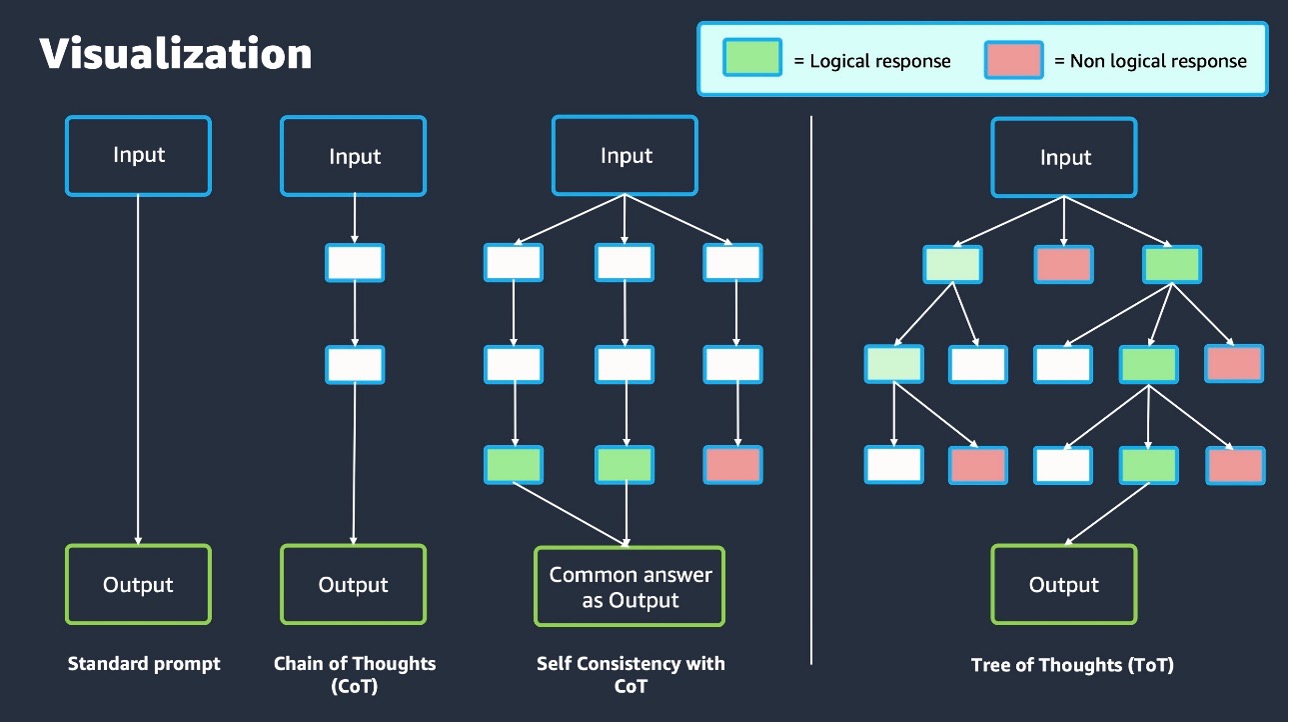

Crafting effective prompts is key to getting the most out of generative AI. Learn about advanced prompt engineering techniques using Amazon Bedrock.

Alexander Grothendieck's metaphysical theories continue to intrigue despite his reclusive nature. A surprising encounter in a French hamlet sparks curiosity and wonder.

Tony Blair discusses leadership, emphasizing the importance of experience and humility in governance. He warns against hubris and highlights the evolution of effective leadership over time.

Researchers from MIT and other institutions developed a tool called the Data Provenance Explorer to improve data transparency for AI models, addressing legal and ethical concerns. The tool helps practitioners select training datasets that fit their model's intended purpose, potentially enhancing AI accuracy in real-world applications.