At an event held by the MIT Abdul Latif Jameel Clinic for Machine Learning in Health, participants discussed whether the "black box" decision-making process of AI models should be fully explained for FDA approval. The event also highlighted the need for education, data availability, and collaboration between regulators and medical professionals in the regulation of AI in health.

The aviation industry has a fatality risk of 0.11, making it one of the safest modes of transportation. MIT scientists are looking to aviation as a model for regulating AI in healthcare to ensure marginalized patients are not harmed by biased AI models.

MIT neuroscientists have discovered that sentences with unusual grammar or unexpected meaning generate stronger responses in the brain's language processing centers, while straightforward sentences barely engage these regions. The researchers used an artificial language network to predict the brain's response to different sentences.

MIT scientists have developed two machine-learning models, the "PRISM" neural network and a logistic regression model, for early detection of pancreatic cancer. These models outperformed current methods, detecting 35% of cases compared to the standard 10% detection rate.

Atacama Biomaterials, a startup combining architecture, machine learning, and chemical engineering, develops eco-friendly materials with multiple applications. Their technology allows for the creation of data and material libraries using AI and ML, producing regionally sourced, compostable plastics and packaging.

Researchers at MIT and IBM have developed a new method called "physics-enhanced deep surrogate" (PEDS) that combines a low-fidelity physics simulator with a neural network generator to create data-driven surrogate models for complex physical systems. The PEDS method is affordable, efficient, and reduces the training data needed by at least a factor of 100 while achieving a target error of 5 per...

MIT PhD students are using game theory to improve the accuracy and dependability of natural language models, aiming to align the model's confidence with its accuracy. By recasting language generation as a two-player game, they have developed a system that encourages truthful and reliable answers while reducing hallucinations.

This article explores methods for creating fine-tuning datasets to generate Cypher queries from text, utilizing large language models (LLMs) and a predefined graph schema. The author also mentions an ongoing project that aims to develop a comprehensive fine-tuning dataset using a human-in-the-loop approach.
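To make the idea of a text-to-Cypher fine-tuning dataset concrete, here is a minimal sketch of what a single training record might look like. The chat-style `messages` layout, the `make_example` helper, and the toy movie schema are all assumptions chosen for illustration; the article's actual dataset format is not described in this summary.

```python
import json

def make_example(schema: str, question: str, cypher: str) -> str:
    """Serialize one text-to-Cypher training example as a single JSON line.

    The chat-style layout (system / user / assistant) is an assumed format,
    not necessarily the one used in the article.
    """
    record = {
        "messages": [
            {
                "role": "system",
                "content": "Translate the question into a Cypher query.\nSchema:\n" + schema,
            },
            {"role": "user", "content": question},
            {"role": "assistant", "content": cypher},
        ]
    }
    return json.dumps(record)

# Hypothetical graph schema and question/query pair.
SCHEMA = "(:Person {name})-[:ACTED_IN]->(:Movie {title})"
line = make_example(
    SCHEMA,
    "Which movies did Tom Hanks act in?",
    "MATCH (p:Person {name: 'Tom Hanks'})-[:ACTED_IN]->(m:Movie) RETURN m.title",
)
print(line)
```

In a human-in-the-loop setup, a reviewer would check or correct the `assistant` query before the record is appended to the dataset file.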

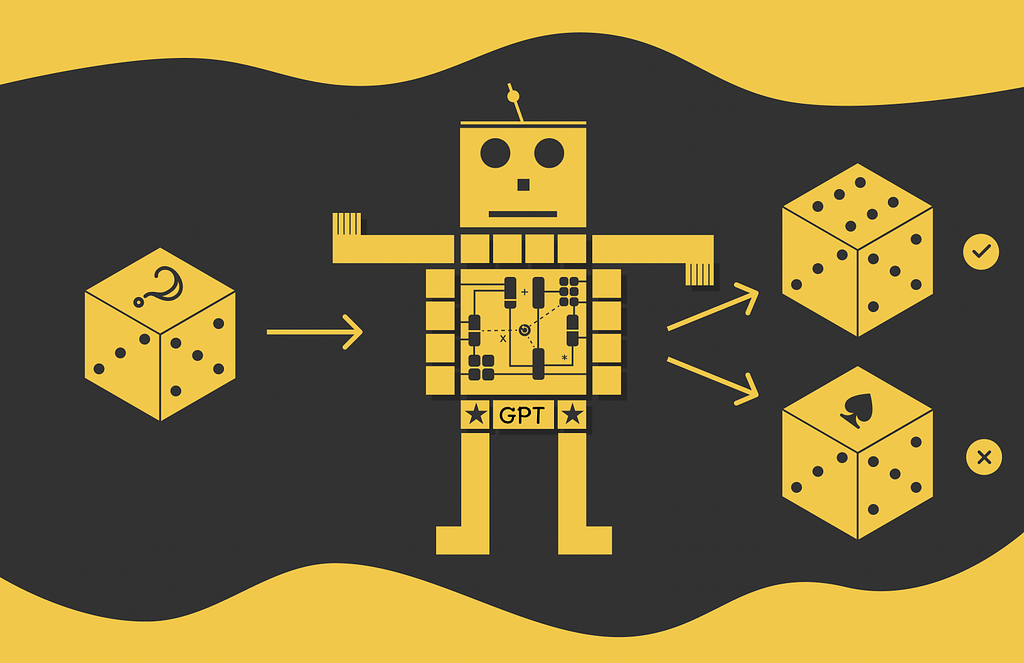

Google Brain introduced the Transformer in 2017, a flexible architecture that outperformed existing deep learning approaches and now underlies models like BERT and GPT. GPT, a decoder-only model, is trained on a language modeling task to generate new sequences and follows a two-stage framework of pre-training and fine-tuning.

The article discusses the importance of understanding context windows in Transformer training and usage, particularly with the rise of proprietary LLMs and techniques like RAG. It explores how different factors affect the maximum context length a transformer model can process and questions whether bigger is always better.

OpenAI has introduced updates to its models, addressing the "laziness" issue in GPT-4 Turbo and launching a new GPT-3.5 Turbo model with lower pricing. Users had reported a decline in task completion depth with GPT-4 in ChatGPT, prompting OpenAI's response.

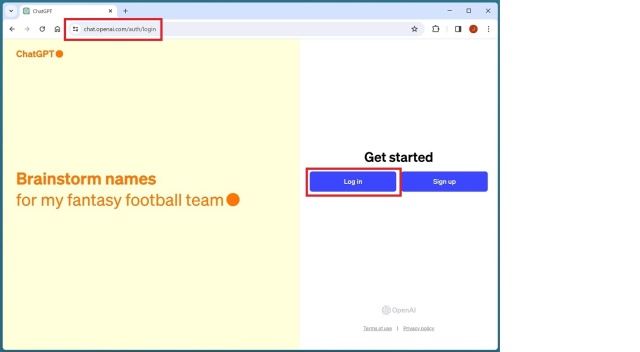

OpenAI has released an easy-to-use web tool to create custom AI assistants without coding, requiring only a Google or Microsoft account and a $20/month ChatGPT Plus subscription. Users can personalize their AI assistant's name, picture, tone, and interaction style, and enhance its knowledge by uploading specific documents.

Developing LLM applications can be both exciting and challenging, with the need to consider safety, performance, and cost. Starting with low-risk applications and adopting a "Cheap LLM First" policy can help mitigate risks and reduce the amount of work required for launch.
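One possible reading of a "Cheap LLM First" policy is a simple cascade: send each request to the least expensive model first and escalate only when the answer fails a quality check. The sketch below illustrates that reading. The `cheap_first` function, the `Model` type, and the `is_good_enough` check are hypothetical names introduced here, and the article itself may define the policy differently.

```python
from typing import Callable, Sequence, Tuple

# Hypothetical type: a "model" is any callable that maps a prompt to an answer.
Model = Callable[[str], str]

def cheap_first(
    prompt: str,
    models: Sequence[Model],
    is_good_enough: Callable[[str, str], bool],
) -> Tuple[str, int]:
    """Try models in order (cheapest first) and return (answer, index of model used)."""
    if not models:
        raise ValueError("at least one model is required")
    answer = ""
    for i, model in enumerate(models):
        answer = model(prompt)
        if is_good_enough(prompt, answer):
            return answer, i
    # No answer passed the check: fall back to the last model's output.
    return answer, len(models) - 1

# Stand-in models for illustration only; real ones would call an LLM API.
def cheap_model(prompt: str) -> str:
    return ""  # pretend the cheap model fails on this prompt

def strong_model(prompt: str) -> str:
    return "answer: " + prompt

def non_empty(prompt: str, answer: str) -> bool:
    return len(answer) > 0

result = cheap_first("summarize this", [cheap_model, strong_model], non_empty)
```

The quality check is the key design choice: it has to be cheaper than simply calling the larger model, otherwise the cascade saves nothing.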

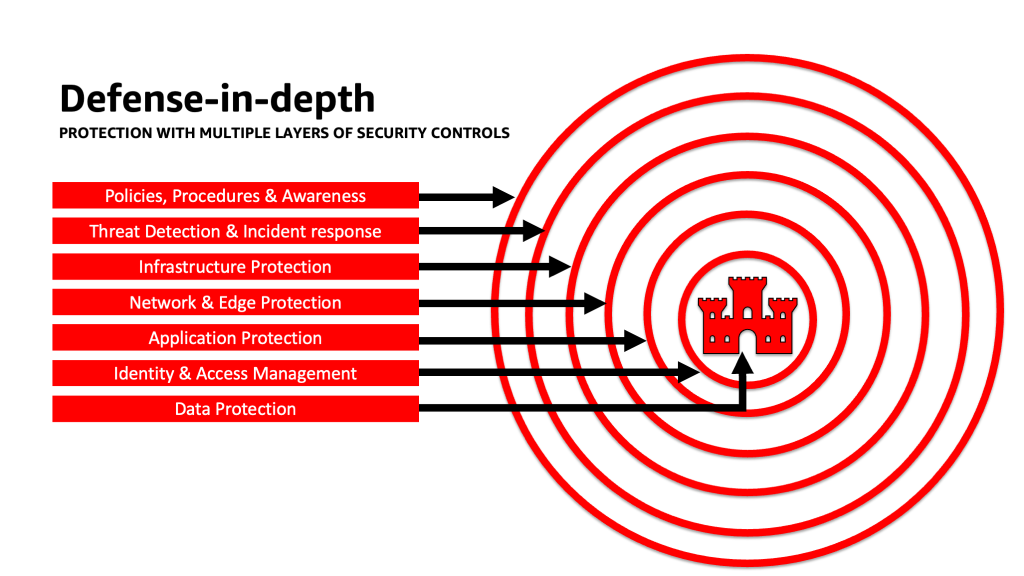

Generative AI applications using large language models (LLMs) offer economic value, but managing security, privacy, and compliance is crucial. This article provides guidance on addressing vulnerabilities, implementing security best practices, and architecting risk management strategies for generative AI applications.

This article explores the limitations of using Large Language Models (LLMs) for conversational data analysis and proposes a 'Data Recipes' methodology as an alternative. The methodology allows for the creation of a reusable Data Recipes Library, improving response times and enabling community contribution.