OpenAI secures $200m contract with US Department of Defense to apply AI to national security challenges. San Francisco startup to pioneer AI applications for military operations and government tasks.

Hexagon partners with NVIDIA to introduce AEON, a humanoid robot for industrial tasks like reality capture and manipulation. AEON utilizes NVIDIA's advanced AI systems for training and simulation, showcasing the future of robotics in various sectors.

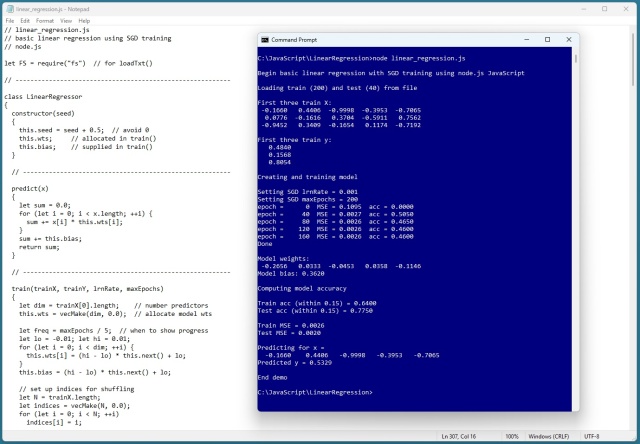

Linear regression demo in JavaScript uses stochastic gradient descent (SGD) for training. The model predicts income from age, height, and education with 64% accuracy.
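For readers unfamiliar with the technique, here is a minimal sketch of linear regression trained with SGD. It is written in Python rather than the demo's JavaScript and is not the article's code. The synthetic data, the function names (`make_data`, `train_sgd`, `accuracy_within`), the learning rate, and the "prediction within 10% of the target" definition of accuracy are all assumptions made for illustration.

```python
import random

def make_data(n=200, seed=1):
    """Hypothetical synthetic data: three features in [0, 1], nearly linear target."""
    rnd = random.Random(seed)
    true_w, true_b = [0.3, -0.1, 0.5], 0.2
    X, y = [], []
    for _ in range(n):
        x = [rnd.random() for _ in range(3)]
        target = true_b + sum(w * v for w, v in zip(true_w, x)) + rnd.gauss(0, 0.01)
        X.append(x)
        y.append(target)
    return X, y

def predict(weights, bias, x):
    return bias + sum(w * v for w, v in zip(weights, x))

def train_sgd(X, y, lr=0.05, epochs=200, seed=0):
    """Update weights one training item at a time, in shuffled order each epoch."""
    rnd = random.Random(seed)
    weights = [0.0] * len(X[0])
    bias = 0.0
    indices = list(range(len(X)))
    for _ in range(epochs):
        rnd.shuffle(indices)
        for i in indices:
            err = predict(weights, bias, X[i]) - y[i]  # gradient of 0.5 * err^2
            for j in range(len(weights)):
                weights[j] -= lr * err * X[i][j]
            bias -= lr * err
    return weights, bias

def accuracy_within(weights, bias, X, y, pct=0.10):
    """Assumed accuracy metric: share of predictions within pct of the true value."""
    hits = sum(
        1 for xi, yi in zip(X, y)
        if abs(predict(weights, bias, xi) - yi) <= pct * abs(yi)
    )
    return hits / len(X)

if __name__ == "__main__":
    X, y = make_data()
    w, b = train_sgd(X, y)
    print("weights:", w, "bias:", b)
    print("accuracy (within 10%):", accuracy_within(w, b, X, y))
```

A regression model has no built-in notion of accuracy, so any percentage figure depends on a closeness threshold like the one assumed here.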

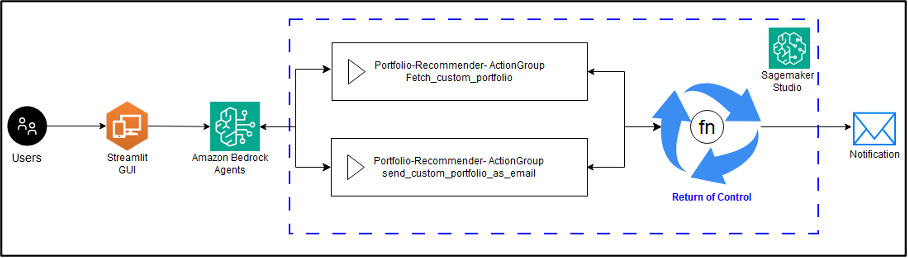

Amazon Bedrock Agents streamline agent creation and enhance return of control capabilities, simplifying complex interactions in distributed systems. By leveraging AWS Lambda and Step Functions, developers can focus on building scalable applications without infrastructure concerns, enabling personalized investment portfolio solutions and tailored recommendations aligned with user goals.

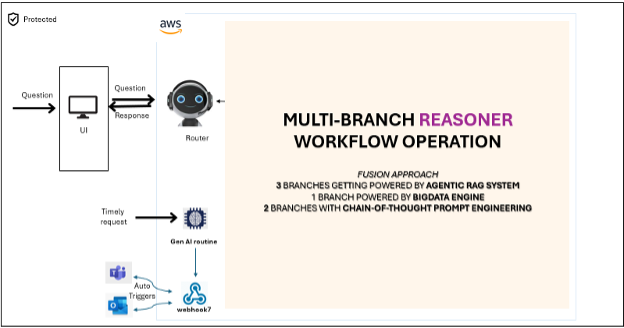

Apollo Tyres partners with Amazon Web Services for a digital transformation journey, using generative AI to optimize manufacturing processes. The solution, Manufacturing Reasoner, automates tasks, connects to IoT, and enables data-driven decision-making for operational efficiency.

MIT AgeLab's AVT Consortium marks a decade of industry collaboration, focusing on driver response to advanced vehicle technologies. Industry leaders call for a strategic, data-driven approach to automotive safety, challenging the industry to prioritize innovation and regulation alignment.

Guardian investigation uncovers 7,000 cases of AI cheating among UK university students, surpassing traditional plagiarism. Experts warn this is just the beginning, with numbers on the rise.

BT's CEO Allison Kirkby foresees further job cuts with the help of AI, aiming to streamline the company. BT plans to shed up to 55,000 workers to become a leaner business by the end of the decade.

The UK government's AI tool, Humphrey, uses models from OpenAI, Anthropic, and Google, sparking concerns over reliance on big tech in public sector reform. All officials in England and Wales are to be trained in the AI toolkit as part of a civil service efficiency push.

Generative artificial intelligence is being rapidly adopted across sectors from healthcare to education, but concerns persist over the tendency of large language models to generate inaccurate information. Critics question the true value of the technology for the UK economy given the persistent flaw of LLMs making things up.

UK workers urged by Technology Secretary Peter Kyle to embrace AI now or risk falling behind. Only 2.5 hours of training is needed to bridge the generational gap in tech usage.

Human experts test ChatGPT's personal finance advice. Can AI effectively manage our money?

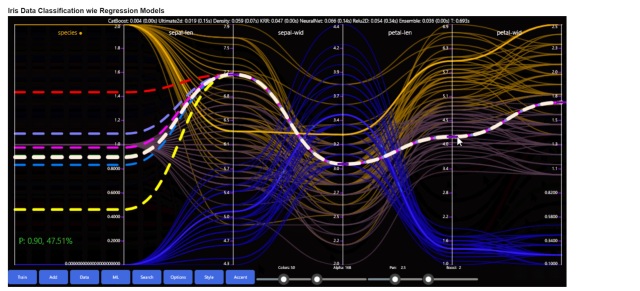

Thorsten Kleppe (https://github.com/grensen) creates stunning, interactive data visualizations that captivate viewers. His latest explorations showcase beauty and interactivity in machine learning visuals.

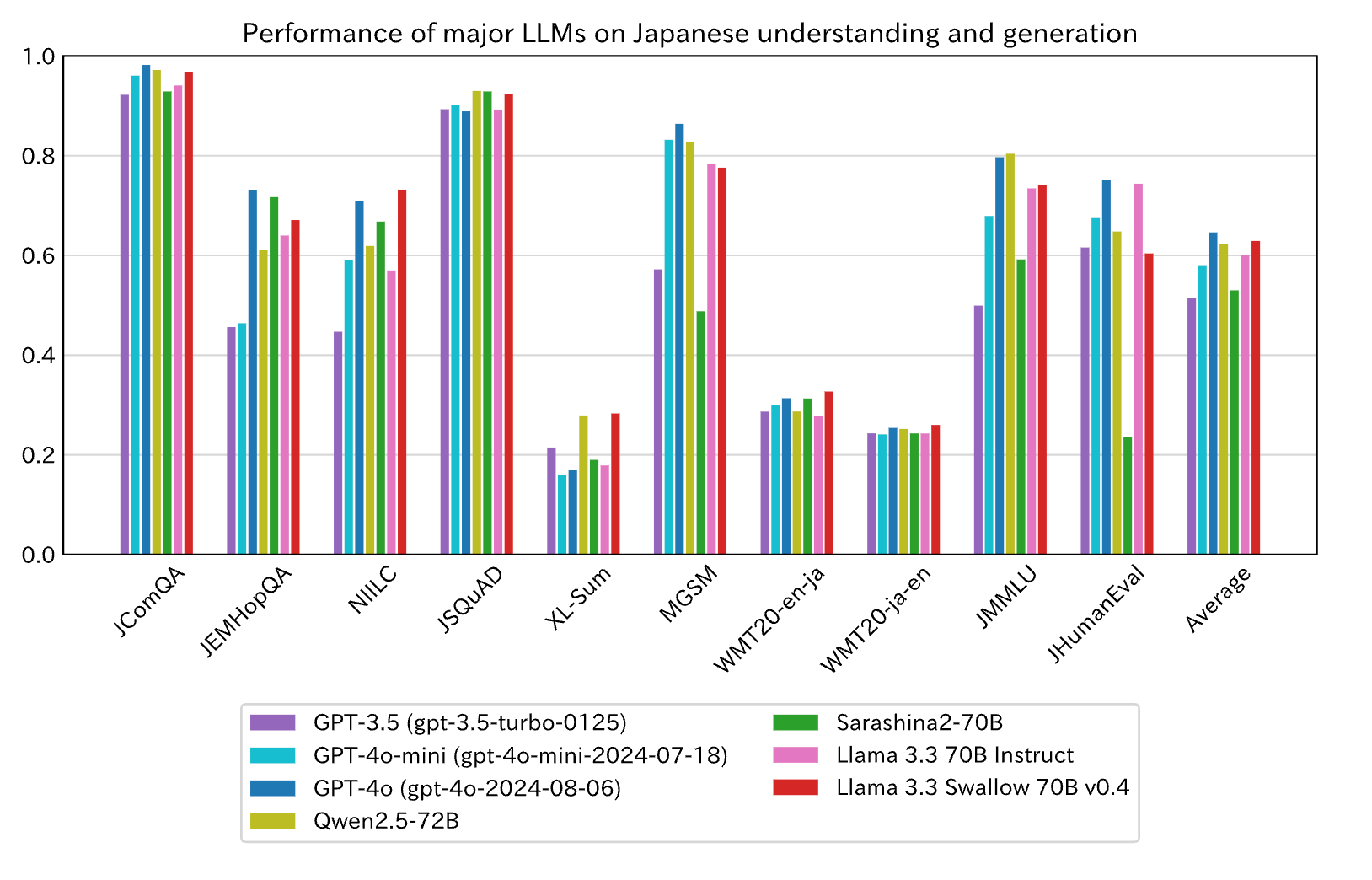

The Institute of Science Tokyo developed Llama 3.3 Swallow, a 70-billion-parameter LLM with superior Japanese language capabilities, outperforming GPT-4o-mini. The model, available on Hugging Face, was trained using Amazon SageMaker HyperPod and specialized enhancements for Japanese processing.

Article demonstrates linear support vector regression in C#, trained with particle swarm optimization, and assesses the model's prediction accuracy. The demo reveals the challenges of predicting non-linear data with a linear model and highlights the importance of specialized optimization algorithms like particle swarm.
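The idea can be sketched in a few lines. The example below is in Python rather than the article's C# and is not the article's implementation. It minimizes an epsilon-insensitive loss with an L2 penalty using a basic particle swarm. The function names, the synthetic data, the swarm constants (inertia 0.729, cognitive and social weights 1.49445), and the choice to average the loss over items are all assumptions. Particle swarm is a convenient fit here because the epsilon-insensitive loss has kinks, and the swarm needs no gradients.

```python
import random

def svr_loss(params, X, y, epsilon=0.1, C=100.0):
    """L2 penalty on the weights plus C times the mean epsilon-insensitive error."""
    *w, b = params
    reg = 0.5 * sum(wi * wi for wi in w)
    total = 0.0
    for xi, yi in zip(X, y):
        pred = b + sum(wi * v for wi, v in zip(w, xi))
        total += max(0.0, abs(pred - yi) - epsilon)  # errors inside the tube cost nothing
    return reg + C * total / len(X)

def pso_minimize(loss_fn, dim, n_particles=30, iters=200, seed=0, lo=-5.0, hi=5.0):
    """Basic global-best particle swarm; returns (best_position, best_loss)."""
    rnd = random.Random(seed)
    inertia, c1, c2 = 0.729, 1.49445, 1.49445  # commonly used constants (assumed)
    pos = [[rnd.uniform(lo, hi) for _ in range(dim)] for _ in range(n_particles)]
    vel = [[rnd.uniform(-1.0, 1.0) for _ in range(dim)] for _ in range(n_particles)]
    best_pos = [p[:] for p in pos]
    best_val = [loss_fn(p) for p in pos]
    g = min(range(n_particles), key=lambda i: best_val[i])
    g_pos, g_val = best_pos[g][:], best_val[g]
    for _ in range(iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rnd.random(), rnd.random()
                vel[i][d] = (inertia * vel[i][d]
                             + c1 * r1 * (best_pos[i][d] - pos[i][d])
                             + c2 * r2 * (g_pos[d] - pos[i][d]))
                pos[i][d] += vel[i][d]
            val = loss_fn(pos[i])
            if val < best_val[i]:
                best_val[i], best_pos[i] = val, pos[i][:]
                if val < g_val:
                    g_val, g_pos = val, pos[i][:]
    return g_pos, g_val

def make_linear_data(n=100, seed=1):
    """Hypothetical data: y = 2*x1 - 1*x2 + 0.5 with inputs in [-1, 1]."""
    rnd = random.Random(seed)
    X = [[rnd.uniform(-1, 1), rnd.uniform(-1, 1)] for _ in range(n)]
    y = [2.0 * a - 1.0 * b + 0.5 for a, b in X]
    return X, y

if __name__ == "__main__":
    X, y = make_linear_data()
    params, loss = pso_minimize(lambda p: svr_loss(p, X, y), dim=3)
    print("weights and bias:", params, "loss:", loss)
```

Because the model is a weighted sum of the inputs, it can only fit linear relationships regardless of the optimizer used, which is consistent with the demo's difficulty on non-linear data.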