Global investment in AI is surging, with Nvidia leading the way. Concerns about ethics and inclusivity are being overshadowed amid the AI frenzy.

The home secretary faces pressure over policing as a result of early-release proposals. Defence sources predict the UK will commit at the Nato summit to spending 3.5% of GDP on defence by 2035. The Lords have challenged the government over the data bill, accusing it of failing to protect the creative industries against AI.

Fraudsters are exploiting streaming services with fake AI-generated tracks, hurting independent artists. The music industry faces a battle as manipulation tactics spread on platforms such as Spotify and Apple Music.

Ewan Morrison probes the limits of generative AI after ChatGPT invents fake book titles. He refuses to use AI in his own work, wary of its potential for harm and the human costs of the technology.

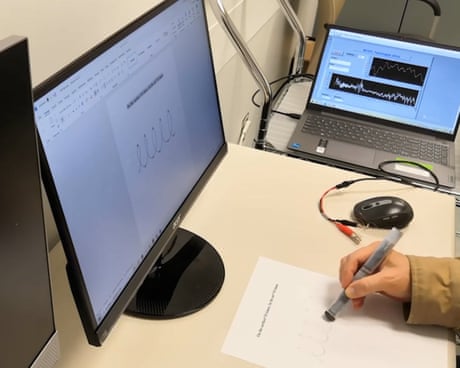

A machine-learning model analyzes the electrical signals generated during handwriting with a 3D-printed pen containing magnetic ink to detect Parkinson's tremors. Early diagnosis helps the 10 million people worldwide living with Parkinson's disease access support.

A research team from the Olivetti Group and the MIT Concrete Sustainability Hub (CSHub) used AI to find sustainable alternatives to cement in concrete, identifying ceramics and mining byproducts as viable options. Their machine-learning framework sorted through more than 1 million rock samples to identify 19 types of materials that can reduce costs and emissions in concrete production.

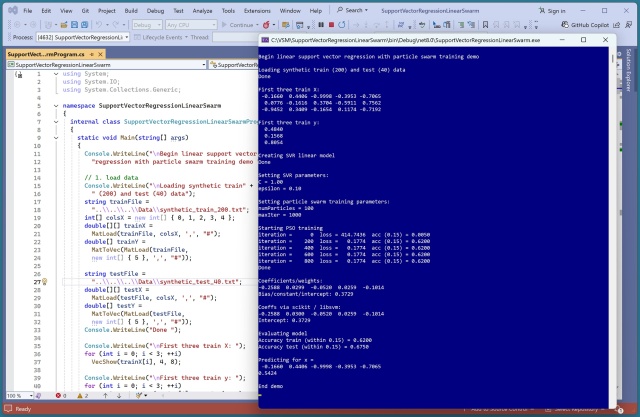

Training linear support vector regression (SVR) is challenging because its loss function is not differentiable everywhere, so calculus-based methods cannot be applied directly. Particle swarm optimization (PSO) proved more effective than evolutionary algorithms for training linear SVR models.
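To make the idea concrete, here is a minimal sketch of training a linear SVR with a basic global-best PSO. It assumes the common regularized ε-insensitive objective, 0.5·‖w‖² + C · mean(max(0, |y − (w·x + b)| − ε)), whose kinks at |residual| = ε are what rule out plain gradient descent. The function names, the hyperparameters (ε, C, inertia, acceleration coefficients, search bound) and the synthetic data are illustrative assumptions, not the method or settings used in the original work.

```python
import random


def eps_insensitive_objective(params, X, y, eps=0.1, C=10.0):
    """Regularized linear SVR objective (assumed form):
    0.5 * ||w||^2 + C * mean(max(0, |y - f(x)| - eps)).

    params holds the weights followed by the bias: [w_1, ..., w_d, b].
    The loss has kinks where |residual| == eps, so it is not
    differentiable everywhere.
    """
    *w, b = params
    total = 0.0
    for xi, yi in zip(X, y):
        pred = sum(wj * xj for wj, xj in zip(w, xi)) + b
        total += max(0.0, abs(yi - pred) - eps)
    reg = 0.5 * sum(wj * wj for wj in w)
    return reg + C * total / len(X)


def train_linear_svr_pso(X, y, n_particles=30, n_iters=200, inertia=0.7298,
                         c1=1.49618, c2=1.49618, bound=10.0, seed=0,
                         **loss_kwargs):
    """Minimize the SVR objective with a basic global-best PSO (derivative-free)."""
    rng = random.Random(seed)
    dim = len(X[0]) + 1  # one weight per feature plus the bias

    def f(p):
        return eps_insensitive_objective(p, X, y, **loss_kwargs)

    # Random initial positions inside [-bound, bound], zero initial velocities.
    pos = [[rng.uniform(-bound, bound) for _ in range(dim)] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]            # each particle's best position so far
    pbest_val = [f(p) for p in pos]
    g = min(range(n_particles), key=lambda i: pbest_val[i])
    gbest, gbest_val = pbest[g][:], pbest_val[g]  # swarm-wide best

    for _ in range(n_iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Velocity: inertia + pull toward personal best + pull toward global best.
                vel[i][d] = (inertia * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])
                             + c2 * r2 * (gbest[d] - pos[i][d]))
                pos[i][d] += vel[i][d]
            val = f(pos[i])
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = pos[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = pos[i][:], val
    return gbest, gbest_val


if __name__ == "__main__":
    # Hypothetical noise-free data generated from y = 2*x1 - 3*x2 + 1.
    X = [[i / 10, (i % 7) / 7] for i in range(40)]
    y = [2 * a - 3 * c + 1 for a, c in X]
    params, obj = train_linear_svr_pso(X, y)
    print("weights and bias:", params, "objective:", obj)
```

Because PSO only ever evaluates the objective, the non-differentiable ε-insensitive loss poses no special difficulty; the trade-off is many more objective evaluations than a gradient method would need. The regularization term shrinks the weights, so the recovered parameters will generally sit somewhat closer to zero than the generating coefficients.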

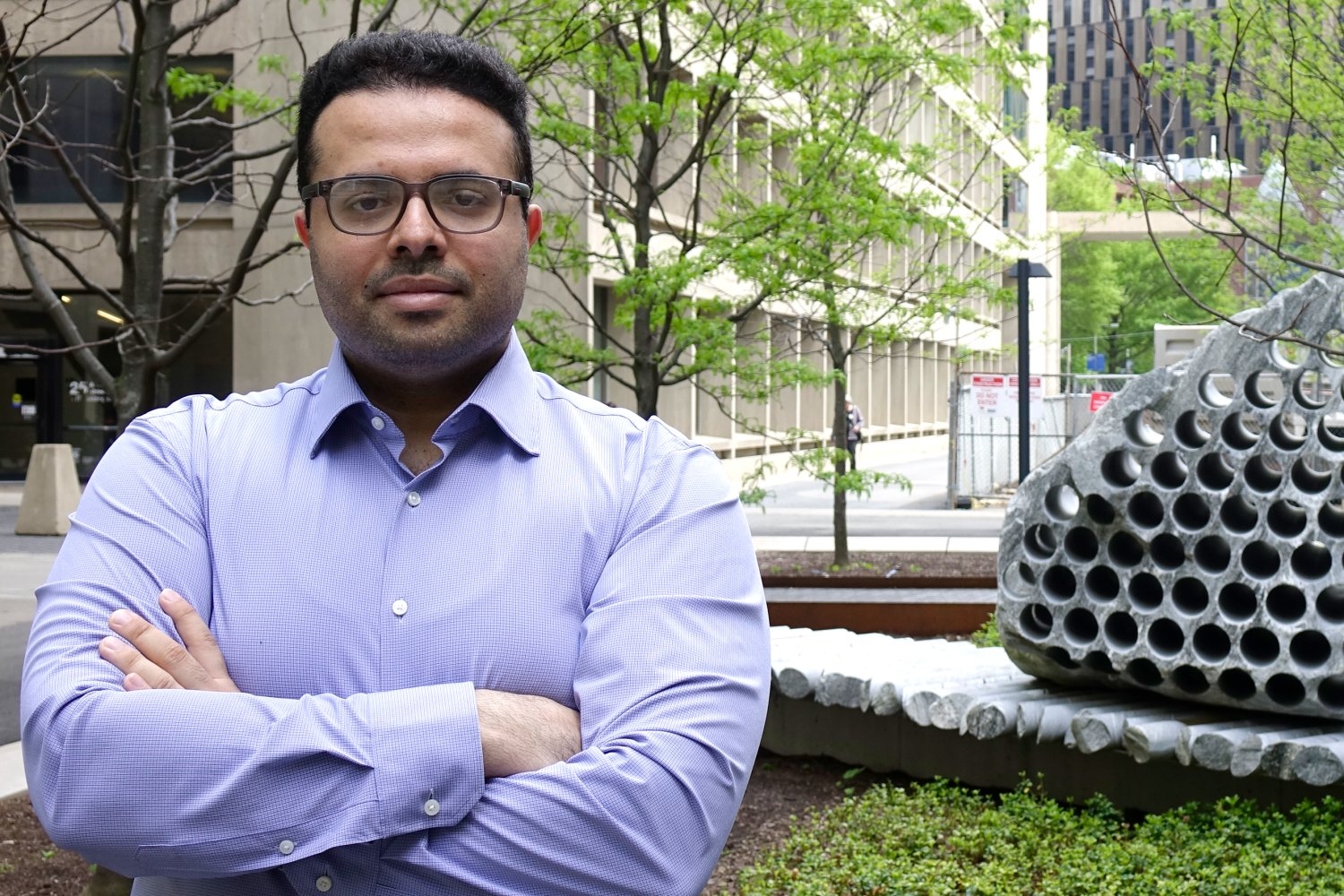

Leo Anthony Celi of MIT addresses bias in AI training data, highlighting its flaws and proposing ways to build more accurate models. He emphasizes the importance of teaching students to evaluate data thoroughly so that biases do not carry over into AI applications.

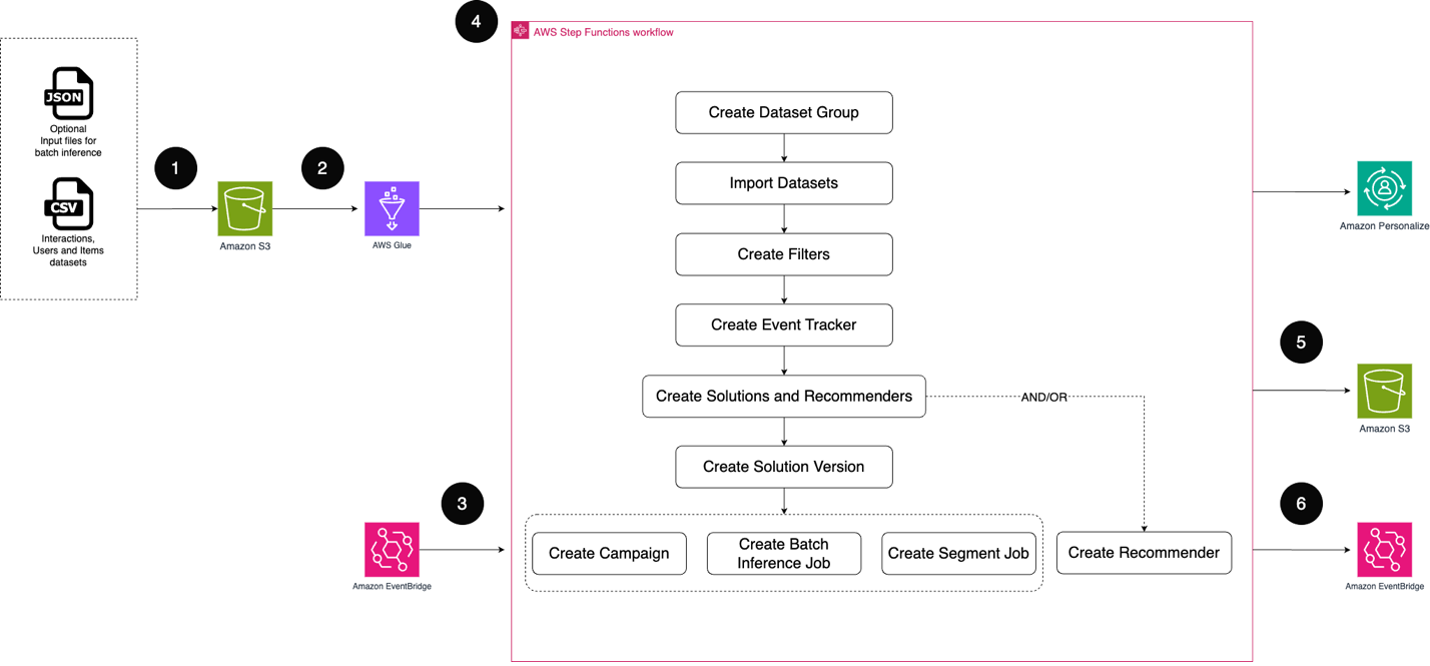

Personalized experiences boost customer engagement and loyalty. Amazon Personalize uses machine learning to generate tailored recommendations, and MLOps practices streamline the process of building and deploying them.

Researchers at MIT and Stanford have developed SketchAgent, an AI system that creates sketches stroke by stroke from natural-language prompts. The tool aims to make communicating with AI more natural through an iterative drawing process.

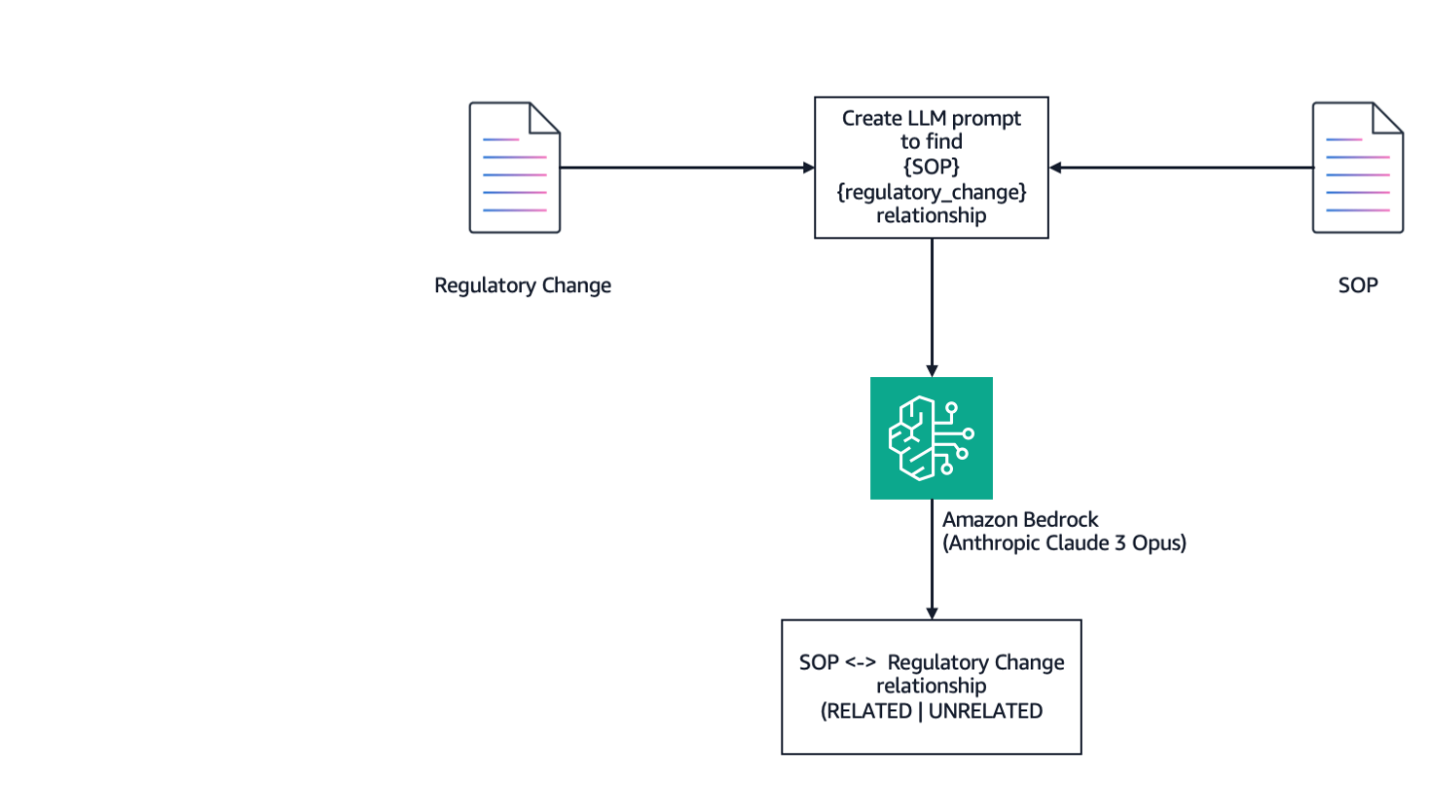

Standard operating procedures (SOPs) are crucial in FDA-regulated industries, such as healthcare and life sciences, for ensuring compliance with regulatory standards. Using Amazon Bedrock, organizations can automate the alignment of SOPs with changing regulations, streamlining processes and reducing the resources required.

Meta, the owner of Facebook and Instagram, plans to offer AI tools that help advertisers create targeted campaigns by the end of next year, a move that could disrupt the traditional marketing industry. It threatens advertising and media agencies by allowing Meta to go directly after brands' marketing budgets.

Speaking at the Hay festival, Salman Rushdie warned that AI lacks humor, but said that if it ever writes a funny book, "we're screwed." Rushdie admits he avoids AI and believes authors are safe until AI can create humor.

A radio host replaced by AI avatars and an artist copied by Midjourney: the impact of being replaced by bots. Mateusz Demski shares his experience.

Utah lawyer Richard Bednar has been sanctioned for using ChatGPT in a filing that cited a nonexistent court case. The Utah court of appeals discovered the false citations, and Bednar apologized.