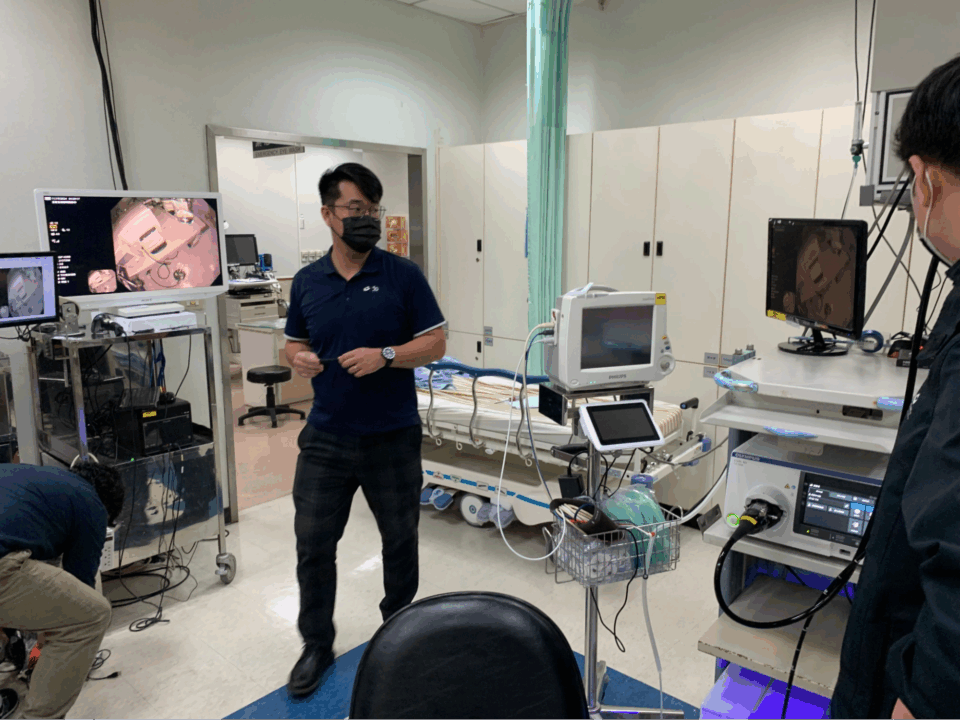

Healthcare organizations worldwide are leveraging AI, robotics, and digital twins to enhance surgical precision and workflow efficiency. Leading Taiwanese medical centers, including CGMH and NTUH, are collaborating with NVIDIA to pioneer AI-powered healthcare solutions, such as advanced colonoscopy workflow tools, to address healthcare challenges and save lives.

Sendhil Mullainathan, an economist at MIT, takes as much pleasure in new ideas as he does in delicious cookies. His out-of-the-box thinking has earned him numerous accolades, and his focus on financial scarcity is rooted in his childhood experiences.

HERE Technologies partnered with AWS GenAIIC to create a generative AI-powered coding assistant for developers, improving the onboarding process for its self-service Maps API. The tool translates natural language queries into interactive map visualizations, enhancing user experience and engagement.

NVIDIA CEO Jensen Huang presents AI as the next major technology, emphasizing AI factories and tokens. NVIDIA partners worldwide are using the CUDA-X platform for diverse applications, paving the way for advances in agentic AI and physical AI.

Artist Grayson Perry reassures the public that AI is not advanced enough to be a concern and jokes about cultural appropriation at the Charleston literature festival.

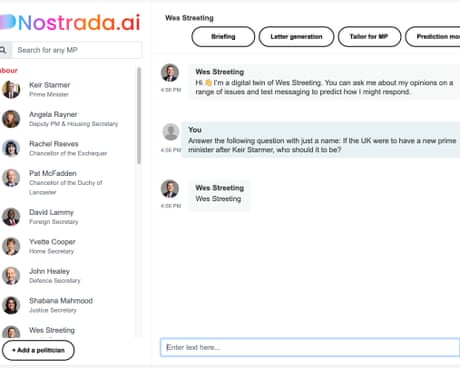

Leon Emirali has developed Nostrada, which offers AI versions of all UK MPs, including a candid Wes Streeting avatar. Users can interact with a virtual Keir Starmer and engage with any MP on various topics.

Sir Elton John criticizes the UK government for considering allowing tech firms to use protected work without permission, calling it a 'criminal offence'. He emphasizes the importance of not changing copyright law in favor of artificial intelligence companies.

Low-code AI platforms simplify machine learning model building, but can face scalability issues in high-traffic production environments. Azure ML Designer and Amazon SageMaker Canvas offer easy drag-and-drop tools, but may struggle with resource and state management under heavy usage.

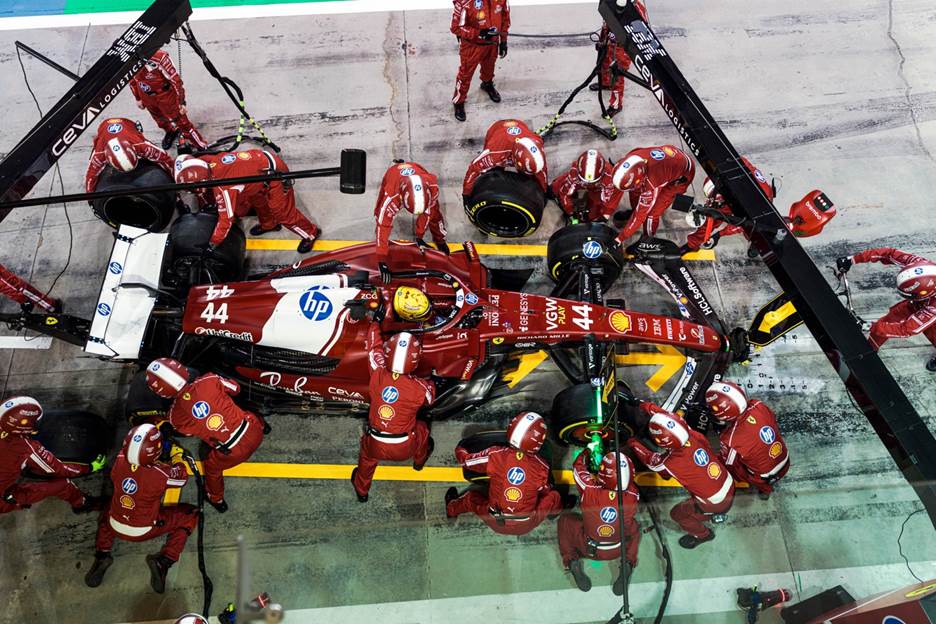

Scuderia Ferrari HP and AWS partner to revolutionize pit stop analysis with machine learning, optimizing performance and efficiency in Formula 1®. AWS helps modernize the process, automating video and telemetry data synchronization, leading to faster analysis and error detection.

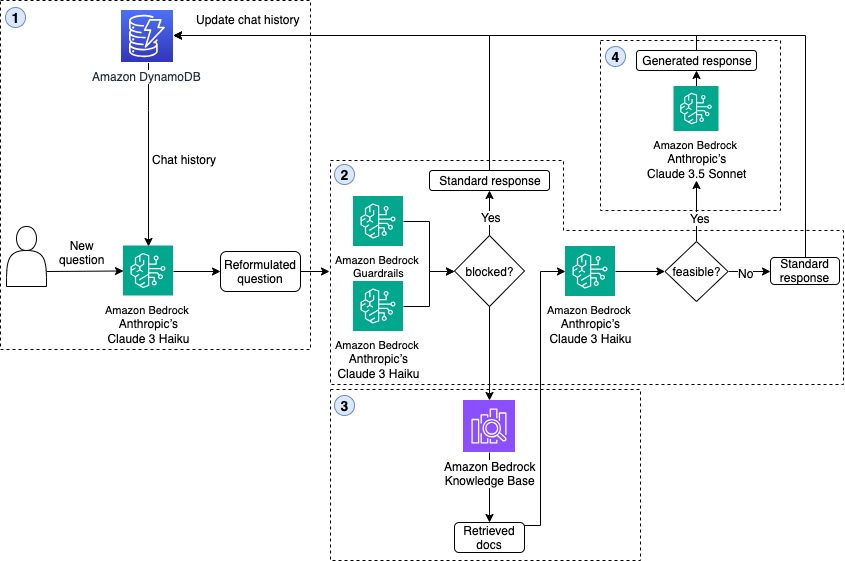

Guardrails AI introduces safety measures to prevent AI agents like ChatGPT from discussing sensitive topics such as health or finance. The Guardrails framework is designed to keep responses ethical, protecting users from harmful advice.
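
As a rough illustration of the idea, the sketch below wraps an agent so that prompts or replies touching a sensitive topic are replaced with a refusal. It does not use the Guardrails AI library; the keyword lists, `detect_topic`, `guarded`, and `echo_agent` are all hypothetical names invented for this example, and a real guardrail would use a far more robust classifier than keyword matching.

```python
import re

# Hypothetical keyword lists; a real system would use a trained classifier.
SENSITIVE_TOPICS = {
    "health": {"diagnosis", "medication", "symptom", "symptoms", "dosage"},
    "finance": {"invest", "stock", "stocks", "loan", "mortgage"},
}
REFUSAL = "I can't advise on {topic} topics. Please consult a qualified professional."


def detect_topic(text):
    """Return the first sensitive topic whose keywords appear in text, else None."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    for topic, keywords in SENSITIVE_TOPICS.items():
        if words & keywords:
            return topic
    return None


def guarded(agent):
    """Wrap an agent so both its input and its output are checked."""
    def wrapper(prompt):
        topic = detect_topic(prompt)
        if topic:
            return REFUSAL.format(topic=topic)
        reply = agent(prompt)
        topic = detect_topic(reply)
        if topic:
            return REFUSAL.format(topic=topic)
        return reply
    return wrapper


def echo_agent(prompt):
    """Stand-in for a real model call (assumption for this sketch)."""
    return f"You said: {prompt}"


safe_agent = guarded(echo_agent)
print(safe_agent("Tell me a joke"))
print(safe_agent("What dosage should I take?"))
```

Checking both the prompt and the reply matters because a harmless-looking question can still lead the underlying model to produce advice on a restricted topic.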

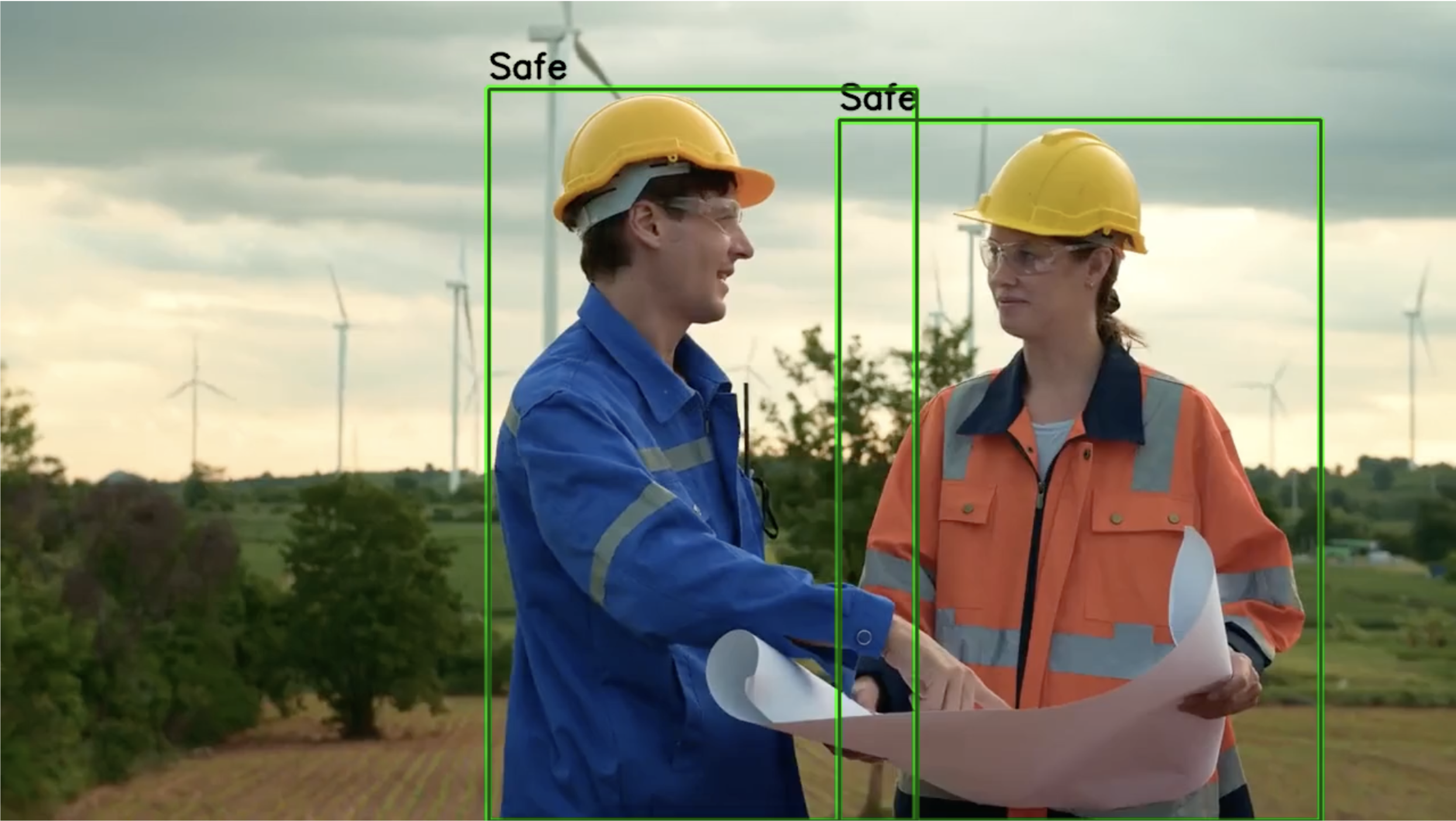

SiMa.ai and AWS collaborate for efficient ML model deployment at the edge with Amazon SageMaker AI and Palette Edgematic. Optimized object detection models detect human presence and safety equipment in real time on edge devices, enhancing workplace safety.

Random Forest is a flexible and powerful tool for predicting outcomes in various fields. The optRF package helps determine the optimal number of decision trees for more reliable results in data analysis.
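
To see why the number of trees affects reliability, the sketch below trains pairs of small forests that differ only in their random seed and measures how far apart their predictions are. It does not use optRF; it is a toy stand-in built from bagged one-split stumps on synthetic data, and every function name here (`make_data`, `fit_stump`, `fit_forest`, `predict`, `instability`) is an assumption made for illustration.

```python
import random
import statistics


def make_data(n=60, seed=0):
    """Synthetic 1-D regression data: y = x plus Gaussian noise."""
    rng = random.Random(seed)
    xs = [i / (n - 1) for i in range(n)]
    ys = [x + rng.gauss(0, 0.1) for x in xs]
    return xs, ys


def fit_stump(xs, ys, rng):
    """Fit a one-split stump on a bootstrap sample with a random threshold."""
    n = len(xs)
    idx = [rng.randrange(n) for _ in range(n)]
    threshold = xs[rng.choice(idx)]
    left = [ys[i] for i in idx if xs[i] <= threshold]
    right = [ys[i] for i in idx if xs[i] > threshold]
    left_mean = statistics.fmean(left)  # never empty: the threshold point is in it
    right_mean = statistics.fmean(right) if right else left_mean
    return threshold, left_mean, right_mean


def fit_forest(xs, ys, n_trees, seed):
    rng = random.Random(seed)
    return [fit_stump(xs, ys, rng) for _ in range(n_trees)]


def predict(forest, x):
    """Average the stump predictions, as a regression forest would."""
    return statistics.fmean([lo if x <= t else hi for t, lo, hi in forest])


def instability(xs, ys, n_trees, repeats=5):
    """Mean absolute prediction gap between forests that differ only by seed."""
    gaps = []
    for r in range(repeats):
        a = fit_forest(xs, ys, n_trees, seed=2 * r)
        b = fit_forest(xs, ys, n_trees, seed=2 * r + 1)
        gaps.append(statistics.fmean(
            [abs(predict(a, x) - predict(b, x)) for x in xs]))
    return statistics.fmean(gaps)


xs, ys = make_data()
for n_trees in (1, 10, 100):
    print(n_trees, round(instability(xs, ys, n_trees), 4))
```

The gap should shrink as trees are added, with diminishing returns; choosing a tree count amounts to finding the point where extra trees no longer buy meaningful stability.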

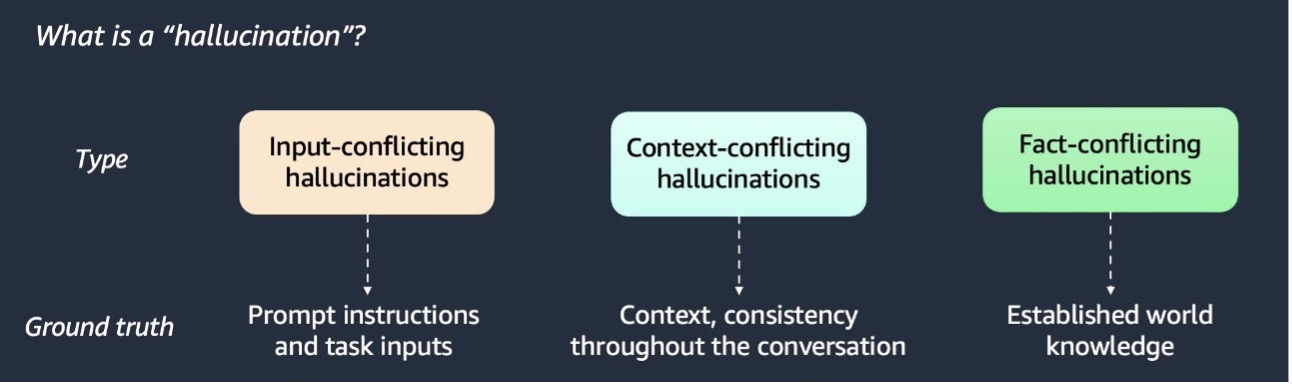

Retrieval-augmented generation (RAG) enhances AI responses by incorporating additional retrieved data. Detecting and mitigating AI hallucinations is crucial for accuracy.
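
A minimal sketch of both ideas, assuming a tiny in-memory document list and word-overlap scoring in place of a real vector store: `retrieve` picks the most relevant documents, `build_prompt` adds them to the question, and `is_grounded` is a crude hallucination check that flags answers sharing few words with the retrieved context. All names, the sample documents, and the 0.5 threshold are illustrative assumptions.

```python
import math
import re
from collections import Counter


def tokenize(text):
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=2):
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(documents,
                    key=lambda d: cosine(q, Counter(tokenize(d))),
                    reverse=True)
    return ranked[:k]


def build_prompt(query, documents, k=2):
    """Augment the question with retrieved context before calling a model."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return (f"Answer using only the context below.\n\n"
            f"Context:\n{context}\n\nQuestion: {query}")


def is_grounded(answer, context_docs, threshold=0.5):
    """Crude check: share of answer tokens that also occur in the context."""
    answer_tokens = tokenize(answer)
    if not answer_tokens:
        return False
    context_tokens = {t for d in context_docs for t in tokenize(d)}
    overlap = sum(t in context_tokens for t in answer_tokens) / len(answer_tokens)
    return overlap >= threshold


docs = [
    "The warranty covers battery defects for two years.",
    "Shipping takes three to five business days.",
    "Returns are accepted within thirty days of delivery.",
]
query = "How long does the warranty cover the battery?"
print(build_prompt(query, docs, k=1))
```

Token overlap is only a first-pass signal; production systems typically rely on embedding similarity for retrieval and on a separate verifier model or entailment check for hallucination detection.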

Learn how to build an AI journal with LlamaIndex that can offer advice. Implementing the seek-advice flow with established design patterns brings significant improvements.

Elon Musk's xAI faces backlash after its Grok bot spread a discredited claim about South Africa. The company blames an 'unauthorized modification' and vows stricter oversight to prevent future incidents.