Open foundation models (FMs) let organizations build customized AI applications, but deploying them can be complex. Amazon Bedrock Custom Model Import simplifies deployment and adds automatic scaling and cost efficiency, making it an attractive option.

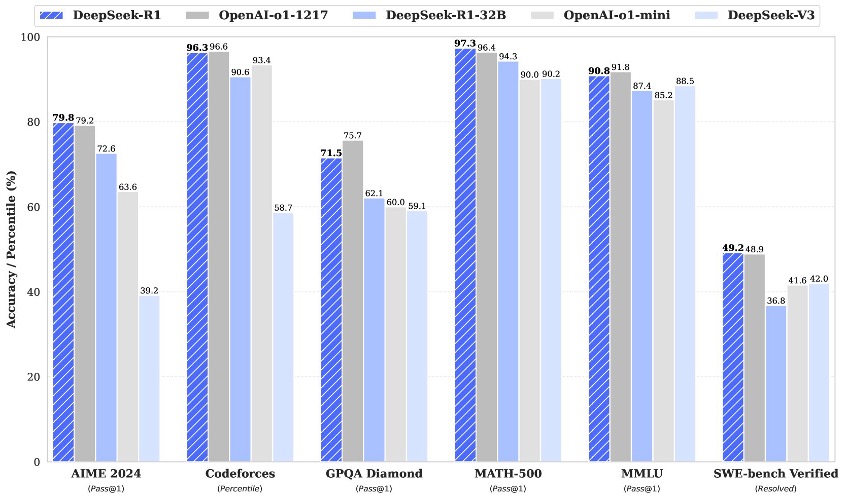

DeepSeek-R1 by DeepSeek AI integrates reinforcement learning for refined outputs. Models like DeepSeek-V3 use a Mixture of Experts (MoE) architecture for efficient scaling.

Parquet, a column-oriented format, improves Big Data performance with faster queries and reduced storage. Python tools like PyArrow let you dissect Parquet files to understand and manipulate them, and can be more efficient than going through Pandas.
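To make the column-oriented idea concrete, here is a toy sketch in plain Python. It is not the Parquet format and it does not use the PyArrow API; the sample records and the helper names `to_columns` and `run_length_encode` are made up for illustration. It only shows why storing values column by column can help: a query on one column reads just that column, and repeated values end up adjacent, which is what encodings such as run-length encoding exploit.

```python
# Toy illustration of row-oriented vs column-oriented storage.
# NOT the Parquet format or the PyArrow API; all names are hypothetical.

rows = [
    {"id": 1, "country": "DE", "amount": 10.0},
    {"id": 2, "country": "DE", "amount": 12.5},
    {"id": 3, "country": "FR", "amount": 7.0},
    {"id": 4, "country": "FR", "amount": 3.5},
]


def to_columns(records):
    """Pivot a list of row dicts into one list of values per column."""
    columns = {}
    for record in records:
        for name, value in record.items():
            columns.setdefault(name, []).append(value)
    return columns


def run_length_encode(values):
    """Collapse consecutive repeats into [value, count] pairs."""
    encoded = []
    for value in values:
        if encoded and encoded[-1][0] == value:
            encoded[-1][1] += 1
        else:
            encoded.append([value, 1])
    return encoded


columns = to_columns(rows)

# A query on one column touches only that column's values.
total_amount = sum(columns["amount"])

# Repeated values sit next to each other, so they compress well.
encoded_country = run_length_encode(columns["country"])
```

In this sketch, summing `amount` never touches `id` or `country`, and the four country values shrink to two pairs. Real Parquet files add row groups, metadata, and several encodings on top of this basic layout.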

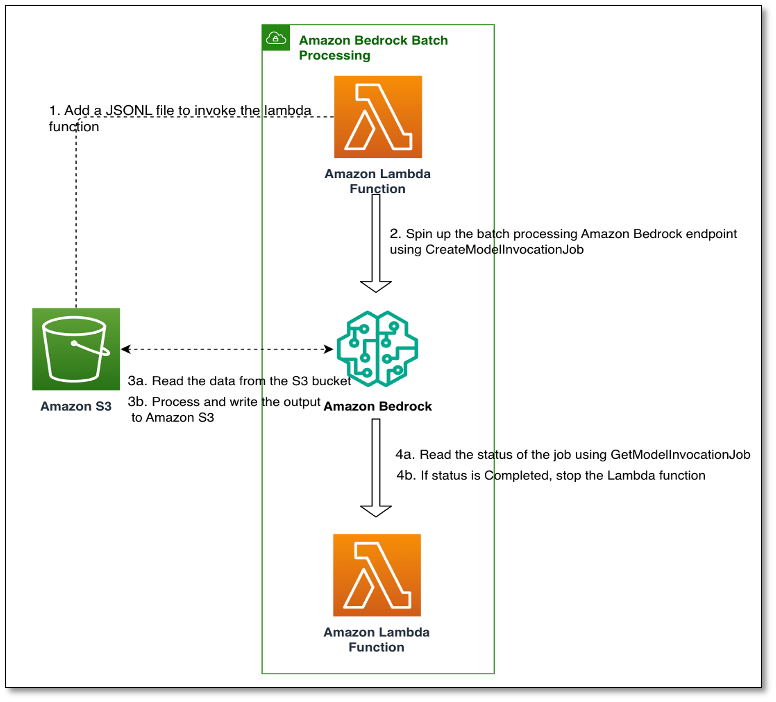

Generative AI solutions can enhance businesses by improving customer experiences. GoDaddy collaborated with the Generative AI Innovation Center to use batch inference in Amazon Bedrock to improve product categorization.

Randall Pietersen, a MathWorks Fellow at MIT and U.S. Air Force engineer, aims to develop drone-based systems for remote airfield assessment, focusing on detecting unexploded munitions using hyperspectral imaging. His multidisciplinary approach and extreme sports background contribute to cutting-edge research at MIT.

New techniques like SVF and SVFT are challenging LoRA as more parameter-efficient ways to fine-tune LLMs. Built on singular value decomposition (SVD), these alternatives offer more economical options and produce tuned models that can be composed.
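For orientation, the SVD-based parameterizations can be written out as below. This is a sketch based on how these methods are commonly described, not a statement of the original authors' exact formulation; the symbols $z$, $M$, $A$, and $B$ are notation chosen here.

$$
W = U \Sigma V^\top, \qquad \Sigma = \operatorname{diag}(\sigma_1, \dots, \sigma_r)
$$

$$
\text{LoRA:}\quad W' = W + BA, \qquad B \in \mathbb{R}^{m \times k},\ A \in \mathbb{R}^{k \times n} \text{ trainable}
$$

$$
\text{SVF:}\quad W' = U \operatorname{diag}(\sigma \odot z)\, V^\top, \qquad z \in \mathbb{R}^{r} \text{ trainable}
$$

$$
\text{SVFT:}\quad W' = U (\Sigma + M)\, V^\top, \qquad M \text{ sparse and trainable}
$$

Under this reading, SVF trains only $r$ scaling factors per weight matrix while $U$ and $V$ stay frozen, which is far fewer parameters than the two low-rank factors LoRA trains. Since each tuned model is just a vector $z$ over the same frozen basis, separately trained vectors can plausibly be mixed, which is one way to interpret the composability claim.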

The company behind ChatGPT reveals an AI model that is 'good at creative writing'. AI as 'alternative intelligence' can offer what the human race needs for progress.

OpenAI CEO Sam Altman is impressed by the company's new creative writing AI model. The model produces moving metafictional stories on grief.

Discover the power of Amazon Nova Canvas with curated AI-generated images and their prompts. From landscapes to character portraits, explore the creative possibilities with this innovative tool. Unlock your creativity and optimize workflows with practical guidance on crafting effective prompts for Amazon Nova Canvas.

OpenAI, the maker of ChatGPT, reveals an AI model that excels at creative writing. Jeanette Winterson finds its metafictional story about grief striking and original.

Techno-optimism resurges as billionaires envision a golden age through technology. San Francisco AI scenesters advocate for maximum acceleration of technological advancement in a viral manifesto.

Enthusiasts experiment with AI chatbot to recreate classic arcade games, with mixed results. Microsoft, Google, and xAI's Grok chatbot enable creation of virtual worlds and old arcade game clones, like a Pac-Man replica.

RAG and fine-tuning are two methods for enhancing Large Language Models like ChatGPT and Gemini. RAG augments the input by retrieving data from external knowledge sources, giving the model access to up-to-date information without retraining, while fine-tuning adapts the model itself to specific requirements. Both extend LLM capabilities for a range of applications.
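The retrieval half of RAG can be sketched in a few lines of standard-library Python. This is a minimal illustration under stated assumptions, not a production design: the `DOCUMENTS` list, the word-count similarity, and the function names `retrieve` and `build_prompt` are all hypothetical, and a real system would typically use learned embeddings and a vector store, then send the prompt to an LLM.

```python
import math
import re
from collections import Counter

# Hypothetical in-memory knowledge base; real systems use a vector store.
DOCUMENTS = [
    "The 2024 pricing page lists the Pro plan at 20 dollars per month.",
    "Support is available by email on weekdays.",
    "The Pro plan includes priority support and extra storage.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(count * b[word] for word, count in a.items() if word in b)
    norm_a = math.sqrt(sum(count * count for count in a.values()))
    norm_b = math.sqrt(sum(count * count for count in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(question, documents, top_k=1):
    """Return the top_k documents most similar to the question."""
    query = Counter(tokenize(question))
    ranked = sorted(
        documents,
        key=lambda doc: cosine_similarity(query, Counter(tokenize(doc))),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question, documents, top_k=1):
    """Prepend retrieved context to the question; the model is unchanged."""
    context = "\n".join(retrieve(question, documents, top_k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


prompt = build_prompt("How much does the Pro plan cost per month?", DOCUMENTS)
```

Updating what the model can answer here means editing `DOCUMENTS`, not retraining anything. Fine-tuning works the other way around: the prompt stays the same and the model's weights change.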

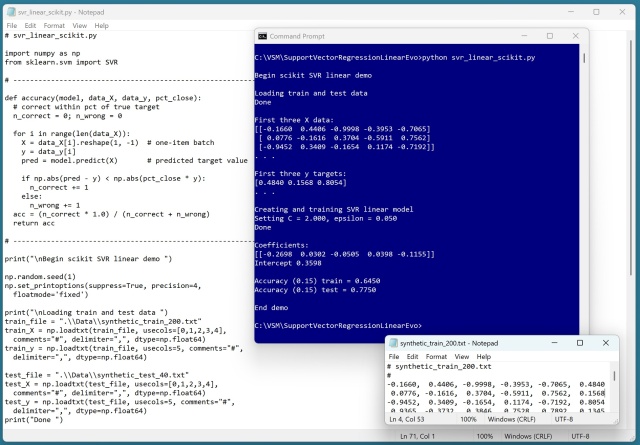

Support Vector Regression (SVR) with a linear kernel ignores errors smaller than epsilon and penalizes points that fall outside that margin, with the strength of the penalty controlled by C. Although more complex, SVR yields results similar to plain linear regression on linear data, which makes it less practical there.
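The roles of C and epsilon are easiest to see in the loss itself. The sketch below writes out the standard epsilon-insensitive loss and the linear SVR objective in plain Python; the function names and the sample numbers are chosen here for illustration, and no fitting is performed.

```python
def epsilon_insensitive_loss(y_true, y_pred, epsilon):
    """Zero inside the epsilon tube, linear in the error outside it."""
    return max(0.0, abs(y_true - y_pred) - epsilon)


def svr_objective(weights, bias, samples, targets, C, epsilon):
    """Linear SVR objective: 0.5 * ||w||^2 + C * sum of tube violations."""
    regularizer = 0.5 * sum(w * w for w in weights)
    total_loss = 0.0
    for features, target in zip(samples, targets):
        prediction = sum(w * x for w, x in zip(weights, features)) + bias
        total_loss += epsilon_insensitive_loss(target, prediction, epsilon)
    return regularizer + C * total_loss


# A point within epsilon of the prediction costs nothing.
inside = epsilon_insensitive_loss(1.0, 1.05, epsilon=0.1)

# A point outside the tube is charged only for the excess over epsilon.
outside = epsilon_insensitive_loss(1.0, 3.0, epsilon=0.5)

# Hypothetical one-feature example: y = 2x fits the first point exactly
# and misses the second by 2.0, of which 0.5 is tolerated.
objective = svr_objective(
    weights=[2.0], bias=0.0,
    samples=[[1.0], [2.0]], targets=[2.0, 6.0],
    C=10.0, epsilon=0.5,
)
```

A larger C makes each violation more expensive relative to the regularizer, and a larger epsilon widens the band of errors that cost nothing. With a small epsilon on data that really is linear, the fitted line tends to land close to the ordinary least-squares fit, which is the point the summary makes.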

Transitioning from Data Analyst to Data Scientist can be a smart career move. Marina from Amazon provides tips on skills, resources, and strategies for success.