The Wall Street Journal's heatmaps show the impact of vaccines on diseases in the US. Matplotlib's pcolormesh() can recreate the measles heatmap, showcasing the power of data storytelling.
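
As a rough illustration of the pcolormesh() approach, the sketch below draws a state-by-year heatmap. It is not the article's code: the state labels, the year range, the random values, the colormap, and the helper name `draw_heatmap` are all placeholders chosen for this example, and real incidence data would have to be loaded in their place.

```python
import numpy as np
import matplotlib

matplotlib.use("Agg")  # headless backend so the sketch runs without a display
import matplotlib.pyplot as plt


def draw_heatmap(values, years, labels):
    """Draw one row per label and one column per year with pcolormesh."""
    fig, ax = plt.subplots(figsize=(8, 3))
    rows = np.arange(len(labels))
    # shading="nearest" centers each cell on its (year, row) coordinate
    mesh = ax.pcolormesh(years, rows, values, cmap="viridis", shading="nearest")
    ax.set_yticks(rows)
    ax.set_yticklabels(labels)
    ax.invert_yaxis()  # first label at the top, as in a table
    ax.set_xlabel("Year")
    fig.colorbar(mesh, ax=ax, label="Cases per 100,000 (synthetic)")
    return fig, mesh


# Synthetic placeholder data, NOT real measles figures
rng = np.random.default_rng(0)
years = np.arange(1950, 1960)
labels = ["State A", "State B", "State C"]
values = rng.uniform(0, 100, size=(len(labels), len(years)))

fig, mesh = draw_heatmap(values, years, labels)
fig.savefig("heatmap.png", dpi=100)
```

With real data, a vertical line at the year a vaccine was introduced (for example via `ax.axvline`) is one way to make the before-and-after contrast visible.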

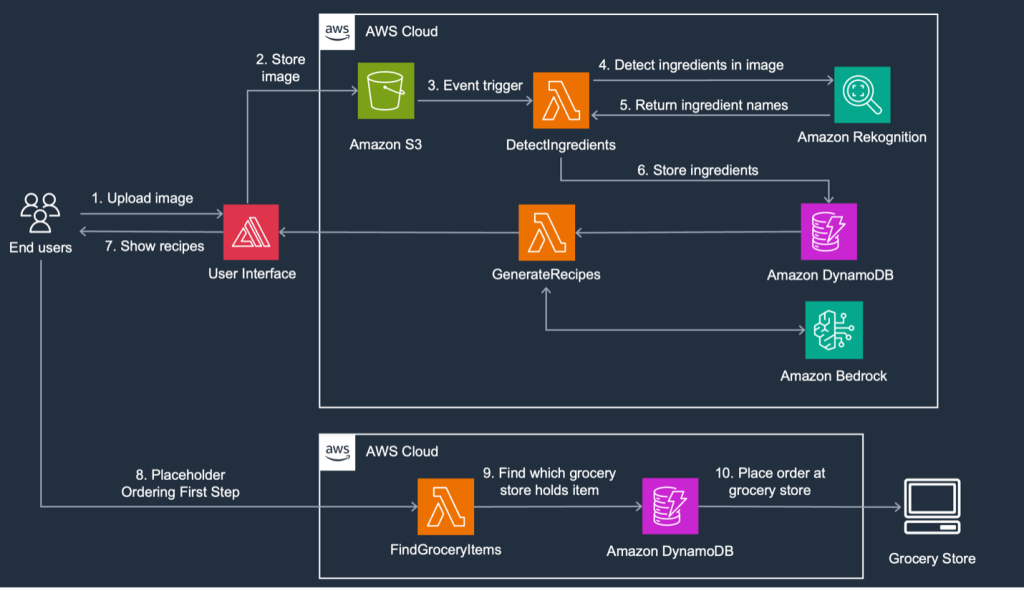

FoodSavr, a solution using generative AI on AWS, recommends recipes based on fridge contents and expiring items in local stores, reducing food waste and saving money. By utilizing Amazon Rekognition and Amazon Bedrock, users can upload fridge images to receive personalized recipes and nearby grocery store suggestions.

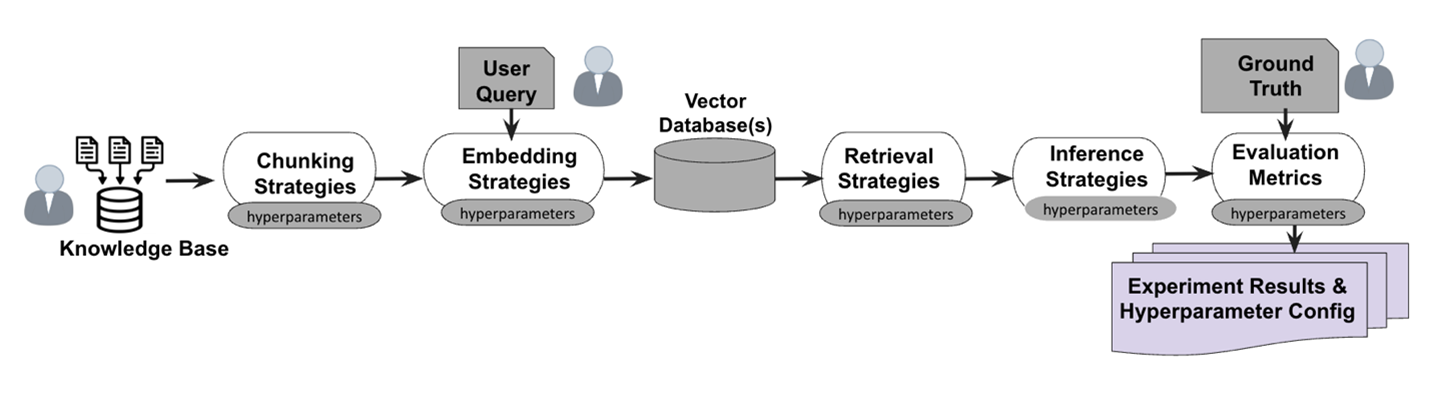

FloTorch compared Amazon Nova models with OpenAI’s GPT-4o, finding Amazon Nova Pro faster and more cost-effective. Amazon Nova Micro and Amazon Nova Lite also outperformed GPT-4o-mini in accuracy and affordability.

Microsoft and Google have unveiled new AI models that simulate video game worlds. Microsoft's Muse tool promises to revolutionize game development by letting designers experiment with AI-generated gameplay videos based on real gameplay data from Ninja Theory's Bleeding Edge.

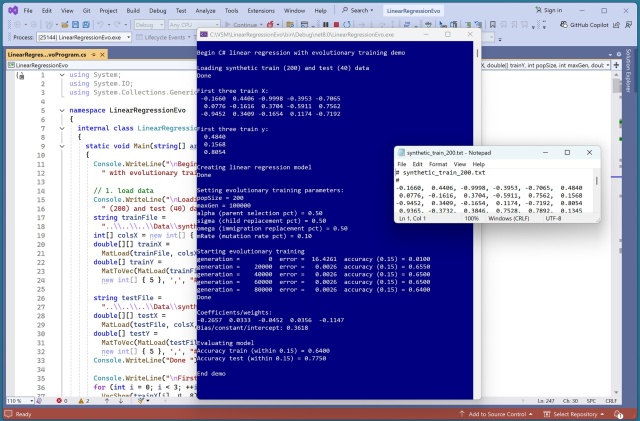

A demo showcases evolutionary training for linear regression using C#. It uses a neural network to generate synthetic data. In the demo, the evolutionary algorithm outperforms traditional training methods in accuracy.
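
The general idea of evolutionary training can be sketched as follows. This is a minimal Python illustration, not the demo's C# code: the population size, the mutation scale, the crossover rule, and the function names are assumptions, and the synthetic data comes from a plain linear function with noise rather than from a neural network.

```python
import random


def mse(weights, bias, xs, ys):
    """Mean squared error of a linear model on the data."""
    total = 0.0
    for x, y in zip(xs, ys):
        pred = bias + sum(w * xi for w, xi in zip(weights, x))
        total += (pred - y) ** 2
    return total / len(xs)


def evolve(xs, ys, pop_size=30, generations=200, sigma=0.1, seed=0):
    """Evolve [w_1, ..., w_d, bias] vectors toward low MSE."""
    rng = random.Random(seed)
    dim = len(xs[0])
    pop = [[rng.uniform(-1, 1) for _ in range(dim + 1)] for _ in range(pop_size)]

    def fitness(ind):
        return mse(ind[:-1], ind[-1], xs, ys)

    for _ in range(generations):
        pop.sort(key=fitness)
        parents = pop[: pop_size // 2]  # the better half survives unchanged
        children = []
        while len(parents) + len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            # crossover: pick each gene from one parent, then add Gaussian mutation
            child = [rng.choice(pair) + rng.gauss(0, sigma) for pair in zip(a, b)]
            children.append(child)
        pop = parents + children
    best = min(pop, key=fitness)
    return best[:-1], best[-1]


# Synthetic data from y = 0.5*x1 - 0.3*x2 + 0.2 plus small noise (illustrative values)
data_rng = random.Random(1)
xs = [[data_rng.uniform(-1, 1), data_rng.uniform(-1, 1)] for _ in range(40)]
ys = [0.5 * x[0] - 0.3 * x[1] + 0.2 + data_rng.gauss(0, 0.01) for x in xs]

weights, bias = evolve(xs, ys)
print("weights:", weights, "bias:", bias, "mse:", mse(weights, bias, xs, ys))
```

No gradients are computed anywhere: candidates are only ranked by error, which is what separates this style of training from gradient descent or a closed-form least-squares fit.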

Researchers are tackling spurious regression in time series analysis, a critical and often overlooked issue with real-world implications. Understanding the concept is vital for economists, data scientists, and analysts who want to avoid drawing misleading conclusions from their models.
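
A small simulation can make the problem concrete. The sketch below is an illustration written for this summary, not material from the article: it regresses pairs of independent random walks on each other, then repeats the regression on their first differences. The trial count, series length, and function names are arbitrary choices.

```python
import random


def r_squared(x, y):
    """R^2 of a simple OLS fit of y on x (the squared correlation)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return (sxy * sxy) / (sxx * syy)


def random_walk(n, rng):
    """Cumulative sum of independent standard normal steps."""
    level, path = 0.0, []
    for _ in range(n):
        level += rng.gauss(0, 1)
        path.append(level)
    return path


def diff(series):
    """First differences of a series."""
    return [b - a for a, b in zip(series, series[1:])]


def average_r2(trials=200, n=200, seed=0):
    """Mean R^2 for unrelated walks, in levels and in first differences."""
    rng = random.Random(seed)
    levels, diffs = 0.0, 0.0
    for _ in range(trials):
        x, y = random_walk(n, rng), random_walk(n, rng)
        levels += r_squared(x, y)
        diffs += r_squared(diff(x), diff(y))
    return levels / trials, diffs / trials


mean_levels, mean_diffs = average_r2()
print(f"mean R^2 in levels: {mean_levels:.3f}")
print(f"mean R^2 in first differences: {mean_diffs:.3f}")
```

The two walks are unrelated by construction, yet the regressions in levels tend to show a sizeable R², while the differenced series show almost none. Differencing is one common remedy for non-stationary series; it is not necessarily the approach the article recommends.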

GPT-3 sparked interest in Large Language Models (LLMs) like ChatGPT. Learn how LLMs process text through tokenization and neural networks.
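
To give a feel for the tokenization step, here is a toy greedy longest-match tokenizer. It is a simplified stand-in: production LLMs typically learn their subword vocabularies with algorithms such as byte-pair encoding, and the tiny `VOCAB` table and the function names below are made up for this example.

```python
# Hypothetical toy vocabulary mapping text pieces to integer IDs
VOCAB = {"<unk>": 0, "token": 1, "ization": 2, " ": 3, "is": 4, "fun": 5}


def tokenize(text, vocab):
    """Split text into the longest vocabulary pieces, scanning left to right."""
    tokens = []
    i = 0
    while i < len(text):
        # try the longest candidate starting at position i first
        for j in range(len(text), i, -1):
            piece = text[i:j]
            if piece in vocab:
                tokens.append(piece)
                i = j
                break
        else:
            # no vocabulary piece starts here: emit the unknown token
            tokens.append("<unk>")
            i += 1
    return tokens


def encode(text, vocab=VOCAB):
    """Map text to the integer IDs a model would receive as input."""
    return [vocab[t] for t in tokenize(text, vocab)]


print(tokenize("tokenization is fun", VOCAB))
# ['token', 'ization', ' ', 'is', ' ', 'fun']
print(encode("tokenization is fun"))
# [1, 2, 3, 4, 3, 5]
```

The neural network never sees characters directly; it receives the ID sequence, looks up an embedding vector for each ID, and predicts the next ID.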

AI struggles to differentiate between similar dog breeds due to entangled features. PawMatchAI uses a unique Morphological Feature Extractor to mimic how human experts recognize breeds, focusing on structured traits.

LettuceDetect, a lightweight hallucination detector for RAG pipelines, surpasses prior models, offering efficiency and open-source accessibility. Large Language Models face hallucination challenges, but LettuceDetect helps spot and address inaccuracies, enhancing reliability in critical domains.

Octus transforms credit analysis with its AI-driven CreditAI chatbot, which offers instant insights on thousands of companies. Octus migrated CreditAI to Amazon Bedrock, enhancing performance and scalability while maintaining zero downtime.

Data team structure is crucial for leveraging Data and AI effectively. Centralized teams can become bottlenecks without proper domain expertise integration.

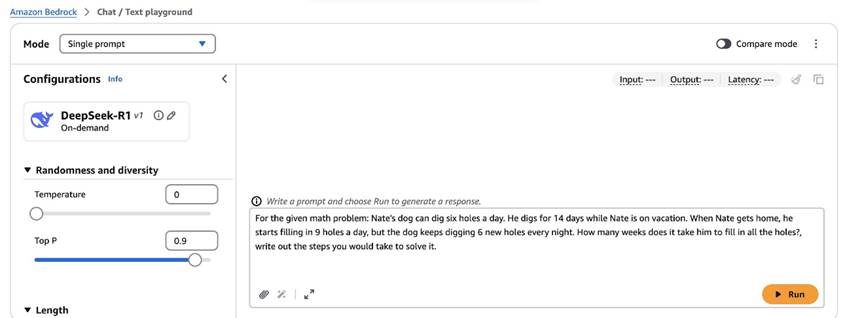

DeepSeek-R1 models on Amazon Bedrock Marketplace show impressive math benchmark performance. Prompt optimization on Amazon Bedrock can tune thinking models to produce more succinct thinking traces.

Autonomous digital assistants like Operator from OpenAI can now order groceries for users, but oversight is crucial. The AI agent can navigate websites and complete tasks, offering a new level of convenience and intrigue.

AI bots are set to assist users on dating apps by flirting, crafting messages, and writing profiles. Experts caution against relying too heavily on artificial intelligence, as it may diminish human authenticity in relationships.

MIT and NVIDIA researchers have developed a new framework that lets users correct robot behavior in real time without retraining. This intuitive method outperformed alternatives by 21%, potentially enabling laypeople to guide factory-trained robots in household tasks.