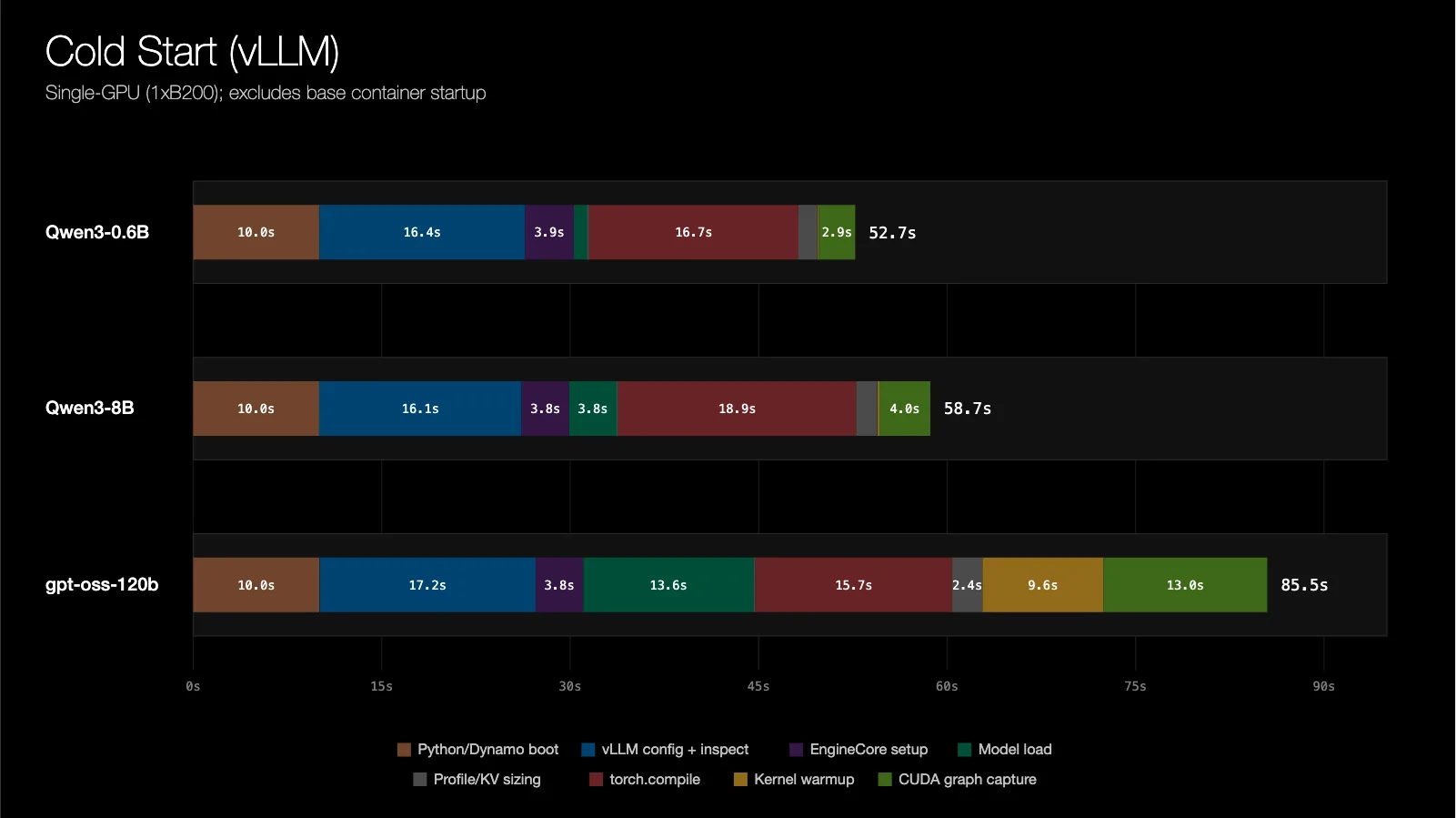

NVIDIA introduces Dynamo Snapshot for AI inference on Kubernetes, reducing cold-start latency and improving scalability during demand spikes. CRIU and cuda-checkpoint work together to checkpoint GPU and CPU states, allowing for seamless restoration and minimal downtime.

MIT's Social and Ethical Responsibilities of Computing (SERC) symposium focused on AI's impact on society, with talks on air pollution forecasting and ethical AI deployment. Panel discussions highlighted the challenges of aligning AI with human values and of governing AI systems.

GeForce NOW adds 18 new games this month, including the highly anticipated NTE: Neverness to Everness. Members can explore surreal worlds and classic remakes instantly via cloud streaming, with no downloads required.

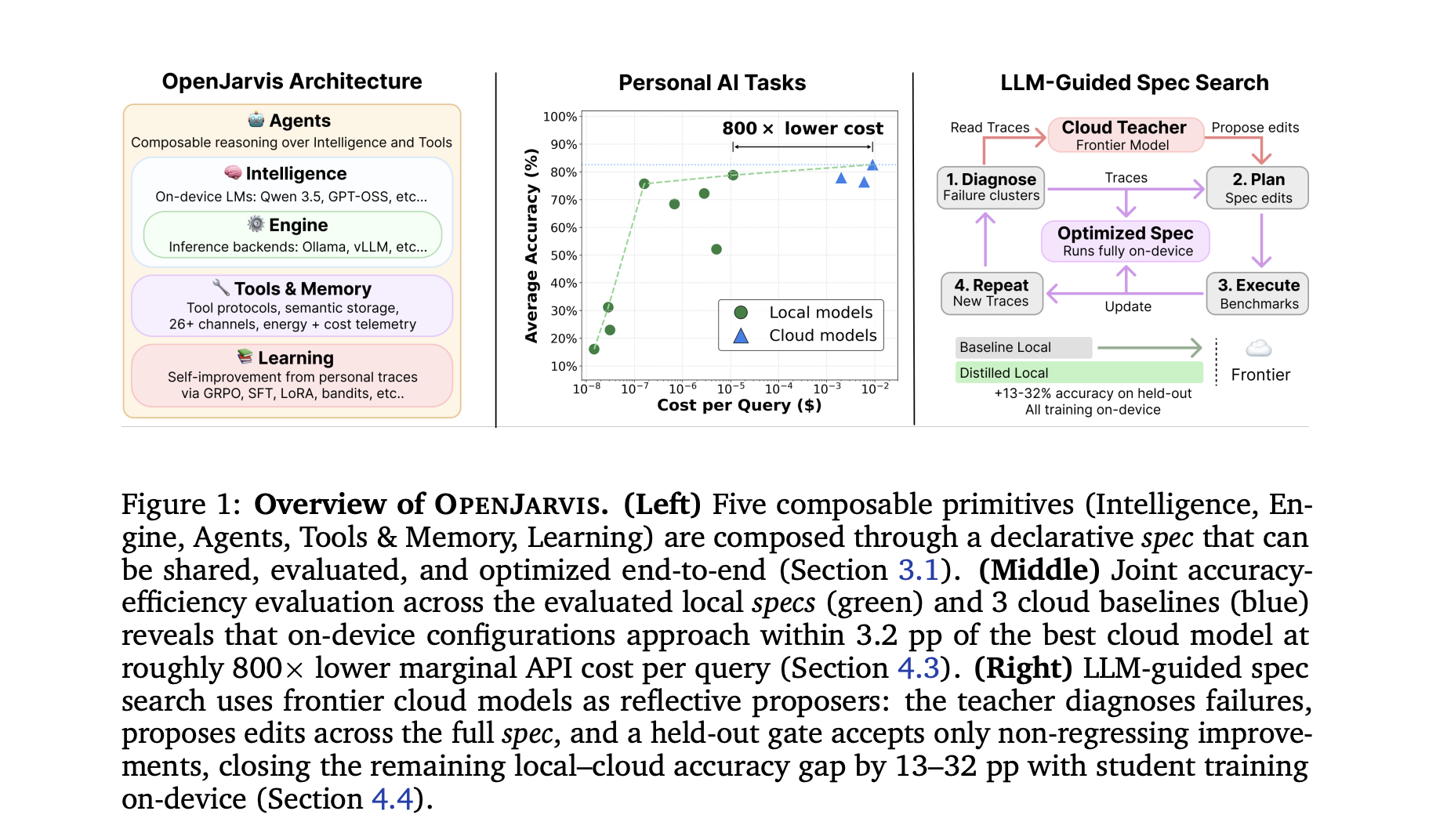

Researchers at Stanford University and Lambda Labs developed OpenJarvis, an on-device framework whose efficiency and latency rival those of cloud-hosted models. OpenJarvis makes it easy to compose models, agents, and memory, and uses a distinctive LLM-guided spec search for optimization.

MIT, Georgia State University, and partners have launched PATH to provide industry-aligned AI training for community colleges, with an emphasis on hands-on learning and collaboration. The program aims to build the practical AI skills and mindsets that prepare students for the future workforce.

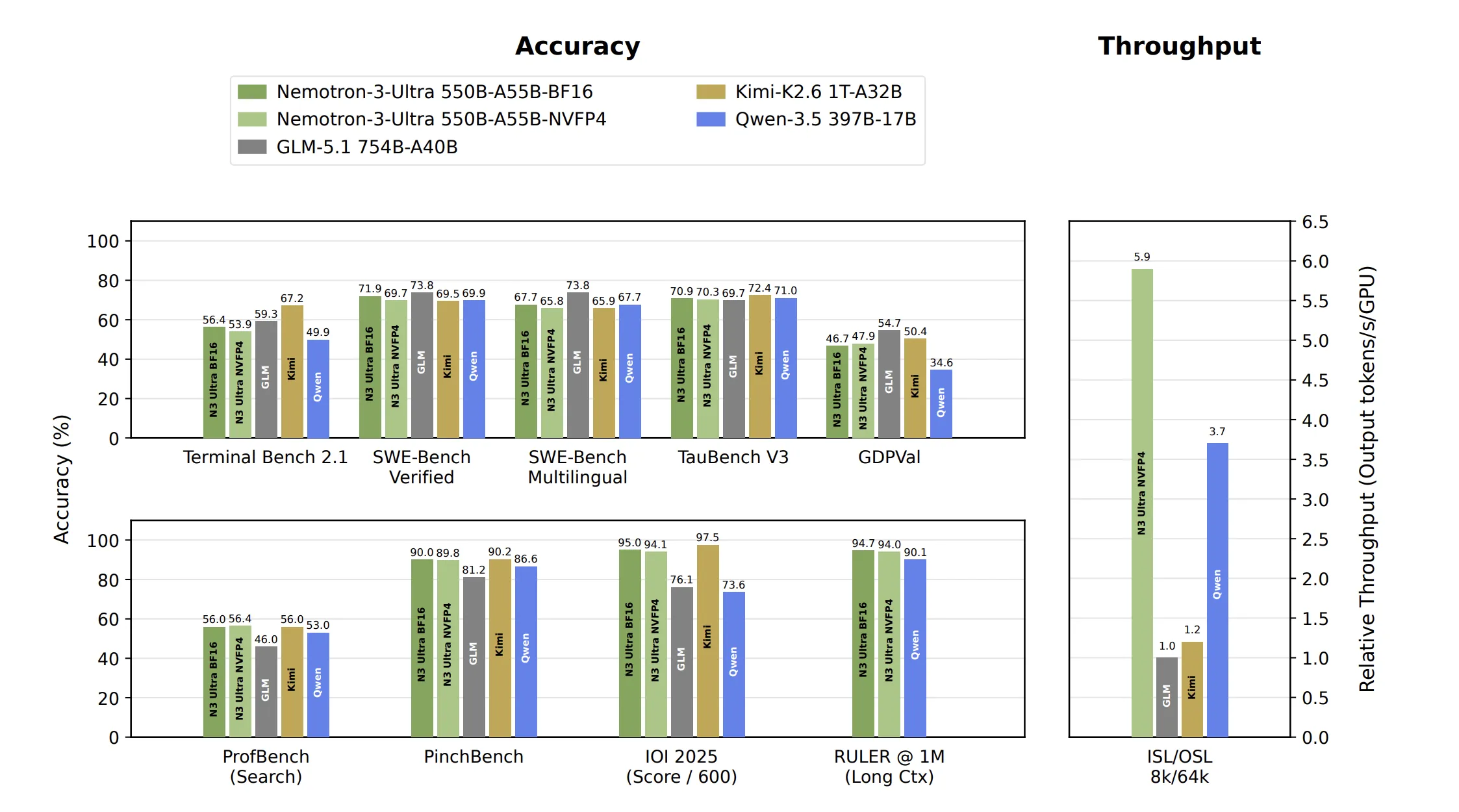

NVIDIA introduces Nemotron 3 Ultra, a 550-billion-parameter model with a hybrid Mamba-Attention architecture that offers 6x higher inference throughput. The model uses Multi-Token Prediction for faster generation and achieves stable, accurate training using the NVFP4 data type.

The NSF has renewed funding for MIT's IAIFI (the NSF AI Institute for Artificial Intelligence and Fundamental Interactions), which focuses on using AI to advance physics and using physics to improve AI. Collaborative research across physics and AI is producing groundbreaking discoveries and new scientific approaches.

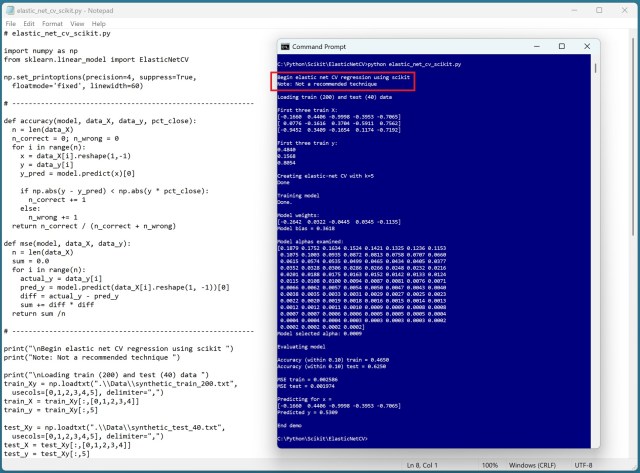

A seasoned practitioner argues that cross-validation in machine learning is ineffective, citing numerous flaws in both k-fold and leave-one-out techniques. In this view, poor generalizability and unreliable hyperparameter tuning make cross-validation a questionable practice in real-world scenarios.
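
For readers unfamiliar with the two techniques under criticism, here is a minimal, dependency-free Python sketch of how k-fold and leave-one-out cross-validation partition a dataset and average a held-out score. It assumes unshuffled, contiguous folds, and the helper names (`k_fold_indices`, `leave_one_out_indices`, `cross_validate`) are hypothetical. It only illustrates the mechanics and takes no position on the argument above.

```python
from typing import Callable, Iterator, List, Sequence, Tuple

def k_fold_indices(n: int, k: int) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train, test) index lists for k contiguous folds over n samples (no shuffling)."""
    if not 2 <= k <= n:
        raise ValueError("k must satisfy 2 <= k <= n")
    # Spread the remainder so fold sizes differ by at most one.
    fold_sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n))
        yield train, test
        start += size

def leave_one_out_indices(n: int) -> Iterator[Tuple[List[int], List[int]]]:
    """Leave-one-out is k-fold with k == n: every sample is the test set exactly once."""
    return k_fold_indices(n, n)

def cross_validate(xs: Sequence, ys: Sequence, k: int,
                   fit: Callable, score: Callable) -> float:
    """Average held-out score over k folds; `fit` and `score` are supplied by the caller."""
    scores = []
    for train, test in k_fold_indices(len(xs), k):
        model = fit([xs[i] for i in train], [ys[i] for i in train])
        scores.append(score(model, [xs[i] for i in test], [ys[i] for i in test]))
    return sum(scores) / len(scores)
```

For instance, passing a `fit` that returns the mean of the training targets and a `score` that returns negative mean squared error yields a simple baseline estimate. Because leave-one-out sets k equal to the number of samples, it trains one model per sample, which is why it becomes expensive on large datasets.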

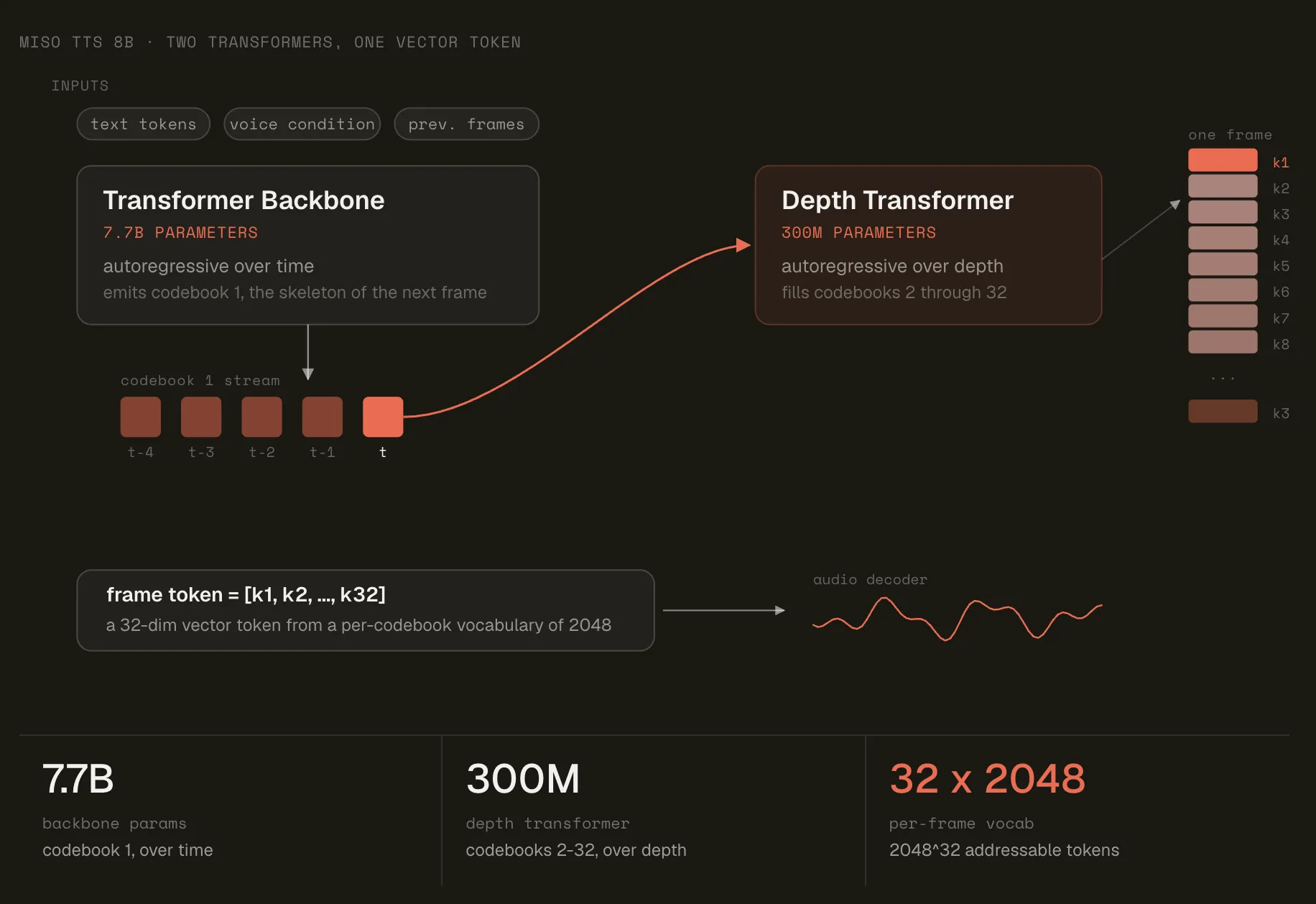

Miso Labs introduces MisoTTS, an 8-billion-parameter text-to-speech model that uses residual vector quantization (RVQ) for expressive speech generation. It tackles the vocabulary-size problem and accounts for the interlocutor's tone, with a latency of 110 ms.
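
Residual vector quantization is widely used in neural audio codecs. Rather than using one huge codebook, RVQ quantizes a vector in stages, with each stage encoding the error left over by the previous ones. A few small codebooks can therefore stand in for one very large one, which is one plausible link to the vocabulary-size problem, although the summary does not say exactly how MisoTTS applies it. The Python sketch below uses tiny hand-written codebooks (real codecs learn them), and the function names are hypothetical.

```python
from typing import List, Sequence

Vector = List[float]

def nearest(codebook: Sequence[Vector], v: Vector) -> int:
    """Index of the codeword with the smallest squared Euclidean distance to v."""
    return min(range(len(codebook)),
               key=lambda i: sum((c - x) ** 2 for c, x in zip(codebook[i], v)))

def rvq_encode(v: Vector, codebooks: Sequence[Sequence[Vector]]) -> List[int]:
    """Quantize v stage by stage; each codebook encodes what earlier stages missed."""
    residual = list(v)
    codes = []
    for cb in codebooks:
        idx = nearest(cb, residual)
        codes.append(idx)
        # Subtract the chosen codeword so the next stage sees only the remaining error.
        residual = [r - c for r, c in zip(residual, cb[idx])]
    return codes

def rvq_decode(codes: Sequence[int], codebooks: Sequence[Sequence[Vector]]) -> Vector:
    """Reconstruct by summing the chosen codeword from every stage."""
    out = [0.0] * len(codebooks[0][0])
    for idx, cb in zip(codes, codebooks):
        out = [o + c for o, c in zip(out, cb[idx])]
    return out
```

With the toy codebooks `[[0, 0], [4, 4]]` and `[[0, 0], [1, -1], [-1, 1]]`, the vector `[5, 3]` encodes to `[1, 1]` and decodes back to `[5.0, 3.0]` exactly. More generally, `S` stages of `K` codewords can address `K**S` combinations while storing only `K * S` codewords.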

Tod Machover, a music-technology pioneer at the MIT Media Lab, has been awarded the George Peabody Medal for his groundbreaking work in AI and participatory opera. Known as a musical visionary, Machover has expanded music's boundaries and its potential for everyone.

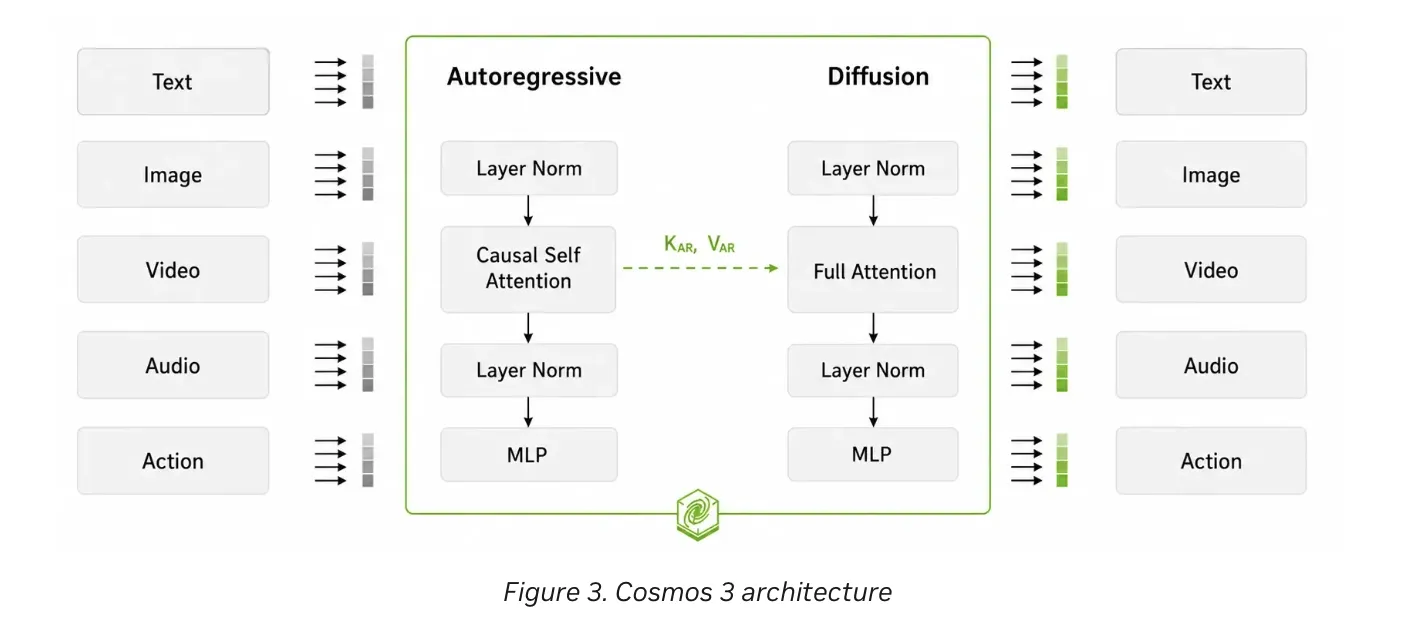

NVIDIA's AI team releases Cosmos 3, a unified model for physical AI. It combines physical reasoning, world generation, and action generation for robotics and autonomous vehicles.

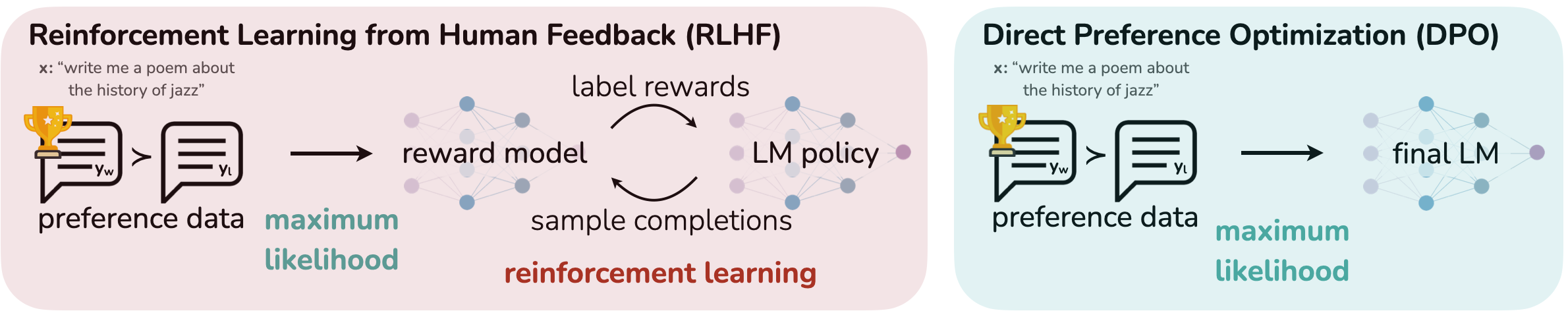

AI agents must select the right tool for each task to avoid errors and delays. Supervised fine-tuning (SFT) and Direct Preference Optimization (DPO) can improve a language model's tool-calling accuracy, making automation more reliable.
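
One common recipe, not necessarily the one the article describes, is to run SFT on demonstrations of correct tool calls and then apply DPO to pairs where, for the same prompt, a correct tool call is "chosen" and an incorrect one is "rejected". The sketch below computes the standard per-example DPO loss from sequence log-probabilities. The value `beta = 0.1` is an assumed default, and the inputs would come from the policy being trained and a frozen reference model, typically the SFT checkpoint.

```python
import math

def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def dpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """Per-example DPO loss: -log(sigmoid(beta * margin)).

    Each argument is the summed log-probability of a full response (e.g. a tool call)
    under either the trainable policy or the frozen reference model.
    """
    # How much more the policy favors the chosen response than the reference does,
    # minus the same quantity for the rejected response.
    margin = ((policy_chosen_logp - ref_chosen_logp)
              - (policy_rejected_logp - ref_rejected_logp))
    # -log(sigmoid(z)) equals softplus(-z).
    return softplus(-beta * margin)
```

When the policy and reference agree, the loss is `log 2`. With hypothetical log-probabilities such as `dpo_loss(-1.0, -10.0, -5.0, -5.0)`, where the policy has shifted probability toward the correct tool call, the loss drops below `log 2`. Minimizing the loss therefore pushes the model toward the chosen call, while the reference term keeps it anchored to the SFT behavior.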

AWS Deep Learning AMIs and AWS Deep Learning Containers now support the SOCI (Seekable OCI) snapshotter and SOCI indexes for efficient container image management. Because SOCI lazy-loads image contents on demand, containers can start before the full image has been downloaded. This reduces network bandwidth usage and speeds up startup, benefiting organizations that manage large container images in the cloud.

Researchers from MIT and the MIT-IBM Computing Research Lab developed ChartNet, a dataset and a series of open-source models that outperform commercial AI models on tasks such as chart interpretation. The work could help small firms with limited budgets use AI to analyze business trends and interpret scientific figures.

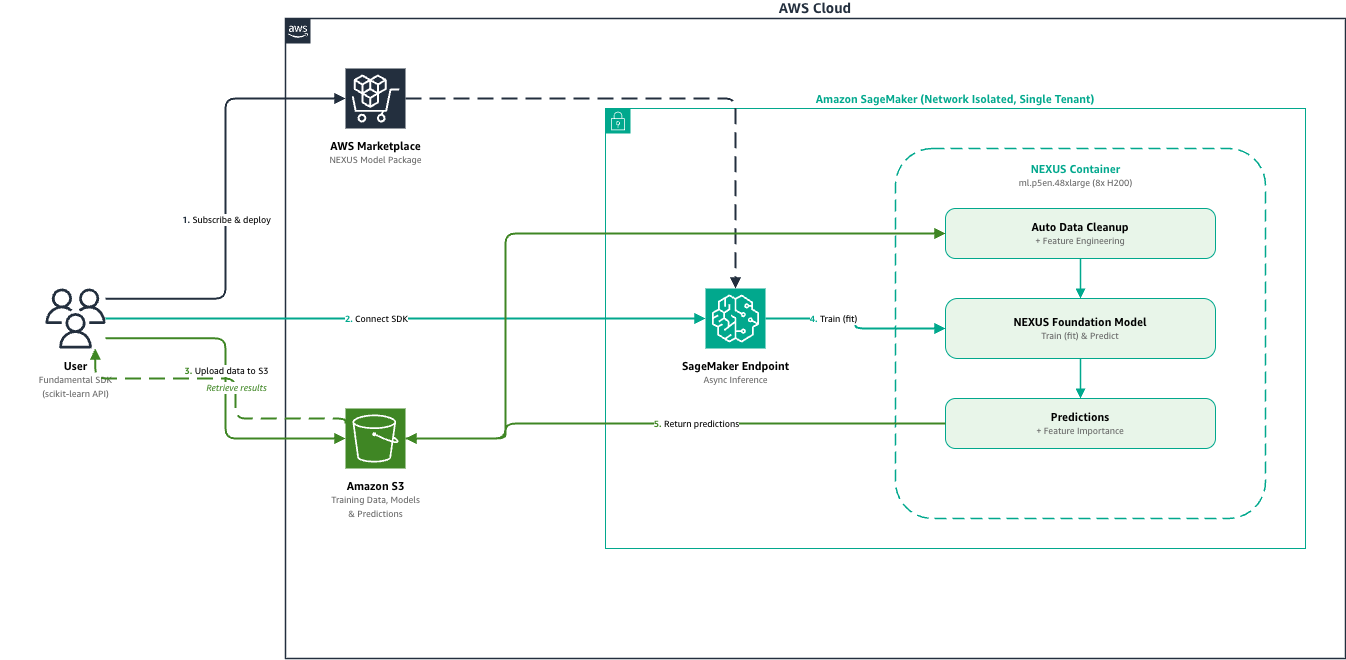

Amazon SageMaker AI now supports Fundamental's NEXUS model for producing accurate predictions on tabular data in days. NEXUS offers deterministic results, native understanding of tabular data, and non-sequential reasoning for structured data analysis.