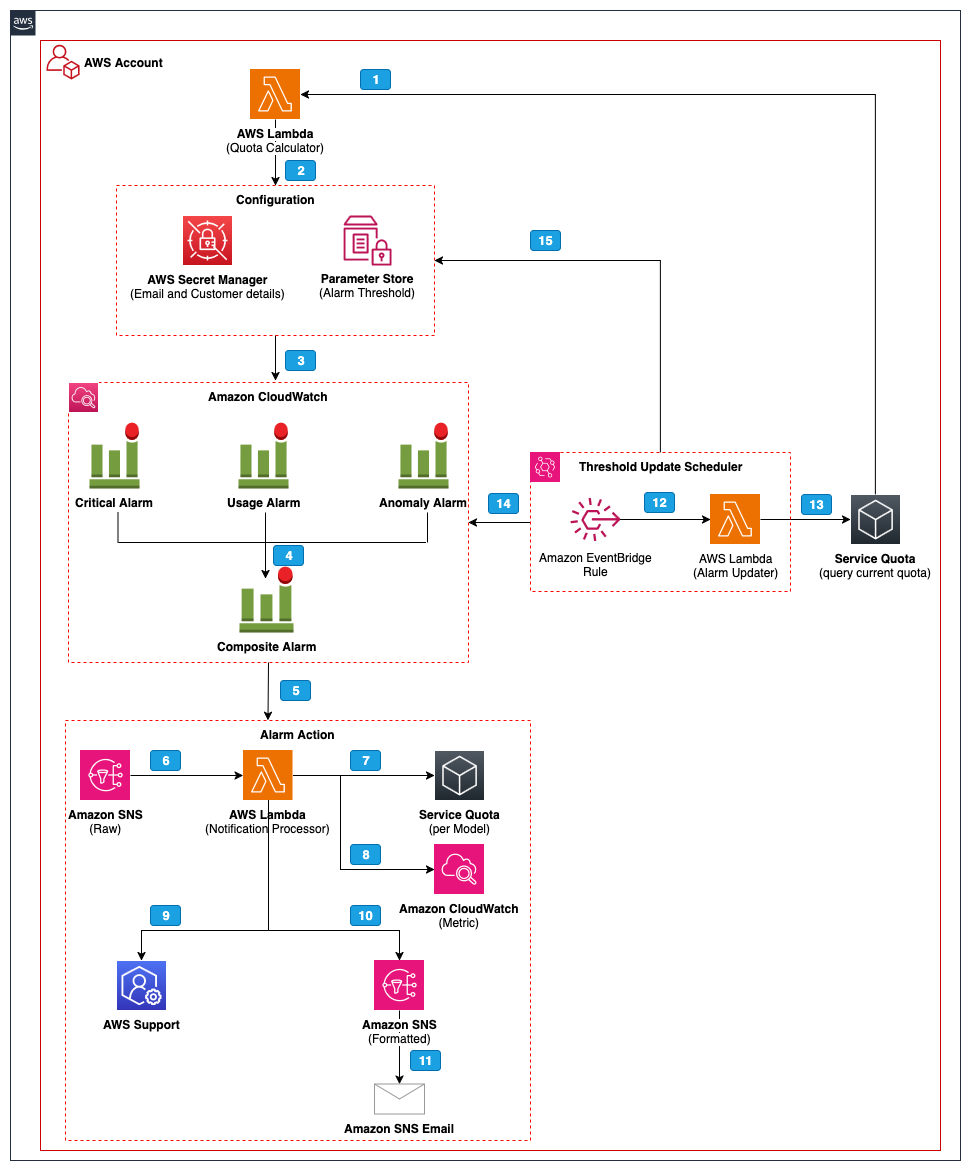

Amazon Bedrock enables generative AI for 100,000+ organizations worldwide, offering comprehensive capabilities for bold innovation. Introducing Amazon Bedrock Ops Alert, a proactive monitoring solution for sustainable operational management of AI workloads, empowering teams to drive real business impact.

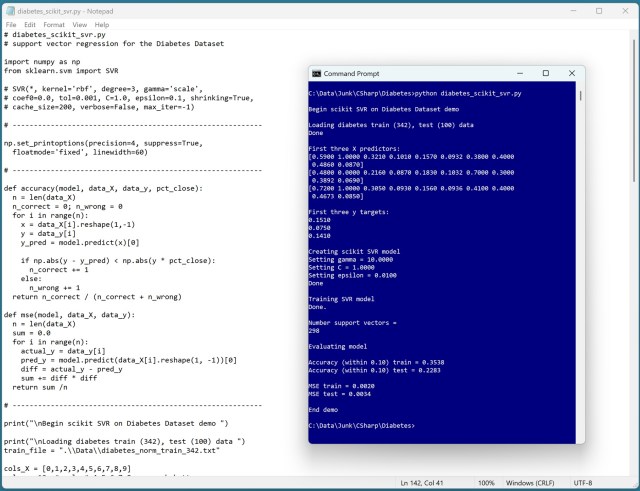

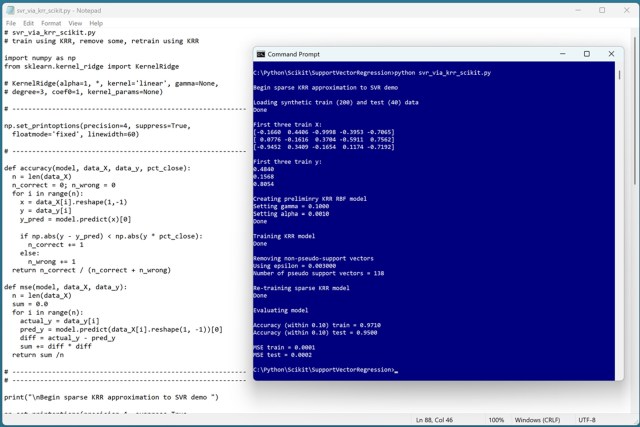

In a practice exercise on the Diabetes Dataset, a scikit SVR model had poor prediction accuracy. Kernel SVR outperformed linear SVR, reportedly because of its power and scalability, and it is closely related to KRR.

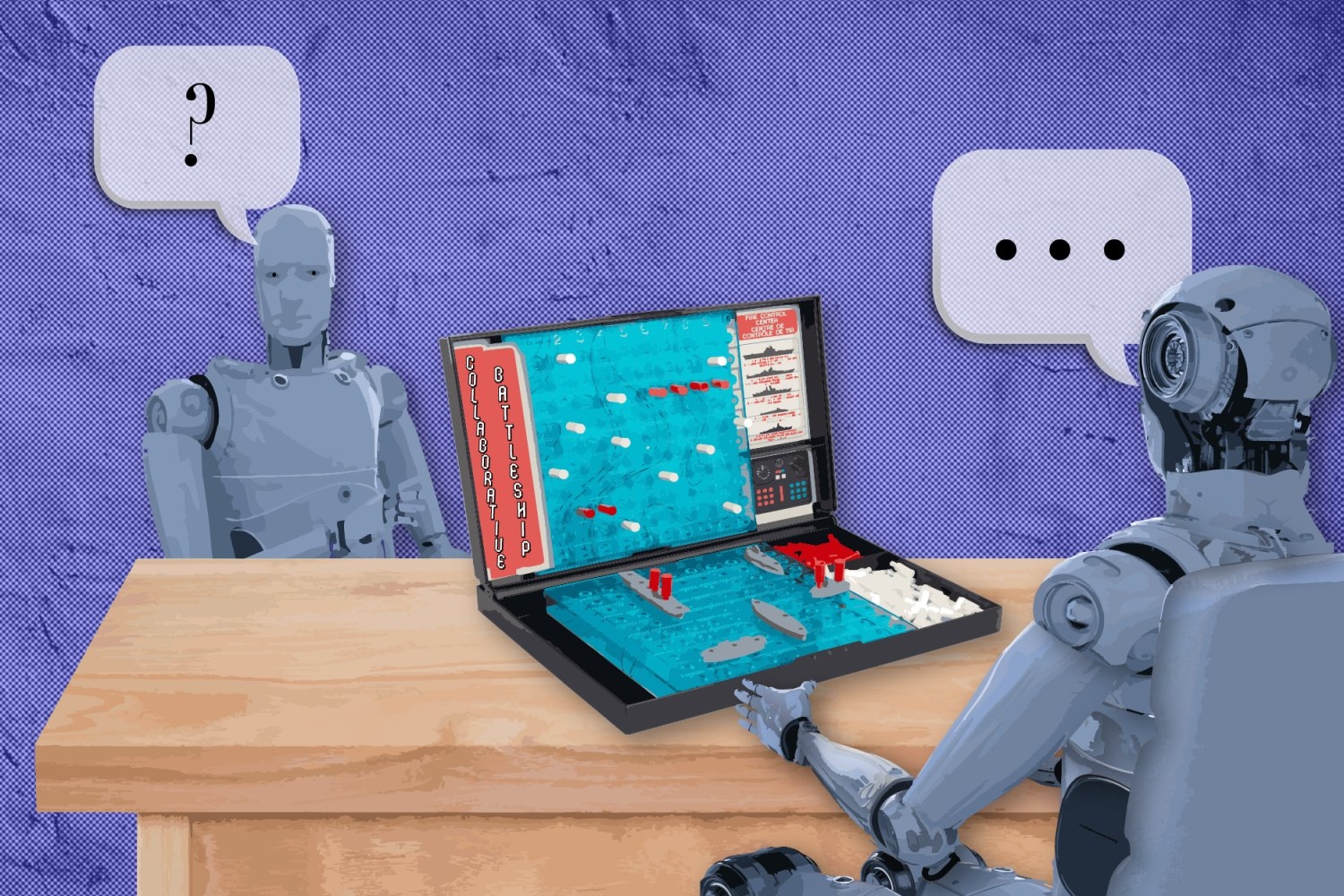

In 2026, AI agents excel at tasks like customer service, but struggle with complex inquiries. MIT and Harvard researchers improved AI's ability to ask questions through a "Battleship" game, leading to significant gains in performance and efficiency.

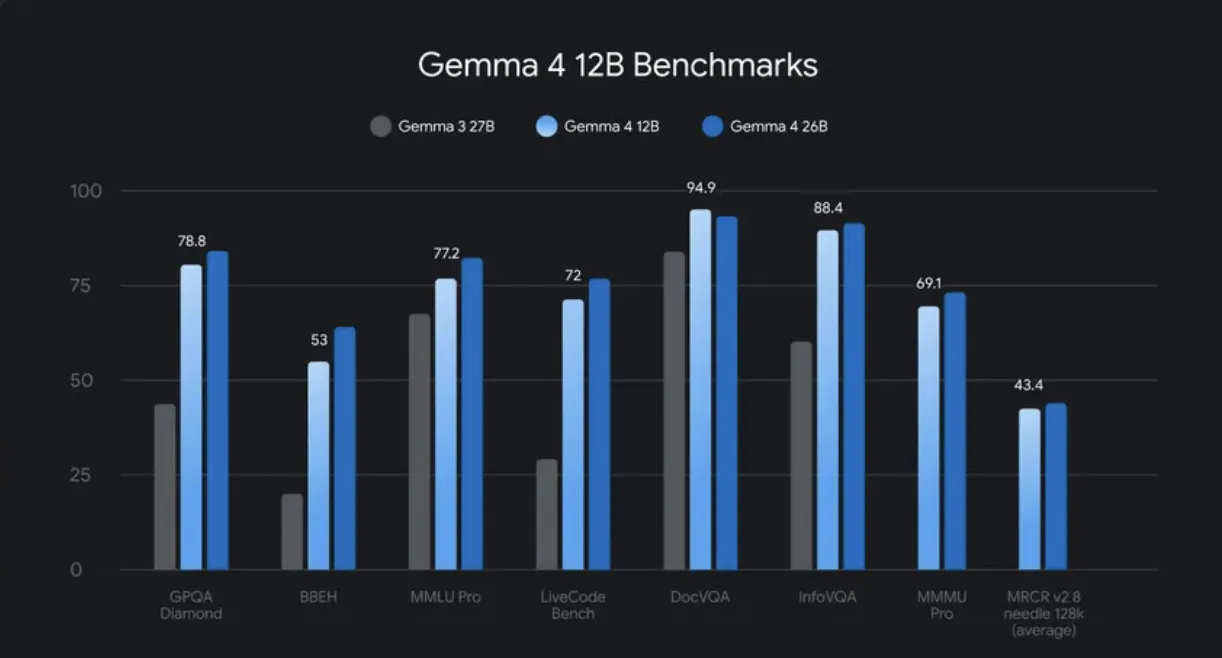

Google DeepMind released Gemma 4 12B, an encoder-free multimodal model for text, images, audio, and video. The model runs on a laptop with 16 GB of RAM, bridging the gap between edge-friendly and larger variants, with open-source weights available for download.

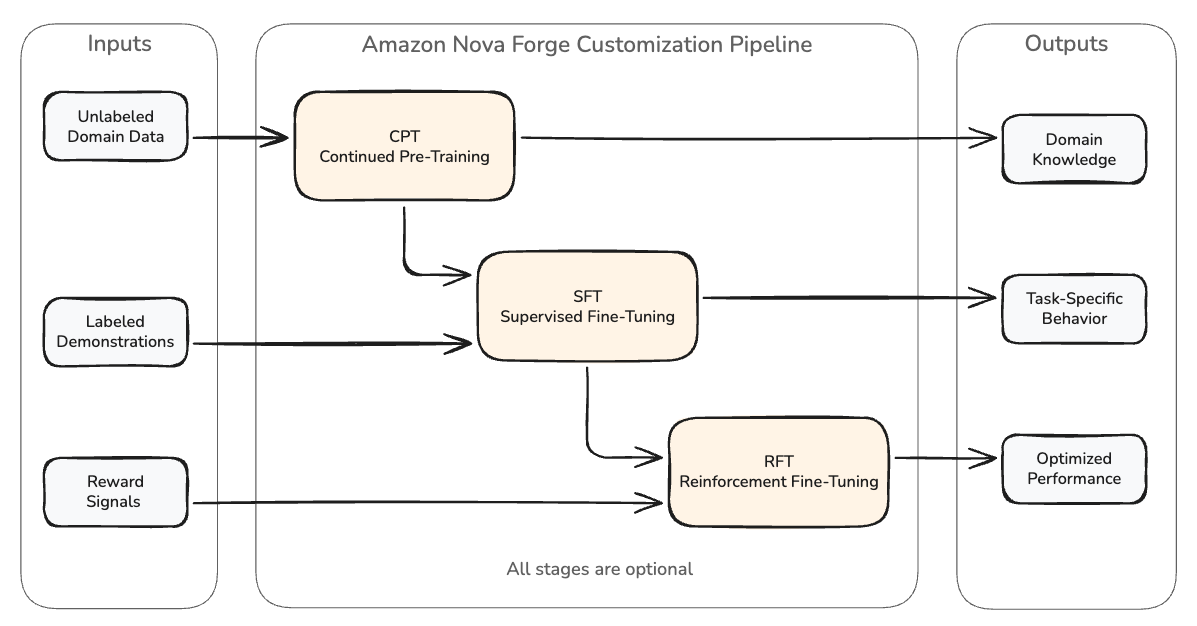

Amazon Nova Forge allows users to build customized language models that blend proprietary data with curated datasets, preventing catastrophic forgetting and improving domain performance without degrading general capabilities. The tool helps navigate the challenges of hyperparameter tuning for domain-specific tasks, avoiding expensive failures and ensuring the right balance between stability and...

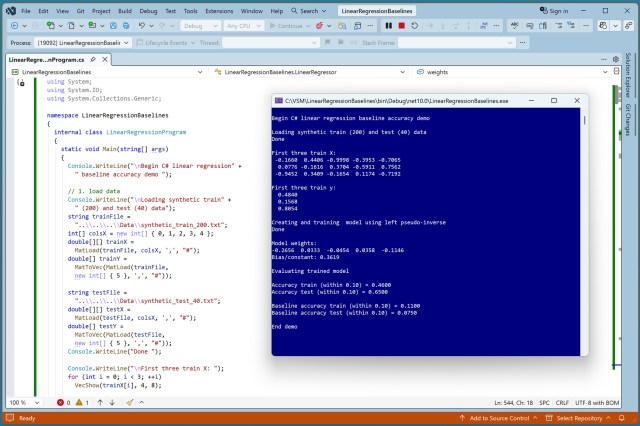

A linear regression model demo reaches 46% accuracy on training data, outperforming baseline predictions. Galaxy Science Fiction, known for stunning cover art, featured renowned space artist Chesley Bonestell.

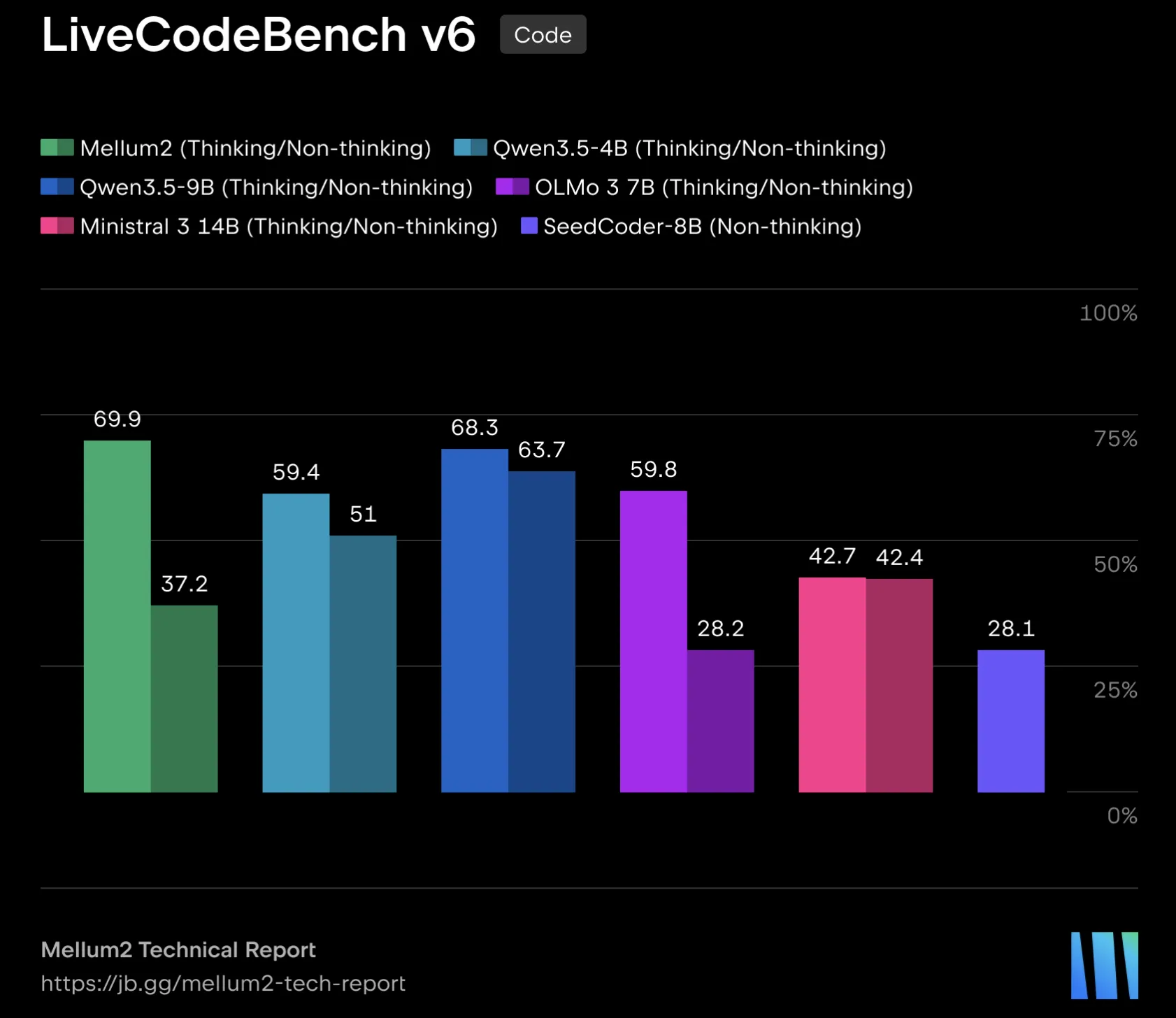

JetBrains released Mellum2, a specialized AI model for software engineering with 12B parameters. It uses a Mixture-of-Experts architecture and goes through extensive pre-training and post-training stages for various tasks.

Amazon Nova 2 Lite offers a cost-effective object detection solution with no training needed. Easily deploy with Amazon Bedrock, AWS Lambda, and API Gateway for various industries.

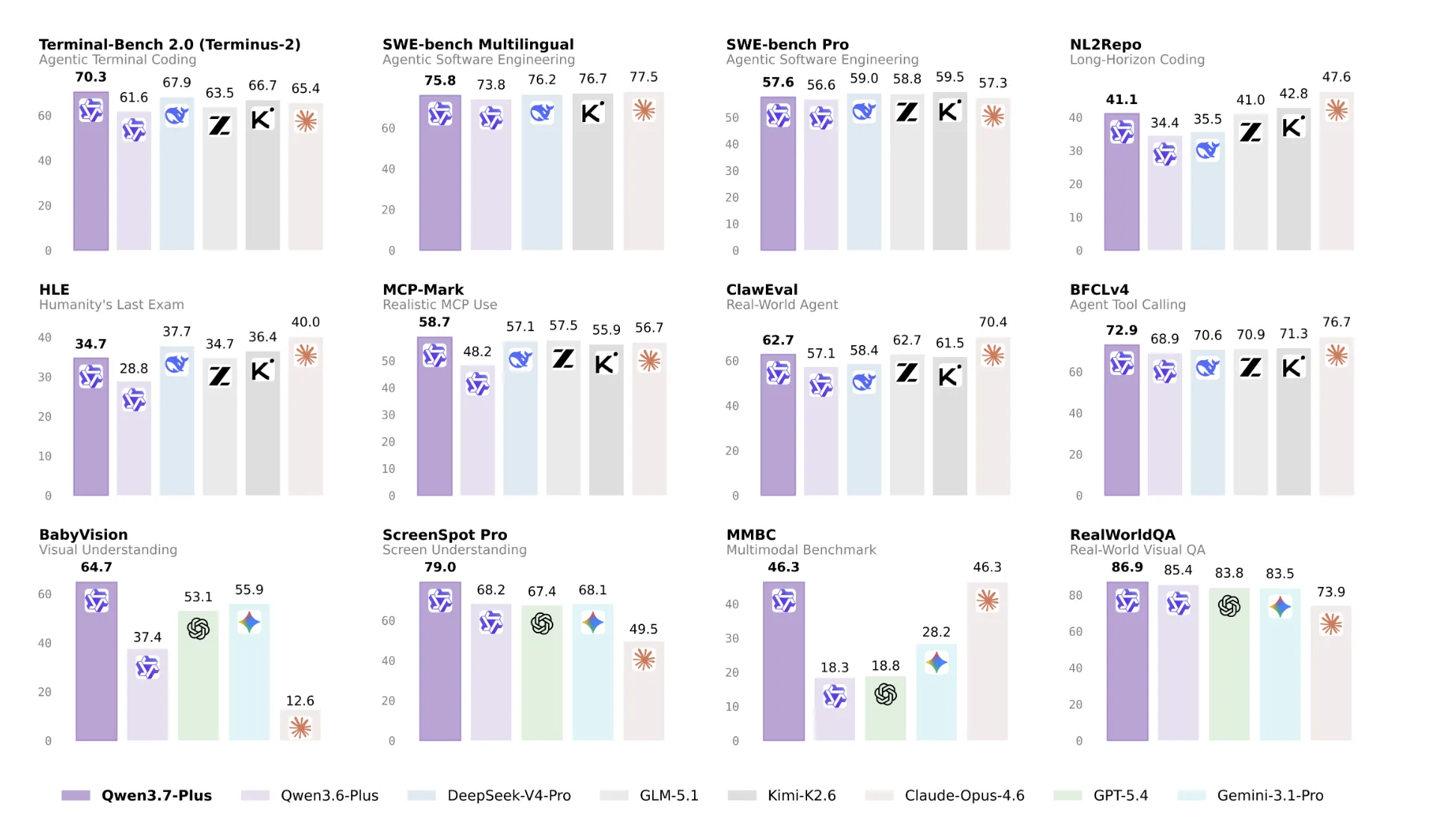

Alibaba releases Qwen3.7-Plus, a multimodal agent model on the Bailian platform with image and video understanding. It offers deep reasoning and self-programming capabilities, positioning it for long-running tasks.

TinyFish introduces BigSet, an open-source multi-agent system that turns natural-language descriptions into structured web datasets. BigSet automates schema inference, data gathering, deduplication, and scheduled updates for efficient dataset creation.

Kernel ridge regression (KRR) and support vector regression (SVR) are machine learning techniques that can be combined to create a sparse KRR model approximating an SVR model. This hybrid approach offers the benefits of KRR's large dataset handling and SVR's efficiency in model storage, demonstrating high predictive accuracy in a demo using the scikit KernelRidge module.
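
For readers unfamiliar with KRR, the core computation can be sketched in a few lines. This is a minimal illustration in plain Python, not the demo described above: it fits a dense (non-sparse) KRR model with an RBF kernel by solving (K + alpha * I) w = y, and it does not attempt the sparse SVR approximation. The function names, the toy sine data, and the `alpha` and `gamma` values are all assumptions chosen for illustration.

```python
import math


def rbf_kernel(a, b, gamma=1.0):
    """Gaussian (RBF) kernel between two equal-length vectors."""
    sq_dist = sum((x - y) ** 2 for x, y in zip(a, b))
    return math.exp(-gamma * sq_dist)


def solve_linear_system(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    # Build the augmented matrix [A | b] without mutating the inputs.
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    # Back substitution on the upper-triangular system.
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - s) / M[i][i]
    return x


def krr_fit(X, y, alpha=1e-3, gamma=1.0):
    """Return one weight per training point: w = (K + alpha * I)^-1 y."""
    n = len(X)
    K = [
        [rbf_kernel(X[i], X[j], gamma) + (alpha if i == j else 0.0)
         for j in range(n)]
        for i in range(n)
    ]
    return solve_linear_system(K, y)


def krr_predict(X_train, weights, x, gamma=1.0):
    """Predict as a kernel-weighted sum over all training points."""
    return sum(w * rbf_kernel(xt, x, gamma) for w, xt in zip(weights, X_train))


# Toy data (hypothetical): five samples of sin(x).
X_train = [[0.0], [1.0], [2.0], [3.0], [4.0]]
y_train = [math.sin(x[0]) for x in X_train]

weights = krr_fit(X_train, y_train, alpha=1e-3, gamma=1.0)
pred = krr_predict(X_train, weights, [2.0], gamma=1.0)
print(f"prediction at x=2.0: {pred:.3f}, target: {math.sin(2.0):.3f}")
```

Because every training point carries its own weight, a dense KRR model must store the whole training set, which is the storage cost a sparse variant aims to reduce. In scikit-learn, `sklearn.kernel_ridge.KernelRidge(kernel="rbf", alpha=..., gamma=...)` fits the same kind of model.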

Amazon Bedrock AgentCore payments, in partnership with Coinbase and Stripe (Privy), allows agents to access paid resources on behalf of end users. AgentCore addresses risks like runaway spending and lack of end user consent in autonomous payment systems.

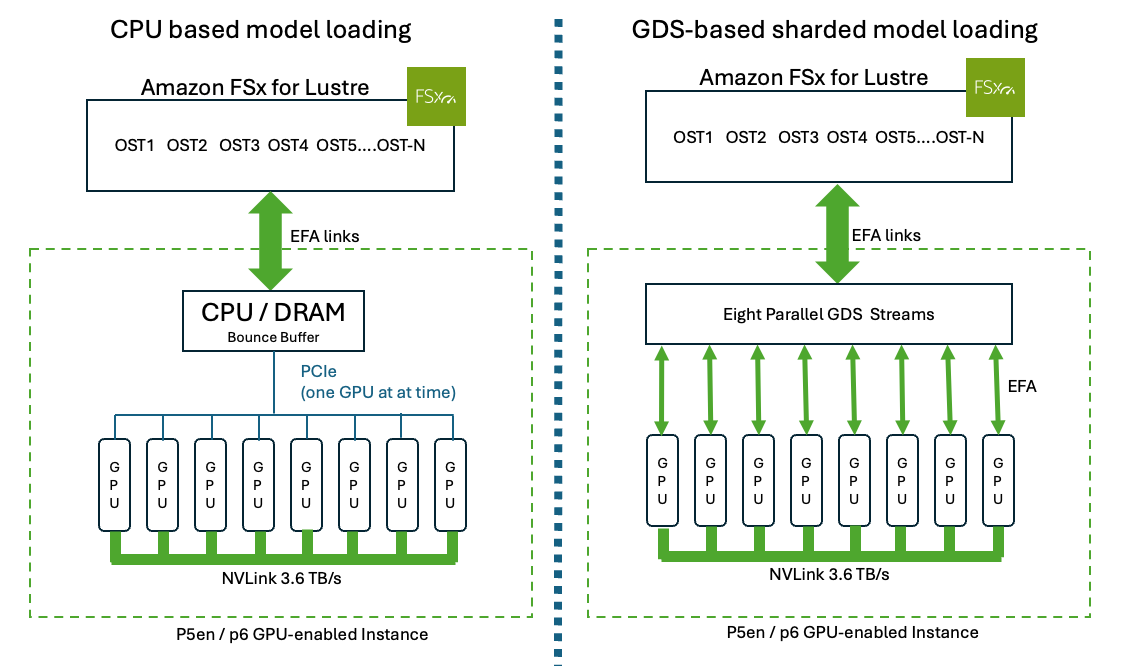

Large language models (LLMs) on AWS GPU instances face lengthy model load times. Amazon FSx for Lustre and NVIDIA GPUDirect Storage (GDS) drastically reduce load times, improving total time to first token (TTFT) from minutes to seconds for models like Llama 3.1 with 405B parameters on AWS P6e UltraServers.

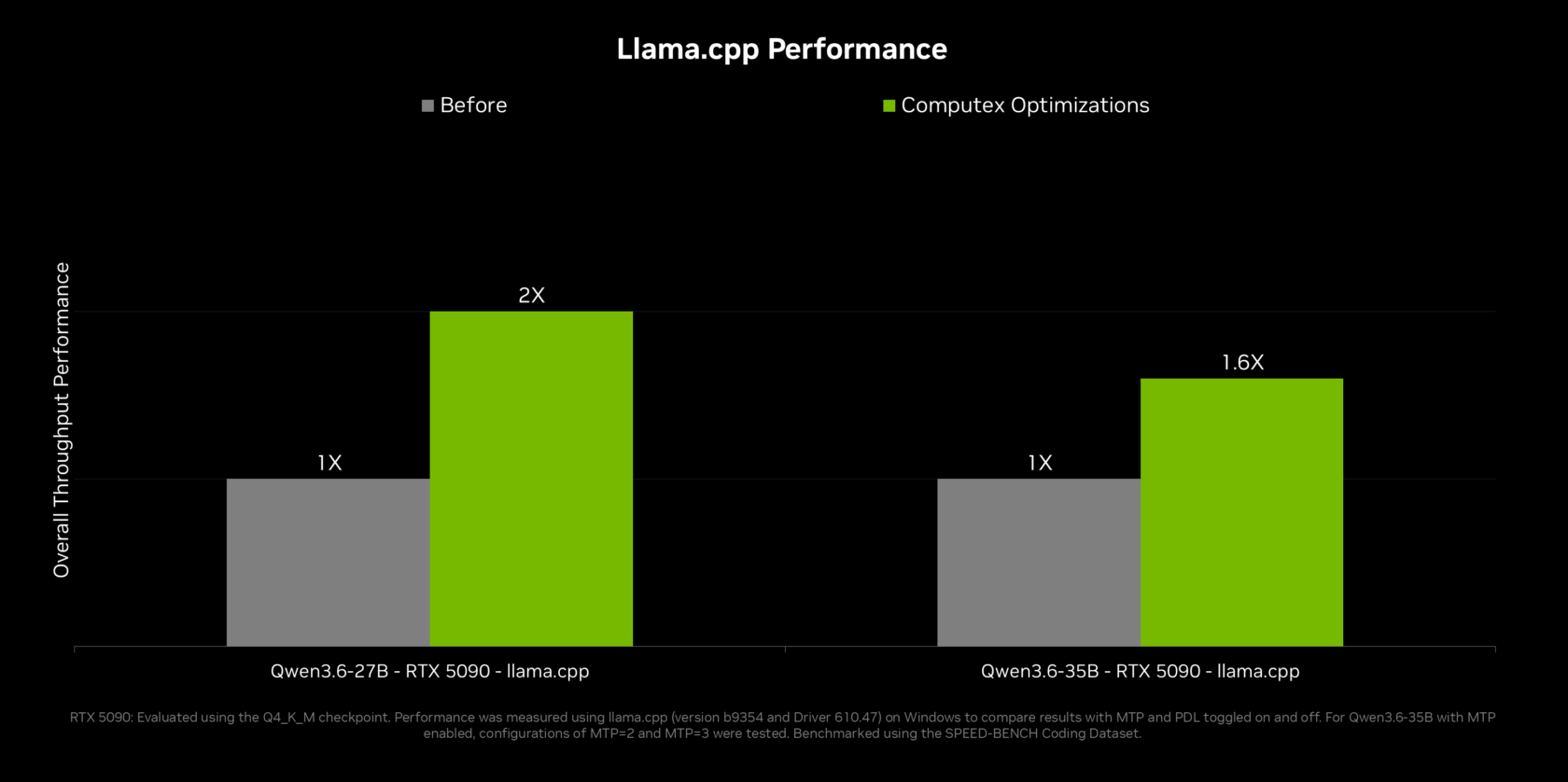

NVIDIA introduces RTX Spark PCs for personal agents at GTC Taipei, with new AI compute and memory capabilities. Partnership with Microsoft brings secure on-device agents to Windows, along with updates for Hermes Agent and OpenClaw.

OpenAI's GPT-5.5, GPT-5.4, and Codex now available on Amazon Bedrock for advanced AI applications. High-performance inference engine for complex tasks.