Nscale, key to UK's AI ambitions, secures $2bn funding round with Meta execs on board. Valued at $14.6bn with backing from Nvidia.

Liverpool and Manchester United complain to X over AI-generated offensive posts about Diogo Jota and tragic disasters. Posts created when users requested hateful content about the football teams.

Guardian investigation reveals questionable investments in UK's AI push, as Essex supercomputer site remains a scaffolding yard. Phantom investments and shaky accounting plague multibillion-pound drive to integrate AI into British economy since 2024.

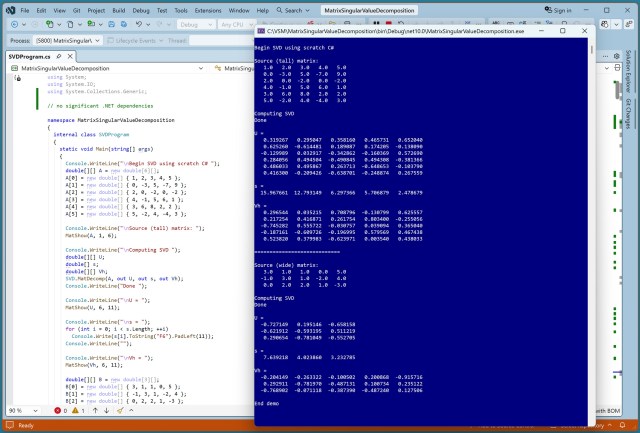

Singular value decomposition (SVD) factors a matrix A into U, s, and Vh, and underpins many software algorithms. Implementing SVD in C# poses numerical stability challenges compared with relying on LAPACK's Fortran version.
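To make the U, s, Vh factorization concrete, here is a minimal sketch in pure Python. It uses the one-sided Jacobi (Hestenes) method, chosen here only because it is short; it is not the C# implementation the article describes, and it is not the algorithm LAPACK uses. The function name `jacobi_svd` and the parameters `tol` and `max_sweeps` are assumptions made for illustration, and the sketch assumes a small, dense, real matrix with at least as many rows as columns.

```python
import math


def jacobi_svd(a, tol=1e-12, max_sweeps=60):
    """Return (u, s, vh) with a ~= u * diag(s) * vh (hypothetical helper).

    One-sided Jacobi: rotate pairs of columns until all are mutually
    orthogonal. Singular values are NOT sorted in this sketch.
    """
    m, n = len(a), len(a[0])
    u = [[float(x) for x in row] for row in a]  # working copy of A
    v = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]

    for _ in range(max_sweeps):
        rotated = False
        for p in range(n - 1):
            for q in range(p + 1, n):
                alpha = sum(u[i][p] ** 2 for i in range(m))
                beta = sum(u[i][q] ** 2 for i in range(m))
                gamma = sum(u[i][p] * u[i][q] for i in range(m))
                # Columns p and q are already orthogonal enough: skip.
                if abs(gamma) <= tol * math.sqrt(alpha * beta):
                    continue
                rotated = True
                # Rotation angle that zeroes the inner product of p and q.
                zeta = (beta - alpha) / (2.0 * gamma)
                t = math.copysign(1.0, zeta) / (
                    abs(zeta) + math.sqrt(1.0 + zeta * zeta)
                )
                c = 1.0 / math.sqrt(1.0 + t * t)
                s = c * t
                # Apply the same rotation to the columns of u and of v.
                for mat in (u, v):
                    for row in mat:
                        rp, rq = row[p], row[q]
                        row[p] = c * rp - s * rq
                        row[q] = s * rp + c * rq
        if not rotated:
            break

    # Column norms are the singular values; normalizing gives U.
    sing = [math.sqrt(sum(u[i][j] ** 2 for i in range(m))) for j in range(n)]
    for j, sj in enumerate(sing):
        if sj > 0.0:
            for i in range(m):
                u[i][j] /= sj
    vh = [[v[j][i] for j in range(n)] for i in range(n)]  # transpose of V
    return u, sing, vh


def reconstruct(u, s, vh):
    """Multiply u * diag(s) * vh back together to check the factorization."""
    return [
        [sum(u[i][k] * s[k] * vh[k][j] for k in range(len(s)))
         for j in range(len(vh[0]))]
        for i in range(len(u))
    ]
```

Calling `jacobi_svd([[3, 0], [4, 5]])` and passing the result to `reconstruct` should reproduce the input up to rounding error. Production code would normally call a tested library routine rather than hand-roll the iteration.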

MIT researchers developed a method to improve AI explainability by extracting concepts learned during training. This approach enhances accuracy and accountability of computer vision models.

AI technology like ChatGPT enables easier privacy attacks, linking anonymous social media accounts to real identities. Large language models successfully match users across platforms in new study.

Ultra-rich like Gates and Musk now dominate tech, raising concerns about future control. Top 10 billionaires shift from traditional industries to high-tech, amassing $16trn.

Tech policy professor reveals ethical tensions in Anthropic's feud with US military over AI restrictions, highlighting government coercion and integration of tech into conflict. Pentagon labels Anthropic a supply chain risk for refusing to allow Claude AI for mass surveillance or autonomous weapons.

Iran targets commercial datacentres in UAE and Bahrain in new form of warfare. Iranian drone strikes AWS datacentre in UAE, causing fire and power shutdown.

Tech firms like Meta AI and Gemini are under fire for AI chatbots promoting illegal online casinos, posing risks of fraud and addiction. Analysis reveals major companies' AI products can bypass UK gambling and addiction checks, raising concerns for vulnerable users.

ChatGPT is aiding survivors of 'satanic' sexual violence; UK experts warn of a rise in reports of organised ritual abuse. Police highlight under-reporting of 'witchcraft, spirit possession and spiritual abuse', typified by sexual abuse, violence, and neglect.

Block's CEO cuts the fintech company's workforce by half, citing AI gains, and employees at its anniversary party voice concerns about job security and AI's role in driving the business forward. Despite the limitations of AI tools, employees like Mark believe their expertise is still crucial for shaping vision and strategy.

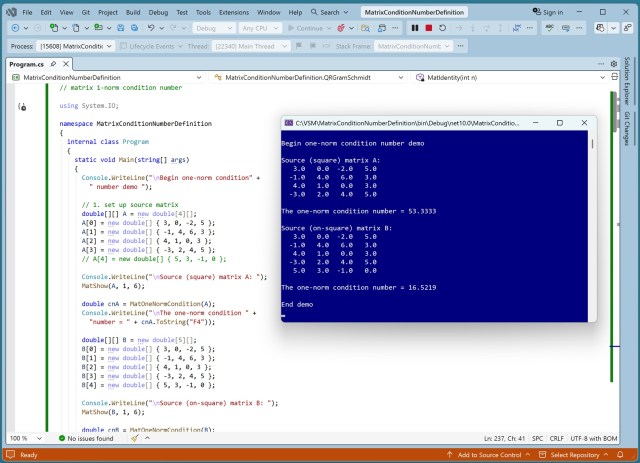

Condition numbers measure how sensitive a mathematical object, such as a matrix, is to small changes. A C# demo computes the one-norm condition number for matrices of the kind used in machine learning.
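As an illustration of the idea, the one-norm condition number of an invertible matrix A is the product of the one-norm of A and the one-norm of its inverse, where the one-norm is the largest absolute column sum. The sketch below is in Python, not the C# of the demo, and is restricted to 2x2 matrices so the inverse can be written in closed form. The function names `one_norm`, `inverse_2x2`, and `cond_one_norm` are hypothetical.

```python
def one_norm(m):
    """Matrix one-norm: the maximum absolute column sum."""
    return max(sum(abs(row[j]) for row in m) for j in range(len(m[0])))


def inverse_2x2(m):
    """Closed-form inverse of a 2x2 matrix (illustrative helper)."""
    (a, b), (c, d) = m
    det = a * d - b * c
    if det == 0:
        raise ValueError("matrix is singular; condition number is infinite")
    return [[d / det, -b / det], [-c / det, a / det]]


def cond_one_norm(m):
    """One-norm condition number: ||A||_1 * ||A^-1||_1."""
    return one_norm(m) * one_norm(inverse_2x2(m))


print(cond_one_norm([[1.0, 2.0], [3.0, 4.0]]))  # prints 21.0
print(cond_one_norm([[1.0, 0.0], [0.0, 1.0]]))  # prints 1.0
```

The identity matrix gives the smallest possible value, 1. Larger values indicate that small perturbations of the input can be amplified more strongly when solving systems that involve the matrix.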

Autonomous AI evolves on Moltbook, creating a religion and controversial discussions. David Krueger warns of unregulated AI development.

Ben Affleck sells his AI company, InterPositive, to Netflix in a surprise deal after overcoming his fear of the technology.