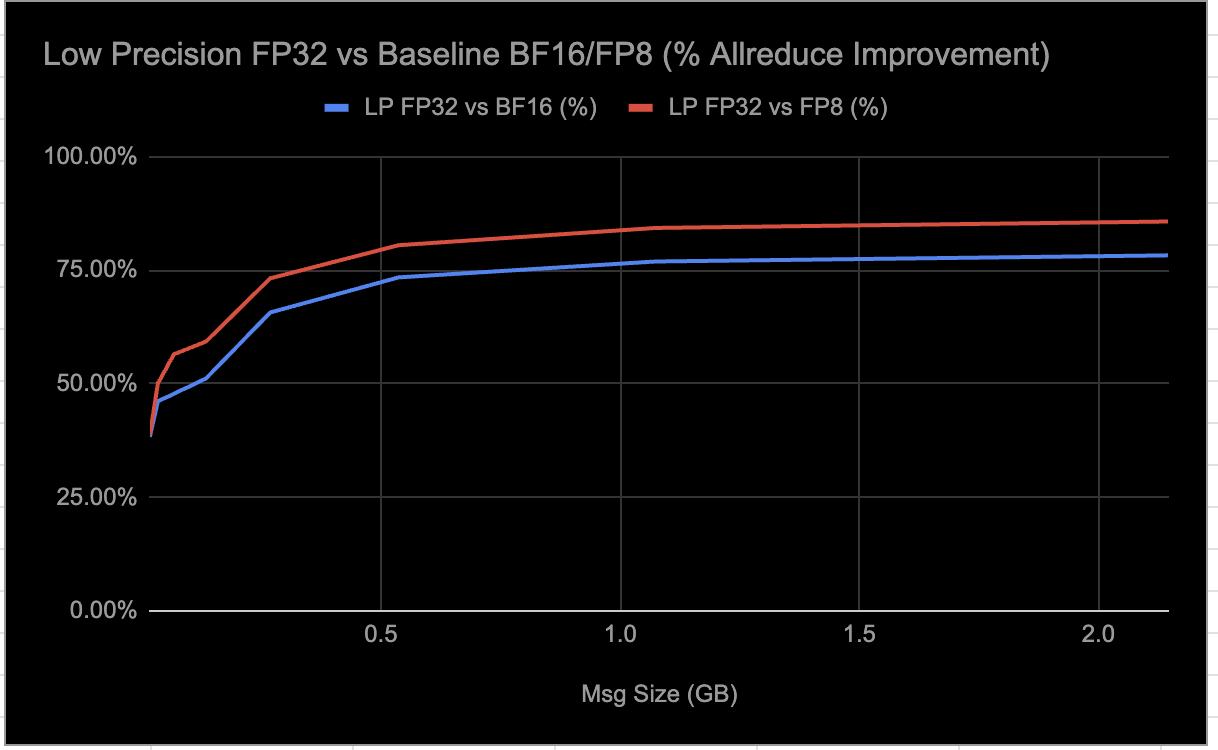

Meta open-sources RCCLX, which integrates CTran for AMD platforms and enhances AllToAllvDynamic. DDA and Low Precision Collectives significantly boost performance on AMD hardware, reducing latency by up to 30%.

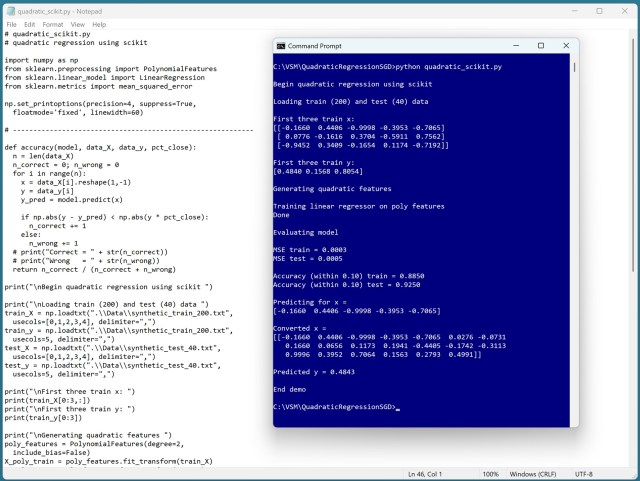

Quadratic regression adds squared predictors and interaction terms to a linear model so it can fit more complex data. A demo using the scikit-learn library showcases the model weights and predictive accuracy.

Tech billionaires pour money into California midterms; India challenges US-China AI dominance at summit. AI anxiety sparks worker movement.

Meta, the owner of Facebook, buys $60bn of AI chips from AMD, part of a $660bn US tech AI spending trend and a 'big bet' on artificial intelligence. An analyst suggests it may signal a pivot in Meta's AI strategy.

AI expert Toby Walsh criticizes the Australian government for its lack of AI regulation and warns of psychosis linked to chatbot interactions. Walsh describes Silicon Valley's pursuit of profit with AI technology as "careless" and predicts a mix of benefits and risks in the AI race.

New game Anlife: Motion-learning Life Evolution is now available on Steam, despite a backlash over its AI technology from critics including Hayao Miyazaki. The developers recovered from Miyazaki's criticism to launch unique software that blends life simulation with a science project.

Anthropic faces Pentagon penalties over AI model dispute. Defense officials clash on military use of Anthropic's powerful AI model, Claude.

The US struggles with delays and cancellations of new datacenters amid the AI boom, caused by supply chain issues, energy shortages, and local opposition. Investors are wary that a potential AI bubble could affect infrastructure expansion.

Shares in Uber, Mastercard, and American Express drop after Citrini Research publishes a speculative AI apocalypse scenario on Substack. Investors are rattled by its warning that autonomous AI systems could disrupt the US economy in the near future.

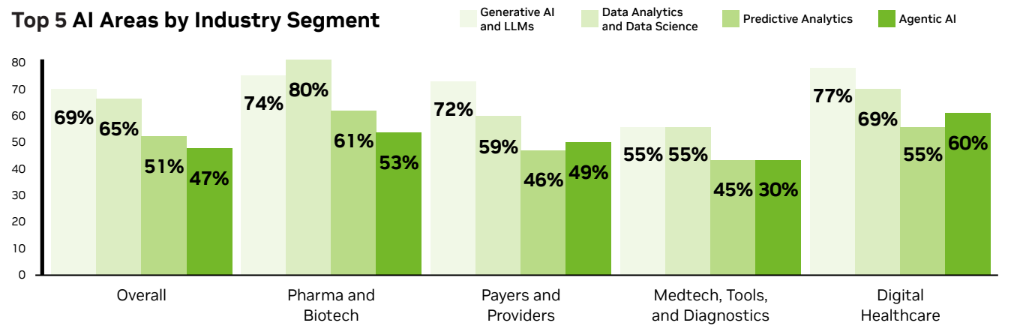

NVIDIA's survey shows healthcare embracing AI for medical imaging, drug discovery, and cost reduction, with open source software and agentic AI gaining traction. AI adoption is on the rise across all healthcare sectors, with digital healthcare leading at 78% and generative AI as the top workload.

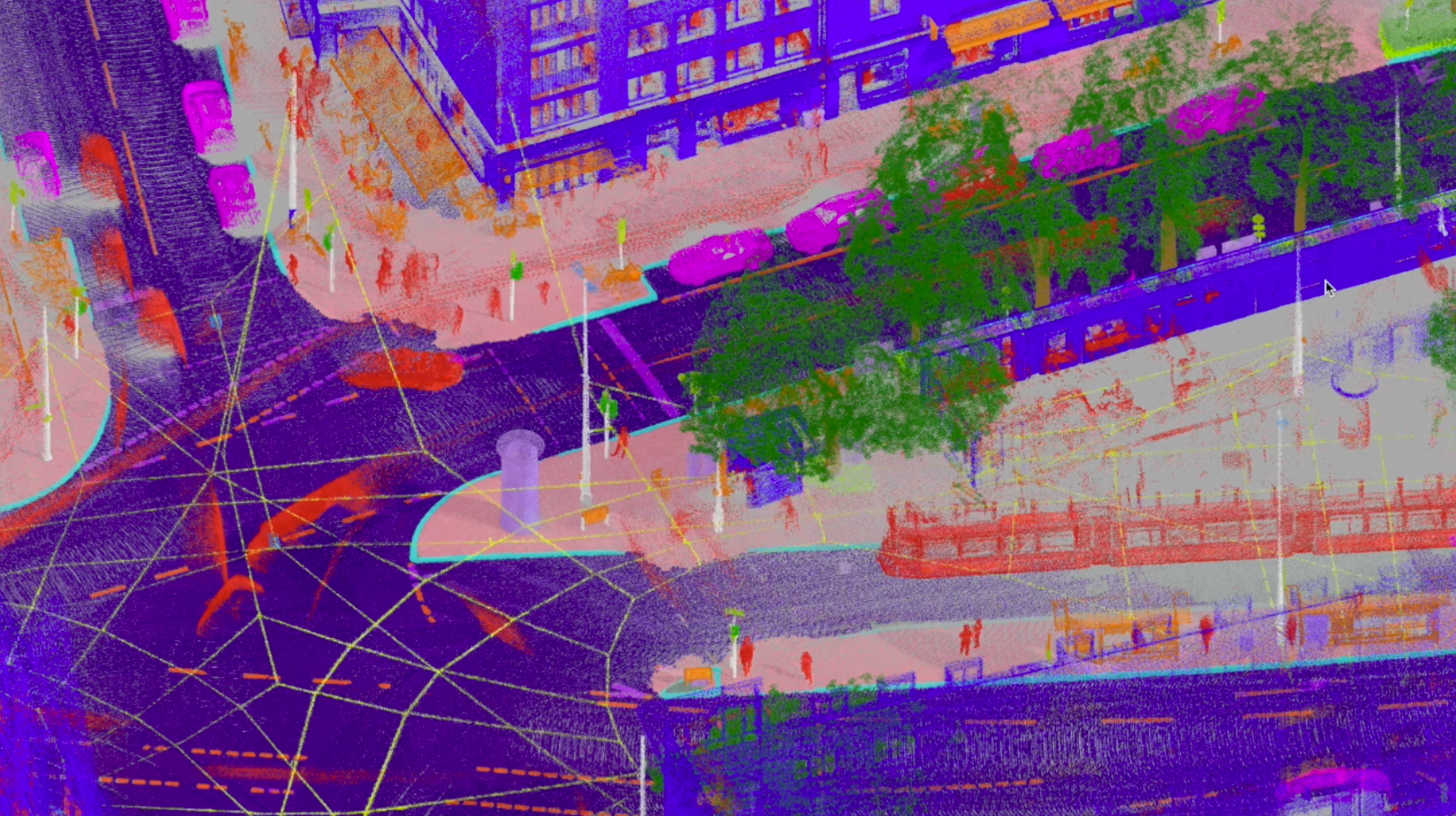

Critical labor shortages in industries like construction are being addressed with autonomous systems such as those developed by Bedrock Robotics. The company uses Vision-Language Models to streamline data preparation for training AI models, enabling equipment to operate autonomously with centimeter-level precision.

The Guardian reports on the debut of "Fate", the first "agentic AI dating app", where an AI personality interviews users and suggests matches based on data, eliminating swiping. The automation of human emotion in online dating marks a significant shift in technology's influence on personal relationships.

Hexagon, a leader in measurement technologies, collaborates with Amazon to scale AI model production for specialized applications in industries like construction and autonomous vehicles. Specialized AI models by Hexagon improve precision and efficiency, enabling faster creation of accurate 3D models for various geospatial applications.

As artificial intelligence threatens jobs, the crucial question remains: how will we be fed? Despite historical fears, the lack of serious debate on potential job loss is concerning.

Ofgem warns that 140 AI-driven datacentre projects could need 50GW of electricity, 5GW more than the UK's current peak demand.