MIT and Symbotic researchers develop AI system to optimize robot movements in warehouses, boosting throughput by 25%. The system uses deep reinforcement learning to prioritize robots and reroute them to avoid bottlenecks, offering potential for significant efficiency gains.

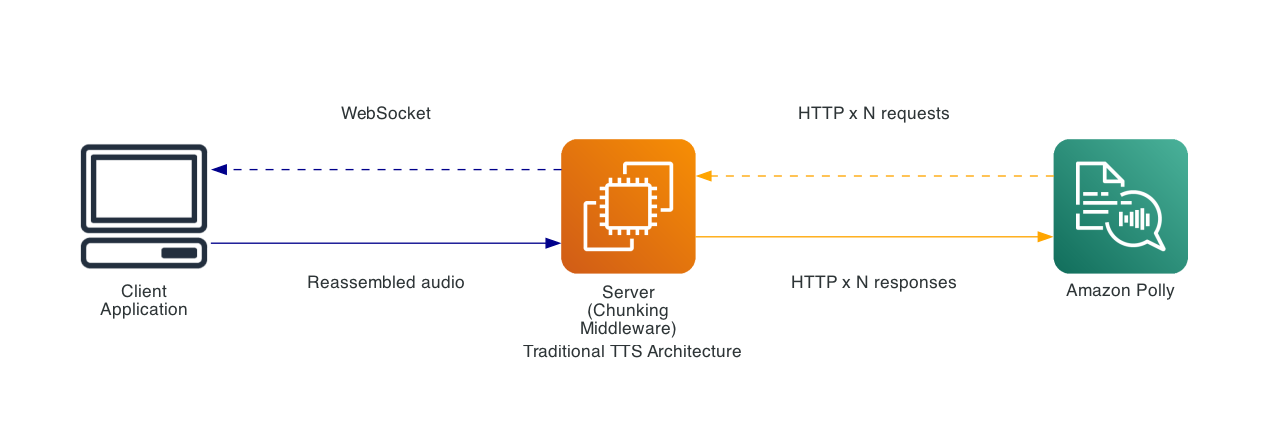

Amazon Polly introduces a Bidirectional Streaming API for real-time text-to-speech synthesis, accepting text incrementally and returning audio immediately. Synthesis no longer has to wait for the complete text, which reduces latency in conversational AI applications.

NYC public hospital system ends Palantir contract amid UK controversy. Dr. Mitchell Katz confirms agreement expiration in October.

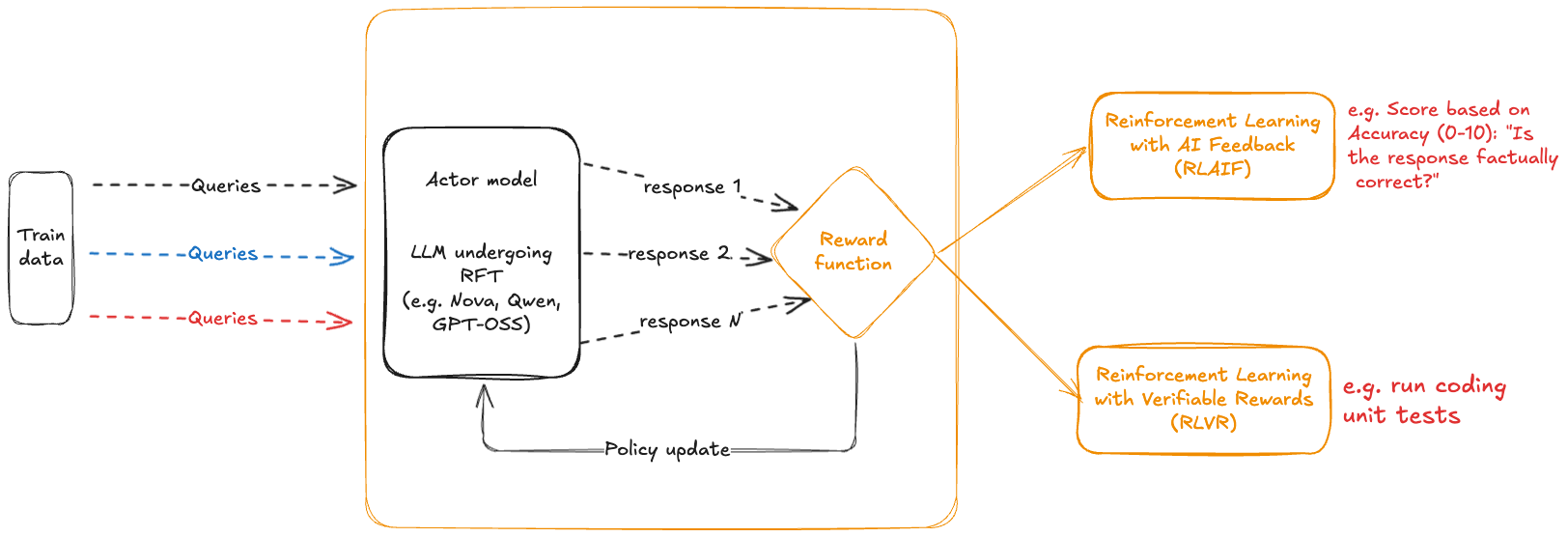

Amazon Bedrock introduced Reinforcement Fine-Tuning (RFT) for Nova and open-weight models like OpenAI GPT OSS 20B, so that large language models learn through feedback loops, not just from static datasets. RFT in Amazon Bedrock streamlines customization by allowing models to improve their decision-making iteratively, learning from feedback on responses to prompts rather than traditional ...

Researchers from Woodwell Climate Research Center, MIT, and Intuit developed a computer vision system that automates fish monitoring from underwater video, supplementing traditional monitoring methods. This scalable, cost-effective solution improves efficiency and accuracy in tracking river herring populations during their annual migration.

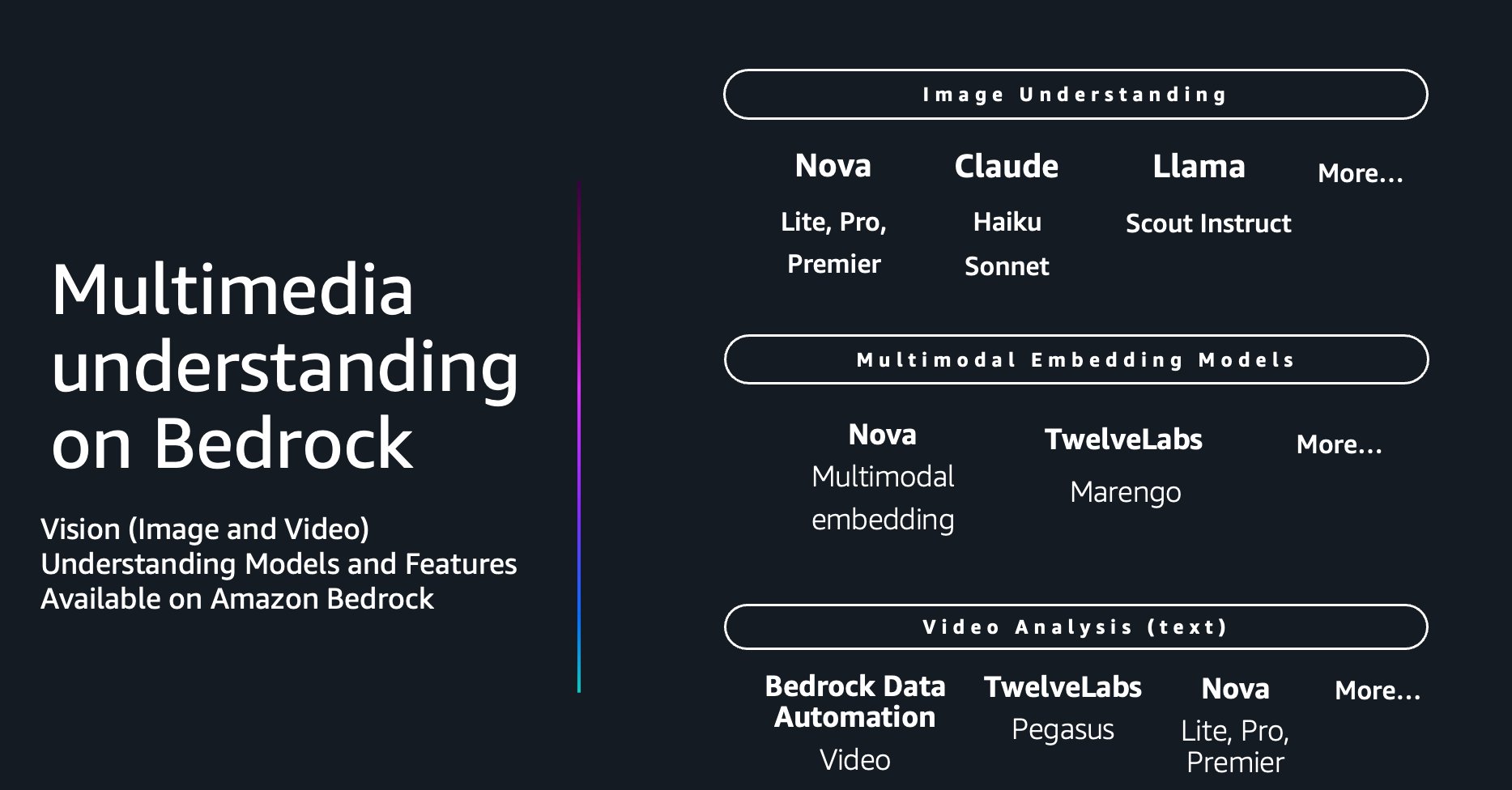

Amazon Bedrock's multimodal foundation models offer scalable video understanding through three architectural approaches, enabling organizations to extract nuanced insights from large volumes of video. The frame-based workflow optimizes precision and cost for use cases like security surveillance and compliance monitoring, utilizing intelligent frame deduplication to enhance processing efficiency.
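
The blurb does not say how the frame-based workflow deduplicates frames. As a rough illustration of the general idea only, and not of the Amazon Bedrock implementation, the sketch below keeps a frame only when it differs enough from the last kept frame. The function names, the flat grayscale frame representation, and the threshold value are all assumptions made for this example.

```python
from typing import List, Sequence


def mean_abs_diff(a: Sequence[int], b: Sequence[int]) -> float:
    """Mean absolute per-pixel difference between two equally sized frames."""
    if len(a) == 0 or len(a) != len(b):
        raise ValueError("frames must be non-empty and the same size")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def deduplicate_frames(
    frames: Sequence[Sequence[int]], threshold: float = 10.0
) -> List[int]:
    """Return indices of frames that differ from the last kept frame.

    Each frame is a flat sequence of grayscale pixel values (hypothetical
    representation). The threshold is an assumed tuning parameter.
    """
    kept: List[int] = []
    last_kept = None
    for index, frame in enumerate(frames):
        # Always keep the first frame; afterwards keep only "new" content.
        if last_kept is None or mean_abs_diff(frame, last_kept) > threshold:
            kept.append(index)
            last_kept = frame
    return kept


if __name__ == "__main__":
    sample = [
        [0, 0, 0, 0],
        [1, 0, 0, 0],          # near-duplicate of frame 0
        [100, 100, 100, 100],  # scene change
        [101, 100, 100, 100],  # near-duplicate of frame 2
        [0, 0, 0, 0],          # scene change back
    ]
    print(deduplicate_frames(sample))
```

Only the kept frames would then be sent to a model, which is where the cost savings would come from. A production pipeline would more likely compare perceptual hashes or embeddings than raw pixels.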

MIT engineers have developed an ultrasound wristband that tracks hand movements in real time, allowing wearers to control robots and virtual environments wirelessly. This technology could revolutionize hand tracking in virtual and augmented reality, as well as provide valuable training data for humanoid robots.

Progressive lawmakers propose moratorium on AI datacenter construction to establish federal regulations. Sanders warns of AI's impact, urging Congress to take action.

AI is now core business infrastructure, with a diverse ecosystem of models. NVIDIA leads open source AI with the Nemotron Coalition, shaping the future of AI innovation.

Melania Trump partners with AI robot 'Figure 3' at Fostering the Future Together summit, advocating for global education access. The humanoid robot greets attendees before the first lady's address on technology and children's education.

AI is increasingly popular for holiday planning, with a fifth of under-25s using ChatGPT and AI trip planners. However, some travelers report that AI has given them inaccurate or false information, including inventing attractions that do not exist.

Celebrity therapist Esther Perel conducts couples counseling session with man and his AI “girlfriend”, raising concerns about human-machine relationships in society. The session leaves a lasting impact, prompting reflection on the implications of spending time with AI companions over real human interactions.

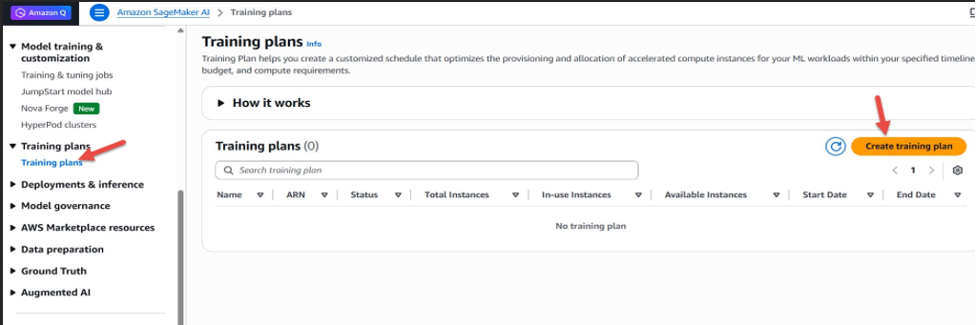

Amazon SageMaker AI training plans let customers reserve GPU capacity for time-bound inference workloads, ensuring uninterrupted access and controlled costs. Customers select specific time periods and instance types to create reservations, improving application performance and predictability.

MIT-led scientists warn current AI systems may lead doctors astray due to overconfidence. They propose a "humble" AI framework to encourage collaboration, prevent errors, and enhance decision-making in medical settings.

Anthropic battles US agencies in court over refusal to allow AI in lethal weapons. Trump orders halt on government use of Anthropic's tools.