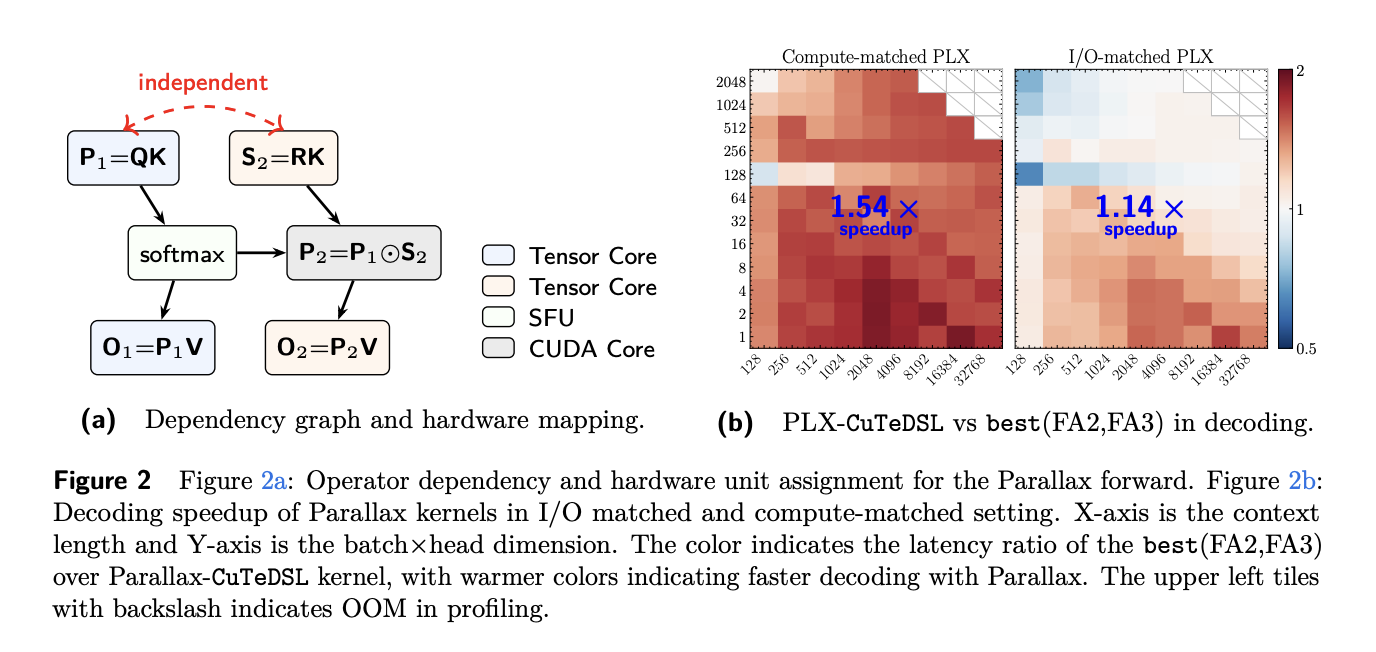

A new paper introduces Parallax, a parameterized variant of Local Linear Attention (LLA) that improves efficiency without reducing compute. Parallax simplifies the LLA framework, making it more efficient and easier to implement, and the authors suggest it could scale to LLM pretraining and be co-designed with the Muon optimizer.

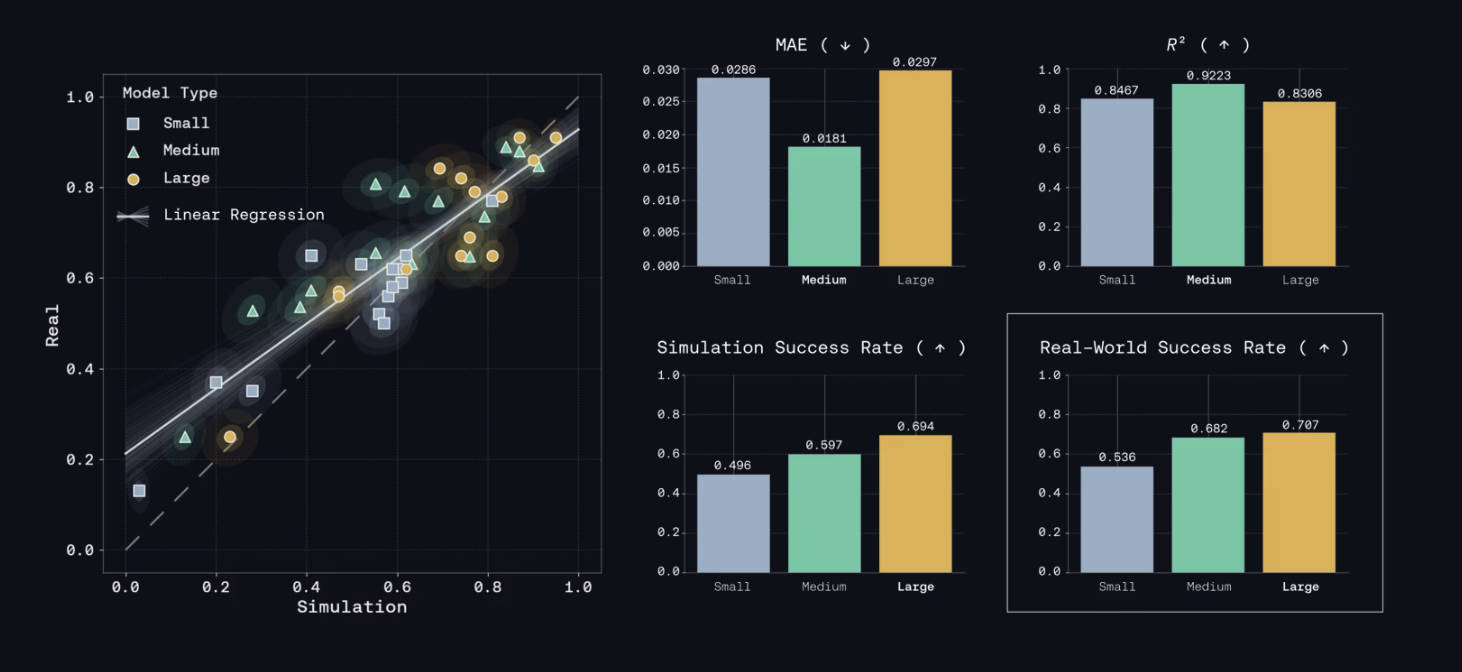

Genesis AI released Genesis World 1.0, which includes Nyx, Quadrants, and a simulation interface for accelerating robotics model development. Evaluations complete in under 0.5 hours with bit-exact (reproducible) results, and simulated rollouts show a correlation of 0.8996 with on-hardware rollouts.
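To make the 0.8996 figure concrete, here is a minimal sketch of how a sim-to-hardware correlation could be computed, assuming it is a Pearson correlation over per-policy success rates. The summary does not say which statistic or unit Genesis AI used, and the success rates below are invented for illustration.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length (at least 2 items)")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / (spread_x * spread_y)

# Hypothetical per-policy success rates; these are NOT Genesis AI's numbers.
sim_success = [0.62, 0.71, 0.45, 0.88, 0.53]
hardware_success = [0.58, 0.69, 0.50, 0.81, 0.49]
print(f"sim-to-hardware correlation: {pearson(sim_success, hardware_success):.4f}")
```

A value near 1 means policies that rank well in simulation also tend to rank well on hardware, which is what makes fast simulated evaluation a usable proxy.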

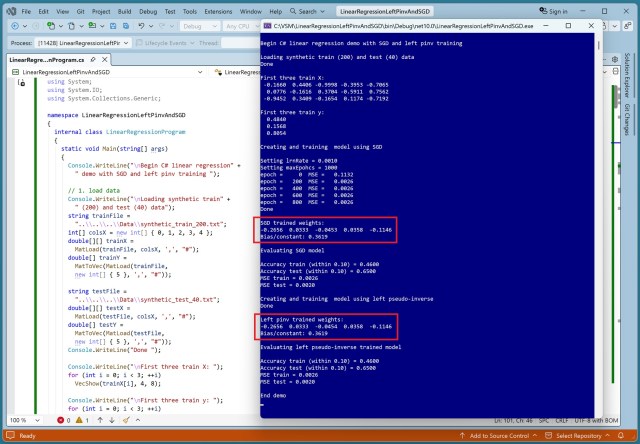

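Linear regression predicts a value as a weighted sum of the input features plus a bias term. Training techniques differ in how they handle the data: stochastic gradient descent (SGD) updates the weights from one example (or mini-batch) at a time, while L-BFGS is a quasi-Newton method that uses full-batch gradients and an approximation of the curvature.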
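The prediction rule and an SGD training loop fit in a few lines. This is a minimal sketch on made-up, noise-free data (generated from y = 2*x0 - 3*x1 + 1); the learning rate and epoch count are illustrative choices, and L-BFGS is not shown because in practice you would call a library optimizer rather than hand-roll it.

```python
import random

def predict(weights, bias, x):
    """Linear model: weighted sum of features plus a bias term."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias

def train_sgd(X, y, lr=0.05, epochs=500, seed=0):
    """Fit weights and bias by plain SGD on squared error, one example at a time."""
    rng = random.Random(seed)
    weights = [0.0] * len(X[0])
    bias = 0.0
    order = list(range(len(X)))
    for _ in range(epochs):
        rng.shuffle(order)  # visit examples in a fresh random order each epoch
        for i in order:
            # Derivative of 0.5 * (pred - target)^2 with respect to pred is (pred - target)
            err = predict(weights, bias, X[i]) - y[i]
            for j in range(len(weights)):
                weights[j] -= lr * err * X[i][j]
            bias -= lr * err
    return weights, bias

# Toy, noise-free data generated from y = 2*x0 - 3*x1 + 1 (made up for illustration).
X = [[i / 4, j / 4] for i in range(5) for j in range(5)]
y = [2 * a - 3 * b + 1 for a, b in X]
weights, bias = train_sgd(X, y)
print([round(w, 3) for w in weights], round(bias, 3))
```

Because each update touches a single example, SGD scales to datasets that do not fit in memory; the price is noisier steps, which is one reason full-batch methods like L-BFGS can converge in fewer iterations on small, well-conditioned problems.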

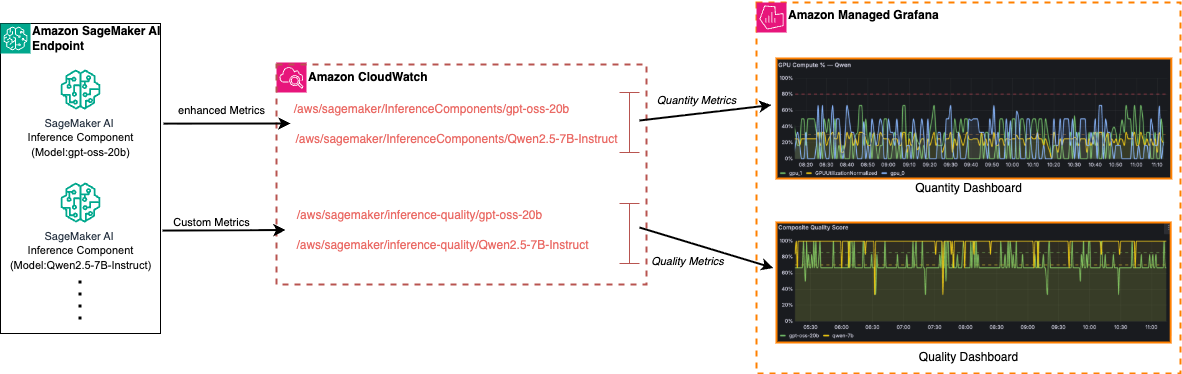

Deploying large language models (LLMs) on Amazon SageMaker AI Inference requires comprehensive observability that covers both infrastructure performance and LLM output quality. Tracking metrics such as latency, error rates, and response accuracy is crucial for optimizing cost, performance, and output quality over time.
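As a rough illustration of the infrastructure side, the sketch below rolls a window of endpoint calls up into latency percentiles and an error rate. The `Invocation` record shape is hypothetical, not SageMaker's log or CloudWatch schema, and it uses the simple nearest-rank percentile; quality metrics such as response accuracy would need a separate evaluator.

```python
import math
from dataclasses import dataclass

@dataclass
class Invocation:
    """One endpoint call; a hypothetical record shape, not SageMaker's log schema."""
    latency_ms: float
    ok: bool

def percentile(values, p):
    """Nearest-rank percentile for p in (0, 100]."""
    if not values:
        raise ValueError("no values")
    ordered = sorted(values)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]

def summarize(invocations):
    """Aggregate latency percentiles and the error rate over a window of calls."""
    latencies = [inv.latency_ms for inv in invocations]
    errors = sum(1 for inv in invocations if not inv.ok)
    return {
        "p50_ms": percentile(latencies, 50),
        "p99_ms": percentile(latencies, 99),
        "error_rate": errors / len(invocations),
    }

# Made-up window: latencies 1..100 ms, every tenth call fails.
window = [Invocation(float(i), i % 10 != 0) for i in range(1, 101)]
print(summarize(window))
```

Tail percentiles such as p99 matter more than averages for LLM endpoints, because a few very long generations can dominate user-perceived latency.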

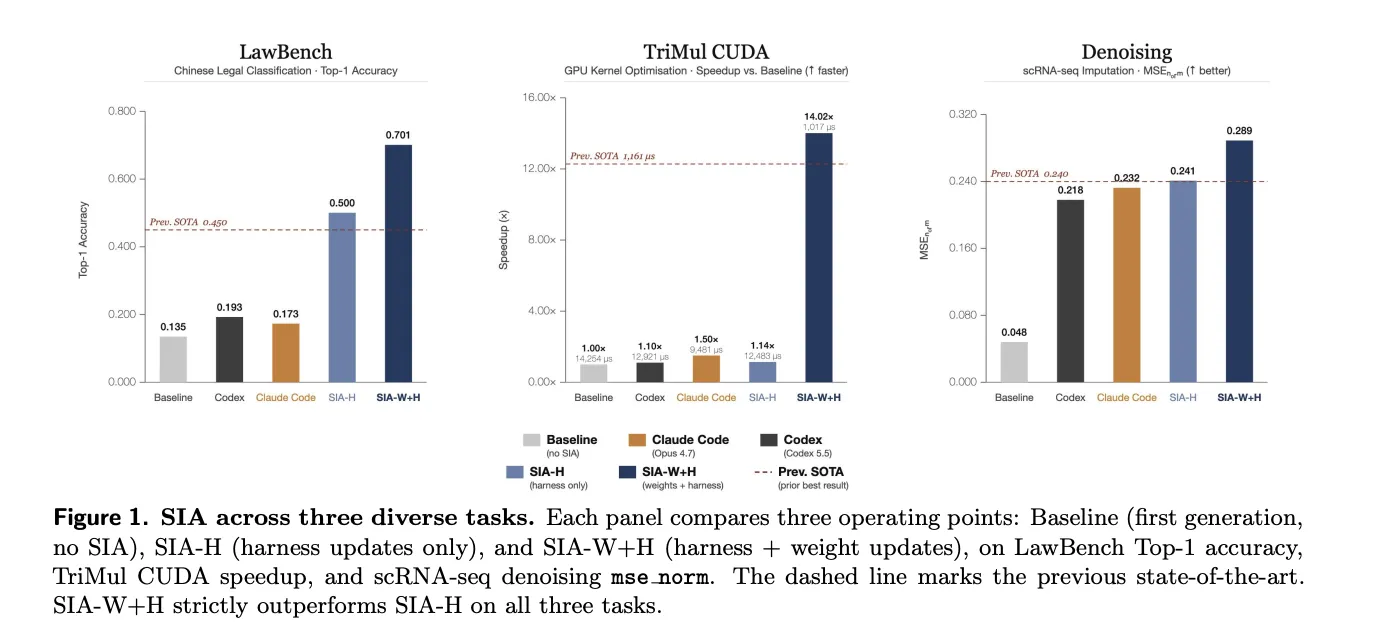

Hexo Labs released SIA (Self-Improving AI), an open-source framework that edits both the agent's scaffold and the model's weights at the same time. SIA outperformed traditional methods in three domains, with significant gains in both accuracy and speed.

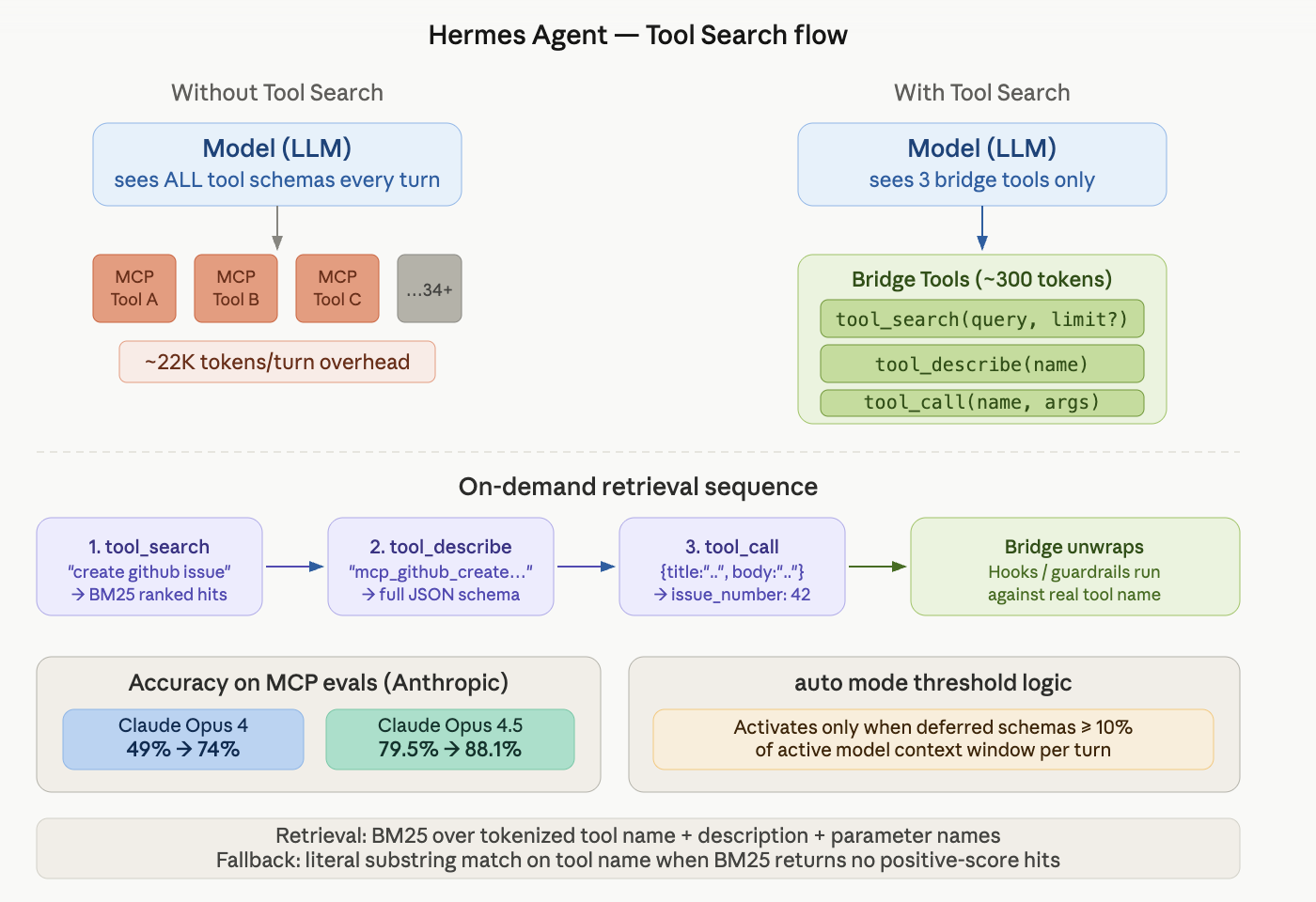

Nous Research's Hermes Agent introduces Tool Search to address a bottleneck in AI agent systems: exposing the agent to too many MCP (Model Context Protocol) tools at once. Tool Search optimizes how tools are loaded, improving accuracy and reducing cost, and internal evaluations by Anthropic showed significant accuracy improvements.
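The core idea of tool search can be sketched as a retrieval step: rank a registry of tool descriptions against the request and surface only the best matches, instead of handing the model every tool definition. This is a minimal keyword-overlap sketch; the `Tool` type, `search_tools` function, stopword list, and registry entries are all hypothetical, and it is not Hermes Agent's actual implementation or ranking method.

```python
import re
from dataclasses import dataclass

@dataclass(frozen=True)
class Tool:
    name: str
    description: str

# Tiny illustrative stopword list so common words don't dominate the ranking.
STOPWORDS = {"a", "an", "and", "the", "in", "is", "of", "to", "for", "what", "s"}

def tokenize(text):
    """Lowercase word tokens minus stopwords; underscores split tool names."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS

def search_tools(query, registry, k=3):
    """Return up to k tools whose name/description share the most words with the query."""
    query_tokens = tokenize(query)
    scored = []
    for tool in registry:
        overlap = len(query_tokens & tokenize(f"{tool.name} {tool.description}"))
        if overlap:
            scored.append((overlap, tool.name, tool))
    scored.sort(key=lambda item: (-item[0], item[1]))  # best score first, then by name
    return [tool for _, _, tool in scored[:k]]

# Hypothetical registry; a real agent might have hundreds of MCP tools here.
REGISTRY = [
    Tool("get_weather", "Return the current weather forecast for a city"),
    Tool("send_email", "Send an email message to a recipient"),
    Tool("create_calendar_event", "Create an event in the user's calendar"),
]
print([t.name for t in search_tools("what is the weather in Paris", REGISTRY)])
```

Only the returned tools' definitions would then be placed in the model's context, which is where the token savings come from. Production systems typically swap keyword overlap for embedding or BM25 retrieval.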

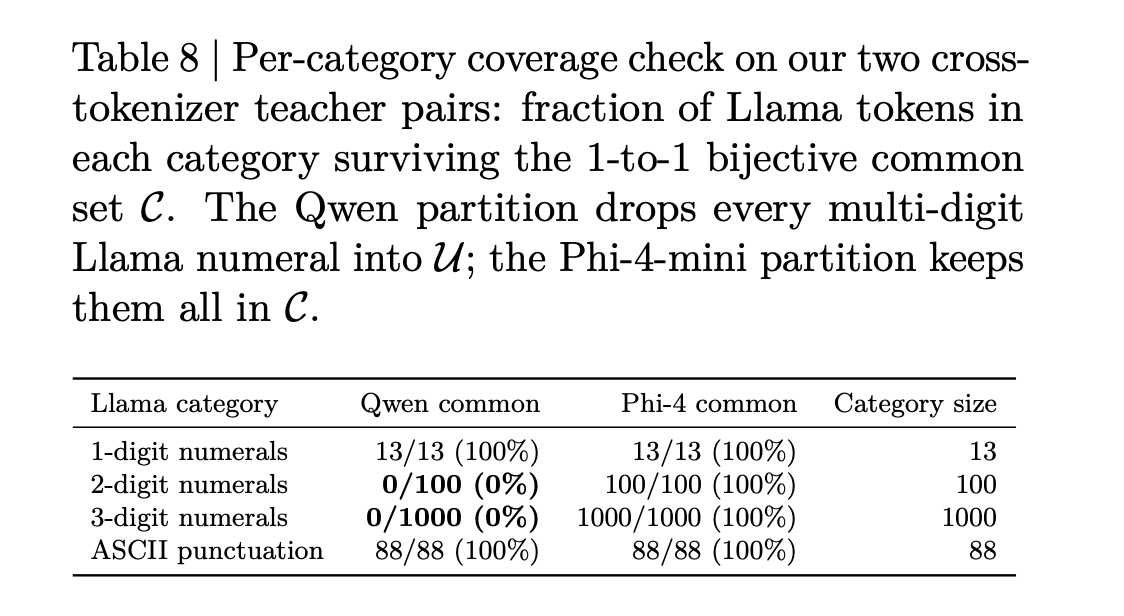

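Knowledge distillation (KD) transfers "dark knowledge" (the information in the teacher's full output distribution, not just its top prediction) from a large teacher model to a smaller student. Standard KD assumes the teacher and student share a vocabulary; when their tokenizers differ, the vocabularies are misaligned. NVIDIA's X-Token method addresses failures in current cross-tokenizer KD approaches, improving accuracy and alignment during distillation.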
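For reference, this is a minimal sketch of the classic same-vocabulary distillation loss: the KL divergence between temperature-softened teacher and student distributions, scaled by T². It deliberately assumes a shared vocabulary, which is exactly the assumption cross-tokenizer methods such as X-Token have to relax; it is not NVIDIA's method, and the logits are made up.

```python
import math

def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax; higher temperature gives a softer distribution."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def kd_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2 to keep
    gradient magnitudes comparable across temperatures."""
    if len(teacher_logits) != len(student_logits):
        # Same-vocabulary assumption: this is what cross-tokenizer KD must relax.
        raise ValueError("teacher and student logits must cover the same vocabulary")
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * (math.log(pi) - math.log(qi)) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl

teacher = [4.0, 1.5, 0.2]   # made-up next-token logits over a 3-token vocabulary
student = [3.0, 2.0, 0.5]
print(round(kd_loss(teacher, student), 4))
```

Raising the temperature spreads probability onto the non-top tokens, which is where the "dark knowledge" about relative similarities lives.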

NVIDIA Research is showcasing simulation-to-real transfer techniques that help robots adapt and operate reliably in dynamic environments. Highlights include ScheduleStream for multi-arm coordination and the COMPASS policy framework for diverse robot embodiments, both of which achieved significant improvements in success rates.

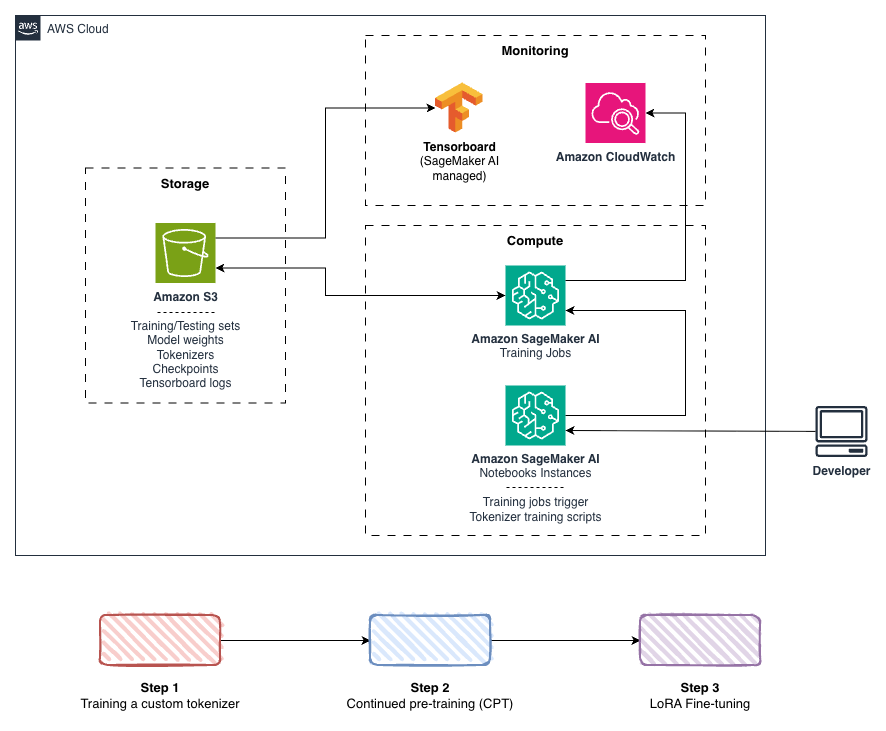

Azercell Telecom collaborated with AWS to build an Azerbaijani large language model (LLM) and chatbot. Their training framework on Amazon SageMaker AI delivers higher training throughput, lower memory usage, and double the text capacity, offering lessons for teams working with complex languages.

A machine learning model can predict a value such as income from predictors like sex, age, state, and political leaning. When some predictor values are missing, imputing them before making a prediction can produce misleading results.
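One well-known way imputation misleads (not necessarily the specific argument of the original piece) is that mean imputation preserves a column's average but shrinks its spread, because every filled-in row sits exactly at the center. This minimal sketch uses made-up ages and a hypothetical `mean_impute` helper.

```python
import statistics

def mean_impute(values):
    """Replace None entries with the mean of the observed entries."""
    observed = [v for v in values if v is not None]
    fill = statistics.mean(observed)
    return [fill if v is None else v for v in values], fill

# Hypothetical ages with three missing entries.
ages = [25, 32, None, 47, None, 51, 38, None]
observed = [a for a in ages if a is not None]
imputed, fill = mean_impute(ages)

# The mean is preserved, but the imputed rows add no spread, so the column
# looks less variable than the observed data actually is.
print(f"fill value: {fill:.1f}")
print(f"std (observed only): {statistics.pstdev(observed):.2f}")
print(f"std (after imputation): {statistics.pstdev(imputed):.2f}")
```

A model trained or queried on such values treats the guesses as if they were real measurements, so its predictions for those rows can look more certain than the data justifies.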

MIT and the Commonwealth of Massachusetts will establish the Quantum Systems Laboratory (QSL) to advance quantum research and innovation. The QSL is planned as a cutting-edge facility that supports the development of quantum technologies across a range of practical domains.

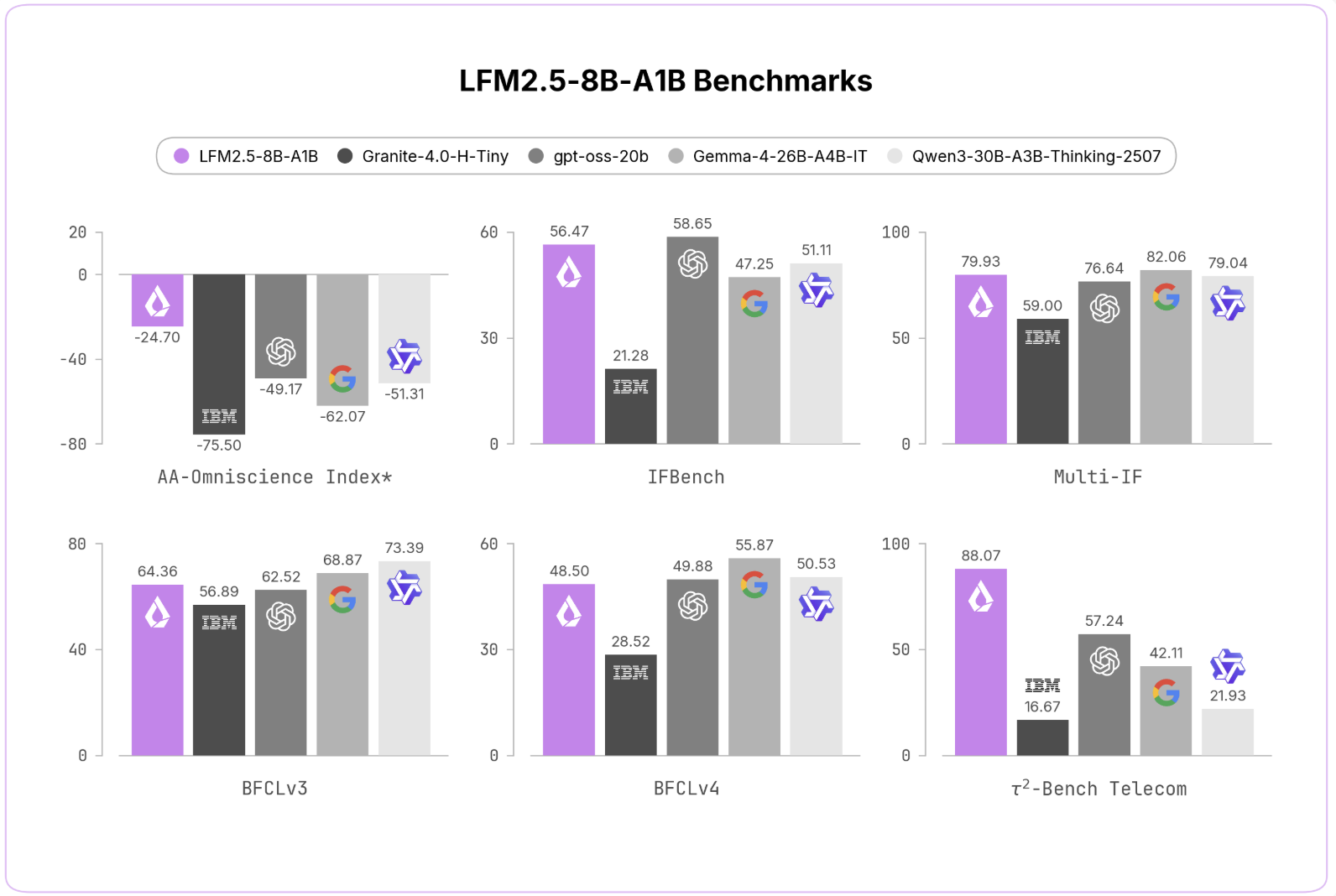

Liquid AI released LFM2.5-8B-A1B, a sparse mixture-of-experts (MoE) model for tool calling. The reasoning-only model reports improved performance across a range of benchmarks.
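Sparse MoE models keep per-token compute low by running only a few experts per token. Reading the name the usual way (about 8B total parameters, about 1B active per token) is an assumption here, since the summary does not spell it out. The sketch below shows a generic top-k router, not Liquid AI's architecture; the toy experts and gate scores are hypothetical.

```python
import math

def top_k_route(gate_logits, k):
    """Select the k highest-scoring experts and softmax-normalize their gate scores."""
    chosen = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)[:k]
    peak = max(gate_logits[i] for i in chosen)
    exps = {i: math.exp(gate_logits[i] - peak) for i in chosen}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}

def moe_forward(x, experts, gate_logits, k=2):
    """Sparse MoE layer: only the routed experts run; the rest are skipped entirely."""
    weights = top_k_route(gate_logits, k)
    return sum(w * experts[i](x) for i, w in weights.items())

# Four toy "experts"; in a real model each is a feed-forward sub-network.
experts = [lambda x: x, lambda x: 2 * x, lambda x: 3 * x, lambda x: 4 * x]
gate_logits = [0.1, -1.0, 2.0, 1.5]  # in practice produced by a learned router from x
print(moe_forward(1.0, experts, gate_logits, k=2))
```

All experts' parameters count toward the total size, but each token pays only for the k experts it is routed to, which is how a model can be large in total yet cheap per token.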

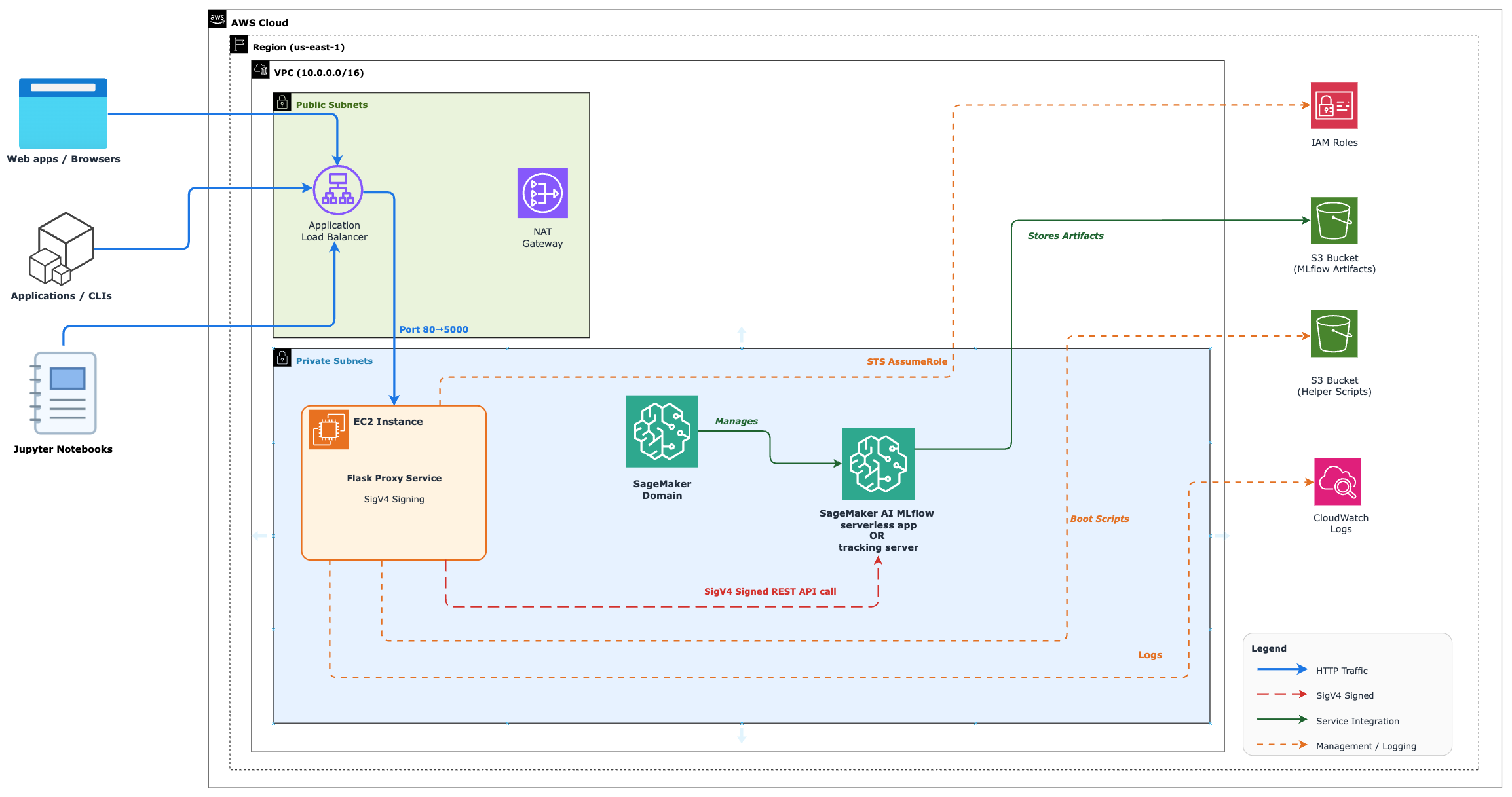

Amazon SageMaker MLflow offers comprehensive ML experiment tracking and model management. Enterprises can integrate MLflow securely with existing systems through a Flask-based proxy service, which helps meet compliance requirements and reduces integration complexity.

GeForce NOW launches 007 First Light, bringing members James Bond's origin story along with a free Elite Outfit. Members can also enjoy high-quality cloud gaming with new games and exclusive rewards, including the Resident Evil Requiem demo.

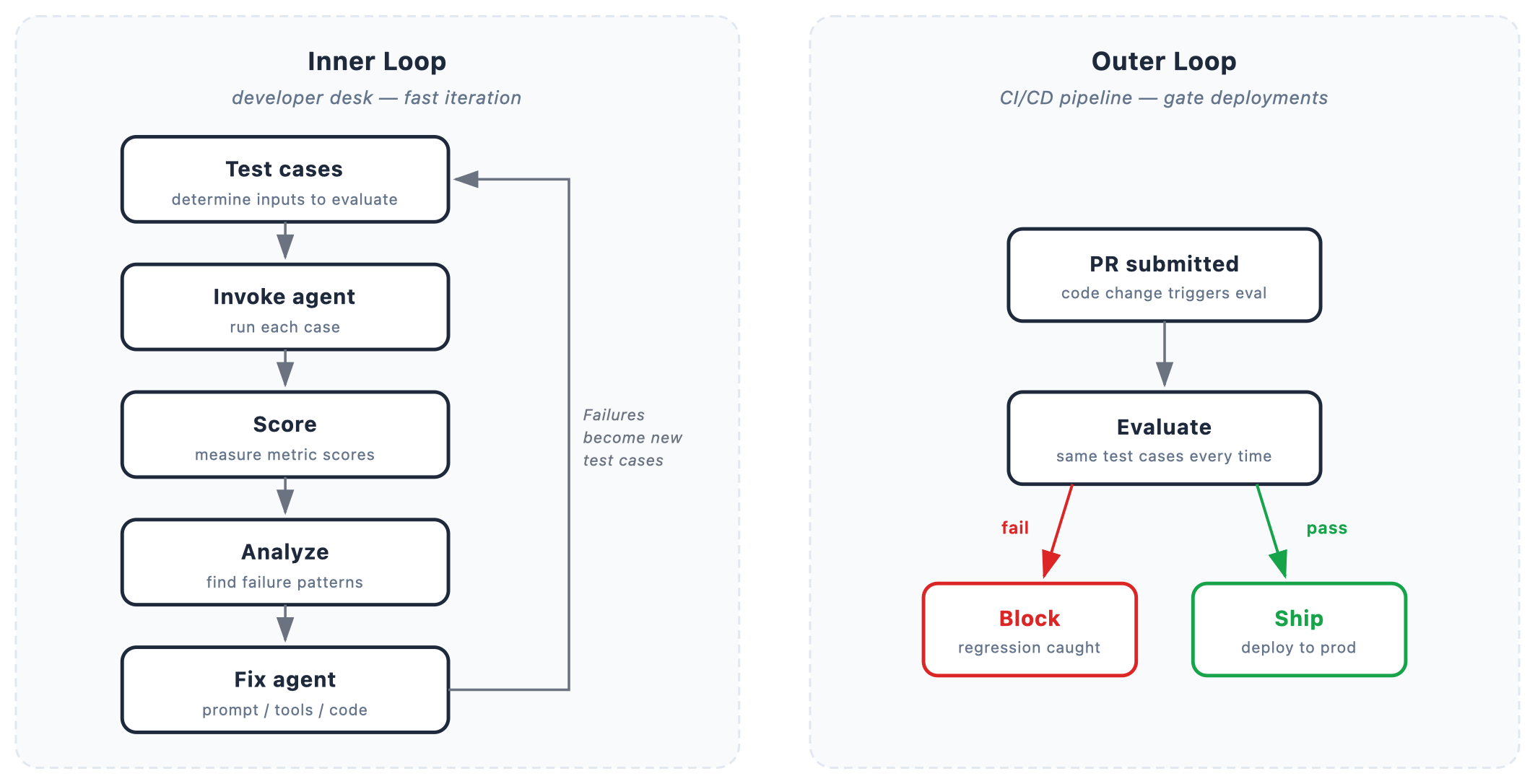

Amazon Bedrock AgentCore improves agent evaluation by combining online signals with offline baselines. Versioned datasets provide stable inputs for consistent measurement and ground-truth answers for verifiable results.
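The offline half of this pattern can be sketched as scoring an agent against a pinned dataset version and comparing the result with a stored baseline. Everything here (`Example`, `DATASETS`, `offline_score`, `passes_baseline`, exact-match scoring) is hypothetical and is not the AgentCore API; online signals, such as a success rate from live traffic, would then be compared against the same baseline.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Example:
    prompt: str
    expected: str  # ground-truth answer used for verifiable scoring

# Hypothetical versioned store: each version pins an immutable tuple of examples,
# so scores from different runs are measured on identical inputs.
DATASETS = {
    "qa-v1": (
        Example("What is 2 + 2?", "4"),
        Example("What is the capital of France?", "Paris"),
    ),
}

def offline_score(agent, version):
    """Exact-match accuracy of `agent` on a pinned dataset version."""
    examples = DATASETS[version]
    hits = sum(1 for ex in examples if agent(ex.prompt).strip() == ex.expected)
    return hits / len(examples)

def passes_baseline(agent, version, baseline, tolerance=0.0):
    """Compare a candidate agent against a stored baseline score on the same version."""
    score = offline_score(agent, version)
    return score, score >= baseline - tolerance

# Toy agent backed by a lookup table, standing in for a real agent call.
answers = {"What is 2 + 2?": "4", "What is the capital of France?": "Paris"}
good_agent = lambda prompt: answers.get(prompt, "")
print(passes_baseline(good_agent, "qa-v1", baseline=1.0))
```

Pinning the dataset version is what makes a drop in score attributable to the agent change rather than to a shifted test set.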