Effective project management in Python development is crucial. Writing clean, maintainable code is essential for readability, debugging, scalability, and reusability in professional environments. Python's popularity lies in its simplicity, comprehensibility, and versatility, but the efficiency and maintainability of projects heavily rely on developers' practices. Proper code structuring and fol...

China awaits Communist party's plan to boost economy amidst Trump's tariffs and DeepSeek's AI tech. Annual parliamentary session in Beijing sparks security measures, reminiscent of past protests.

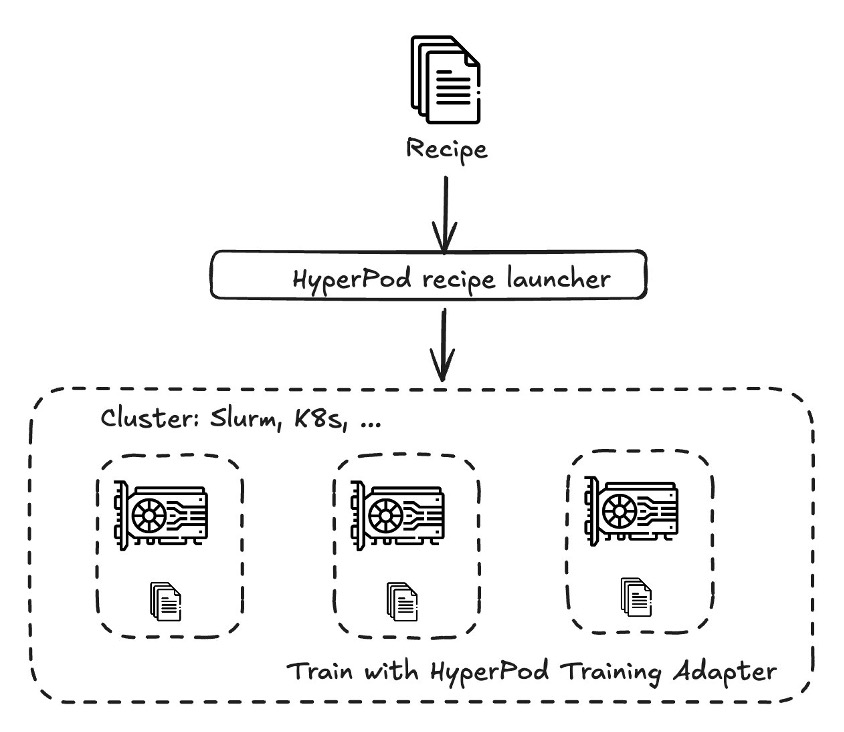

Organizations are customizing DeepSeek AI models for specific use cases but face challenges in managing computational resources. Amazon SageMaker HyperPod recipes streamline DeepSeek model customization, achieving up to a 49% improvement in ROUGE-2 score.

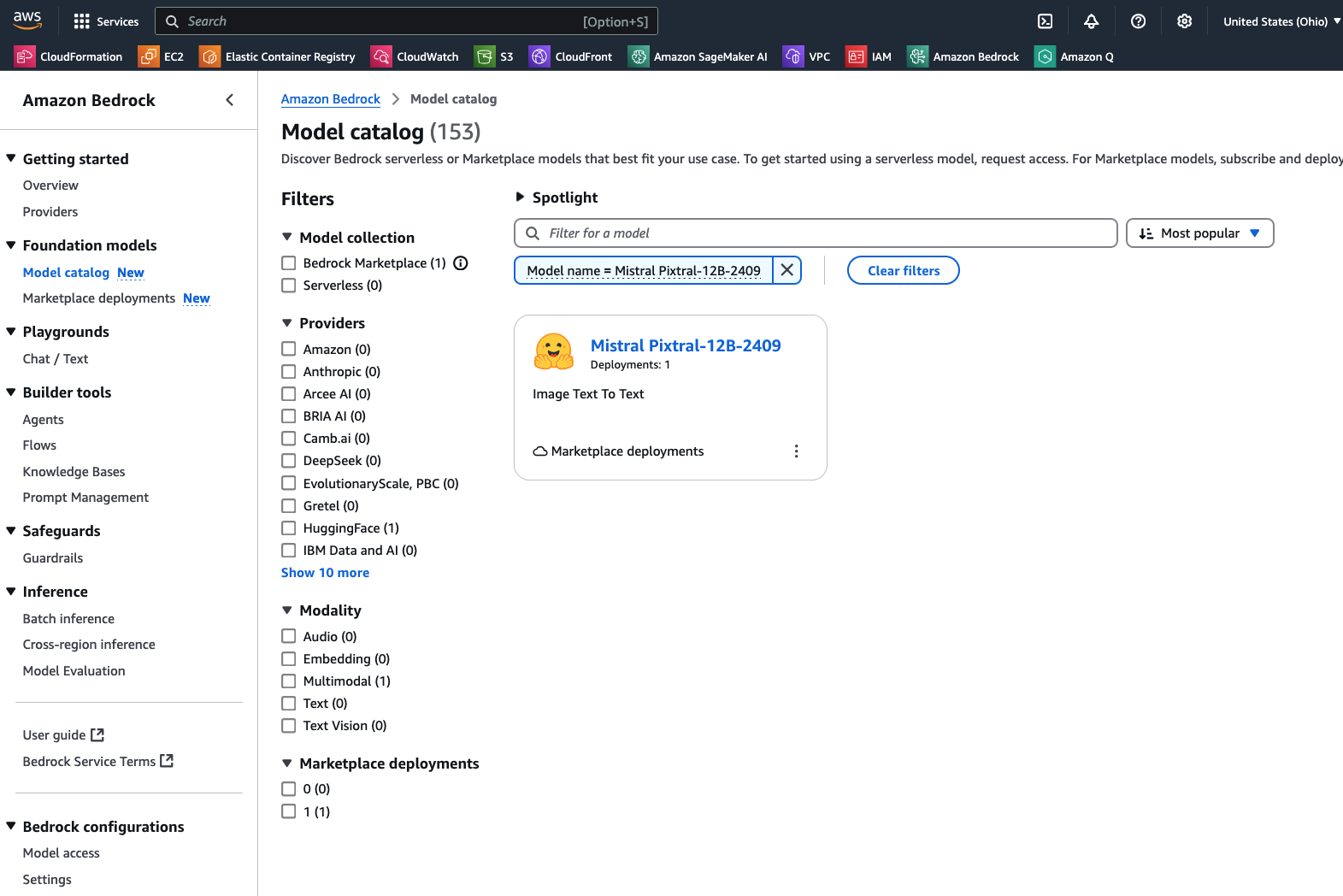

Pixtral 12B, Mistral AI's cutting-edge 12 billion parameter VLM, excels in text-only and multimodal tasks, available on Amazon Bedrock Marketplace. The model's novel architecture enables high-performance image and document comprehension, surpassing open models in various benchmarks.

New book by Karp and Zamiska explores AI, national security, and Silicon Valley's influence. Karp is a co-founder of Palantir, and his background and connections raise intrigue in the tech industry.

Prof Andrew Moran and Dr Ben Wilkinson discuss the rise of AI in university essays, challenging traditional knowledge sources. The "Tinderfication" of knowledge is reshaping public discourse and undermining critical thinking.

TUC urges stronger copyright and AI protections to prevent exploitation by tech bosses in creative industries. Unions emphasize the need for proper guardrails for workers in the face of technological advancements.

The Trump administration is laying off 6,700 IRS workers during tax season, causing chaos. AI's disruption will have far-reaching effects beyond just the IRS layoffs.

Sky Sports pundit praised for accurate assessment of India's Champions Trophy gerrymandering. Speaking truth in sports amidst industrial-scale deception is a revolutionary act.

William Boyd predicts a wave of James Bond spin-offs as Amazon acquires the franchise rights. He anticipates AI-generated novels, theme parks, and more.

Vanderbilt professor introduces son to ChatGPT AI tools for everyday tasks and learning opportunities. 11-year-old now adept at using AI for games, fact-checking, and practical applications.

MIT and GlobalFoundries partner to enhance semiconductor technologies, focusing on AI and power efficiency with silicon photonics. Collaboration between academia and industry drives transformative solutions for the next generation of chip technologies.

Two Australian men arrested in international operation targeting group distributing AI-generated child abuse images. 25 individuals arrested in investigation into child sexual exploitation, including suspects from Queensland and NSW.

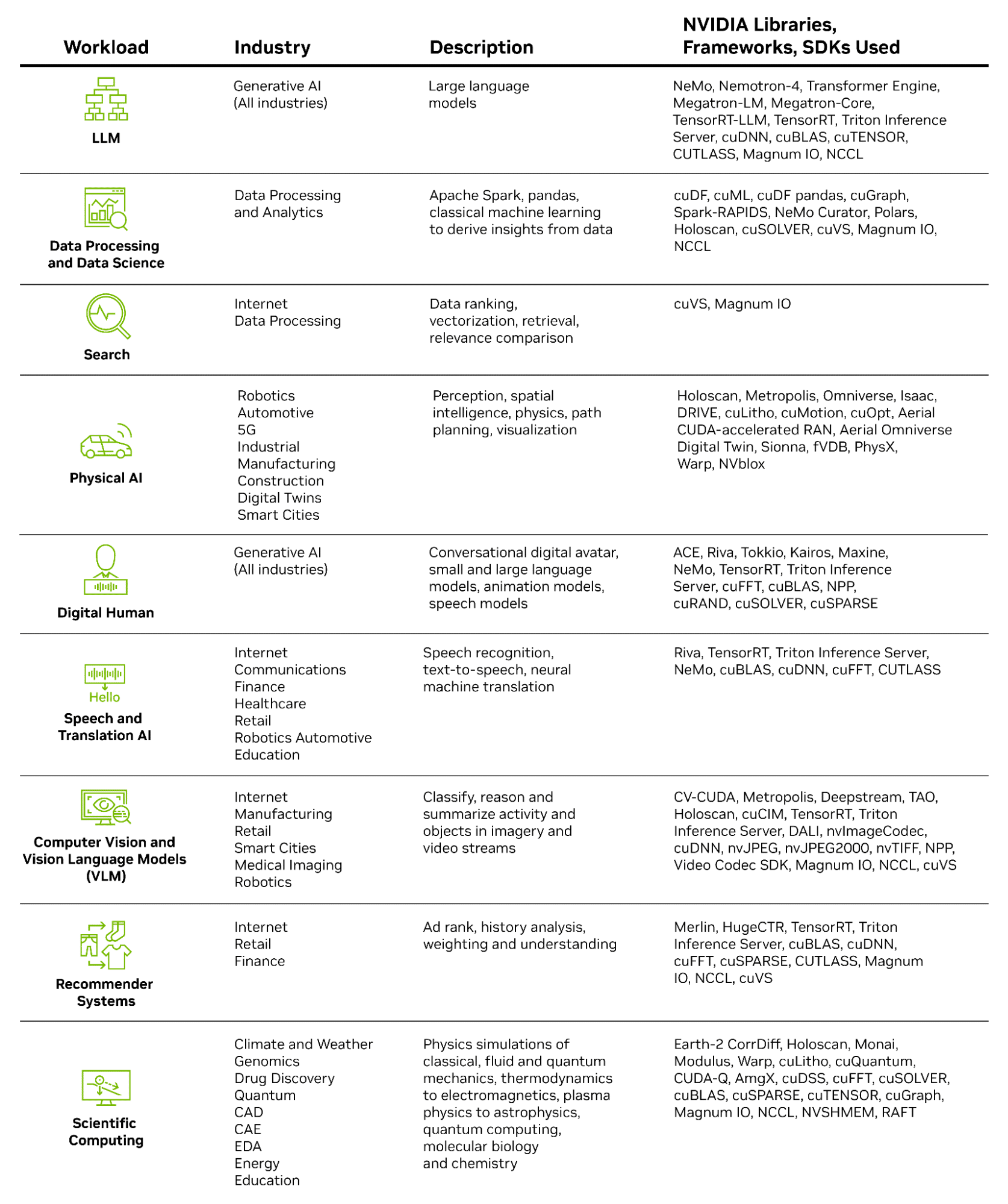

NVIDIA AI-Powered Cybersecurity enhances threat detection with real-time analysis, automation, and scalability. High-speed networking and parallel processing enable faster threat detection and response, minimizing downtime and ensuring business continuity.

Artificial intelligence company OpenAI launches video generation tool Sora in UK, sparking copyright debate led by film director Beeban Kidron. Training data is crucial to Sora's existence, intensifying the clash between the tech sector and creative industries over copyright.