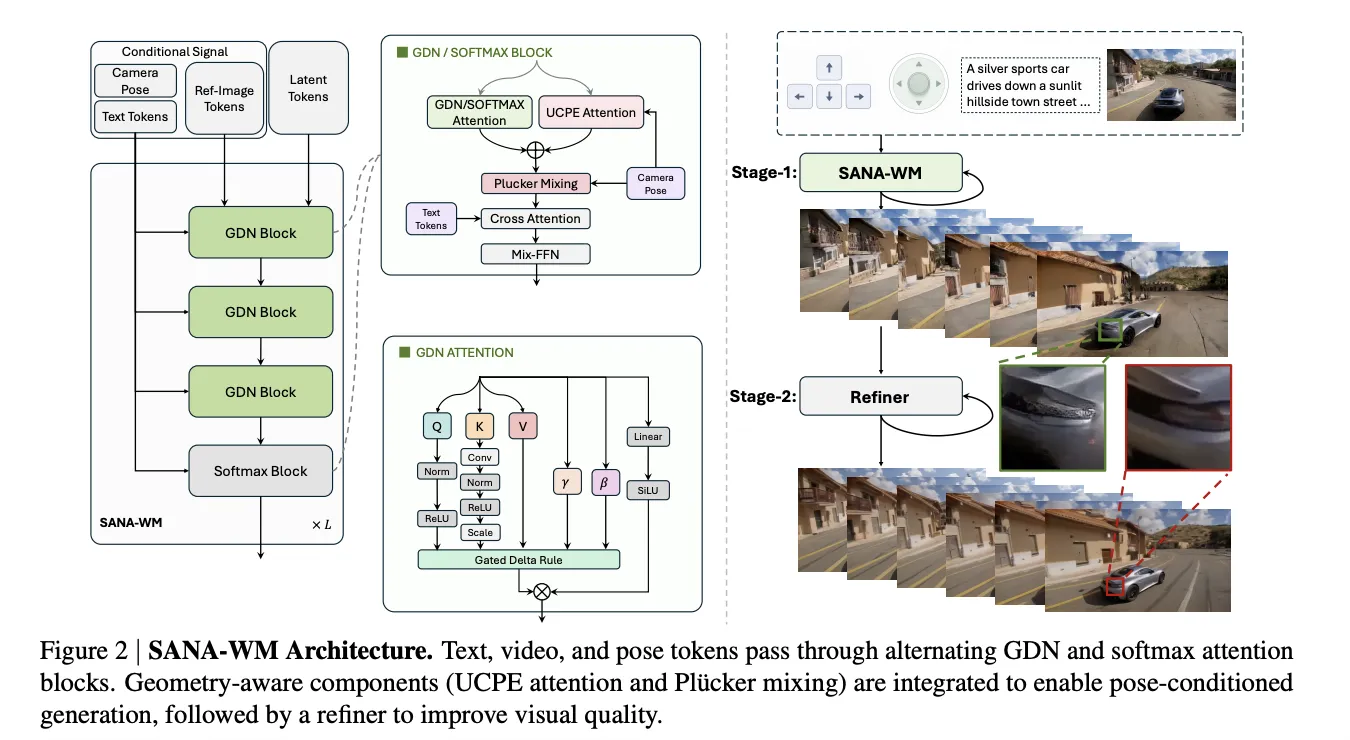

NVIDIA's SANA-WM tackles challenges in scaling world models for high-resolution video synthesis. It features a 2.6B-parameter Diffusion Transformer and supports single-GPU inference for fast deployment.

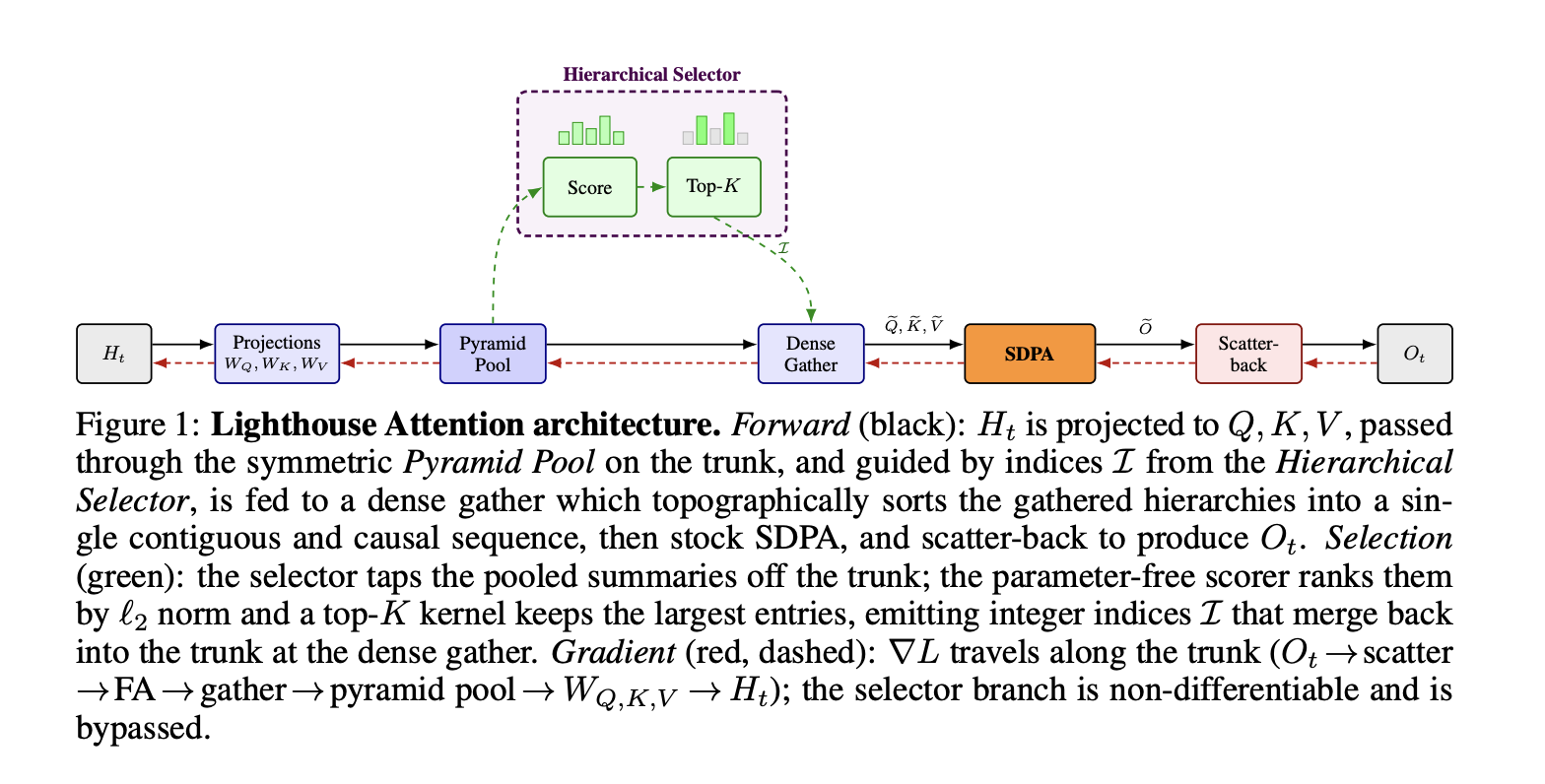

FlashAttention reduced the cost of attention in large language models. Lighthouse Attention, from Nous Research, reports faster training with lower memory usage, challenging existing sparse-attention methods. Its four-stage pipeline centers on symmetric pooling and selection logic to improve performance in transformer models.

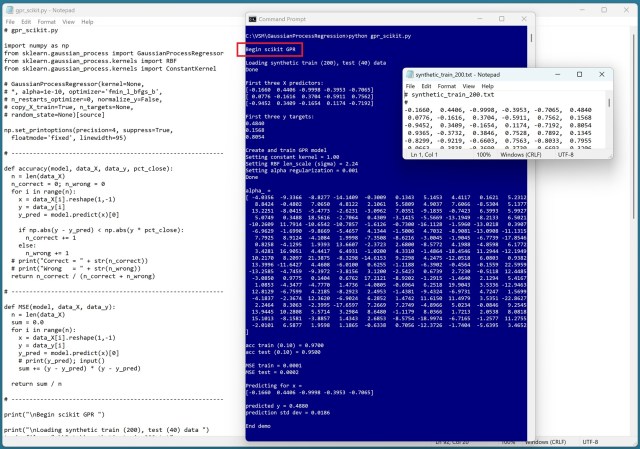

Implementing Gaussian process regression (GPR) in C# involved first exploring scikit-learn's Python module. Using scikit-learn's GPR with an RBF kernel produced accurate predictions along with confidence intervals.
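To make the idea concrete, here is a minimal from-scratch sketch of GPR with an RBF kernel for one-dimensional inputs. It is neither scikit-learn's implementation nor the C# code from the article; the function names, the fixed `length_scale`, and the `noise` value are assumptions chosen for illustration, and no hyperparameter tuning is performed.

```python
import math

def rbf_kernel(a, b, length_scale=1.0):
    """Squared-exponential (RBF) kernel for scalar inputs."""
    return math.exp(-((a - b) ** 2) / (2.0 * length_scale ** 2))

def cholesky(matrix):
    """Lower-triangular Cholesky factor of a symmetric positive-definite matrix."""
    n = len(matrix)
    lower = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(lower[i][k] * lower[j][k] for k in range(j))
            if i == j:
                lower[i][j] = math.sqrt(matrix[i][i] - s)
            else:
                lower[i][j] = (matrix[i][j] - s) / lower[j][j]
    return lower

def solve_lower(lower, rhs):
    """Forward substitution: solve L x = rhs."""
    x = []
    for i in range(len(rhs)):
        s = sum(lower[i][k] * x[k] for k in range(i))
        x.append((rhs[i] - s) / lower[i][i])
    return x

def solve_lower_transposed(lower, rhs):
    """Back substitution: solve L^T x = rhs."""
    n = len(rhs)
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(lower[k][i] * x[k] for k in range(i + 1, n))
        x[i] = (rhs[i] - s) / lower[i][i]
    return x

def gpr_predict(train_x, train_y, query_x, length_scale=1.0, noise=1e-6):
    """Return (mean, std) of the GP posterior at a single query point."""
    n = len(train_x)
    # Kernel matrix of the training inputs, with noise added on the diagonal.
    k_train = [
        [rbf_kernel(train_x[i], train_x[j], length_scale) + (noise if i == j else 0.0)
         for j in range(n)]
        for i in range(n)
    ]
    lower = cholesky(k_train)
    # alpha = K^{-1} y, computed with two triangular solves.
    alpha = solve_lower_transposed(lower, solve_lower(lower, train_y))
    k_star = [rbf_kernel(x, query_x, length_scale) for x in train_x]
    mean = sum(k * a for k, a in zip(k_star, alpha))
    v = solve_lower(lower, k_star)
    variance = rbf_kernel(query_x, query_x, length_scale) - sum(t * t for t in v)
    return mean, math.sqrt(max(variance, 0.0))

# Toy data (hypothetical): samples of sin(x) at four points.
train_x = [0.0, 1.0, 2.0, 3.0]
train_y = [math.sin(x) for x in train_x]

mean, std = gpr_predict(train_x, train_y, 1.5)
# An approximate 95% interval is mean +/- 1.96 * std.
interval = (mean - 1.96 * std, mean + 1.96 * std)
```

The standard deviation shrinks toward zero near training points and grows toward the prior value of 1 far from them, which is where the confidence intervals come from.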

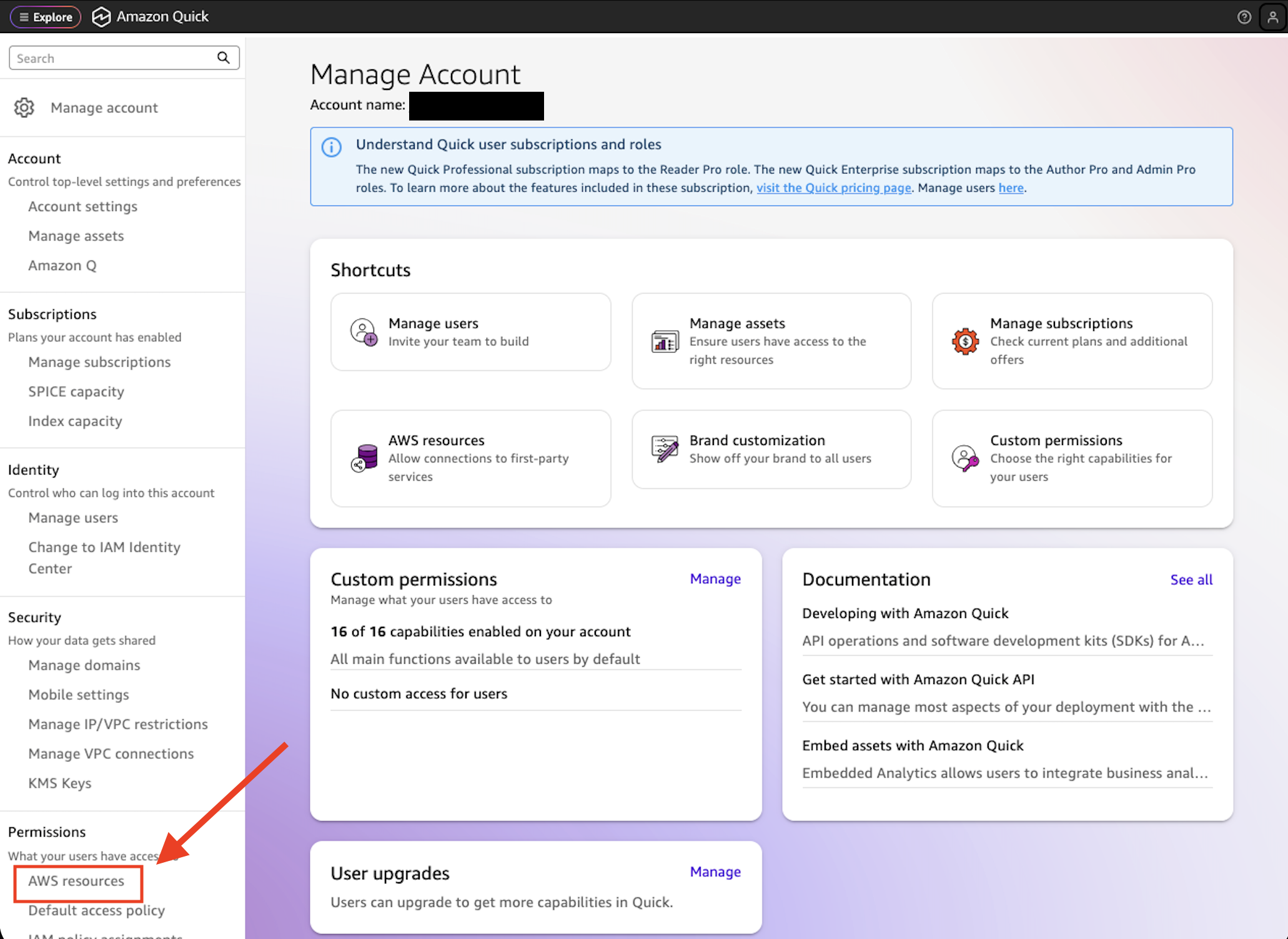

AI-driven search and chat help employees find answers in large repositories. Amazon Quick now offers document-level ACL support for fine-grained control over access to sensitive documents, ensuring compliance and data governance.
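As a rough illustration of what document-level ACL filtering means in a search pipeline, the sketch below drops any matching document the caller is not allowed to read. It is not Amazon Quick's API; the `Document` type, `can_read`, and `search` are hypothetical names, and the substring match stands in for a real retrieval step.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    # Hypothetical ACL fields: who may read this document.
    allowed_users: frozenset = field(default_factory=frozenset)
    allowed_groups: frozenset = field(default_factory=frozenset)

def can_read(doc, user, groups):
    """True if the user is listed directly or belongs to an allowed group."""
    return user in doc.allowed_users or bool(doc.allowed_groups & set(groups))

def search(documents, query, user, groups):
    """Return IDs of documents that match the query AND pass the ACL check."""
    needle = query.lower()
    return [
        doc.doc_id
        for doc in documents
        if needle in doc.text.lower() and can_read(doc, user, groups)
    ]

corpus = [
    Document("hr-1", "Salary bands for 2025", allowed_groups=frozenset({"hr"})),
    Document("eng-1", "Salary survey results for engineers",
             allowed_users=frozenset({"bob"})),
    Document("pub-1", "Office opening hours", allowed_groups=frozenset({"everyone"})),
]

alice_hits = search(corpus, "salary", user="alice", groups=["hr", "everyone"])
bob_hits = search(corpus, "salary", user="bob", groups=["everyone"])
```

Filtering before results reach the chat or answer-generation step is the design point: a document the user cannot open never contributes to the answer.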

Black-box AI models pose challenges in decision-making and can lead to potentially costly outcomes. Dr. James McCaffrey highlights the need for explainable AI to bridge the gap between accuracy and transparency in high-stakes business decisions.

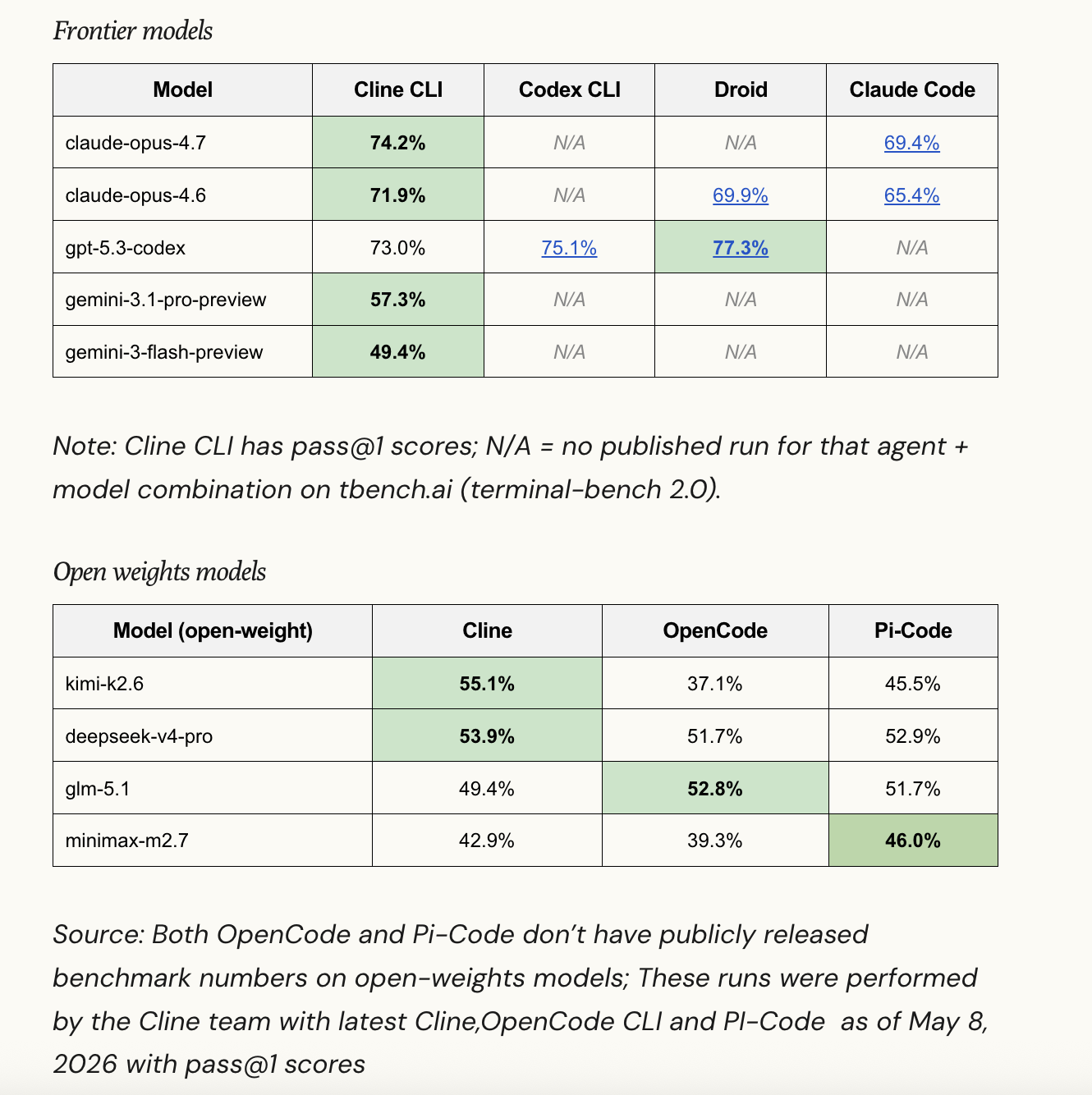

Cline, the popular open-source AI coding agent, introduces a new SDK to rebuild its products for better maintainability and flexibility. The SDK, @cline/sdk, offers a layered TypeScript stack for seamless integration and improved performance, with individual packages for customizable solutions.

MIT master’s students Sunshine Jiang ’25 and Rupert Li ’24 receive the prestigious Knight-Hennessy Scholarship for graduate studies at Stanford University. Jiang focuses on embodied AI and robotics, while Li works in mathematics and has received multiple other scholarships and awards.

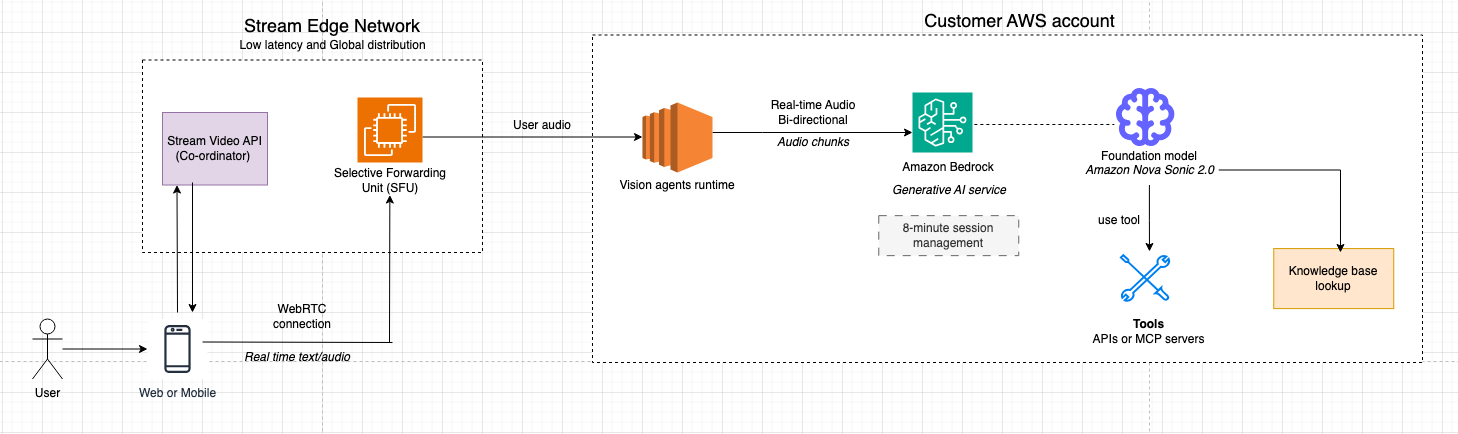

Stream's Vision Agents framework, combined with Amazon Bedrock and Amazon Nova 2 Sonic, simplifies building real-time voice agents. The solution streamlines complex AI pipelines, handling audio streaming, speech recognition, and multilingual support for seamless user experiences.

GeForce NOW introduces Subnautica 2 for seamless gaming on any device. HITMAN World of Assassination event offers unique rewards, including items inspired by CASINO ROYALE.

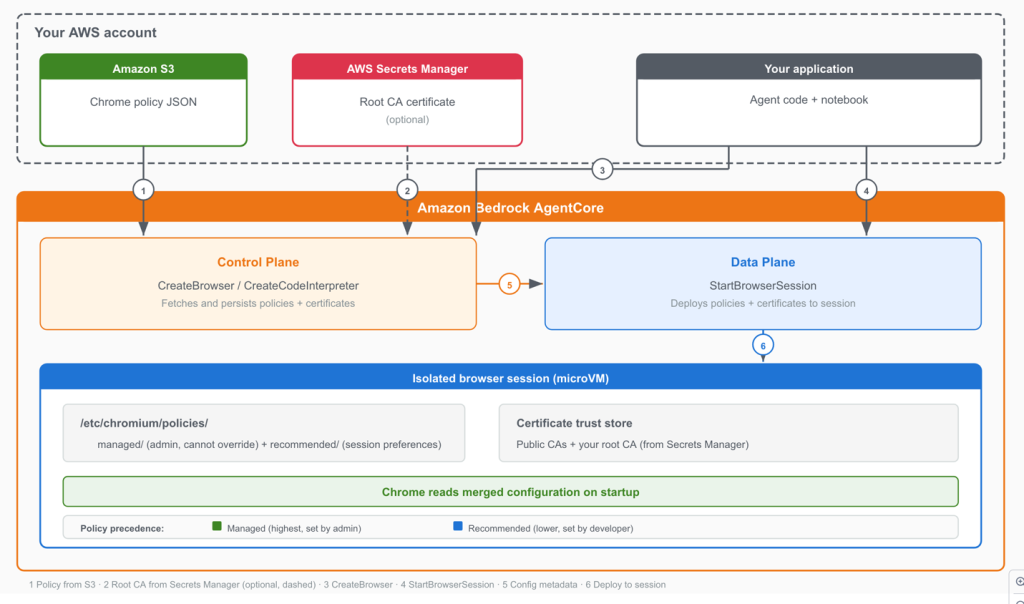

Amazon Bedrock AgentCore Browser now supports Chrome enterprise policies and custom root CA certificates, providing granular control over AI agent browser behavior and connectivity. Chrome policies restrict agent scope, disable risky browser features, and separate policy management from agent development, enhancing security for organizations using AI agents with unrestricted web access.
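For a sense of what such a policy set might contain, the sketch below builds a small Chrome enterprise policy document as JSON. The policy names are standard Chrome enterprise policies, but the values and domains are illustrative assumptions, and how the file is attached to an AgentCore Browser session is not shown because the summary does not describe it.

```python
import json

# Illustrative policy set (assumed values, hypothetical domains).
policy = {
    # Block every URL by default, then allow a short list of hosts.
    "URLBlocklist": ["*"],
    "URLAllowlist": ["example.com", "docs.example.com"],
    # Turn off features an unattended agent should not use.
    "PasswordManagerEnabled": False,
    "DownloadRestrictions": 3,        # 3 = block all downloads
    "DeveloperToolsAvailability": 2,  # 2 = disallow developer tools
}

policy_json = json.dumps(policy, indent=2, sort_keys=True)
print(policy_json)
```

Keeping the policy in its own file is what lets security teams manage it separately from the agent code.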

Amazon Lex Assisted NLU enhances bot accuracy by understanding natural language variations without manual configuration. It improves intent classification by 92% and slot resolution by 84%, with positive feedback from early adopters.

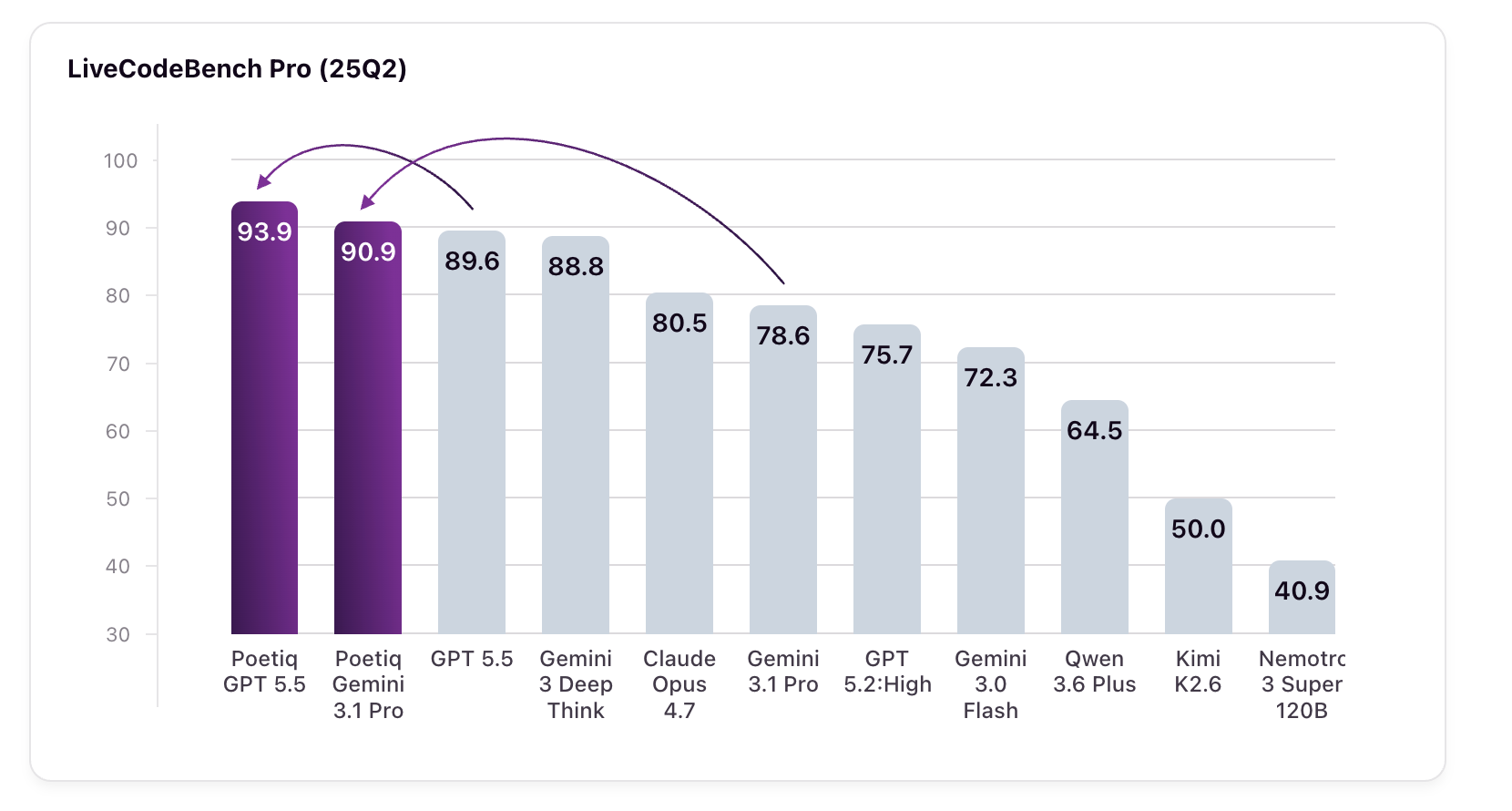

Poetiq's Meta-System achieves strong results on LiveCodeBench Pro, significantly boosting the scores of GPT 5.5 High and Gemini 3.1 Pro. The gains come from harnessing existing models on coding challenges without fine-tuning them.

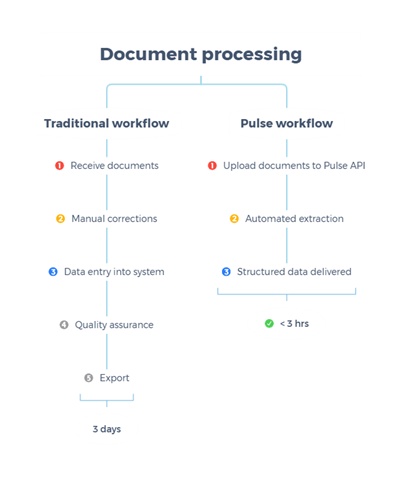

Financial institutions face costly errors due to OCR mistakes in financial data. Pulse AI and Amazon Bedrock offer a solution for accurate extraction and analysis of complex financial documents, saving time and improving accuracy for organizations like Samsung and Fortune 500 firms.

Thinking Machines Lab challenges the turn-based model of AI interaction, introducing interaction models for real-time collaboration. The architecture pairs an interaction model that stays in continuous exchange with the user with a background model that handles deeper tasks.

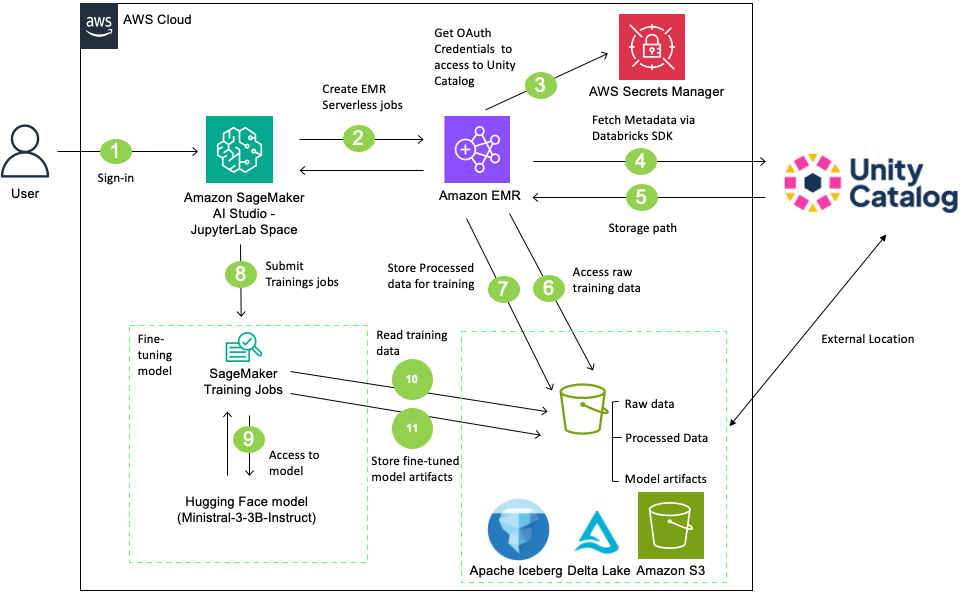

Fine-tune large language models with Amazon SageMaker AI and Databricks Unity Catalog, ensuring strict data governance and compliance. Securely integrate Unity Catalog with SageMaker AI using EMR Serverless for preprocessing, tracking data lineage without compromising security.