Amazon Bedrock Data Automation streamlines data extraction from financial documents with custom blueprints for accuracy and efficiency. Foundation models like Anthropic Claude enhance OCR capabilities for structured, actionable data extraction.

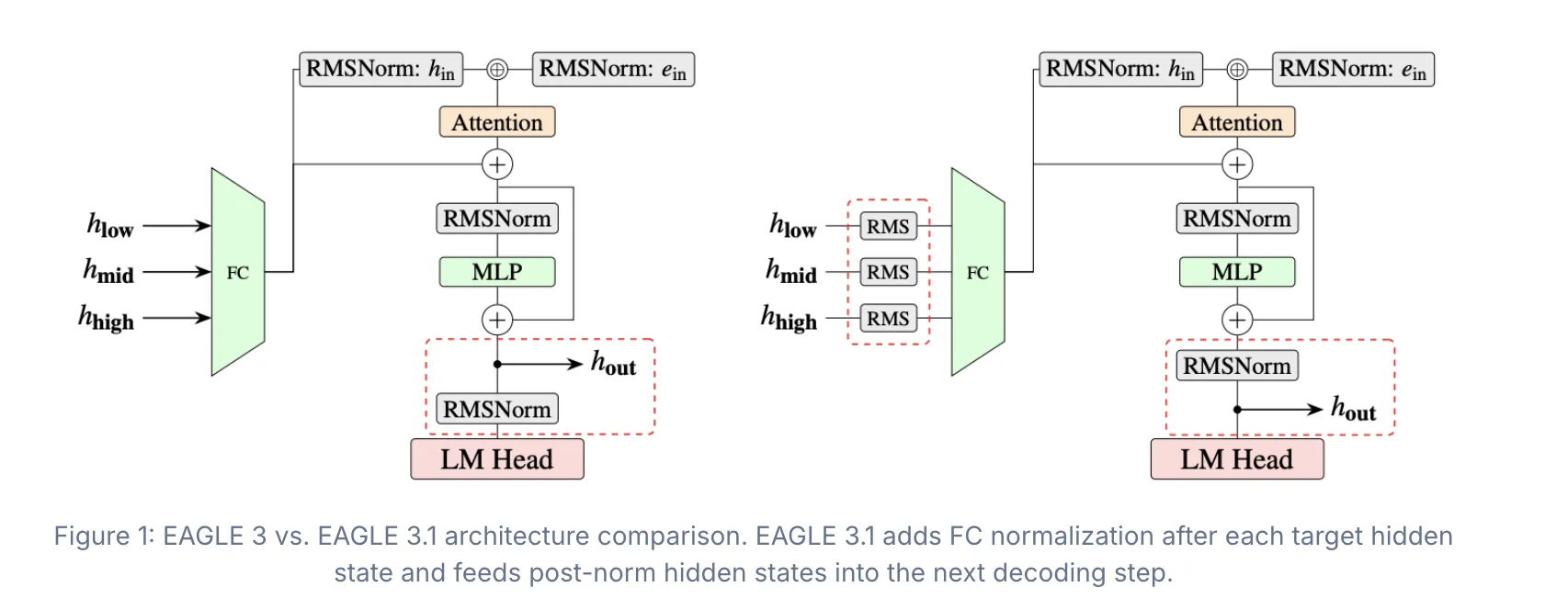

The EAGLE team introduces EAGLE 3.1, which fixes attention drift in speculative decoding to improve stability and performance across environments. TorchSpec streamlines training for EAGLE 3.1, supporting research and deployment of speculative decoding algorithms.

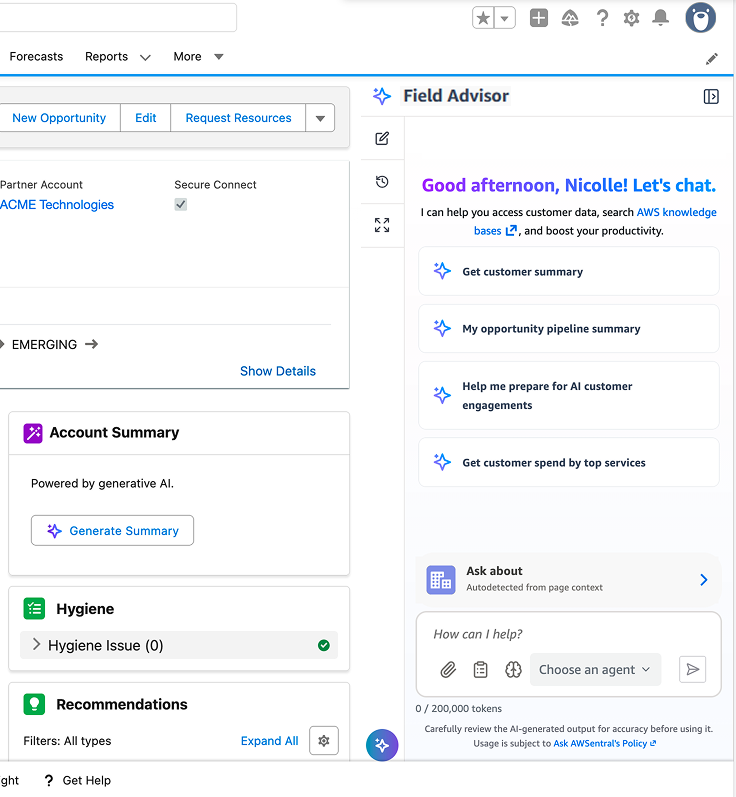

Field Advisor on Amazon Bedrock AgentCore streamlines agent orchestration for AWS Sales, reducing cognitive load and improving customer interactions. This internal conversational assistant enhances productivity by routing requests to specialized agents, enabling sales reps to focus on customer needs.

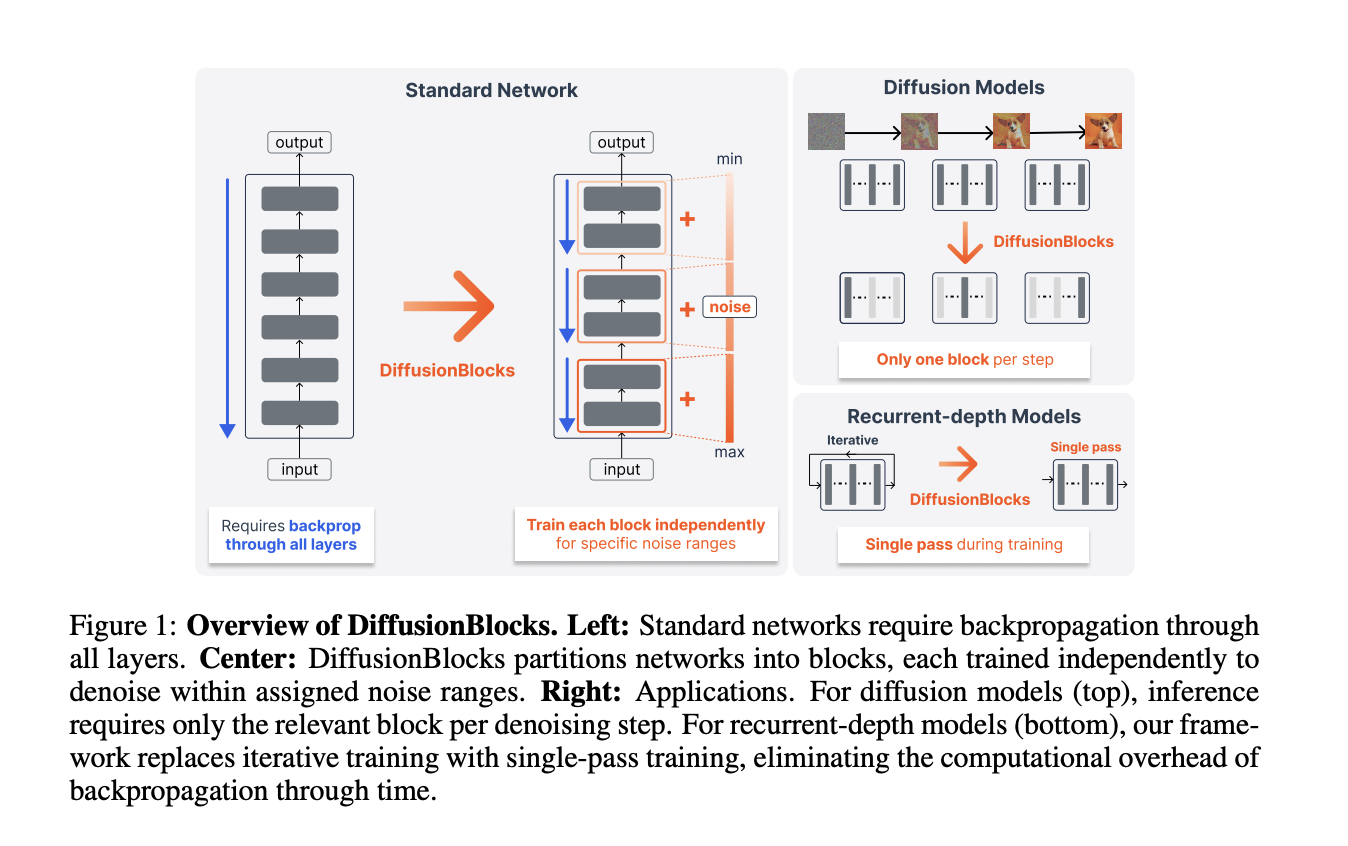

Sakana AI and the University of Tokyo propose DiffusionBlocks, which reduces memory usage in neural network training. Residual connections mimic Euler steps, enabling independent training of each block.
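
The Euler-step reading of a residual connection can be illustrated numerically. This is a minimal sketch, not DiffusionBlocks itself: the vector field `f`, the step size `h`, and all function names are assumptions chosen for illustration, with `f` standing in for a learned block.

```python
import math


def f(x: float) -> float:
    """Hypothetical vector field dx/dt = -x (a stand-in for a learned block)."""
    return -x


def residual_block(x: float, h: float) -> float:
    """Residual update x + h * f(x), which is one explicit Euler step of size h."""
    return x + h * f(x)


def stack_blocks(x0: float, num_blocks: int, total_time: float = 1.0) -> float:
    """Apply num_blocks residual updates that together cover total_time."""
    h = total_time / num_blocks
    x = x0
    for _ in range(num_blocks):
        x = residual_block(x, h)
    return x


# The exact solution of dx/dt = -x with x(0) = 1 at time 1 is exp(-1).
exact = math.exp(-1.0)
approx_8 = stack_blocks(1.0, 8)
approx_64 = stack_blocks(1.0, 64)
```

Stacking more residual blocks with a smaller step brings the result closer to the exact solution, which is the sense in which a deep residual stack resembles a numerical ODE solver.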

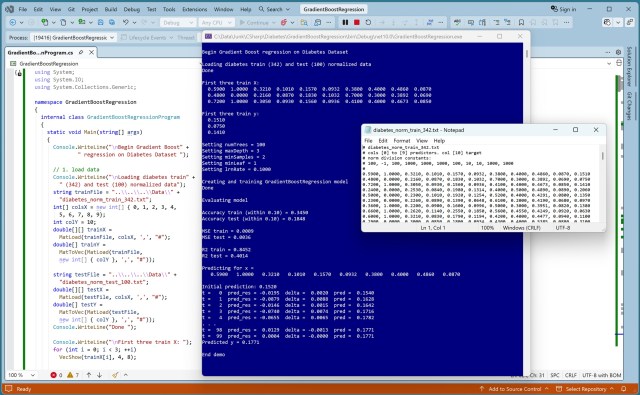

To practice coding skills, a developer tests a gradient boosting regression model on the Diabetes Dataset, highlighting the clever technique behind this ensemble model. Implementing 100 decision trees in C#, the developer explores the subtle yet effective approach of predicting residuals to improve accuracy.
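
The residual-prediction idea can be sketched in a few lines. The example below is an illustration in Python, not the developer's C# code: it uses one-split trees (stumps) on a toy one-dimensional dataset, and the learning rate, data, and function names are all assumptions.

```python
from typing import List, Tuple

Stump = Tuple[float, float, float]  # (threshold, left_value, right_value)


def fit_stump(xs: List[float], residuals: List[float]) -> Stump:
    """Pick the single split on x that minimizes squared error on the residuals."""
    mean = sum(residuals) / len(residuals)
    best: Stump = (xs[0], mean, mean)  # fallback when no valid split exists
    best_err = float("inf")
    for t in sorted(set(xs)):
        left = [r for x, r in zip(xs, residuals) if x <= t]
        right = [r for x, r in zip(xs, residuals) if x > t]
        if not left or not right:
            continue
        lm, rm = sum(left) / len(left), sum(right) / len(right)
        err = sum((r - lm) ** 2 for r in left) + sum((r - rm) ** 2 for r in right)
        if err < best_err:
            best_err, best = err, (t, lm, rm)
    return best


def predict_stump(stump: Stump, x: float) -> float:
    threshold, left_value, right_value = stump
    return left_value if x <= threshold else right_value


def fit_boosted(xs: List[float], ys: List[float], n_trees: int = 100, lr: float = 0.1):
    """Start from the mean, then repeatedly fit a stump to the current residuals."""
    base = sum(ys) / len(ys)
    preds = [base] * len(ys)
    stumps: List[Stump] = []
    for _ in range(n_trees):
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        preds = [p + lr * predict_stump(stump, x) for p, x in zip(preds, xs)]
    return base, lr, stumps


def predict(model, x: float) -> float:
    base, lr, stumps = model
    return base + lr * sum(predict_stump(s, x) for s in stumps)


def mse(ys: List[float], preds: List[float]) -> float:
    return sum((y - p) ** 2 for y, p in zip(ys, preds)) / len(ys)


# Toy data (hypothetical): y = x^2 on ten points.
xs = [float(i) for i in range(10)]
ys = [x * x for x in xs]
model = fit_boosted(xs, ys)
baseline_mse = mse(ys, [sum(ys) / len(ys)] * len(ys))
boosted_mse = mse(ys, [predict(model, x) for x in xs])
```

Each stump only has to correct what the ensemble so far still gets wrong, so the training error shrinks round by round relative to the mean-only baseline.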

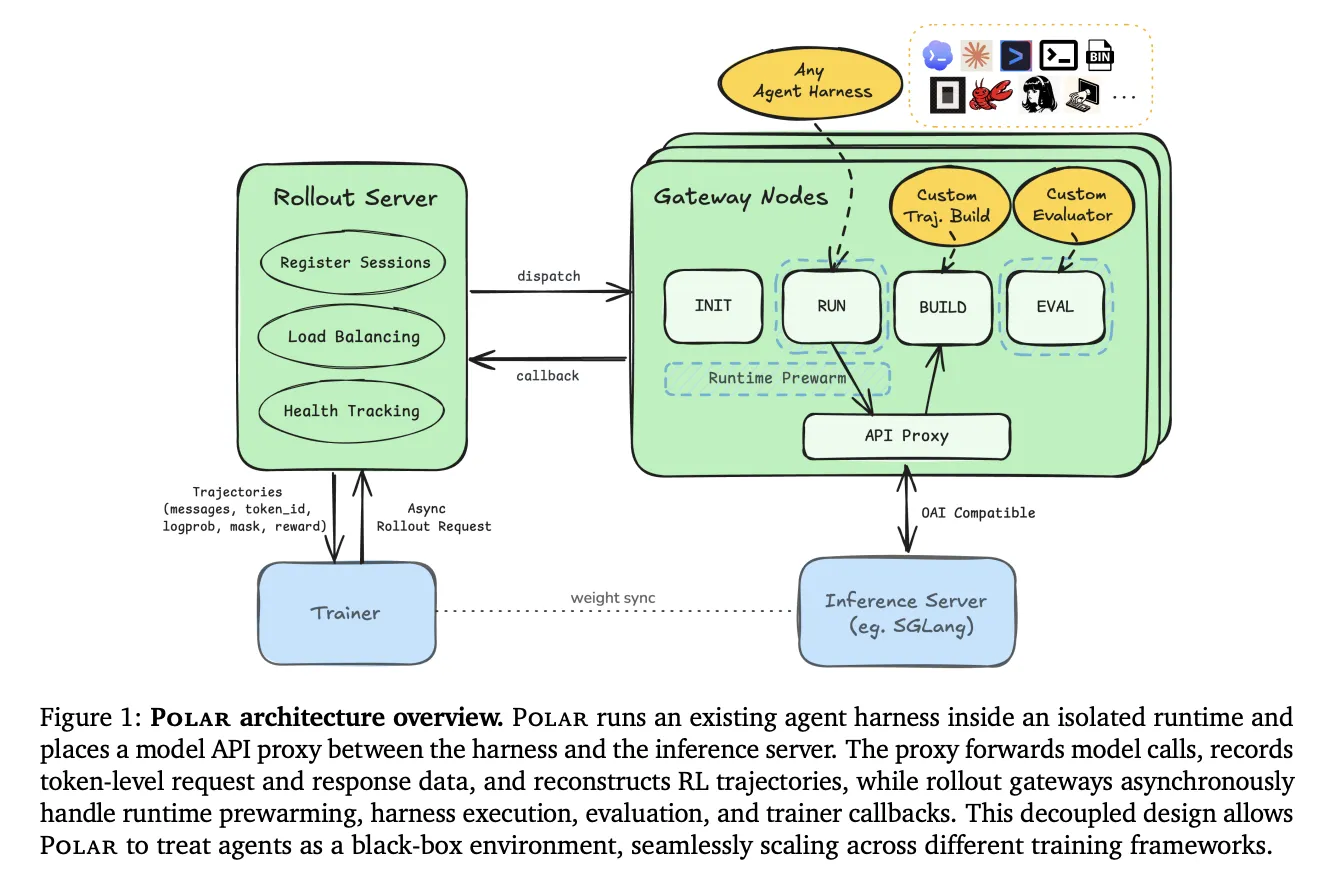

NVIDIA introduces Polar, a framework for reinforcement learning in language agents. Polar simplifies integration with existing agent software, allowing researchers to run reinforcement learning without modifying the agent harness.

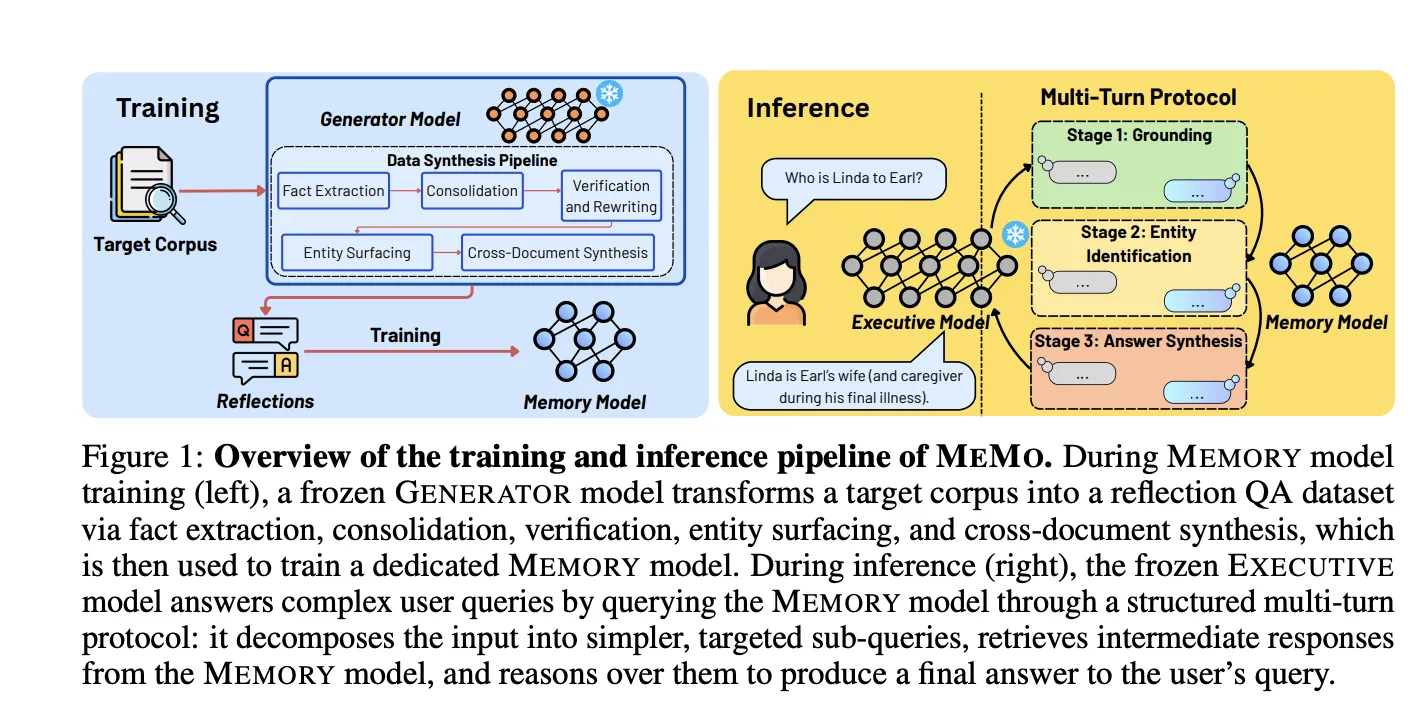

Researchers from the National University of Singapore and MIT propose MEMO to integrate new knowledge into large language models without degrading previous knowledge. MEMO separates memory and reasoning, training a separate MEMORY model to internalize knowledge from a corpus, enhancing transferability across models.

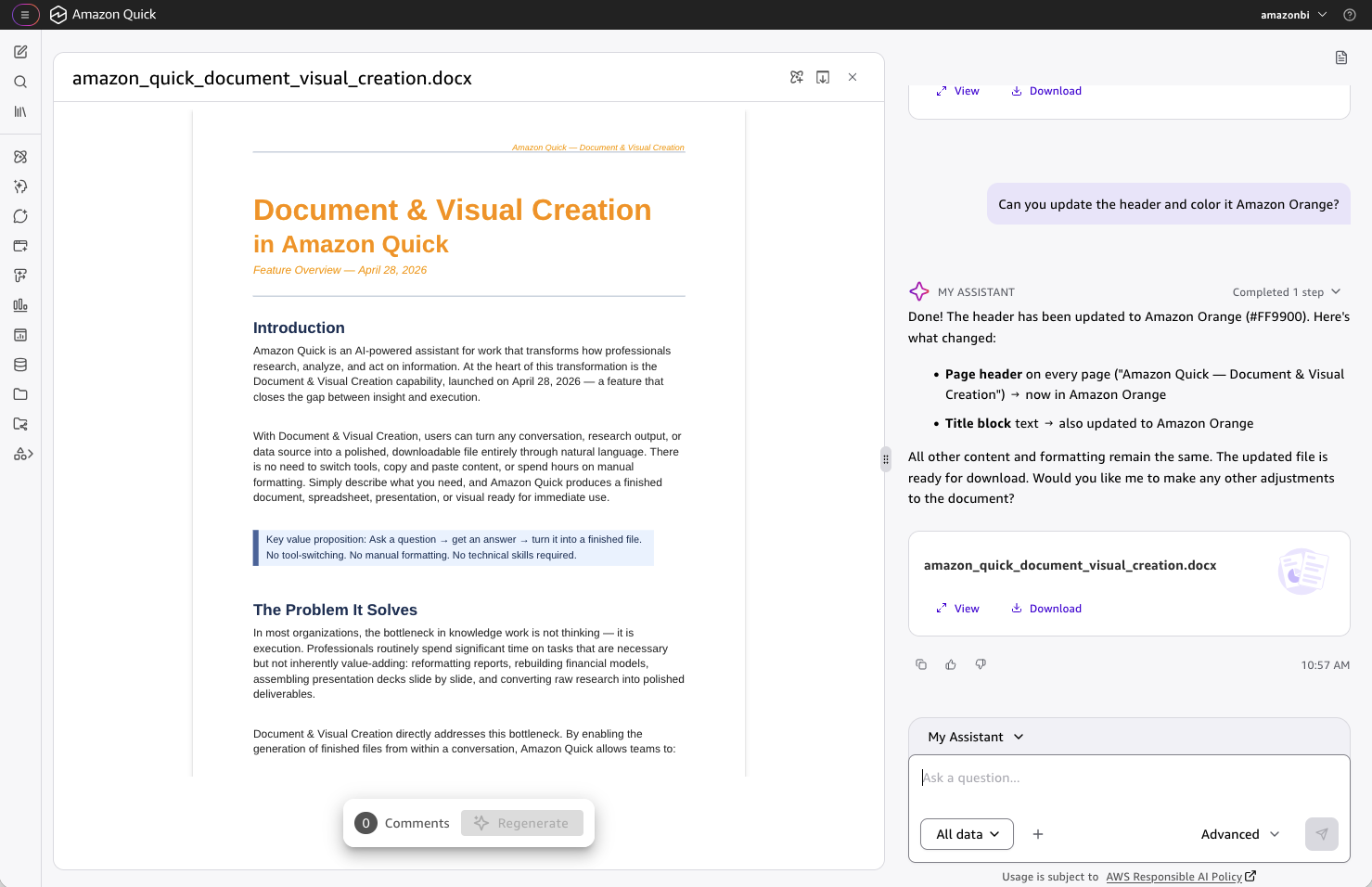

Amazon Quick simplifies document creation by pulling live data from various sources and generating professional-grade documents and visuals, saving time on mechanical tasks. It supports five output types, including fully editable files that preserve formatting and data integrity, streamlining the end-to-end workflow within the Quick conversation.

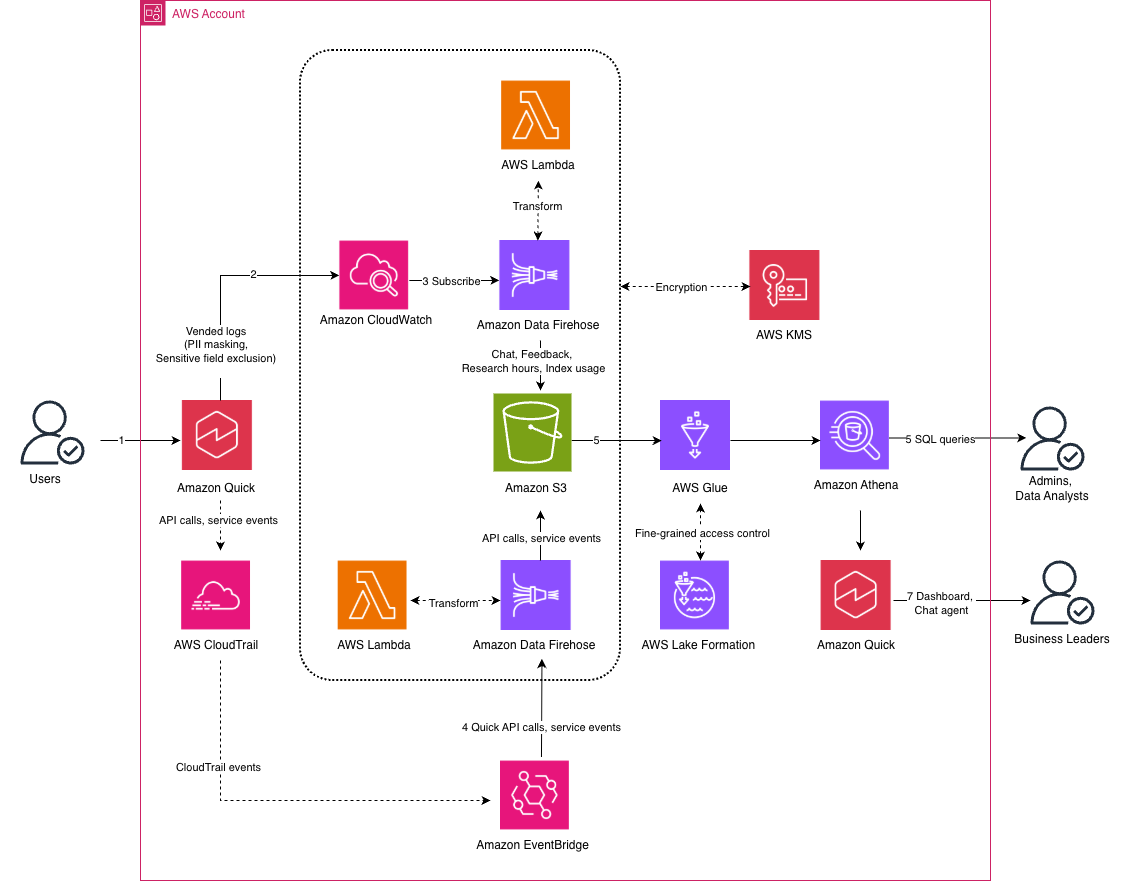

Amazon Quick offers a centralized observability solution for enterprise AI platforms, consolidating usage data for better tracking and analysis. By integrating with AWS services, Amazon Quick enables monitoring, analytics, and governance through a secure data lake, Amazon Athena, and Quick Sight dashboard.

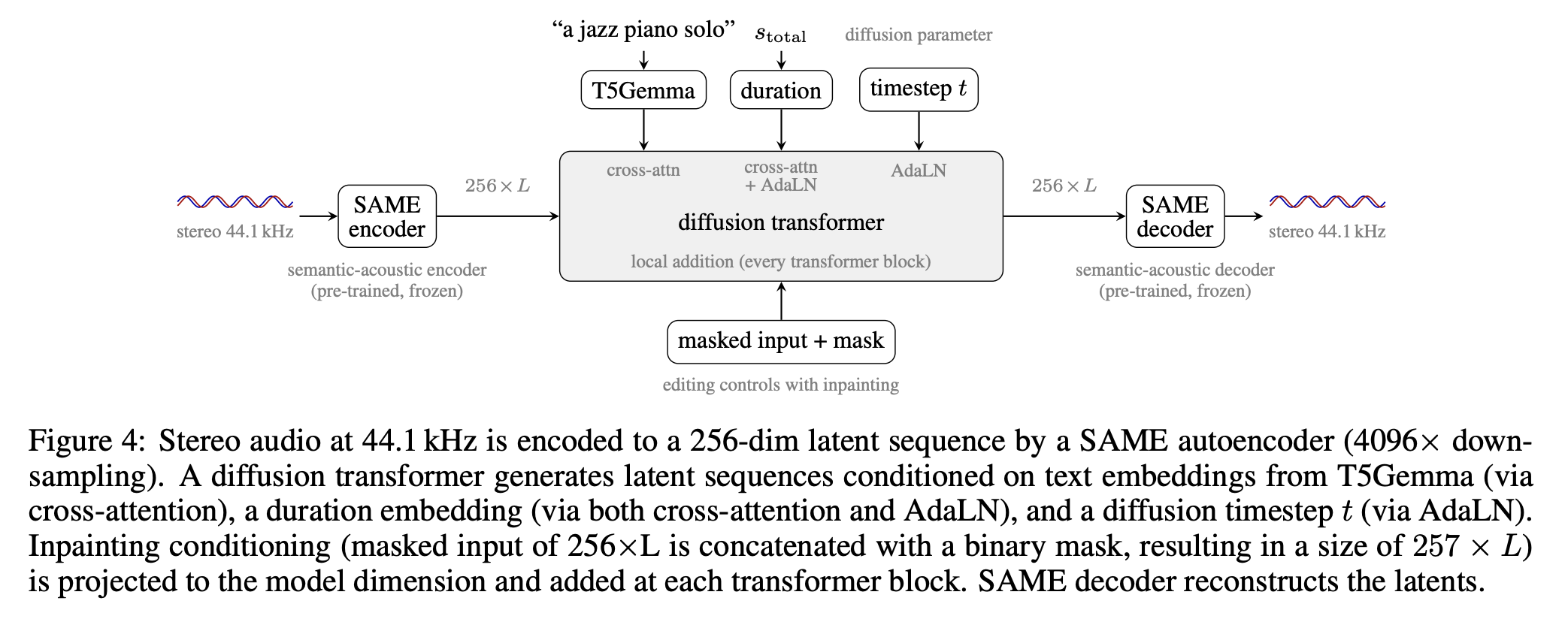

Stability AI unveils Stable Audio 3, featuring latent diffusion models for stereo audio generation. Models vary in size and output length, with open weights available for small and medium scales.

Building AI apps no longer requires complex ML knowledge. Strands Agents and AWS services enable creating intelligent agents with just 30 lines of code, simplifying AI development for AWS environments.

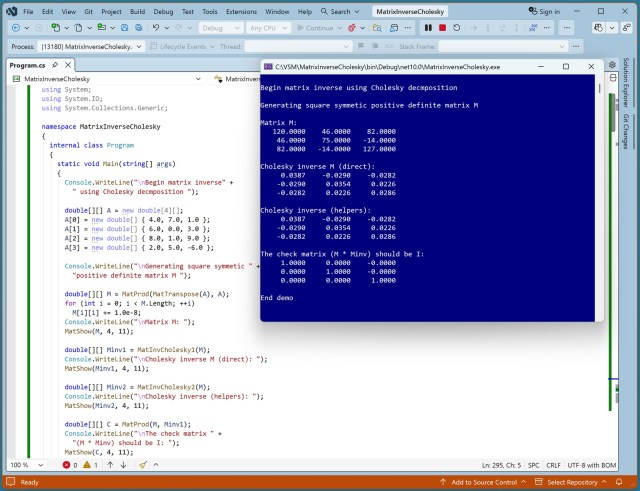

Designing a matrix inverse function using Cholesky decomposition involves a trade-off between shorter code and greater efficiency. Also covered: software engineering insights from AI-generated code, and character design in animated films.
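
For readers unfamiliar with the approach, here is a minimal sketch of inverting a symmetric positive definite matrix via Cholesky decomposition. It is not the code from the post: the function names are hypothetical, and it favors readability over efficiency.

```python
import math
from typing import List

Matrix = List[List[float]]


def cholesky(a: Matrix) -> Matrix:
    """Return lower-triangular L with A = L * L^T (A must be symmetric positive definite)."""
    n = len(a)
    lower = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(lower[i][k] * lower[j][k] for k in range(j))
            if i == j:
                d = a[i][i] - s
                if d <= 0.0:
                    raise ValueError("matrix is not positive definite")
                lower[i][j] = math.sqrt(d)
            else:
                lower[i][j] = (a[i][j] - s) / lower[j][j]
    return lower


def invert_lower(lower: Matrix) -> Matrix:
    """Invert a lower-triangular matrix by forward substitution, column by column."""
    n = len(lower)
    inv = [[0.0] * n for _ in range(n)]
    for col in range(n):
        for i in range(col, n):
            rhs = 1.0 if i == col else 0.0
            s = sum(lower[i][k] * inv[k][col] for k in range(col, i))
            inv[i][col] = (rhs - s) / lower[i][i]
    return inv


def inverse_spd(a: Matrix) -> Matrix:
    """Compute A^-1 = (L^-1)^T * (L^-1)."""
    n = len(a)
    l_inv = invert_lower(cholesky(a))
    # l_inv is lower triangular, so terms with k < max(i, j) are zero.
    return [
        [sum(l_inv[k][i] * l_inv[k][j] for k in range(max(i, j), n)) for j in range(n)]
        for i in range(n)
    ]


a = [[4.0, 2.0], [2.0, 3.0]]
a_inv = inverse_spd(a)
```

Splitting the work into three small functions keeps each step easy to check; a more efficient version would fuse the steps and exploit symmetry, at the cost of longer code.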

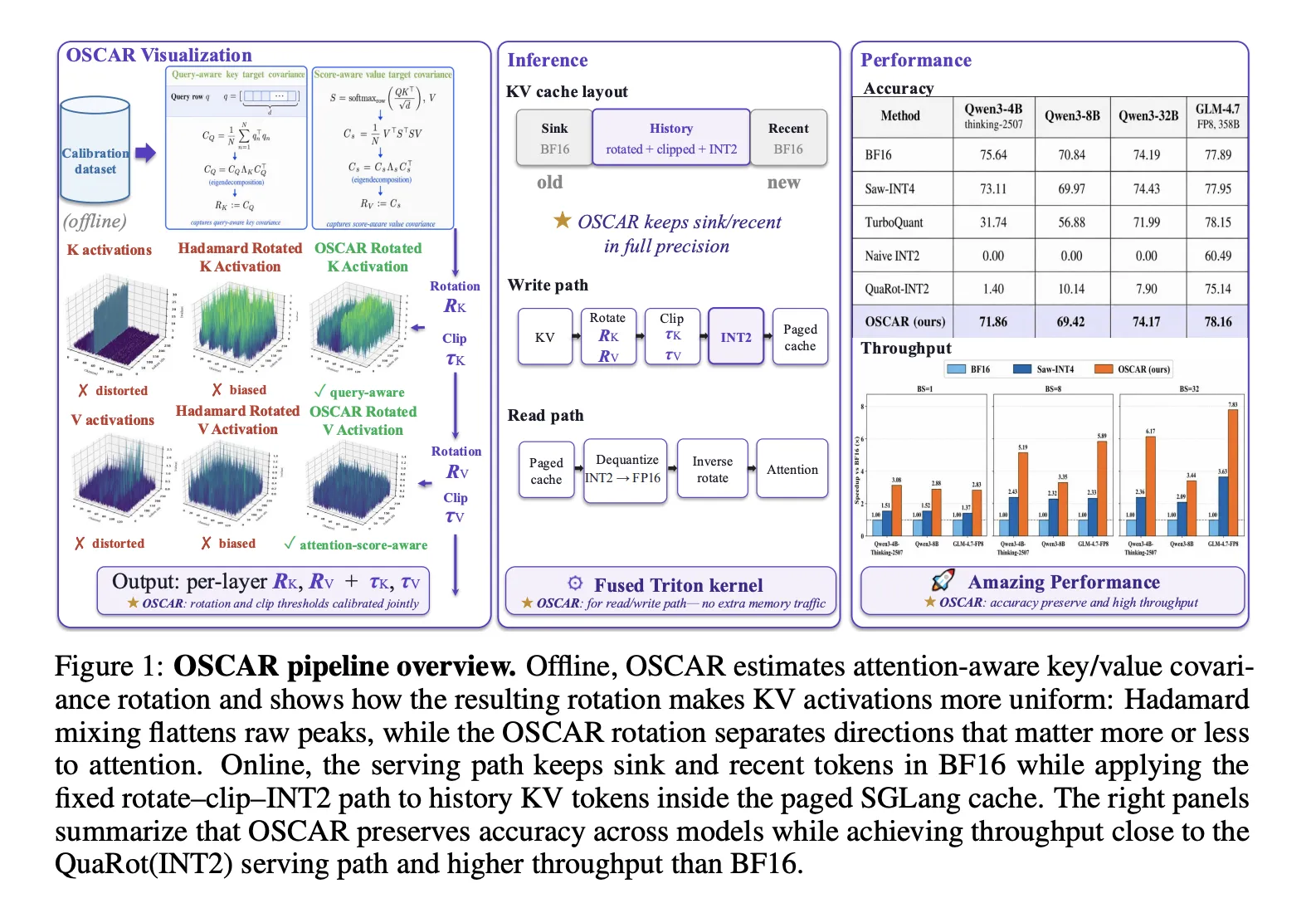

AI's OSCAR addresses the challenges of INT2 KV cache quantization by using attention statistics for rotation. This method improves attention quality and reduces quantization errors, enhancing model performance significantly.
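
Why a rotation can help 2-bit quantization at all can be shown with a toy example. The sketch below is not OSCAR: it uses a fixed 4x4 normalized Hadamard rotation as a stand-in for a rotation derived from attention statistics, and the min-max quantizer, the sample vector, and all names are assumptions.

```python
from typing import List


def quantize_int2(xs: List[float]) -> List[float]:
    """Min-max quantization to 4 levels (2 bits), returned in dequantized form."""
    lo, hi = min(xs), max(xs)
    if hi == lo:
        return list(xs)
    step = (hi - lo) / 3  # 4 levels span the range in 3 steps
    return [lo + round((x - lo) / step) * step for x in xs]


# Normalized 4x4 Hadamard matrix: symmetric and orthogonal, so it is its own inverse.
H4 = [
    [0.5, 0.5, 0.5, 0.5],
    [0.5, -0.5, 0.5, -0.5],
    [0.5, 0.5, -0.5, -0.5],
    [0.5, -0.5, -0.5, 0.5],
]


def rotate(xs: List[float]) -> List[float]:
    return [sum(h * x for h, x in zip(row, xs)) for row in H4]


def sq_error(a: List[float], b: List[float]) -> float:
    return sum((u - v) ** 2 for u, v in zip(a, b))


# Hypothetical vector with one outlier channel that dominates the range.
x = [8.0, 0.5, -0.5, 0.3]

plain_err = sq_error(x, quantize_int2(x))
# Rotate, quantize in the rotated basis, then rotate back before measuring error.
rotated_err = sq_error(x, rotate(quantize_int2(rotate(x))))
```

The rotation spreads the outlier's magnitude across all channels, so the four available levels cover a much narrower range and the small entries are no longer rounded away.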

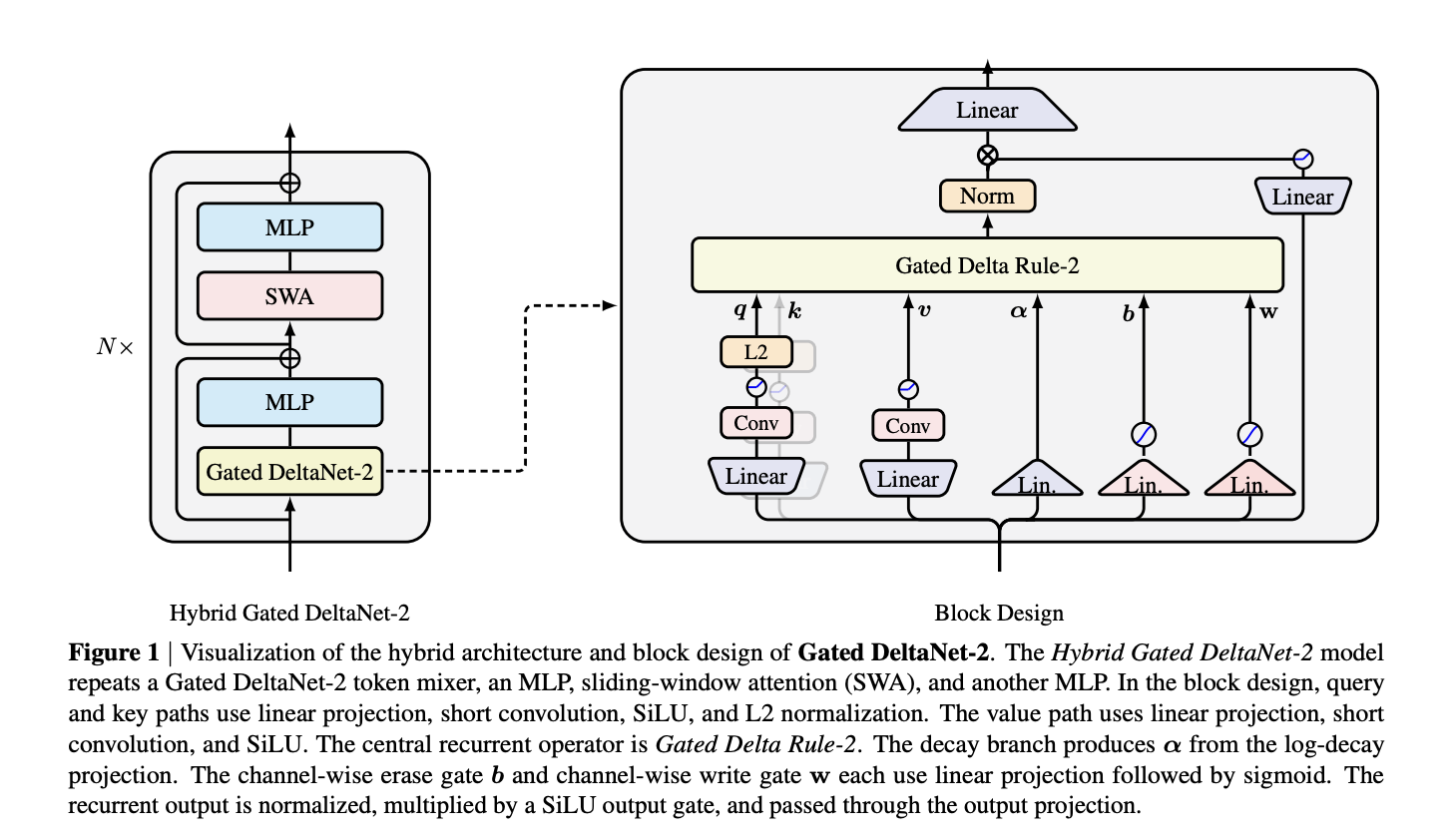

NVIDIA's Gated DeltaNet-2 introduces linear attention with two channel-wise gates, outperforming previous models in memory editing. Gated Delta Rule-2 separates key and value decisions, enhancing the delta-rule model's efficiency.
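
As background on what "memory editing" means here, the sketch below shows the classic, ungated delta rule on a small associative memory. It does not implement Gated DeltaNet-2 or its channel-wise gates; the scalar write strength `beta` and all names are assumptions for illustration.

```python
from typing import List

Matrix = List[List[float]]


def read(state: Matrix, key: List[float]) -> List[float]:
    """Retrieve the value currently associated with key: state @ key."""
    return [sum(s * k for s, k in zip(row, key)) for row in state]


def delta_rule_update(state: Matrix, key: List[float], value: List[float], beta: float) -> Matrix:
    """Classic delta rule: S <- S + beta * (v - S k) k^T.

    The memory is corrected by the error between the new value and what it
    currently returns for this key, so an old association is overwritten
    rather than accumulated.
    """
    predicted = read(state, key)
    return [
        [s + beta * (v - p) * k for s, k in zip(row, key)]
        for row, v, p in zip(state, value, predicted)
    ]


state: Matrix = [[0.0, 0.0], [0.0, 0.0]]
key_a, key_b = [1.0, 0.0], [0.0, 1.0]  # orthonormal keys

state = delta_rule_update(state, key_a, [1.0, 2.0], beta=1.0)
state = delta_rule_update(state, key_b, [7.0, 7.0], beta=1.0)
# Edit the value stored under key_a; key_b's entry should be untouched.
state = delta_rule_update(state, key_a, [5.0, -1.0], beta=1.0)

value_a = read(state, key_a)
value_b = read(state, key_b)
```

With unit-norm keys and `beta = 1.0`, a second write to the same key fully replaces the old value, while a purely additive linear-attention update would have summed the two.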

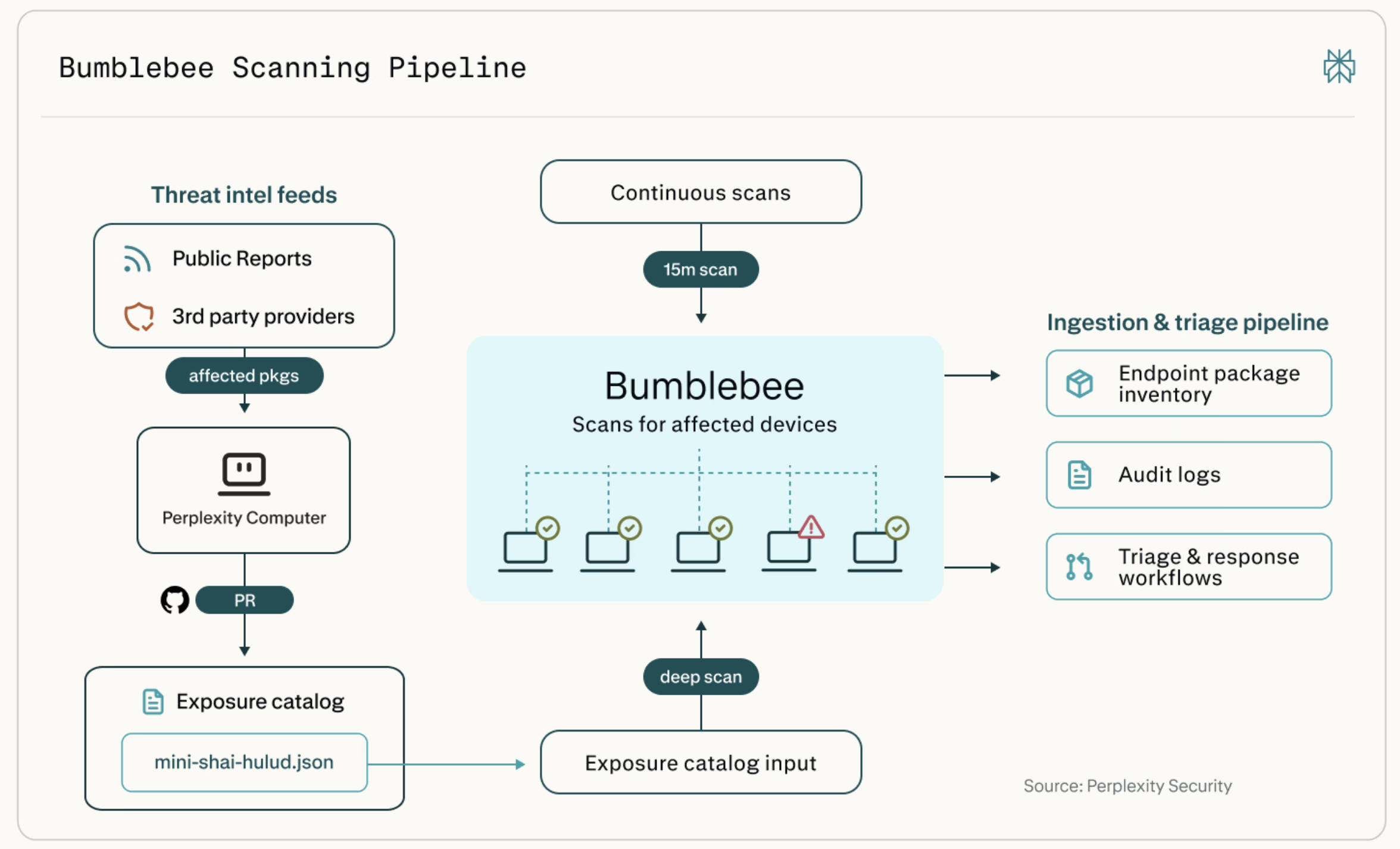

Perplexity's Bumblebee tool scans developer machines for vulnerable packages, extensions, and AI tool configs. It fills a gap in existing tools by checking local developer state for potential security risks.