RAG vs Fine-tuning: how to teach AI about your own data

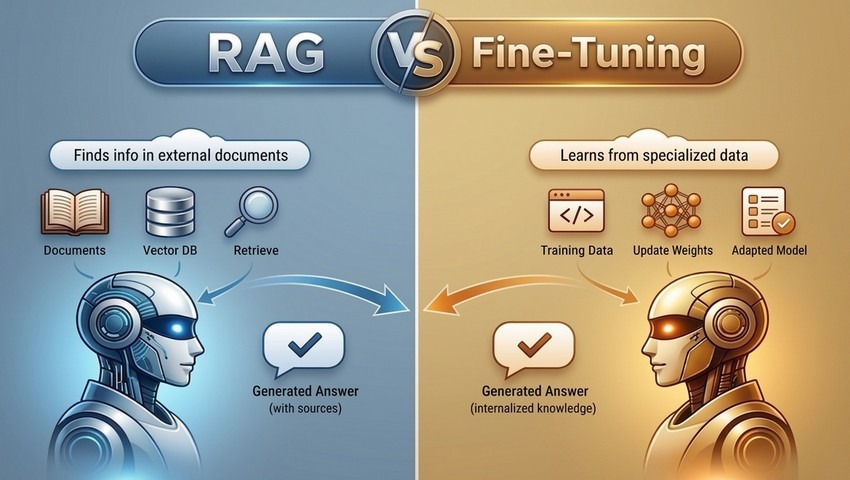

Large language models (LLMs) are trained on public data. A model that has read most of the text and data on the internet still knows nothing about your internal documentation, your product specs, your compliance policies, or the ticket your support team closed last Tuesday. Bridging this gap is the central engineering challenge in AI deployment, and two approaches dominate how practitioners solve it: Retrieval-Augmented Generation (RAG) and fine-tuning.

They’re often discussed as if they’re competing strategies. They’re not, really – they solve different problems. But understanding which problem each one actually solves will save you from building the wrong thing.

The knowledge problem

The gap between what a base model knows and what your organization needs it to know has a few distinct dimensions worth separating.

There’s the content problem: the model simply doesn’t have your data. There’s the recurrency problem: even if you somehow got your data into the model, it changes – documentation is revised, products are updated, policies shift. And there’s the behavior problem: even with the right knowledge, the model may reason about it wrong, use the wrong terminology, or format its output in ways that don’t fit your workflows.

RAG addresses the content and recurrency problems. Fine-tuning addresses the behavior problem. Conflating them leads to architectures that solve the wrong thing.

Before reaching for either, though, it’s worth asking whether the problem can be solved more simply. A surprising amount of "knowledge" can be delivered through a well-structured system prompt – role definition, behavioral constraints, a handful of worked examples, key facts that never change. Prompt engineering is underrated as a first pass, partly because it’s cheap and immediate, and partly because the exercise of writing it forces clarity about what the model actually needs to know versus how it needs to behave. The point where a system prompt starts to feel unwieldy – too long, too brittle, collapsing under the weight of edge cases – is usually the point where RAG or fine-tuning becomes genuinely warranted. Context windows have grown large enough that some teams are simply stuffing entire knowledge bases into prompts; this works until it doesn’t, and understanding why it stops working (latency, cost, attention degradation over very long contexts) is useful framing for the architectural decisions that follow.

How RAG works

RAG keeps the base model’s weights frozen and instead gives it access to a document retrieval system at inference time. When a query arrives, a retriever searches a vector database for relevant chunks, those chunks are injected into the prompt, and the model generates a response grounded in that context.

The core infrastructure is straightforward: a data ingestion pipeline, an embedding model, a vector store, a retrieval layer, and the LLM itself. In practice this usually means LangChain or LlamaIndex for orchestration. LangChain’s RetrievalQA chain wires retrieval and generation together in a few lines; LlamaIndex’s VectorStoreIndex handles chunking, embedding, and querying against your documents with sensible defaults. For vector storage, the choice depends on where you are in the project lifecycle. Chroma and Qdrant are popular for local development and early prototypes – no account required, trivial to set up, and both integrate directly with LangChain and LlamaIndex. If your team already runs PostgreSQL, the pgvector extension adds vector search without introducing a new piece of infrastructure. For production at scale, Pinecone and Weaviate are the most established hosted options. Weaviate is worth knowing specifically if you need hybrid search, combining vector similarity with keyword filtering, which matters more than you’d expect with real enterprise data. At the extreme end – billions of vectors, sub-millisecond latency requirements – FAISS, Meta’s open-source library, is the standard choice.

The practical advantages are significant. Adding new documents requires no retraining – you index them and they’re immediately available. Retrieved sources can be surfaced alongside answers, which matters enormously when users need to verify claims or trace decisions back to authoritative documents. For rapidly evolving knowledge bases, RAG is almost always the right default.

The limitations are real too. Retrieval can miss. Context windows constrain how much retrieved content you can actually use. Performance is sensitive to chunking strategy and index quality – problems that look like model failures often turn out to be retrieval failures. These are engineering problems, but they’re non-trivial ones.

How fine-tuning works

Fine-tuning modifies the model itself. You take a pre-trained model – Llama 3, Mistral, DeepSeek, or a hosted model like Claude or GPT-5.4, that exposes a fine-tuning API – and continue training it on domain-specific examples. The weights update to reflect the patterns in your data: terminology, reasoning style, output format, domain conventions.

For open-weight models, the practical tooling has improved considerably. Hugging Face’s trl library with its SFTTrainer handles supervised fine-tuning with minimal boilerplate. Parameter-efficient methods like LoRA and its quantized variant QLoRA – both implemented in the peft library – let you fine-tune large models on modest hardware by updating only a small fraction of weights. With QLoRA in particular, the base model is compressed to 4-bit precision before training, which reduces memory requirements dramatically: a Llama 3 70B model that would otherwise require a multi-GPU cluster can be adapted on a single A100 node. For teams that don’t want to manage training infrastructure at all, OpenAI and Anthropic both expose fine-tuning APIs for their hosted models – you supply a dataset, they handle the compute, and you get back a model identifier you call like any other.

This is the right tool when what you need isn’t access to documents but internalized expertise. A model fine-tuned on clinical notes doesn’t just retrieve medical terminology – it reasons like someone who has read a lot of medical text. A model fine-tuned on legal briefs structures its arguments differently. These behavioral changes don’t come from retrieval; they come from training.

The costs are real. Compute for training large models is expensive even with LoRA. Dataset curation is hard – poor training data degrades performance in ways that are difficult to diagnose. Updating knowledge means retraining, or at minimum additional fine-tuning runs. And fine-tuned models still hallucinate, because they generate from learned weights, not from verified sources.

When to use which

| RAG | Fine-tuning | Hybrid | |

|---|---|---|---|

| Primary problem solved | Access to current, specific information | Consistent behavior and domain reasoning | Both |

| Knowledge updates | Add documents, no retraining | Requires retraining | Documents update freely; behavior stays trained |

| Upfront cost | Low-medium (infra, indexing) | High (compute, dataset curation) | High |

| Inference cost/latency | Higher (retrieval + large model) | Lower (smaller tuned model possible) | Higher |

| Answer traceability | Strong – sources are retrievable | Weak – generated from weights | Strong |

| Hallucination risk | Lower (grounded in retrieved text) | Higher (relies on internalized patterns) | Lowest |

| Best for | Knowledge bases, support, compliance, search | Specialized reasoning, tone, structured output | High-stakes production systems |

| Main failure mode | Retrieval misses; index quality | Dataset quality; knowledge staleness | Operational complexity |

Use RAG when your application is primarily about accessing current, specific information: knowledge base assistants, documentation search, compliance research, support tooling. The defining characteristic is that correct answers come from documents that exist somewhere in your organization.

Use fine-tuning when the bottleneck is behavior rather than knowledge access: consistent output formatting, domain-specific reasoning patterns, specialized terminology, task-specific performance on structured outputs. The defining characteristic is that the model needs to think differently, not just know more.

There’s a cost dimension here that’s easy to overlook at the design stage and hard to ignore in production. A RAG pipeline typically involves an embedding call, a vector search, and then a generation call against a large hosted model – that’s meaningful latency and per-query cost that compounds quickly at scale. A fine-tuned smaller model – a 7B or 13B parameter Mistral or Llama variant, for instance – can serve high-volume use cases at a fraction of the cost and with substantially lower tail latency (the worst-case response times that matter most in user-facing applications). If your task is well-defined and your training data is good, a fine-tuned small model will often outperform a much larger general model with retrieval, and do it faster and cheaper. The tradeoff is everything described above: brittleness to knowledge updates, dataset maintenance overhead, and the absence of source attribution.

In practice, many mature systems use both. A fine-tuned model brings domain expertise; a RAG pipeline keeps it current. The retriever finds relevant context; the model knows what to do with it. A common pattern is to use LangChain’s agent abstractions to route queries – sending factual lookups to the retrieval chain and open-ended reasoning tasks to the fine-tuned model. This hybrid approach is more complex to build and operate, but for high-stakes applications the tradeoff is usually worth it.

Knowing whether it’s working

This is the part most teams skip, and it tends to catch up with them. Building a RAG pipeline or fine-tuning a model is tractable; knowing whether it’s actually performing well in production is harder and less glamorous.

For RAG systems, Retrieval-Augmented Generation Assessment (RAGAS) has become a practical standard. It is a framework designed to assess the quality of RAG pipelines without requiring extensive manual annotation. RAGAS evaluates performance across four axes:

- faithfulness (does the response actually reflect the retrieved content, or is the model inventing things?),

- answer relevance (does the response address the question that was actually asked?);

- context precision (are the retrieved chunks relevant, or is there noise?);

- context recall (did the retriever find all the important passages, or did it miss something?).

Running RAGAS against a representative set of queries before shipping gives you a baseline and a vocabulary for diagnosing failures. Without it, you’re mostly guessing whether degraded outputs are retrieval failures, generation failures, or chunking failures.

For fine-tuned models, the emerging practice is LLM-as-judge: using a capable frontier model to evaluate outputs from your fine-tuned model against a rubric. This is imperfect and introduces its own biases, but it scales in ways that human evaluation doesn’t, and it’s fast enough to use in CI pipelines. The combination of task-specific test sets, automated LLM-based scoring, and periodic human review represents roughly the current state of the art for teams doing this seriously.

Both approaches benefit from logging and tracing infrastructure from the start. Tools like LangSmith (LangChain’s observability layer) or Arize make it possible to inspect individual traces, identify patterns in failures, and catch regressions before users do. Instrumentation is cheap to add early and expensive to retrofit later.

Getting started: frameworks worth knowing

The ecosystem moves fast and has a lot of noise. Here’s a practical map of what’s actually worth learning, roughly in the order you’d encounter it.

For RAG pipelines, start with LlamaIndex. It’s designed specifically for connecting LLMs to external data, and its abstractions match how RAG actually works – documents, indexes, queries. You can have a basic pipeline running against your own files in an afternoon. LangChain covers similar ground and is more widely used in production, but its generality makes it harder to learn from: it can do many things, which means there are many ways to do any one thing. Once you understand what a RAG pipeline is actually doing, LangChain’s flexibility becomes an asset. Start with LlamaIndex to learn the concepts, move to LangChain when you need more control or are building something agent-like.

For vector storage, Chroma is the right starting point. It runs locally, requires no account or API key, and integrates with both LlamaIndex and LangChain in a few lines. When you’re ready for production, Pinecone is the most straightforward hosted option – managed infrastructure, good documentation, and SDKs that mostly stay out of your way. Weaviate is worth knowing about if you need hybrid search, which turns out to matter more than you’d expect with real enterprise data.

For fine-tuning, Hugging Face is the ecosystem. The transformers library is where most open-weight models live; trl provides the SFTTrainer class that handles the training loop for supervised fine-tuning; and peft gives you LoRA and QLoRA, which is how you fine-tune large models without a rack of GPUs. These three libraries are designed to work together and the Hugging Face documentation is genuinely good. If managing training infrastructure sounds like too much too soon, both OpenAI and Anthropic offer fine-tuning through their APIs – you upload a dataset, they handle the rest, and you get back a model ID you can call like any other.

For evaluation, add RAGAS early. It’s tempting to defer evaluation until something feels broken, but retrofitting it is painful. For general output quality – especially for fine-tuned models – look at promptfoo, a lightweight open-source tool for running test suites against LLM outputs that’s easy enough to run in a CI pipeline.

For observability, LangSmith is the path of least resistance if you’re already using LangChain. It traces every step of your pipeline – retrieval, prompt construction, generation – and makes it possible to inspect failures rather than guess at them. Arize and Weights & Biases are worth knowing about as you scale, but LangSmith is sufficient for most teams getting started.

A reasonable learning sequence:

- get a local RAG pipeline working with LlamaIndex and Chroma;

- add RAGAS to understand where it fails;

- try fine-tuning a small open-weight model with Hugging Face’s tooling to see what changes.

By the time you’ve done both, you’ll have enough intuition to make real architectural decisions.

A note on transparency

RAG has a non-obvious advantage that’s easy to undervalue: it’s auditable. When the system retrieves a passage from your documentation and uses it to generate an answer, you can show that passage to the user. You can log it, review it, trace it. Fine-tuned models generate from internalized patterns that can’t be inspected in the same way. For regulated industries or any context where answer provenance matters, this difference is meaningful.

The right architecture depends on what you’re building, what changes, and what needs to be trusted. Usually the answer involves both techniques – but understanding them separately, including where simpler approaches like prompt engineering are sufficient, is the prerequisite to combining them well.

QuData development team