Human or AI? Scientists have created a detector tool to identify the author of text

The rapid development of artificial intelligence, and especially OpenAI's launch of ChatGPT with its remarkably fluent and logical answers and dialogues, has stirred the public consciousness and raised a new wave of interest in large language models (LLMs). It has become clear that their capabilities are greater than we had imagined. The headlines reflected both excitement and concern: Can robots write a cover letter? Can they help students take tests? Will bots influence voters through social media? Can they create new designs in place of artists? Will they put writers out of work?

After the spectacular release of ChatGPT, there is now talk of similar models from Google, Meta, and other companies. Computer scientists are calling for greater scrutiny. They believe that society needs a new level of infrastructure and tools to safeguard the use of these models, and they have focused on developing such infrastructure.

One of these key safeguards could be a tool that can provide teachers, journalists and citizens with the ability to distinguish between LLM-generated texts and human-written texts.

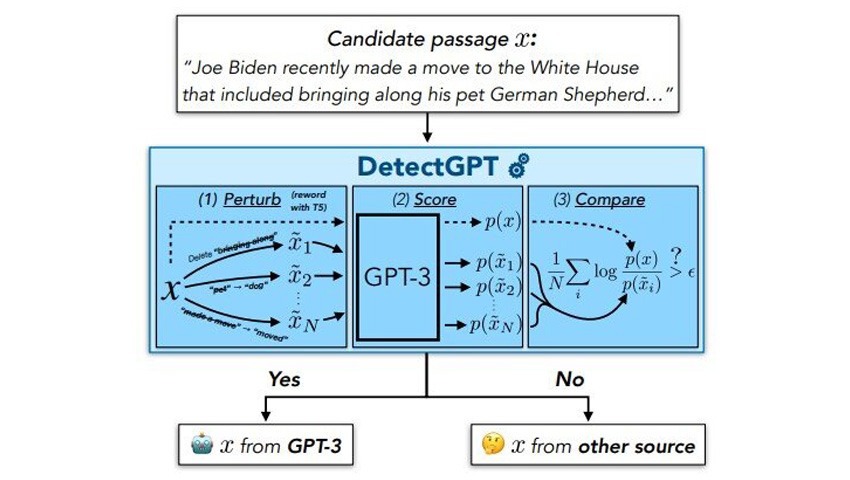

To this end, Eric Anthony Mitchell, a fourth-year computer science graduate student at Stanford University, developed DetectGPT together with his colleagues as part of his PhD work. It has been released as a demo and a paper, and it distinguishes LLM-generated text from human-written text. In initial experiments, the tool correctly determined authorship 95% of the time across five popular open source LLMs. The tool is in its early stages of development, but Mitchell and his colleagues are working to ensure that it will be of great benefit to society in the future.

Two general approaches to identifying the authorship of texts had been researched previously. The first, used by OpenAI itself, involves training a model on texts of two kinds: some generated by LLMs and others written by humans. The model is then asked to identify the authorship of a new text. But, according to Mitchell, this method would require a huge amount of training data to succeed across subject areas and in different languages.

The second approach avoids training a new model and simply uses the LLM itself to detect its own output after the text is fed into the model.

Essentially, the technique is to ask the LLM how much it "likes" the text sample, says Mitchell. By "like" he doesn't mean that the model is sentient and has its own preferences. Rather, if the model "likes" a piece of text, this means that the model gives the text a high rating. Mitchell suggests that if a model likes a text, then the text was likely generated by it or by similar models. If it doesn't like the text, then the text was most likely not created by an LLM. According to Mitchell, this approach works much better than random guessing.
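To make the idea of "liking" concrete, one common way to quantify it is the average log-probability a model assigns to the words of a text. The following is a minimal sketch under stated assumptions: a toy word-frequency model stands in for a real LLM, and the names `train_unigram` and `likes` are hypothetical, not taken from DetectGPT.

```python
import math
from collections import Counter

def train_unigram(corpus: str):
    """Toy stand-in for an LLM (an assumption): word frequencies with add-one smoothing."""
    counts = Counter(corpus.lower().split())
    total = sum(counts.values())
    vocab = len(counts) + 1  # one extra slot reserved for unseen words

    def log_prob(word: str) -> float:
        # Counter returns 0 for words that never appeared in the corpus
        return math.log((counts[word.lower()] + 1) / (total + vocab))

    return log_prob

def likes(text: str, log_prob) -> float:
    """Average per-word log-probability: higher means the model 'likes' the text more."""
    words = text.split()
    return sum(log_prob(w) for w in words) / len(words)

corpus = "the cat sat on the mat and the dog sat on the rug"
model = train_unigram(corpus)

familiar = likes("the cat sat on the mat", model)
unfamiliar = likes("quantum zebras juggle turquoise violins", model)
print(f"familiar: {familiar:.3f}, unfamiliar: {unfamiliar:.3f}")
```

With a real LLM the score would come from the model's own token probabilities rather than from word counts, but the comparison works the same way: text resembling what the model would produce scores higher.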

Mitchell hypothesized that even the most powerful LLMs have subtle, arbitrary biases toward one phrasing of an idea over another. As a result, the model will be less inclined to "like" any slight paraphrase of its own output than the original. By contrast, if you perturb human-written text, the model is about equally likely to like the result more or less than the original.

Mitchell also realized that this theory could be tested with popular open source models, including those available through OpenAI's API. After all, calculating how much the model likes a particular piece of text is essentially how these models are trained, so the score is readily available from the model itself.

To test their hypothesis, Mitchell and his colleagues conducted experiments in which they observed how much different publicly available LLMs liked human-written text as well as their own LLM-generated text. The selection of texts included fake news articles, creative writing, and academic essays. For each text, whether LLM-generated or human-written, the researchers also measured how much the LLM liked, on average, 100 perturbed versions of it. The team then plotted the difference between these two numbers, the score of the original and the average score of its perturbations, separately for LLM texts and for human-written texts. They saw two bell curves that barely overlapped. The researchers concluded that this single value distinguishes the source of a text very well, and far more reliably than methods that simply measure how much the model likes the original text.
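The measurement described above can be sketched in a few lines. This is an illustration under loud assumptions, not the team's implementation: a toy word-frequency model stands in for the LLM, random word substitution stands in for whatever rewriting method the researchers used, and all function names are hypothetical. A word-frequency model cannot reproduce the reported detection quality; the sketch only shows the mechanics of comparing a text's score with the average score of 100 perturbed copies.

```python
import math
import random
from collections import Counter

def train_unigram(corpus):
    """Toy stand-in for an LLM (an assumption): smoothed word log-probabilities."""
    counts = Counter(corpus.split())
    denom = sum(counts.values()) + len(counts) + 1
    table = {w: math.log((c + 1) / denom) for w, c in counts.items()}
    return table, math.log(1 / denom)  # second value scores unseen words

def avg_log_prob(words, model):
    """How much the model 'likes' a word sequence: mean per-word log-probability."""
    table, unseen = model
    return sum(table.get(w, unseen) for w in words) / len(words)

def perturb(words, pool, rng, rate=0.3):
    """Simplified perturbation (an assumption): swap each word for a random one with probability `rate`."""
    return [rng.choice(pool) if rng.random() < rate else w for w in words]

def discrepancy(text, model, n_perturbations=100, seed=0):
    """Score of the original text minus the mean score of its perturbed copies."""
    rng = random.Random(seed)  # seeded so repeated runs give the same value
    words = text.split()
    pool = sorted(model[0])    # replacement words come from the model's vocabulary
    original = avg_log_prob(words, model)
    perturbed = [avg_log_prob(perturb(words, pool, rng), model)
                 for _ in range(n_perturbations)]
    return original - sum(perturbed) / n_perturbations

corpus = ("the model writes the text and the model reads the text "
          "while the reader checks the text")
model = train_unigram(corpus)

# Text built from the model's favorite words versus text with typical word choices
machine_like = discrepancy("the text the model the text the model", model)
human_like = discrepancy("the reader checks text while model reads and", model)
print(f"machine-like: {machine_like:.3f}, human-like: {human_like:.3f}")
```

In this toy setup the text built from the model's preferred words loses score when perturbed, so its discrepancy is clearly positive, while the other text stays close to zero. A detector would then place a threshold on this single value.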

In the team's initial experiments, DetectGPT correctly distinguished human-written text from LLM-generated text 95% of the time when using GPT-NeoX, a powerful open source alternative to OpenAI's GPT models. DetectGPT was also able to tell human-written text from LLM-generated text when scoring with an LLM other than the original source model, but with slightly lower accuracy. At the time of the initial experiments, ChatGPT was not yet available for direct testing.

Other companies and teams are also looking for ways to identify text written by AI. For example, OpenAI has already released its new text classifier. However, Mitchell does not want to directly compare OpenAI's results with those of DetectGPT, as there is no standardized dataset on which to evaluate them. But his team did run some experiments using the previous generation of OpenAI's pre-trained AI detector and found that it performed well on news articles in English, performed poorly on medical articles, and failed completely on news articles in German. According to Mitchell, such mixed results are typical for models that depend on pre-training. In contrast, DetectGPT worked satisfactorily for all three of these text categories.

Feedback from users of DetectGPT has already helped identify some vulnerabilities. For example, a person might deliberately prompt ChatGPT to evade detection, such as by asking the LLM to write text like a human. Mitchell's team already has a few ideas on how to mitigate this weakness, but they haven't been tested yet.

Another problem is that students using LLMs, such as ChatGPT, to cheat on assignments may simply edit the AI-generated text to avoid detection. Mitchell and his team investigated this possibility in their work and found that while detection quality decreased for edited essays, the system still did a fairly good job of identifying machine-generated text when fewer than 10-15% of the words had been changed.

In the long term, the goal of DetectGPT is to provide the public with a reliable and efficient tool for predicting whether a text, or even part of it, was machine-generated. Even if the model doesn't judge an entire essay or news article to be machine-written, there is a need for a tool that can highlight a paragraph or sentence that looks particularly machine-generated.

It is worth emphasizing that, according to Mitchell, there are many legitimate uses for an LLM in education, journalism, and other areas. However, providing the public with tools to verify the source of information has always been beneficial and remains so even in the age of AI.

DetectGPT is just one of several LLM-related projects that Mitchell is working on. Last year, he also published several approaches to editing LLMs, as well as a strategy called "self-destructing models" that disables an LLM when someone tries to use it for nefarious purposes.

Mitchell hopes to refine each of these strategies at least one more time before completing his PhD.

The study is published on the arXiv preprint server.