Muse Spark, the first AI model from Meta Superintelligence Labs, can see, reason, and solve complex problems using multiple agents in parallel. With Contemplating mode and built-in capabilities for health, coding, and social insights, the model marks a major step toward personal superintelligence.

Yann LeCun is taking a bold step with his new startup AMI, working to create “world models” that understand the physical world, reason about causality, and develop true common sense. This approach directly challenges today’s dominant paradigm, suggesting that scaling LLMs alone may never achieve human-level intelligence.

Researchers have discovered a simple mathematical way to “steer” AI models by directly manipulating internal concept vectors – improving performance while revealing hidden risks. AI behavior can now be controlled more precisely than ever, but the technique also raises concerns about how easily safeguards can be bypassed.

Meta AI’s DINOv3 is a self-supervised vision model trained on 1.7 billion images, setting new standards in image classification, object detection, and beyond. With innovations like Gram anchoring and real-world impact from monitoring deforestation to powering NASA’s Mars exploration, it marks a paradigm shift in computer vision.

Meta has unveiled Movie Gen, an AI-powered tool that creates high-definition videos with synchronized sound from simple text prompts. The model provides advanced video creation and editing features, offering users enhanced control over content generation.

Llama 3, Meta AI's latest advancement, boasts unmatched language understanding, enhancing its capacity for complex tasks. With expanded vocabulary and advanced safety features, the model ensures improved performance and versatility.

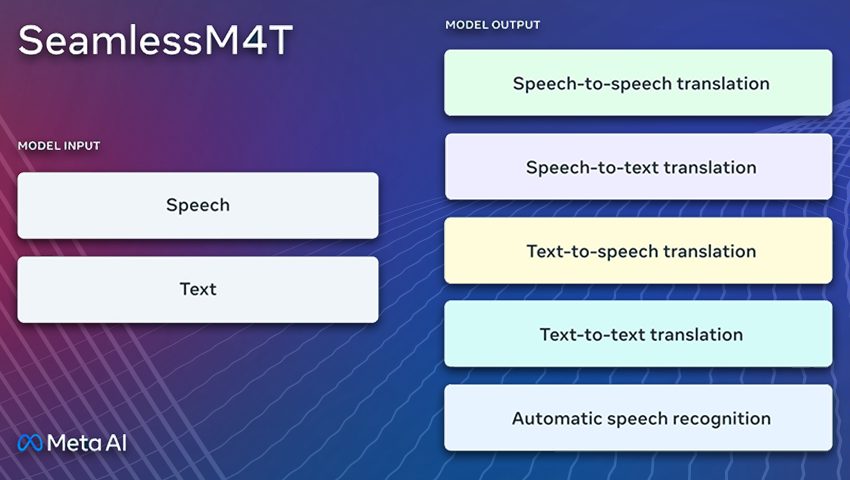

SeamlessM4T breaks down language barriers with its comprehensive translation and transcription capabilities. This AI model can easily convert speech or text, enabling real-time translation and fostering cross-cultural understanding.