MIT researchers have developed a new framework that reduces image generation to a single step. The team applied it to existing models such as Stable Diffusion, demonstrating that it can quickly create high-quality visual content.

Elon Musk's xAI Corp introduces Grok-1, a new LLM equipped with 314 billion parameters and a Mixture-of-Experts architecture. Released as open source under the Apache 2.0 license, Grok-1 is set to catalyze advancements in AI research.

Stability AI has presented Stable Diffusion 3, its latest generative image model. An expanded range of parameter sizes and a diffusion transformer architecture support smooth generation of complex, high-quality images and accurate text-to-image translation.

OpenAI's latest creation, Sora, crafts captivating videos with highly realistic visual compositions. By combining language understanding with video generation, the model can interpret text prompts, accommodate various input modalities, and simulate dynamic camera motion.

Drawing inspiration from its predecessor Gemini, Gemma is focused on openness and accessibility, offering versatile models suitable for various devices and frameworks. The model marks a significant step towards democratizing AI while emphasizing its responsible development and transparency.

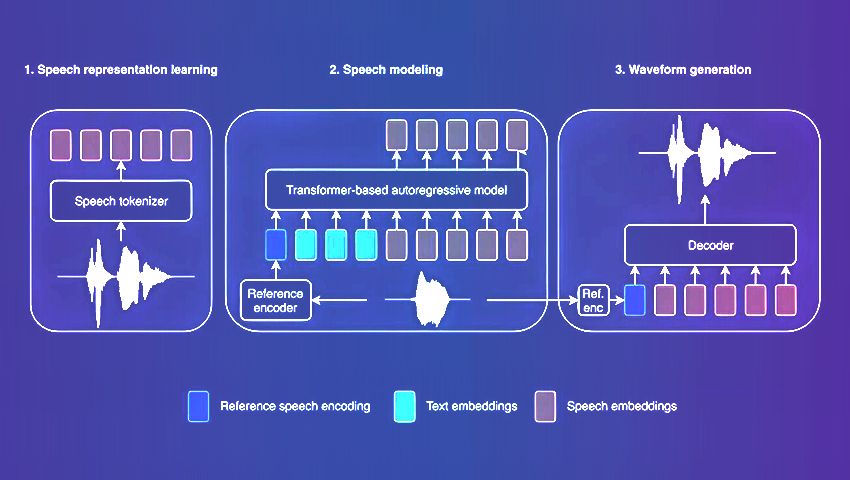

BASE TTS, Amazon's latest text-to-speech (TTS) model, sets a new benchmark for speech synthesis with its innovative architecture. It not only achieves highly natural speech but also adapts remarkably well to diverse language attributes and nuances.

MPT-7B offers an optimized architecture and performance enhancements, including compatibility with the HuggingFace ecosystem. The model was trained on 1 trillion tokens of text and code and sets a new standard for commercially usable LLMs.

Deep active learning blends conventional neural network training with strategic data sample selection. This innovative approach results in enhanced model performance, efficiency, and accuracy across a wide array of applications.
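
The sample selection step can be illustrated with a minimal sketch of uncertainty sampling, one common active learning strategy. It is not necessarily the approach used in the work described above. The function names and the example probabilities are hypothetical and chosen only for illustration: the model's predicted class probabilities for each unlabeled sample are scored by entropy, and the most uncertain samples are sent for labeling before the next round of training.

```python
import math

def entropy(probs):
    """Shannon entropy of one predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def select_most_uncertain(pool_probs, k):
    """Return indices of the k unlabeled samples with the highest predictive entropy."""
    ranked = sorted(
        range(len(pool_probs)),
        key=lambda i: entropy(pool_probs[i]),
        reverse=True,
    )
    return ranked[:k]

# Hypothetical model outputs for four unlabeled samples (three classes each).
pool_probs = [
    [0.98, 0.01, 0.01],  # confident prediction, low entropy
    [0.34, 0.33, 0.33],  # nearly uniform prediction, high entropy
    [0.70, 0.20, 0.10],
    [0.50, 0.49, 0.01],
]

# Indices of the two samples to send to a human annotator next.
query = select_most_uncertain(pool_probs, k=2)
print(query)
```

In a full training loop, the selected samples would be labeled, added to the training set, and the network retrained before the next selection round.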

The integration of high-throughput computational screening and ML algorithms empowers scientists to surpass traditional limitations, enabling dynamic exploration of materials. This combination has led to the discovery of new materials with unique properties.

Coscientist, an advanced AI lab partner, autonomously plans and executes chemistry experiments. Showcasing rapid learning, the system is proficient in chemical reasoning, the use of technical documents, and self-correction.

The StableRep model enhances AI training by using synthetic imagery. By generating diverse images from text prompts, it not only eases data collection challenges but also provides more efficient and cost-effective training alternatives.

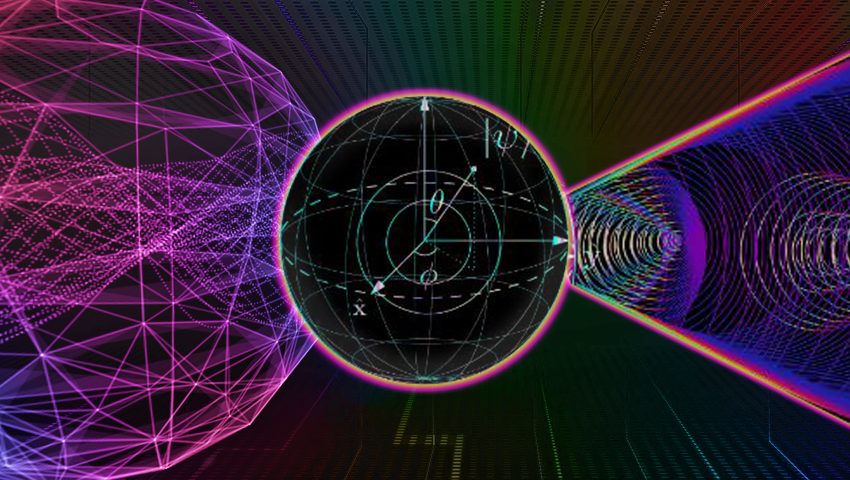

Researchers have joined forces to create a programmable quantum processor based on logical qubits that operates with high fault tolerance. This opens up new prospects for large-scale, reliable quantum computing capable of solving previously intractable problems.

The Turing test, once a groundbreaking measure of machine thinking, is now of limited use because AI can mimic human reactions. A new study introduces a three-step system to determine whether artificial intelligence can reason like a human.

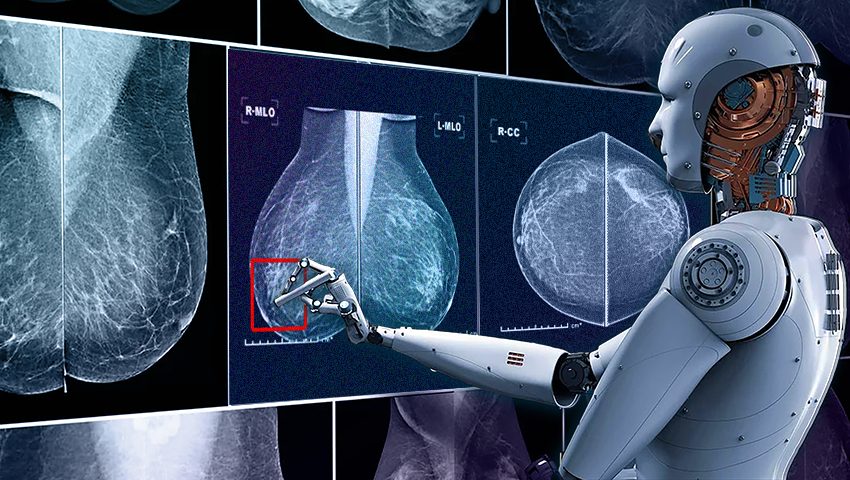

QuData introduces an innovative AI-powered breast cancer diagnostic system. The technology supports early detection and prompt intervention, marking a significant step toward accessible, accurate, and timely treatment with better outcomes.

Gemini AI, a groundbreaking NLP model, is set to surpass existing benchmarks. With its multimodal capabilities, scalability across various domains, and integration potential within Google's ecosystem, Gemini AI represents a significant leap in AI technology.