Step inside Van Gogh’s paintings and explore entire AI-generated worlds! At the World Labs Hackathon, hackers built fully interactive environments in just a few hours, while Google’s Project Genie lets anyone transform simple prompts or images into immersive, navigable AI worlds in real time.

Alibaba’s Qwen3.5 combines multimodal intelligence and advanced reasoning with ultra-efficient compute through MoE sparsity and native vision-language fusion. Spanning compact on-device models to massive flagship versions, this open-weight family brings high-performance AI to everything from smartphones to cloud-scale servers.

APOLLO, a new AI framework, separates shared biological signals across measurement types from those unique to each technique. This unlocks clearer insights into cell states, predicts unmeasured features, spots disease biomarkers more precisely, and could speed up discoveries in cancer, Alzheimer’s, and beyond.

Meta AI’s DINOv3 is a self-supervised vision model trained on 1.7 billion images, setting new standards in image classification, object detection, and beyond. With innovations like Gram anchoring and real-world impact from monitoring deforestation to powering NASA’s Mars exploration, it marks a paradigm shift in computer vision.

The new system enables groups of robots to act as a unified team. The MultiRobot FrameWork lets robots share real-time information about their environment, positions, and tasks, mirroring the collective behavior seen in insect colonies, but powered by advanced sensors and computation.

The new GenSeg framework significantly reduces the need for expert-labeled data and achieves high-accuracy medical image segmentation with as few as 40-50 samples. By creating realistic synthetic scans paired with exact labels, it empowers the development of advanced diagnostic tools even in data-limited settings.

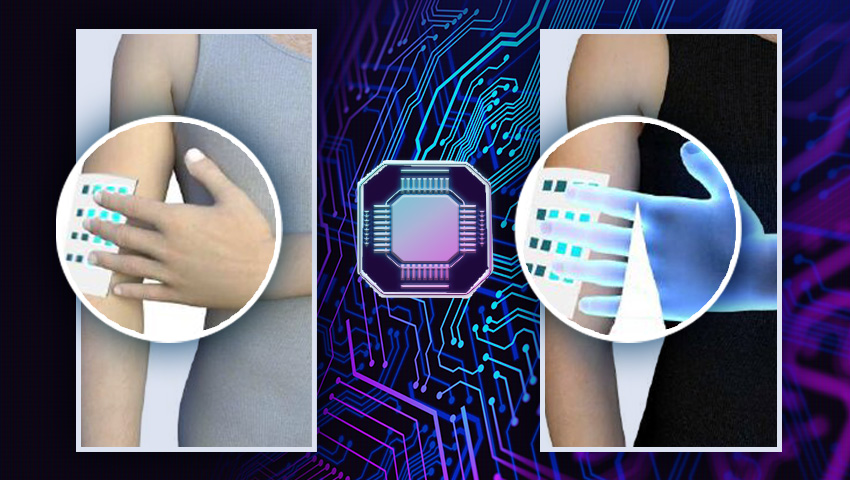

A self-powered artificial synapse can mimic human color vision with 10-nanometer resolution using dye-sensitized solar cells. This technology enables energy-efficient AI systems capable of advanced color recognition and logic processing.

MIT researchers have developed CAV-MAE Sync, an AI model that learns to precisely link sounds with matching visuals in video without any labels. This technology can bring us closer to smarter AI that can see, hear, and understand the world just like humans.

ItpCtrl-AI improves X-ray diagnostics by mimicking radiologists' gaze patterns, providing interpretable heatmaps that enhance transparency and trust in AI-driven medical imaging. By filtering out irrelevant data and focusing on key diagnostic areas, the system ensures more accurate and explainable results.

The Indian Patent Office has granted a patent for an innovative landing system for mini-UAVs. This technology enables precise landings in challenging terrain and has potential applications in both military and civilian logistics, including high-altitude deliveries and emergencies.

A low-cost, innovative accident avoidance system for drones uses onboard sensors and cameras to autonomously prevent mid-air collisions. This technology is crucial for UAV operations, ensuring safety and efficiency in increasingly crowded airspaces.

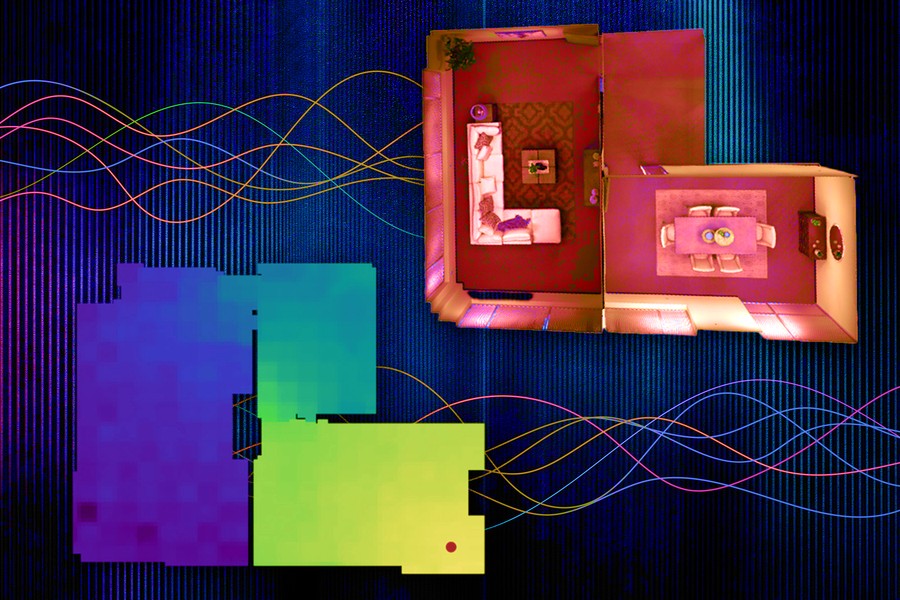

A new computer vision system significantly reduces energy consumption while providing real-time, realistic spatial awareness. It enhances AI systems' ability to accurately perceive 3D space – crucial for technologies like self-driving cars and UAVs.

MAIA can interpret neural networks by conducting experiments and refining its analysis, enhancing understanding of AI models. This agent can identify neuron activities, remove irrelevant features, and detect biases, making AI systems safer and more transparent.

Researchers created insect-inspired autonomous navigation strategies for tiny, lightweight robots. Tested on a 56-gram drone, the system enables it to return home after long journeys using minimal computation and memory.

Nowadays, users can create DEMs with just one click, thanks to radar satellites providing continuous, high-precision data on the Earth's surface and increasingly fast and accessible open-source software. This allows for effective monitoring of terrain changes and natural phenomena.

With the significant rise in UAV usage in recent years, concerns about their safety have also increased. In response, a new system has been developed that uses computer vision and deep learning algorithms to detect and track drones quickly and accurately.

The solar-powered Zephyr drone has set world records for endurance and altitude, staying aloft for 64 days at heights of up to 75,000 feet. With applications ranging from earth observation to mobile phone base stations, Zephyr provides critical connectivity in remote areas.

Researchers from the MIT Computer Science and Artificial Intelligence Laboratory and Google Research have seemingly performed magic with their latest creation: a diffusion model that can alter the material properties of objects in images.

During its Spring Update event, OpenAI presented GPT-4o, an omnimodel that integrates text, audio, and image processing, allowing it to work faster and more efficiently than ever before.

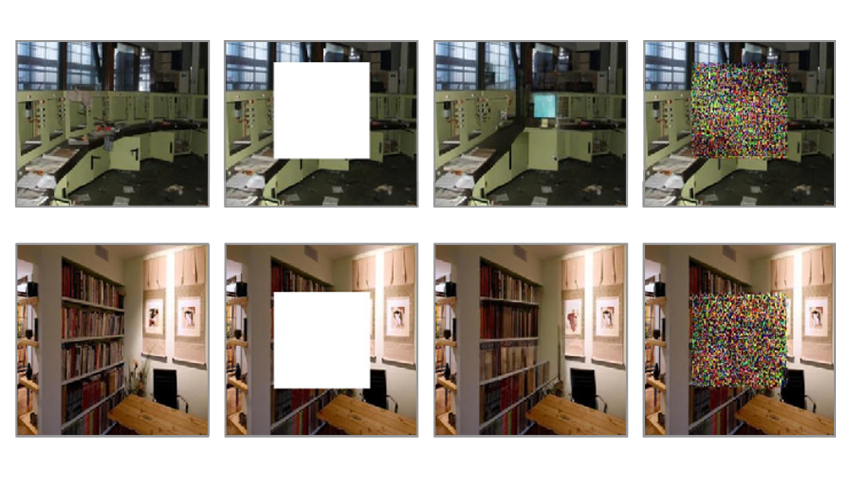

Machine "unlearning" allows generative AI to selectively forget problematic data without extensive retraining. This method can ensure compliance with legal and ethical standards while maintaining the creative capabilities of image-to-image models.

MIT researchers have developed a new framework that simplifies image generation into a single step. The team enhanced existing models like Stable Diffusion, demonstrating the framework's ability to quickly create high-quality visual content.

Stability AI presented the latest advancement in image generative AI models – Stable Diffusion 3. Its expanded parameter range and diffusion transformer architecture ensure smooth generation of complex, high-quality images and accurate text-to-visual translation.

OpenAI's latest creation, Sora, crafts captivating videos with highly realistic visual compositions. Leveraging a fusion of language understanding and video generation, the model can interpret text prompts, accommodate various input modalities, and simulate dynamic camera motion.

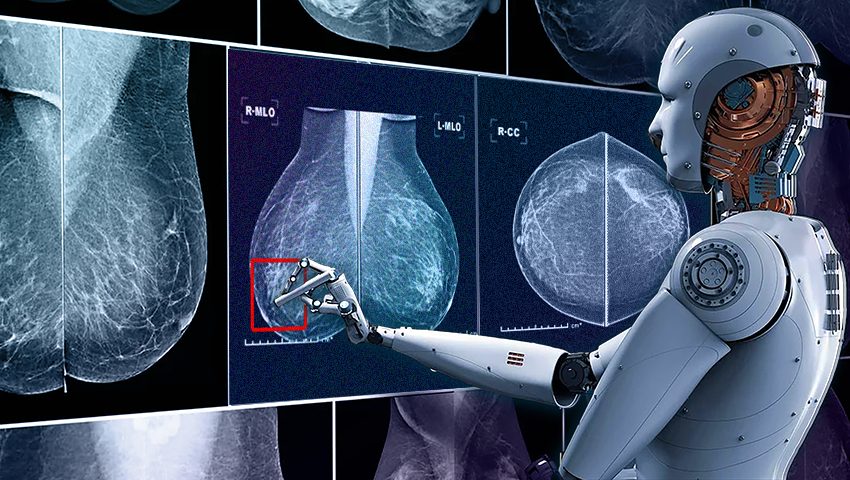

QuData introduces an innovative AI-powered breast cancer diagnostic system. This transformative technology ensures early detection and prompt intervention, marking a significant step forward in accessible, accurate, and timely treatment with better outcomes.

The latest motion estimation method can extract long-term motion trajectories for every pixel in a frame, even in the case of fast movements and complex scenes. Learn more about the exciting technology and the future of motion analysis in this article about OmniMotion.

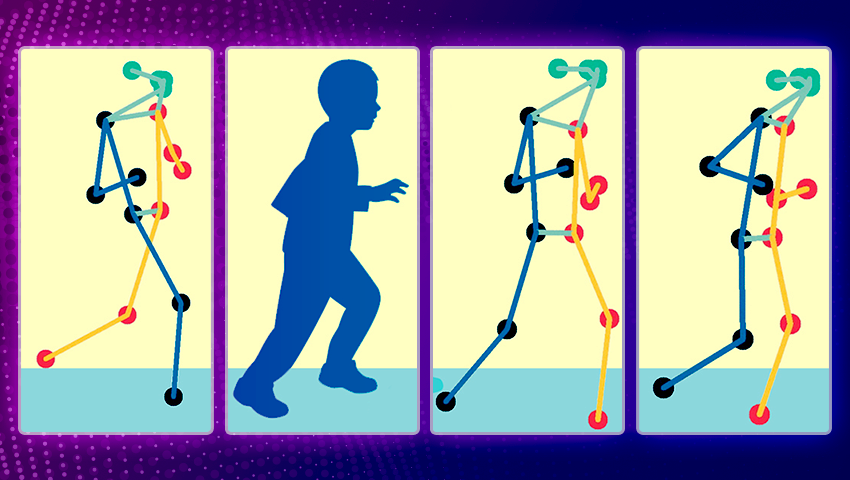

The new technique utilizes real-time video analysis to compute a clinical score of motor function based on specific pose patterns, reducing the need for frequent in-person evaluations and enhancing patient care.

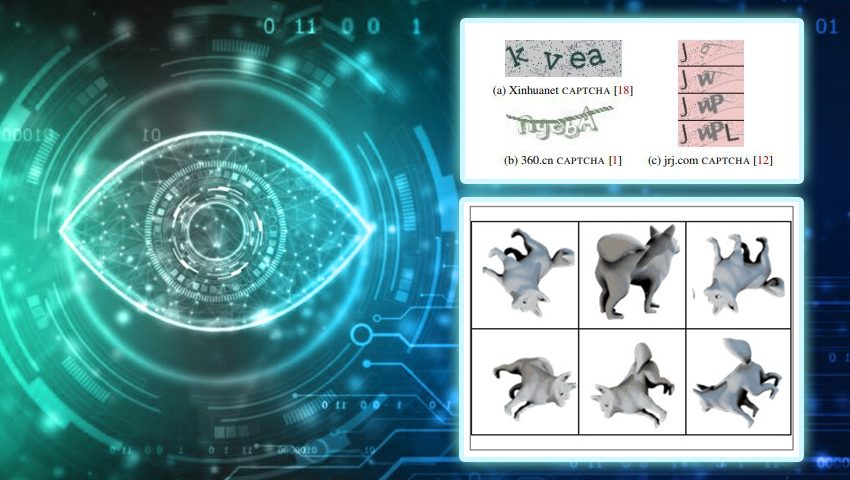

Recent research reveals that, despite the extensive use of CAPTCHAs as a defense against automation, bots now outperform humans in both speed and accuracy when solving them.

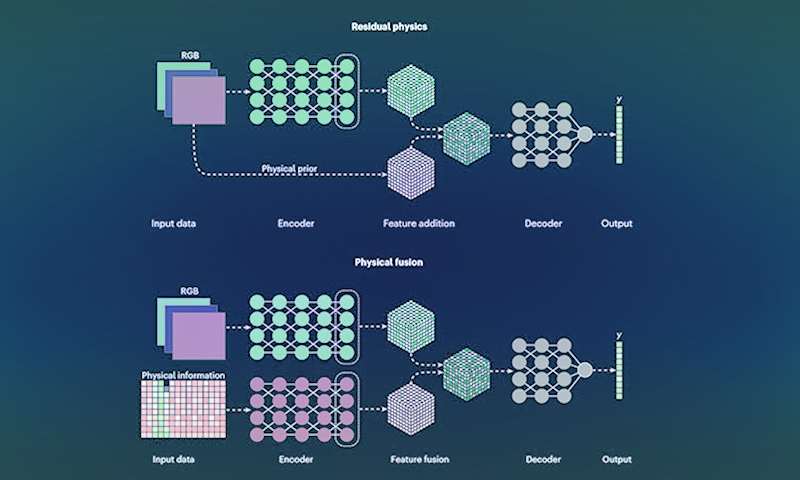

New research focuses on enhancing computer vision technologies by incorporating physics-based awareness into data-driven techniques. This hybrid AI-powered computer vision empowers machinery to intelligently perceive, interact, and respond to real-time environments.

The researchers used a diverse set of simple image generation programs to create a dataset for training a computer vision model. This approach can improve the performance of image classification models trained on synthetic data.

Using advances in artificial intelligence, engineers at the University of Colorado Boulder are working on a new type of walking cane for people who are blind or visually impaired.

Researchers have discovered new ways for retailers to use AI in conjunction with in-store cameras to better understand consumer behavior and adapt store layouts to maximize sales.

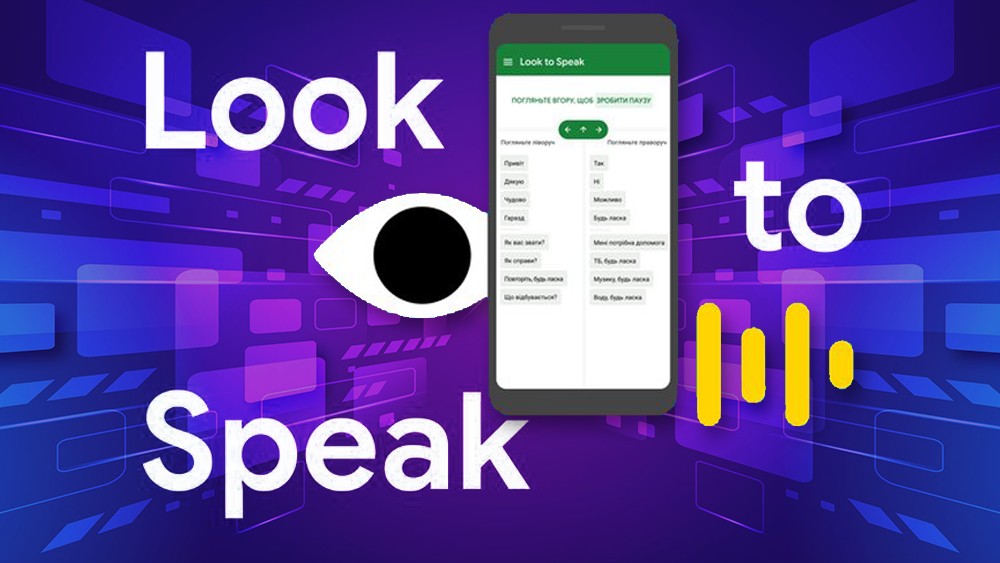

Look to Speak is designed to help those with motor function impairments and speech difficulties to communicate more easily. The app lets people use their eyes to select pre-written phrases and have them spoken out loud.

MIT researchers have developed a machine-learning technique that precisely collects and models the underlying acoustics of a location from just a limited number of sound recordings.