Yann LeCun is taking a bold step with his new startup AMI, working to create “world models” that understand the physical world, reason about causality, and develop true common sense. This approach directly challenges today’s dominant paradigm, suggesting that scaling LLMs alone may never achieve human-level intelligence.

Scientists have engineered a transneuron – an artificial neuron capable of mimicking the activity of multiple regions of the human brain. This advancement could accelerate the development of robots with human-like perception, adaptability, and learning.

Recent research has revealed that AI language models store memory and reasoning in entirely separate neural circuits, showing that machines “think” and “remember” in different ways. This discovery paves the way for AI systems that can forget sensitive data while preserving their intelligence.

Mamba-3 is a state-space model that redefines how AI thinks, learns, and understands language. By improving context tracking, information processing, and response generation, Mamba-3 sets a new standard for performance and inference speed, surpassing traditional transformer models.

FlyingToolbox is a drone system capable of docking and exchanging tools mid-air, even in turbulent airflow. This technology enables precise multi-stage operations, from maintenance and high-altitude construction to emergency response missions.

MIT scientists explored a critical flaw in AI language models called position bias, where models favor information at the beginning and end of text while ignoring the middle. Their research reveals this bias is rooted not only in the training data, but also in the architecture of the models themselves.

Microsoft’s Phi-4 family is a new generation of compact language models built for complex tasks like math, coding, and planning – often outperforming larger systems. Trained with advanced techniques and curated data, they offer strong reasoning while staying efficient for low-latency use.

Midjourney has launched V7, its most powerful AI image model yet, featuring smarter prompts and real-time personalization. With a redesigned architecture, V7 delivers improved object coherence and enhanced texture realism, and introduces Draft Mode for rapid, cost-efficient image iteration.

A new advanced neural system that mimics the brain’s learning processes promises to create faster, more efficient, and energy-saving AI. By leveraging Hebbian learning and spike-timing-dependent plasticity, this innovation could enhance AI performance while significantly reducing environmental and economic costs.
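For readers unfamiliar with the term, the plain Hebbian rule strengthens a connection in proportion to the joint activity of the neurons on either side of it. The sketch below shows only that textbook rule, not the system described above, and leaves out spike-timing-dependent plasticity entirely. The function name `hebbian_update` and the learning rate are illustrative assumptions.

```python
def hebbian_update(weights, pre, post, lr=0.01):
    """Plain Hebbian rule: each weight grows by lr * pre_i * post.

    weights: current synaptic weights, one per input neuron
    pre:     activity of each input (presynaptic) neuron
    post:    activity of the single output (postsynaptic) neuron
    lr:      learning rate (illustrative value, not from the article)
    """
    return [w + lr * x * post for w, x in zip(weights, pre)]


# Inputs that are active together with the output are strengthened more.
new_weights = hebbian_update([0.0, 0.0], pre=[1.0, 0.5], post=2.0, lr=0.1)
print(new_weights)
```

The more strongly an input fires together with the output, the larger its weight update.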

Edify 3D by NVIDIA creates high-quality 3D models in under 2 minutes using AI. By combining multi-view diffusion models and Transformers, it offers fast, accurate, and scalable 3D generation from text or images, making it a perfect solution for gaming, animation, and design industries.

Microsoft has launched the Phi-4 model with open weights under the MIT license, offering researchers and developers unprecedented flexibility. With 14 billion parameters, Phi-4 outperforms its counterparts in solving mathematical problems and multitasking while running efficiently on limited resources.

The 2024 Nobel Prizes in physics and chemistry have set a precedent for acknowledging AI’s contributions to science. While some may question the fit between AI and traditional disciplines, others see this as a necessary step toward recognizing the interdisciplinary nature of modern research.

Developed by researchers from Boston University, Neural Phase Retrieval leverages deep learning techniques to enhance the reconstruction of high-resolution images from low-resolution data. The new neural framework, NeuPh, already outperforms traditional methods.

MAIA can interpret neural networks by conducting experiments and refining its analysis, enhancing understanding of AI models. This agent can identify neuron activities, remove irrelevant features, and detect biases, making AI systems safer and more transparent.

Drone racing has been used to test neural networks for future space missions. This project aims to autonomously manage complex spacecraft maneuvers, optimizing onboard operations and enhancing mission efficiency and robustness.

AI has learned to decode dog barks, distinguishing playful from aggressive barks and identifying the dog’s age, sex, and breed. Originally trained on human speech, the AI models have achieved impressive accuracy, offering significant advancements in animal care and communication research.

Much like the invigorating passage of a strong cold front, major changes are afoot in the weather forecasting community. The end game? An entirely new way to forecast weather based on artificial intelligence that can run on a desktop computer.

Llama 3, Meta AI's latest advancement, boasts unmatched language understanding, enhancing its capacity for complex tasks. With an expanded vocabulary and advanced safety features, the model ensures improved performance and versatility.

OpenAI's latest creation, Sora, crafts captivating videos, offering unparalleled realism of visual compositions. Leveraging a fusion of language understanding and video generation, the model can interpret text prompts, accommodate various input modalities, and simulate dynamic camera motion.

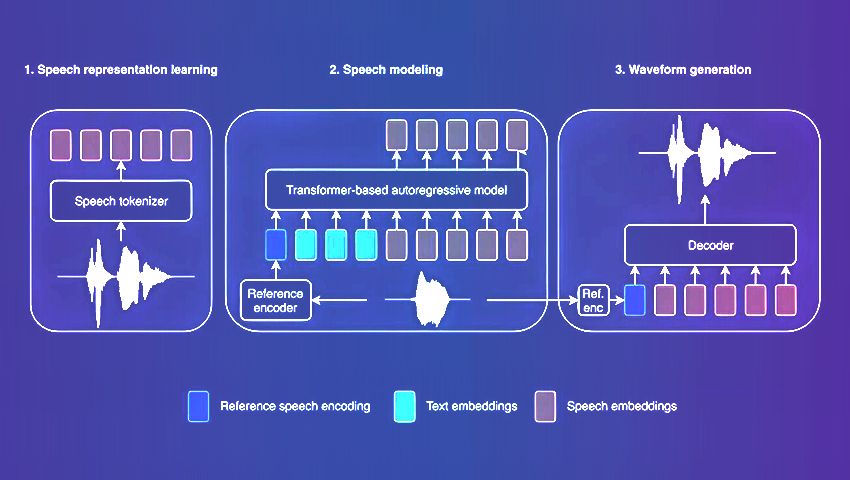

Amazon's latest TTS model with its innovative architecture sets a new benchmark for speech synthesis. BASE TTS not only achieves unparalleled speech naturalness but also demonstrates remarkable adaptability in handling diverse language attributes and nuances.

MPT-7B offers optimized architecture and performance enhancements, including compatibility with the HuggingFace ecosystem. The model was trained on 1 trillion tokens of text and code and sets a new standard for commercially usable LLMs.

Deep active learning blends conventional neural network training with strategic data sample selection. This innovative approach results in enhanced model performance, efficiency, and accuracy across a wide array of applications.
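As a rough illustration of the sample-selection step, the sketch below ranks unlabeled samples by predictive entropy and picks the most uncertain ones to send for labeling. Entropy-based uncertainty sampling is only one common selection strategy and is assumed here for illustration. The function names `entropy` and `select_most_uncertain` and the example predictions are hypothetical.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of one predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_most_uncertain(predictions, k):
    """Return indices of the k samples with the highest predictive entropy."""
    ranked = sorted(
        range(len(predictions)),
        key=lambda i: entropy(predictions[i]),
        reverse=True,
    )
    return ranked[:k]


# Hypothetical model outputs for four unlabeled samples (two classes each).
predictions = [[0.5, 0.5], [0.9, 0.1], [0.99, 0.01], [0.6, 0.4]]
to_label = select_most_uncertain(predictions, k=2)
print(to_label)
```

In a full loop, the selected samples would be labeled, added to the training set, and the network retrained before the next selection round.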

ALERTA-Net is a new deep neural network that combines social network data, macroeconomic indicators, and search engine data. The model predicts stock price movements and stock market volatility, going beyond traditional analysis methods.

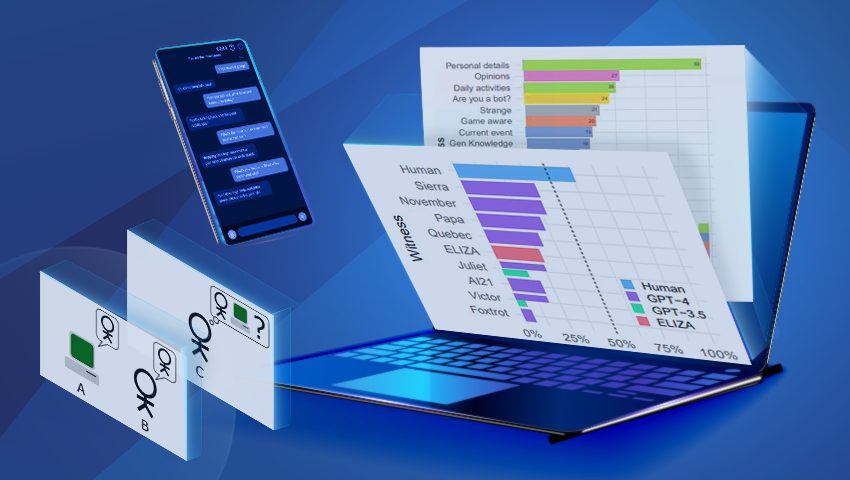

In 1950, British scientist Alan Turing proposed a test to determine whether machines can think. To date, no artificial intelligence has successfully passed it. Will ChatGPT be the first?

OpenAI had an impressive DevDay introducing new features. Let's dive into the world of innovation and explore new horizons in the landscape of artificial intelligence. Find out about all the new amazing possibilities in our article!

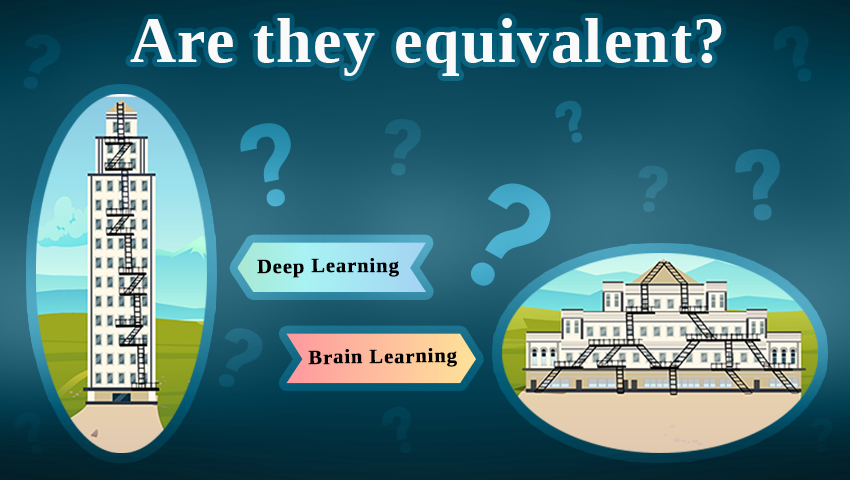

Continuing research on tree-like architectures, scientists from Bar-Ilan University examine the need for deep learning in AI and suggest alternative machine learning methods that may be more effective for complex classification tasks.

Researchers from the Institute for Assured Autonomy highlight new methods for ensuring safety in the growing world of unmanned aircraft systems using advanced artificial intelligence techniques and simulation environments.

The latest motion estimation method can extract long-term motion trajectories for every pixel in a frame, even in the case of fast movements and complex scenes. Learn more about the exciting technology and the future of motion analysis in this article about OmniMotion.

Inspired by ants, researchers from the Universities of Edinburgh and Sheffield are developing an artificial neural network to help robots recognize and remember routes in complex natural environments.

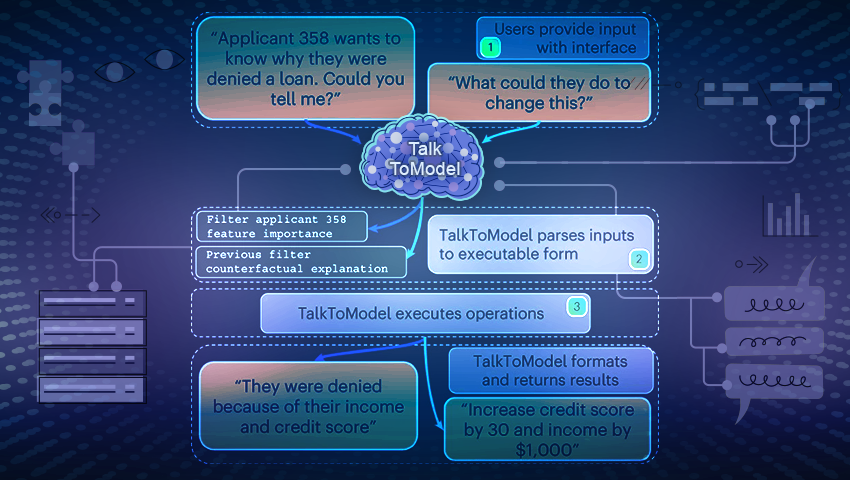

TalkToModel is an innovative system for enabling open conversations with ML models. This platform allows users to not only understand, but also communicate with ML models in natural language, as well as receive explanations of their predictions and operating processes.

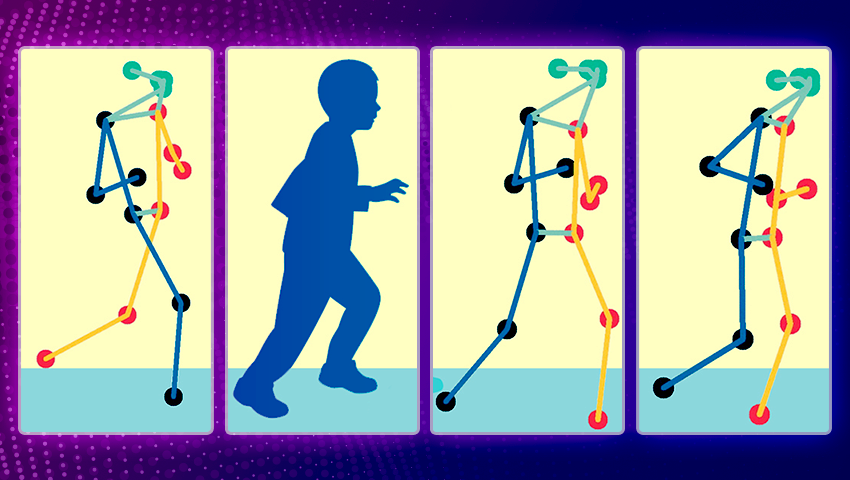

The new technique utilizes real-time video analysis to compute a clinical score of motor function based on specific pose patterns, reducing the need for frequent in-person evaluations and enhancing patient care.

Recent AI research centered around tree-based architectures opens new perspectives for training artificial neural networks.

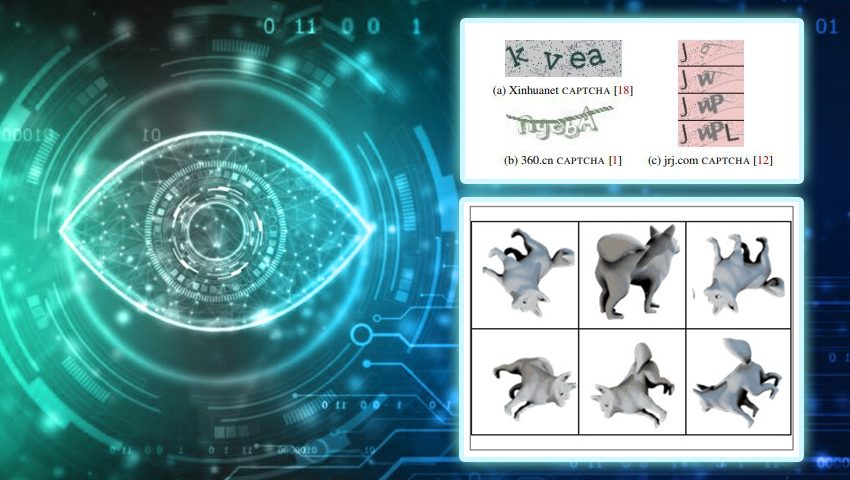

Recent research reveals that despite CAPTCHAs' extensive use as a defense against automation, nowadays bots outperform humans in both speed and accuracy when it comes to solving CAPTCHAs.

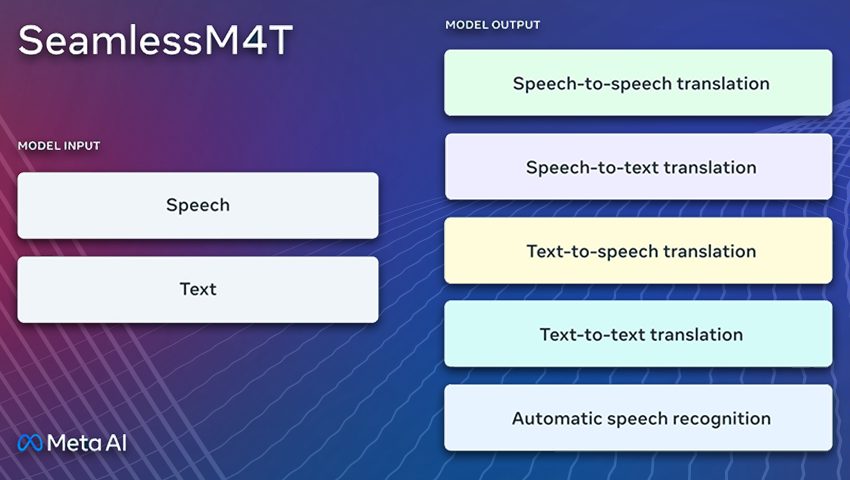

SeamlessM4T breaks down language barriers with its comprehensive translation and transcription capabilities. This AI model can easily convert speech or text, enabling real-time translation and fostering cross-cultural understanding.

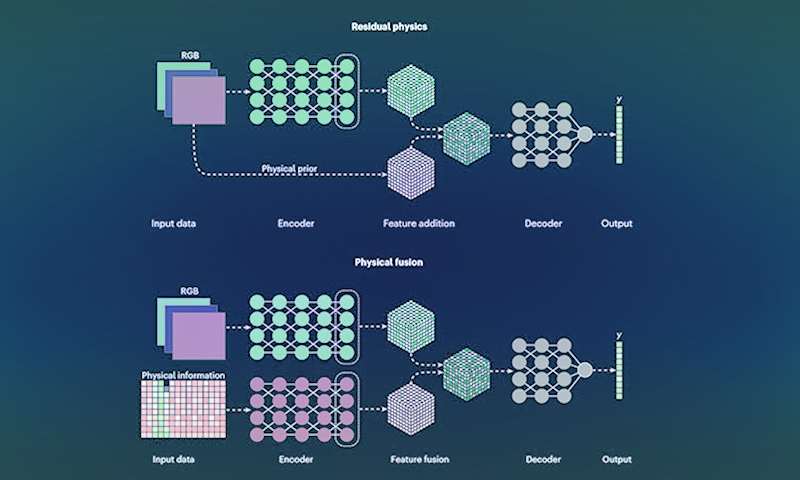

New research focuses on enhancing computer vision technologies by incorporating physics-based awareness into data-driven techniques. This hybrid AI-powered computer vision empowers machinery to intelligently perceive, interact, and respond to real-time environments.

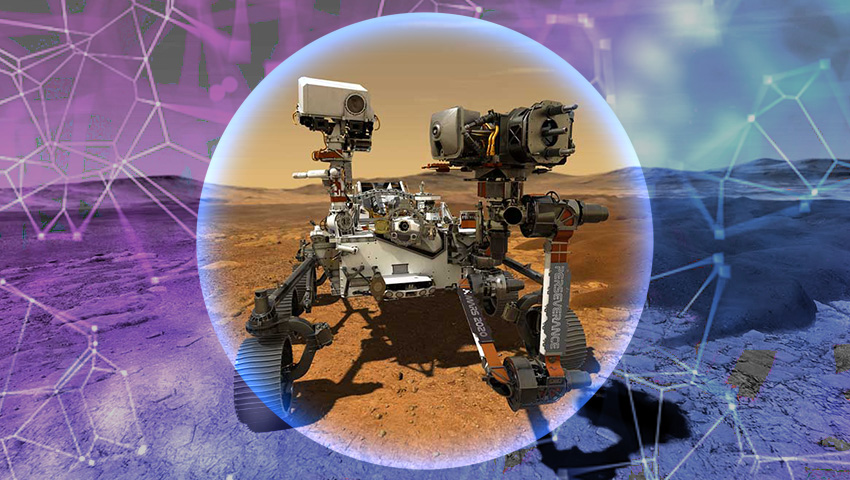

The European Space Agency is developing a sample retrieval system using neural networks, aiming to collect and transport samples from Mars. The challenging mission of returning samples gathered by the Perseverance rover is considered crucial for unlocking the mysteries of the Red Planet.

A new architecture aims to overcome the existing limitations of neural networks and symbolic AI. The model already demonstrates high effectiveness in solving logical problems and provides a promising framework for integrating different AI paradigms.

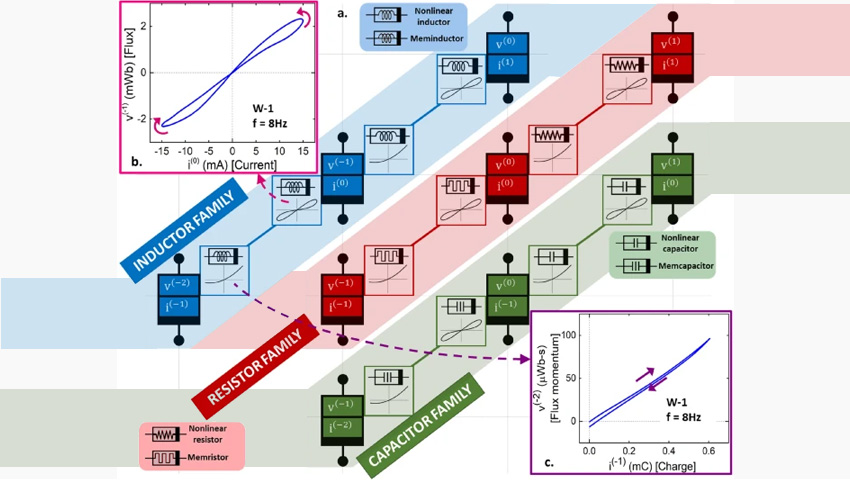

The meminductor joins the previously discovered memristors and memcapacitors in a line of circuit elements that can store and recall previous current or voltage values.

Solar cells based on hybrid organic-inorganic perovskites are a rapidly developing area of alternative energy. These materials gave rise to a new class of photovoltaic devices – perovskite solar cells.

The researchers used a diverse set of simple image generation programs to create a dataset for training a computer vision model. This approach can improve the performance of image classification models trained on synthetic data.

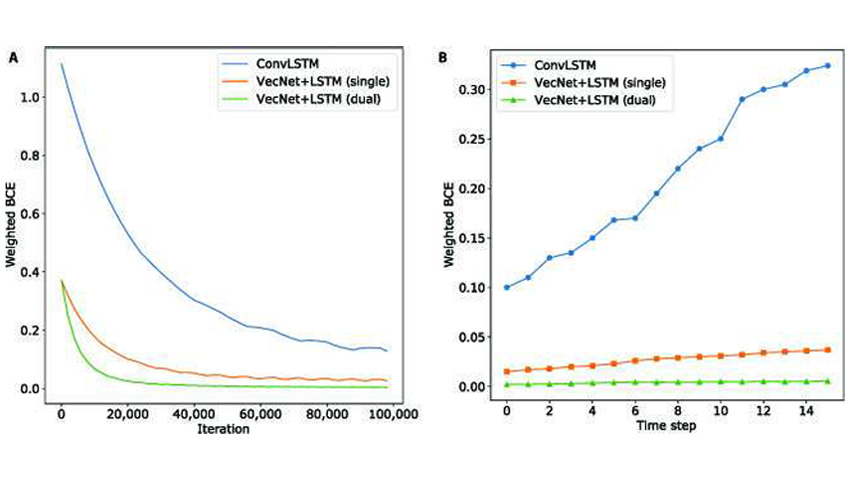

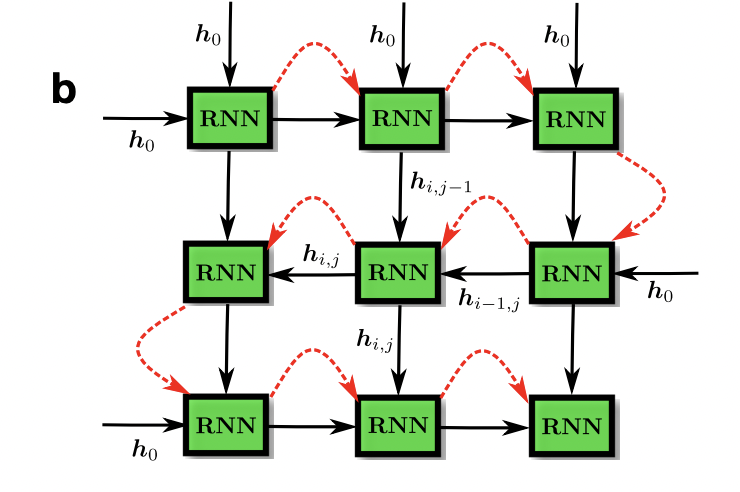

Researchers developed a new approach to motion modeling using relative position change. They evaluated the ability of deep neural network architectures to model motion using motion recognition and prediction tasks.

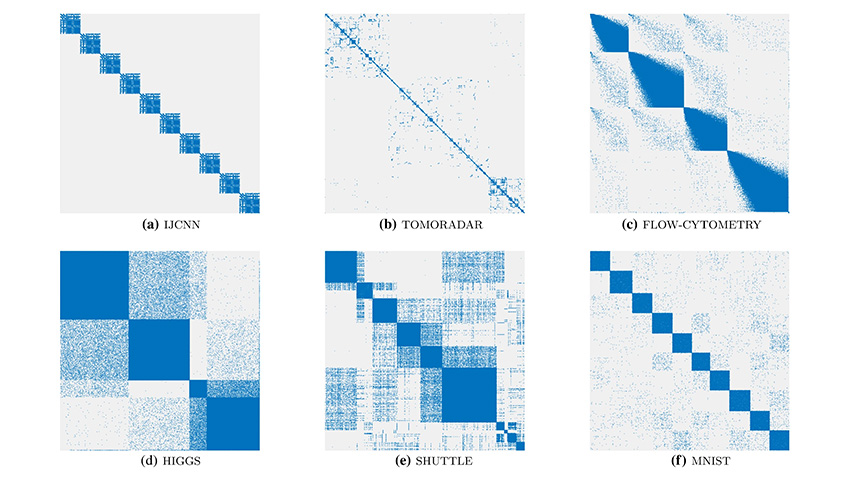

Researchers designed a new AI algorithm that visualizes data clusters and other macroscopic features so that they are as distinct, easy to observe, and human-understandable as possible.

Researchers have recently created a new neuromorphic computing system supporting deep belief neural networks (DBNs), a generative and graphical class of deep learning models.

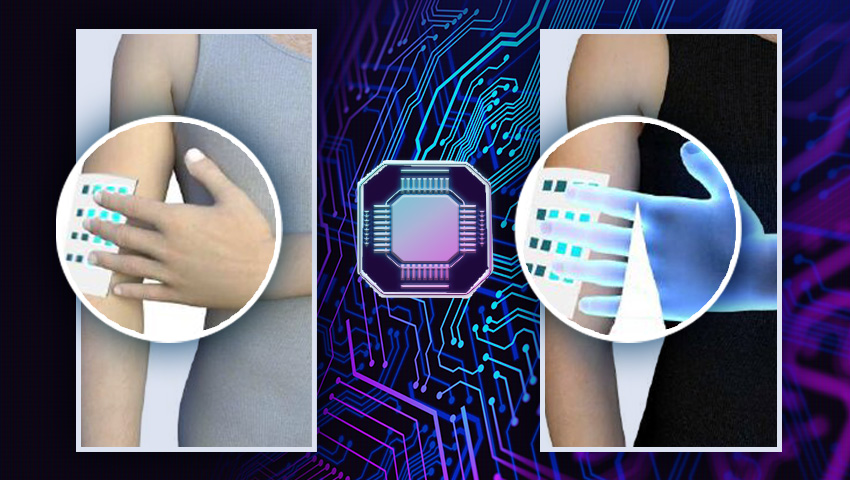

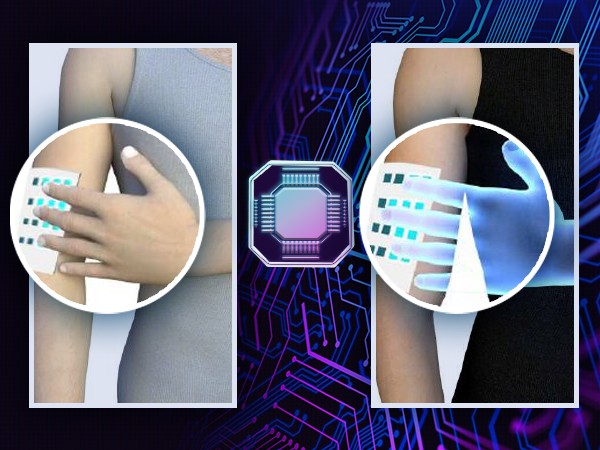

The wireless soft e-skin can both detect and transmit the sense of touch, and form a sensory network, which opens up great possibilities for improving interactive sensory communication.

Meta AI launched LLaMA, a collection of foundation language models that can compete with or even outperform the best existing models such as GPT-3, Chinchilla and PaLM.

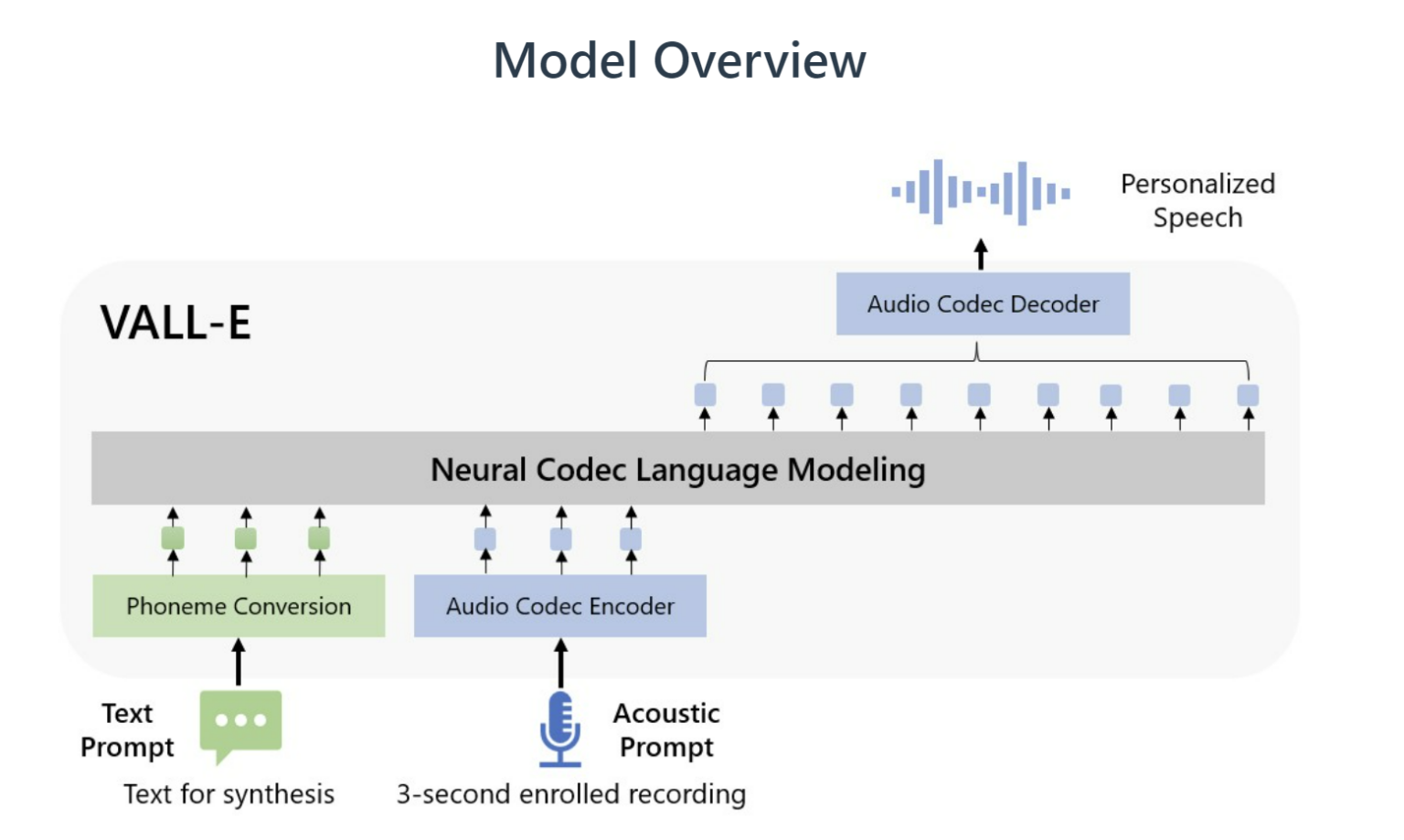

Text-to-speech models usually require significantly longer training samples, while VALL-E creates a much more natural-sounding synthetic voice from just a few seconds of audio.

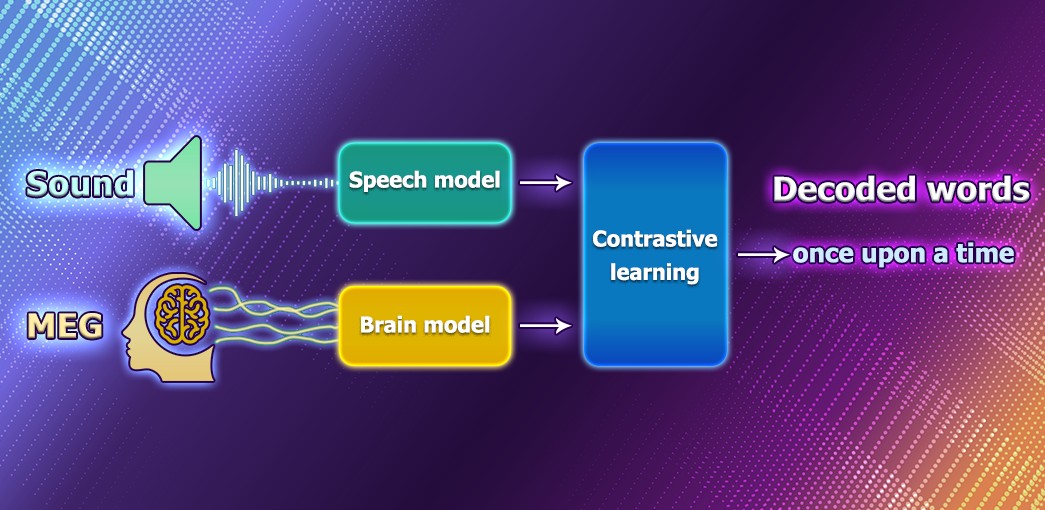

Decoding speech based on brain activity has been a long-established goal of neuroscientists and clinicians. Nowadays, Meta is working on an AI model that can decode speech from noninvasive recordings of brain activity to help people after traumatic brain injury.

Over the last decade, one of the biggest issues in the gaming industry has been the explosive growth of AAA video game production costs. Studios are always on the lookout for technologies that could help bring down the cost of game development. Recent advances in neural image generation models bring some hope that the realization of this dream may not be so far away.

Can computers think? Can AI models be conscious? These and similar questions often pop up in discussions of recent AI progress achieved by natural language models such as GPT-3, LaMDA, and other transformers. They are nonetheless still controversial and on the brink of a paradox, because there are usually many hidden assumptions and misconceptions about how the brain works and what thinking means. There is no way forward but to explicitly reveal these assumptions and then explore how human information processing could be replicated by machines.

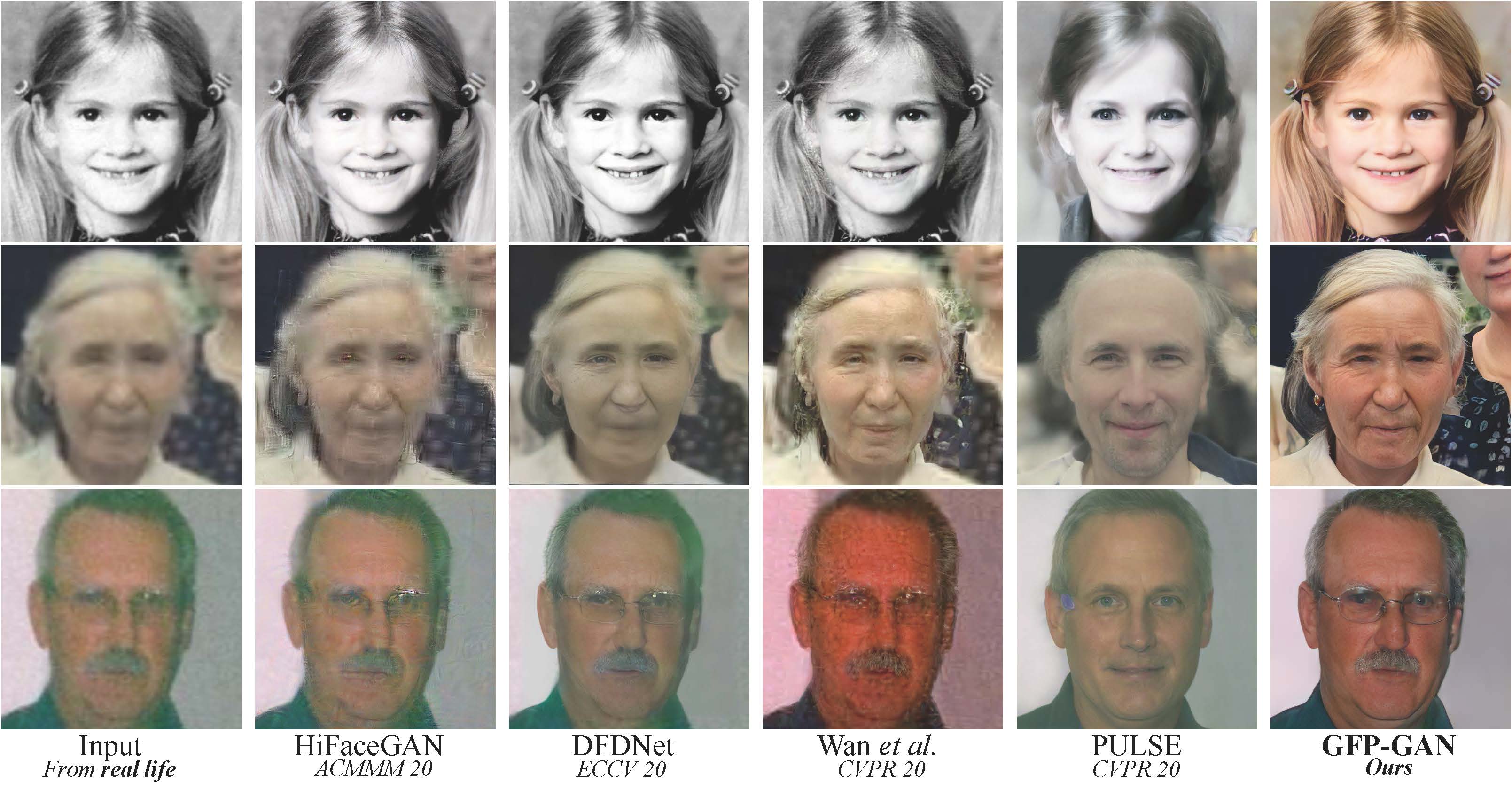

Filters that improve the quality of photos no longer surprise anyone. But the restoration of old portraits still leaves much to be desired. Older photos tend to be too blurry, so normal image sharpening methods won't work on them.

Facebook has released the NLLB project (No Language Left Behind). The main feature of this development is the coverage of more than two hundred languages, including rare languages of African and Australian peoples. In addition, Facebook has applied a new approach to the machine learning model, where the translation is carried out directly from one language to another, without intermediate translation into English.

Have you ever seen a photo of an avocado-shaped teapot or read a clever article that deviates slightly from the topic? If so, then you may have discovered the latest trend in artificial intelligence (AI). DALL-E, GPT, and PaLM machine learning systems are making a name for themselves as innovative tools that are able to accomplish creative tasks.

Optimization problems, which involve finding the best viable answer among a variety of options, arise frequently both in real-life situations and in most areas of scientific research. However, many complex problems cannot be solved with simple computational methods or would take an inordinate amount of time to resolve.

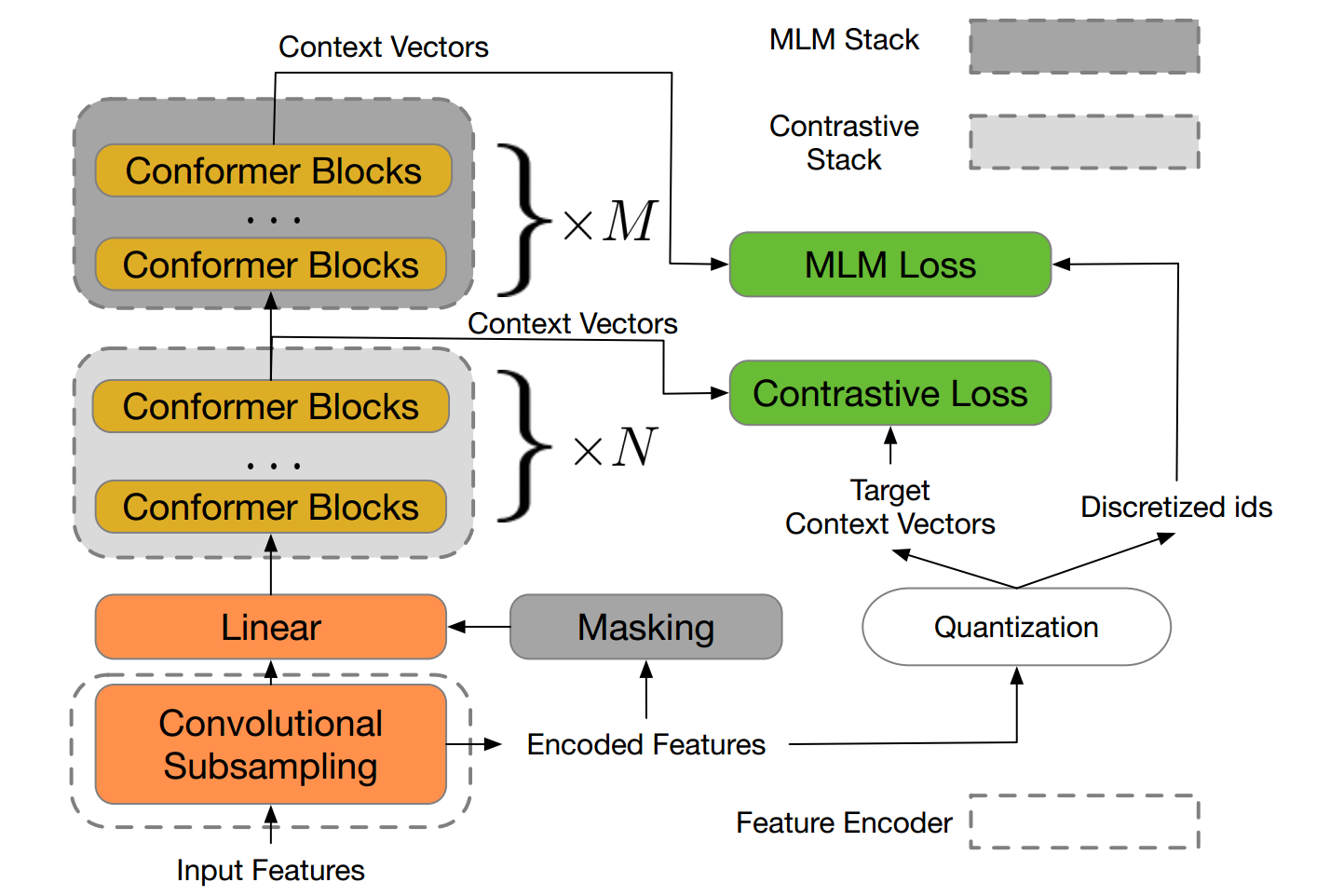

Motivated by the success of masked language modeling (MLM) in pre-training natural language processing models, the developers propose w2v-BERT, which explores MLM for self-supervised speech representation learning.

“We find that DALL·E also allows for control over the viewpoint of a scene and the 3D style in which a scene is rendered,” OpenAI explains. Generated images range from illustrations and objects to edited real-world pictures.